Tom's Guide Verdict

The Pixel 2 XL is the best pure Android phone you can buy, with a gorgeous 6-inch OLED screen, easy access to Google Assistant, sharp cameras and very long battery life.

Pros

- Squeezable Google Assistant
- Impressive Google Lens feature
- Sharp and colorful 6-inch OLED screen
- Great photo quality with Portrait mode
- Faster than Galaxy S8
- Long battery life

Cons

- Screen has some color issues
- No wireless charging or headphone jack
- Can't preview Portrait mode effect

Update: Google has discontinued the Google Pixel 2 and Pixel 2 XL, although you can buy them used. We recommend the newer Google Pixel 4a.

The Google Pixel 2 and Pixel 2 XL are anything but conformists. Unlike with other flagship phones, you won't find dual-lens cameras or edge-to-edge screens on these handsets.

Instead, Google focused on making these the smartest smartphones ever. And based on my testing, the new Pixels aptly fit that description, with a versatile Google Assistant you can now summon with a squeeze and a new object-recognition feature in the camera app that's truly impressive.

Design

"Send it back — it still needs more bezel!" That’s how I imagine the insane conversation about the regular Pixel 2 took place at Google's headquarters. At a time when other phones are stretching their screens to reach practically from one corner to the other, the front of the Pixel 2 is a throwback in the worst possible sense of the word.

I measured the top and bottom bezels at over 0.6 inches, compared to 0.2 inches for the Galaxy S8. Seeing that gorgeous 5-inch OLED screen sandwiched between such large blocks of black glass is downright offensive.

It's not all bad news, though, as the two front-firing stereo speakers get nice and loud, offering richer audio than the speaker on the bottom edge of the Galaxy S8. Plus, if you're adamant that a phone be easy to use with one hand, you'll prefer the Pixel 2's more-compact dimensions of 5.7 x 2.7 x 0.3 inches, versus 6.2 x 3 x 0.3 inches for the Pixel 2 XL. The Pixel 2 weighs a fairly light 5 ounces, compared to 6.2 ounces for the Pixel 2 XL.

The Pixel 2 XL also has a much more modern vibe, as its 6-inch display covers much more of the phone's face. The top and bottom bezels measure a more eye-pleasing 0.4 inches and 0.35 inches thick, respectively. This handset also sports stereo speakers above and below the screen.

Around back, you'll find Google’s trademark two-tone design on both phones, with a stripe of black glass across the top around the camera and flash, and sturdy aluminum covering the bottom three-quarters of the phones. The fingerprint sensor is positioned right in the center, beneath the stripe, making it easy to reach.

Google offers a range of colors for the Pixel 2, including Just Black, Clearly White, Black and White (which reminds us of the cookie), and Kinda Blue. The Pixel 2 XL comes in Just Black and Black and White.

Unfortunately, neither the Pixel 2 nor the Pixel 2 XL features a headphone jack. Google includes a USB-C dongle in the box, but you're probably better off just going wireless.

Google Pixel 2 and Pixel 2 XL: Specs Compared

| | Pixel 2 | Pixel 2 XL |
| --- | --- | --- |
| Price | $649 | $849 |
| OS | Android 8.0 | Android 8.0 |
| Display | 5 inches (1920 x 1080 pixels) | 6 inches (2880 x 1440 pixels) |
| CPU | Snapdragon 835 | Snapdragon 835 |
| RAM | 4GB | 4GB |
| Storage | 64GB, 128GB | 64GB, 128GB |
| Rear Camera | 12 MP (f/1.8) | 12 MP (f/1.8) |
| Front Camera | 8 MP (f/2.4) | 8 MP (f/2.4) |
| Battery | 2700 mAh | 3520 mAh |
| Headphone Jack | No | No |
| Charging | USB-C | USB-C |
| Size | 5.7 x 2.7 x 0.3 inches | 6.2 x 3 x 0.3 inches |
| Weight | 5.01 ounces | 6.2 ounces |

Durability: Not the toughest phone

We tested the Google Pixel 2 XL's toughness with a series of drops: first on its face onto wood from 4 feet and 6 feet, then on its edge and face onto concrete from 4 feet, and finally on its edge and face onto concrete from 6 feet.

The Pixel 2 XL survived drops from 4 and 6 feet onto wood with no issues. However, when this phone landed on its face from 4 feet onto concrete, the screen cracked, obscuring the front camera.

An edge drop from 6 feet didn't do too much more damage, but a face drop from that height caused half the screen to go black. As a result, the Pixel 2 XL earned a fairly weak toughness score of 4.3 out of 10.

To see the results of other smartphones, as well as our complete scoring methodology, check out our smartphone drop tests.

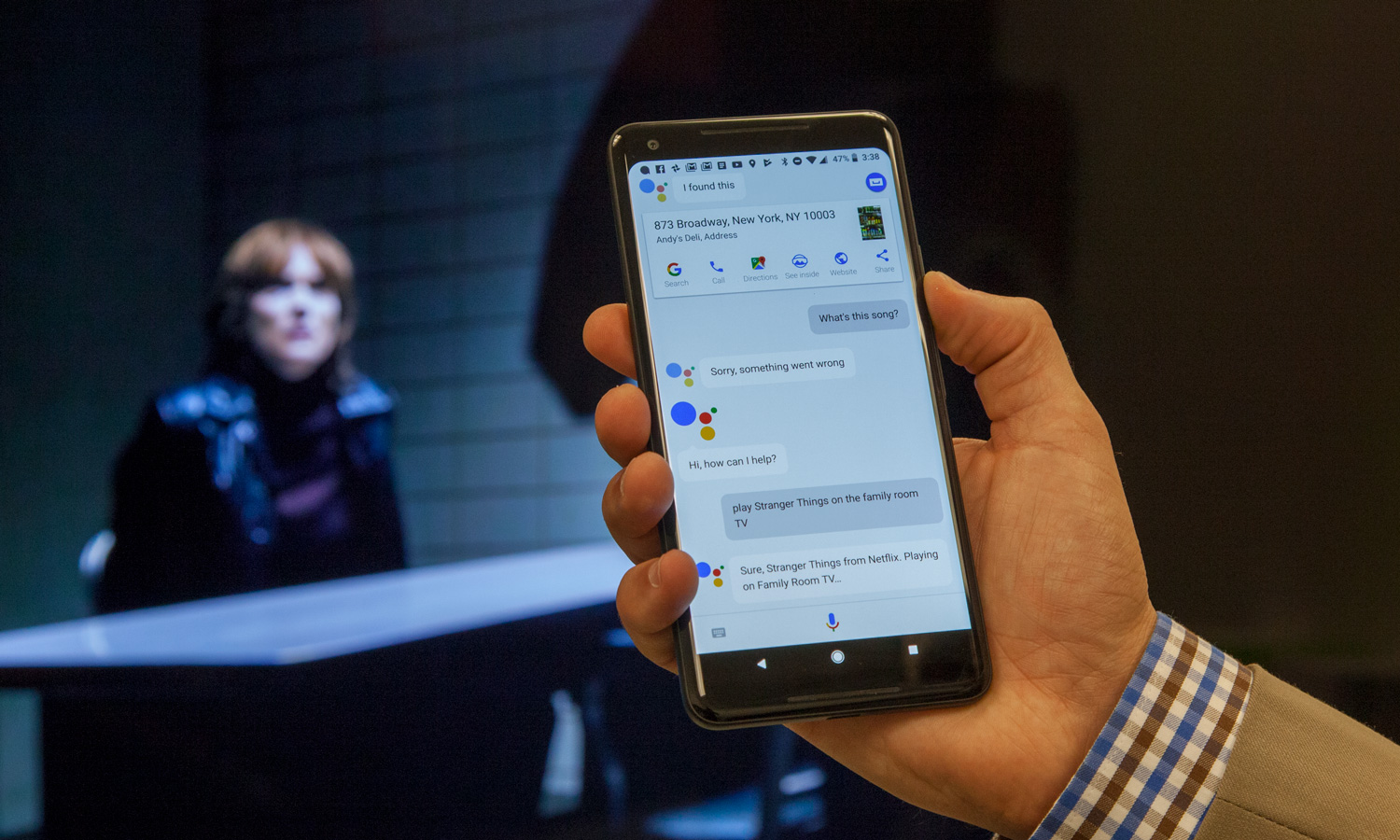

Google Assistant: Squeezable and smart

When I first heard about Active Edge on the Pixel 2 and Pixel 2 XL, I thought it was a gimmick. But after using it for just a few minutes, I changed my mind. With a firm, quick squeeze toward the bottom of the phone, you can activate Google Assistant, which is faster, more accurate and more versatile than Siri.

For instance, I said, "Play BoJack Horseman on my living room TV," and Google Assistant on the Pixel 2 launched the Netflix app right to this deranged cartoon on my big screen. Of course, it helps that I had a Chromecast plugged in to the set, but that's exactly Google's plan: to show you how its hardware and software work well together across its growing ecosystem of products. The Google-owned Nest thermostat also works with Google Assistant, so you could say, "Set the temperature to 68 degrees."

With the Assistant just a squeeze away, I had no problem finding out how the Yankees were doing against the Astros (not great), telling the Pixel 2 to snap a selfie, summoning up all the photos I took that day, or firing up my Liked from Radio Playlist on Spotify. Sure, I could do all of this by saying, "OK, Google," first, but I found it more convenient and less awkward to use Active Edge.

You can also adjust the sensitivity level of the squeeze required and allow the Active Edge feature to work when the screen is off. I rarely activated the Assistant by accident.

Displays: An Update Makes the Pixel 2 XL Better

While Apple charges $999 for the privilege of owning a colorful OLED display and enjoying its wide viewing angles and perfect blacks on the iPhone X, and while the OLED Galaxy S8 starts at $750, you can get this screen tech on the Pixel 2 for $649.

The Pixel 2's 5-inch screen isn't the sharpest, at 1920 x 1080 pixels, and it's much smaller than the 5.8-inch Galaxy S8, but it produced an excellent 148 percent of the sRGB color gamut. When I watched the Star Wars: The Last Jedi trailer on the Pixel 2's display, the golden orange around the insanely cute porg's eyes popped against its white fur, and the reflection of two clashing weapons in Captain Phasma's gleaming, silver helmet looked gorgeous.

The Pixel 2 XL's 6-inch screen sports a sharper, 2880 x 1440-pixel resolution. This panel registered a slightly lower 130 percent of the color gamut, but its colors are just about as accurate on paper. The Pixel 2 XL's display scored 0.26 on the Delta-E error test (0 is perfect), and the Pixel 2 hit 0.29.
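The sharpness gap between the two panels can be put in numbers. As a rough sketch, pixel density is the diagonal resolution in pixels divided by the diagonal size in inches. The snippet below assumes the nominal 5-inch and 6-inch diagonals from the specs table, so the results are approximate, and the function name `pixels_per_inch` is purely illustrative.

```python
import math


def pixels_per_inch(width_px: int, height_px: int, diagonal_in: float) -> float:
    """Pixel density: diagonal resolution (pixels) over diagonal size (inches)."""
    return math.hypot(width_px, height_px) / diagonal_in


# Resolutions and nominal diagonals taken from the specs table (assumed exact).
pixel_2 = pixels_per_inch(1920, 1080, 5.0)
pixel_2_xl = pixels_per_inch(2880, 1440, 6.0)

print(f"Pixel 2:    {pixel_2:.0f} ppi")
print(f"Pixel 2 XL: {pixel_2_xl:.0f} ppi")
```

Under those assumptions, the Pixel 2 works out to roughly 441 pixels per inch and the Pixel 2 XL to roughly 537.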

The Pixel 2 XL's display had some issues at launch. We found a blue tint on the panel at off-angles and some graininess when viewing solid-colored backgrounds. The Pixel 2 XL also reportedly suffered from burn-in, with the icons at the bottom of the display staying on the screen even when they weren't supposed to be present.

Fortunately, a new update from Google provided more saturated hues via a new mode, and it should also mitigate burn-in.

The Pixel 2 XL is the phone you want to have outdoors, as it registered a higher 438 nits on our light meter, compared to just 346 nits on the Pixel 2. The Galaxy S8 notched 437 nits. The smartphone category average is 433.

Similar to the Galaxy S8 and Note 8, the Pixel 2 and Pixel 2 XL offer always-on displays, which show you the time and notifications at a glance. But the new Pixels go even further, with a Now Playing feature that can recognize music playing in the background and automatically display the title and artist.

From there, you can double tap the track to learn more about the song or to play it. It sometimes took 30 seconds or more for Now Playing to kick in, but it's not designed to replace Shazam; it's more about serendipitous discovery.

Google Lens: Amazing potential

While the cameras on the Pixel 2 and Pixel 2 XL take great-looking images, that's not what really makes the photo experience stand out. That would be Google Lens, an object-detection feature in the Camera and Google Photos apps.

With Google Lens, the Pixel 2 can recognize landmarks with ease, such as the Flatiron Building in New York City. I just snapped a photo, then tapped on the lens icon on the bottom of the screen; Google Assistant then displayed an information card on the building with info from Wikipedia.

I had mixed results when taking pictures of businesses. After I took a shot of the neon sign for Gleason's Tavern, Google Assistant gave the star rating and a brief description of the establishment, along with its hours of operation. But Google Lens didn't recognize the sign for Dough, which is a premium donut shop.

Google Lens worked pretty well on a business card; the phone easily picked up the email address of the contact and his phone number. Last but not least, I tried photographing a Spider-Man Homecoming movie poster, and the phone returned a synopsis and rating from Rotten Tomatoes.

Google Lens will be coming to other Android phones later, but for now at least, it's a Pixel exclusive.

There's one other camera feature that's coming that I did not have a chance to test, which is AR stickers. With this perk, you'll be able to insert characters from franchises like Stranger Things and Star Wars right into your images.

Cameras and Image Quality: Great, but no iPhone 8 killer

Considering the Pixel 2 and Pixel 2 XL sport single rear 12-megapixel cameras, you might think that they're snapping pics with one arm tied behind their backs compared to dual-lens camera phones. Nope. These phones are smart enough to offer a Portrait mode (bokeh effect) through software, and it works even on the phones' front 8-MP camera.

In one portrait I took of my colleague Cortney with the Pixel 2, the New York City skyline blurred into the background, but not too much — just enough for her red-orange hair, blue shirt and green jacket to pop. As I zoomed in, though, I noticed that one part of a building behind her didn't have the blur effect. It's too bad that you can't preview Portrait Mode results in real time, as you can with the iPhone 8 and Galaxy Note 8; the bokeh effect is applied after you take the shot.

In terms of overall image quality, the Pixel 2 and Pixel 2 XL give the best camera phone, the iPhone 8 Plus, a run for its money. But they don't surpass it.

In this shot of the Flatiron Building, the Pixel 2's HDR+ setting brought out more details in the shadows than the iPhone 8 Plus did. The iPhone's image has a bit better contrast, but overall, the Pixel 2's image is the one I would share on Facebook or Instagram.

The iPhone 8 Plus pulled ahead with this shot of an outdoor art piece. The iPhone 8 Plus delivered a brighter image with more vibrant oranges, blues, purples and pinks.

Indoors, at the Lego store, it was a toss-up between the Pixel 2 and iPhone 8 Plus. The image of a Lego man in a top hat captured by Google's phone delivered more-realistic hues, while Apple's camera delivered a brighter image.

The iPhone 8 Plus won on this close-up of a lantana flower. The image turned out brighter than the Pixel 2 XL's shot, making it easier to tell that the sun was shining, although I could make out a great amount of detail in the leaves on both photos.

If you're an Android fan and you've been jealous of Apple's Live Photos, you'll get a kick out of the Motion photos feature on the Pixel 2 and Pixel 2 XL. It captures a few seconds of video with each image and plays it back with a loop-like effect, as I noticed in one shot I took that had moving cars in the foreground.

Performance: Faster real-world speed

With the same Snapdragon 835 processor as the Galaxy S8 and the same 4GB of RAM as the Galaxy S8 and S8+, the Pixel 2 and Pixel 2 XL might be expected to offer the same performance. Actually, they're faster in real-world tasks.

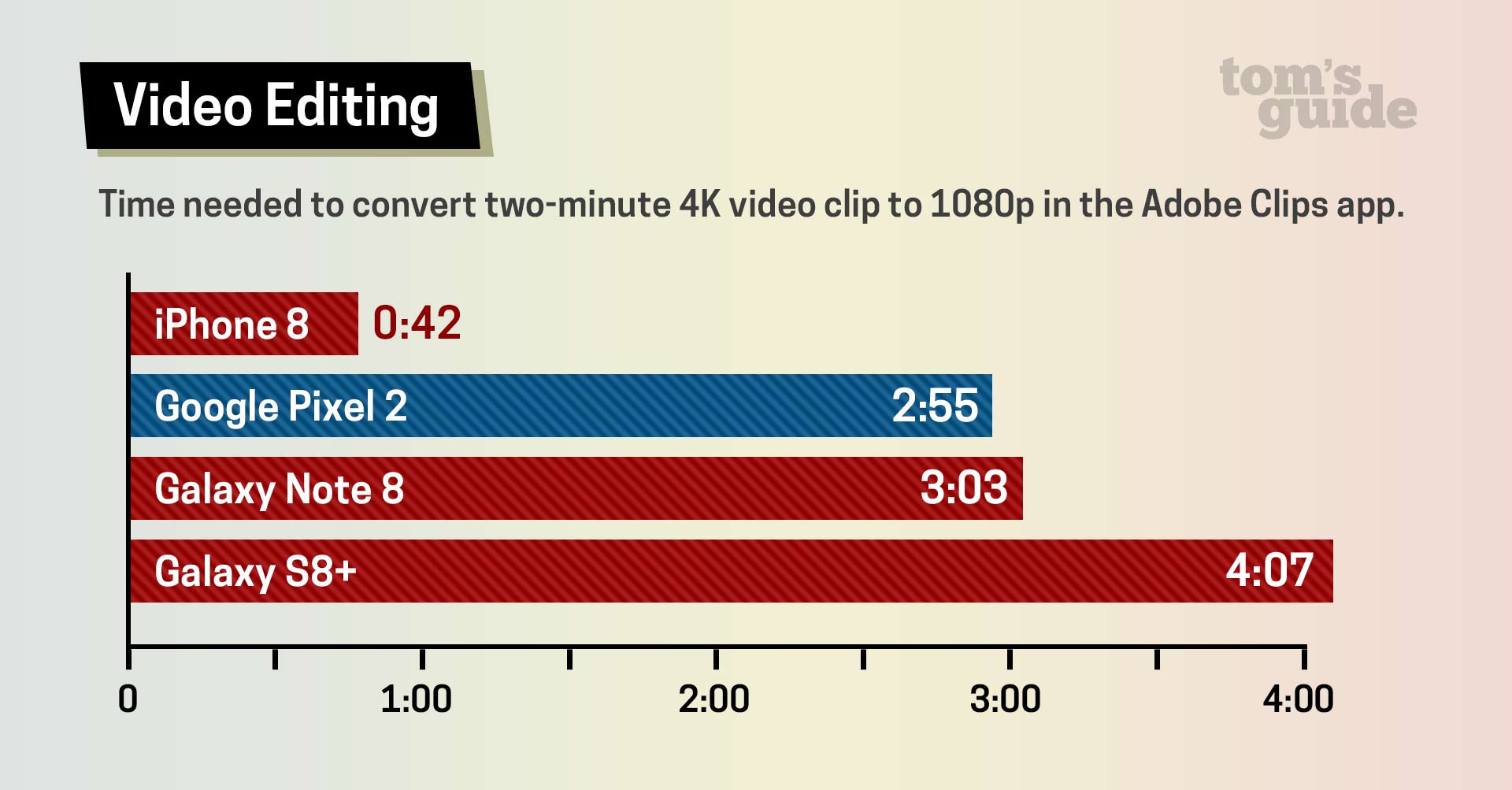

On our video-editing test, in which we transcode a 2-minute 4K video clip to 1080p in the Adobe Premiere Clip app, the Pixel 2 took 2 minutes and 55 seconds, compared to 4:07 for the Galaxy S8. The Galaxy Note 8 took 3:03, and that phone packs 6GB of RAM. The Pixel 2 still wasn't nearly as fast as the iPhone 8 and its A11 Bionic chip, which needed 42 seconds.
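To make those gaps easier to compare, here is a small sketch that converts the quoted transcode times to seconds and expresses each as a multiple of the Pixel 2's time. The helper name `to_seconds` is made up for illustration, and the only inputs are the four times given above.

```python
def to_seconds(clock: str) -> int:
    """Convert an 'm:ss' time string to total seconds."""
    minutes, seconds = clock.split(":")
    return int(minutes) * 60 + int(seconds)


# Transcode times quoted in the review (2-minute 4K clip to 1080p).
times = {
    "Pixel 2": to_seconds("2:55"),
    "Galaxy S8": to_seconds("4:07"),
    "Galaxy Note 8": to_seconds("3:03"),
    "iPhone 8": to_seconds("0:42"),
}

baseline = times["Pixel 2"]
for phone, secs in times.items():
    # A ratio above 1 means the phone took longer than the Pixel 2.
    print(f"{phone}: {secs} s ({secs / baseline:.2f}x the Pixel 2's time)")
```

By this measure the Galaxy S8 needed about 1.4 times as long as the Pixel 2, while the iPhone 8 finished in roughly a quarter of the Pixel 2's time.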

Next, we opened up a 5.1 MB PDF file. The Pixel 2 took just 2 seconds, compared to 6.41 seconds for the Galaxy Note 8. The iPhone 8 took 0.74 seconds.

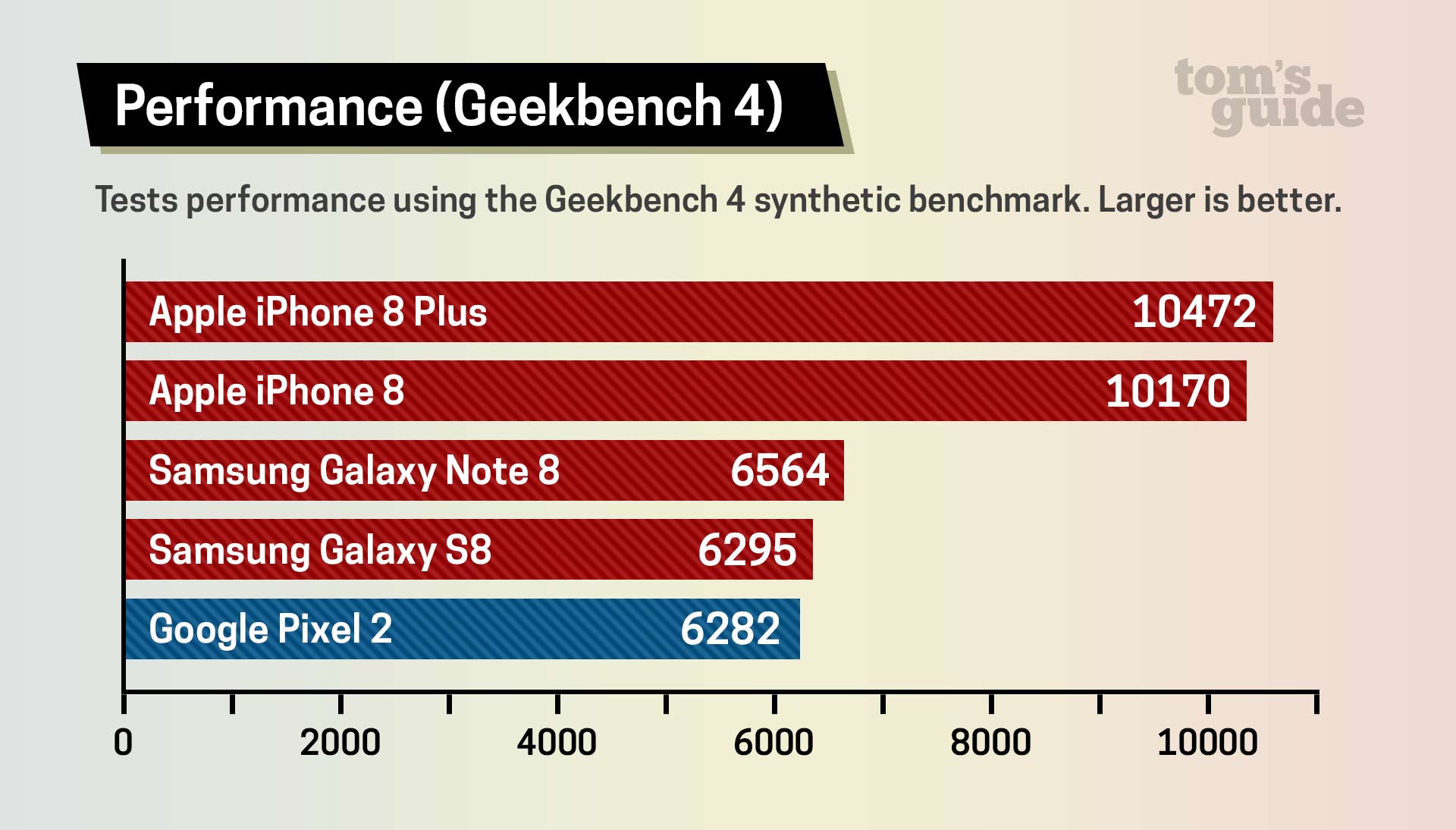

The Pixel 2 didn't fare as well on synthetic benchmarks. On Geekbench 4, which measures overall performance, the Pixel 2 notched 6,282. That's a little bit behind the Galaxy S8's score (6,295) and well short of the Galaxy Note 8's showing (6,564). The iPhone 8 was in another league, at 10,170.

When performing everyday tasks, like running two apps side by side in split-screen mode, the Pixel 2 felt snappy and responsive. I played the demanding Warhammer 40,000: Freeblade game — busting up tanks with my mech — without any lag.

Battery Life: Excellent

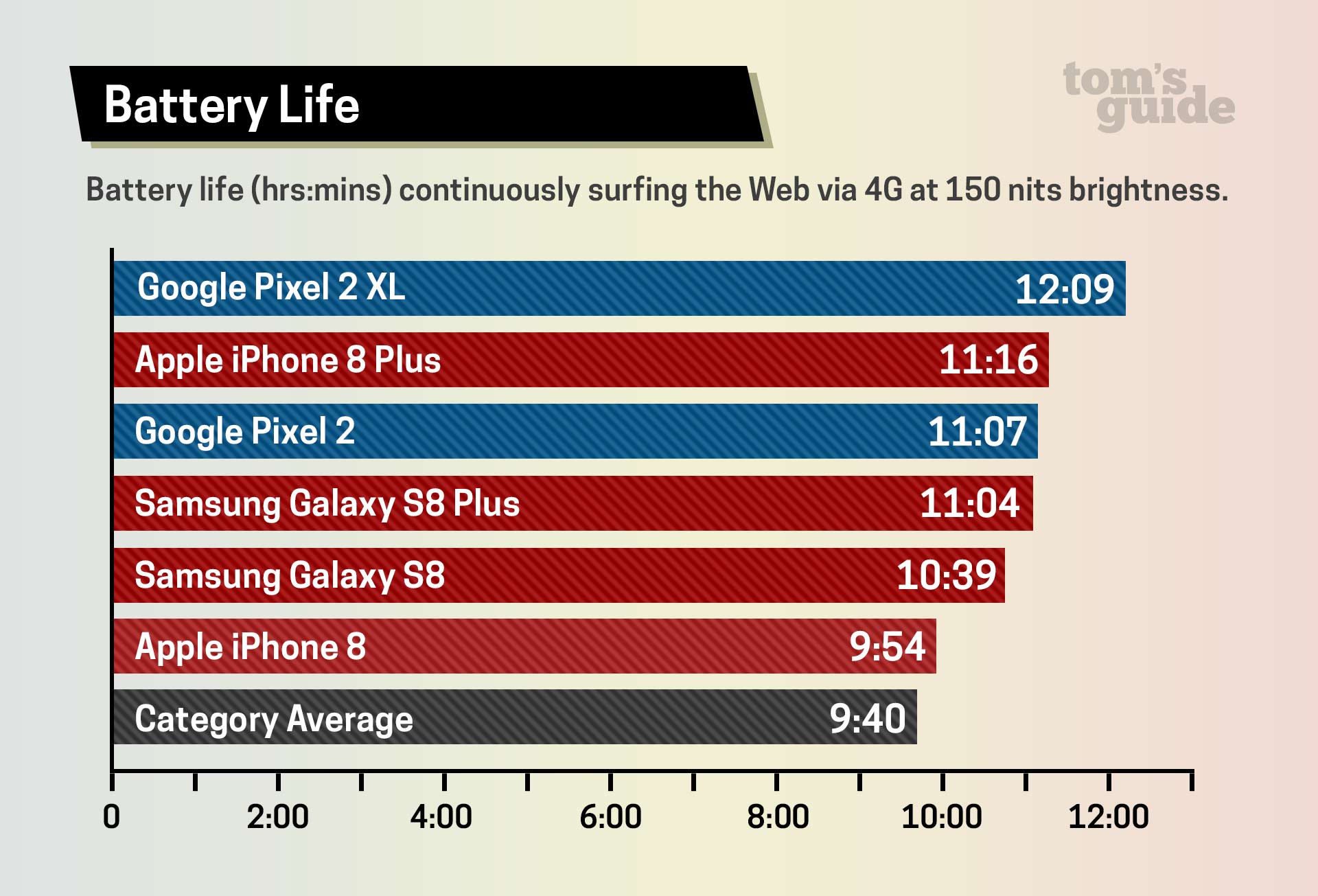

Both the Pixel 2 and Pixel 2 XL beat their Apple and Samsung foes in endurance. On the Tom's Guide Battery Test, which involves continuous web surfing over 4G LTE (in this case on Verizon), the Pixel 2 lasted a strong 11 hours and 7 minutes. The Pixel 2 XL lasted about an hour longer, at 12:09.

These runtimes far surpass the 9:40 smartphone average, and they beat the respective times of the iPhone 8 (9:54) and iPhone 8 Plus (11:16) and the Galaxy S8 (10:39) and Galaxy S8+ (11:04).

The Pixel 2 XL easily makes our list of the longest-lasting phones. Plus, you can expect even longer battery life should you choose T-Mobile for your Pixel 2 or Pixel 2 XL, as we've found that phones tend to last longer on that network.

One bummer, though, is that neither the Pixel 2 nor the Pixel 2 XL supports wireless charging. You'll need to plug in a USB-C cable if you want juice. Google claims that you'll get up to 7 hours of power from just 15 minutes of charging. In our tests, we saw the Pixel 2 get up to 19 percent capacity in just 10 minutes, reaching 39 percent by the 30-minute mark.

Android Oreo: Minor (but welcome) upgrades

It's no surprise that Google's own phones are the first to ship with the new Android Oreo software installed. The updated OS doesn't offer dramatic improvements, but there are some worthwhile new features.

The new picture-in-picture mode isn't one of them. Although you can already run two apps side by side, picture-in-picture lets you exit an app and still see a floating window of one of the supported apps, such as YouTube and Google Maps. In the case of YouTube, you need to be signed up for YouTube Red ($9.99 per month) for this feature to work. I was able to easily drag a small playback window around the screen as I worked in another app, but I just didn't see it as very practical.

I found the app shortcuts feature, which is similar to the 3D Touch feature on iOS, more useful. You long press an app icon to activate various shortcuts without having to open the app; for instance, you can long press the Phone app to quickly dial one of your top three contacts, or long press the Camera app to Take a video or Take a selfie.

Similarly helpful are the notification dots. When you long press an icon that has a dot on the upper right corner, you'll see the waiting notification without having to open the app. This came in handy for the Gmail app.

With Google's new ARCore initiative, compelling augmented reality apps will be making their way to certain Android Oreo phones, such as the Galaxy S8. The apps will be hitting the Google Play store this winter.

Oreo has lots of other features, including ones under the hood to optimize performance and battery life, as well as to enable stronger security. See our full Android Oreo review along with a look at the top 21 Oreo features.

Accessories: Not your average add-ons

With a lot of phones, the accessories are an afterthought, but not with the Pixel 2 and Pixel 2 XL. The new Pixel Buds ($159) are wireless headphones that let you carry on a conversation with someone else in their native language. Leveraging Google Translate and Google Assistant, these headphones help you say phrases in any one of 40 languages, and your Pixel 2 will utter that phrase aloud so that the person you're speaking with can understand you.

I did not have an opportunity to test out the Pixel Buds yet, but I look forward to it.

Another new accessory on the way is Google Clips, a pricier $249 camera that you can attach to pretty much anything to record video of loved ones, allowing you to keep your hands free. Because it uses machine learning, the Clips camera not only knows when there's a person in the frame to take a shot, but it can also learn who your friends and family members are over time.

If you're looking to get into virtual reality, there's also a new version of the Google Daydream View headset ($99), which offers improved comfort and optics with a wider sweet spot for content and increased resolution.

Bottom Line

With the Pixel 2 and Pixel 2 XL, it's clear that Google has made AI its weapon of choice in the smartphone war. And given the company's prowess in machine learning, this is a smart move that has paid off. I found myself using Google Assistant a lot more in just a few days than I do Siri in a whole month with the iPhone, and not just because I was testing the new Pixels. Google Assistant understood me even when I didn't utter the exact right words, and I loved being able to summon it with just a squeeze.

If you own other Google gear, such as the Google Home Mini (which you can get for free right now when you order the Pixel 2) or Chromecast, the Assistant's powers multiply. In a way, Google is starting to pull away from other Android phone makers, because the company is creating its own Apple-like walled garden. It's a more purposeful fragmentation.

The Pixel 2 and Pixel 2 XL also benefit from faster performance than the Galaxy S8, stellar front and back cameras and long battery life. Overall, I prefer the sleeker designs and curved edge-to-edge displays on the Galaxy S8 and Galaxy S8+, and the iPhone 8 Plus remains the king of camera phones. But if you want a pure Android experience, you'll love the $849 Pixel 2 XL. The Pixel 2's bezels are just way too big and its screen too small for me to take this phone seriously, even for its relatively low $649.

Credit: Shaun Lucas/Tom's Guide

Mark Spoonauer is the global editor in chief of Tom's Guide and has covered technology for over 20 years. In addition to overseeing the direction of Tom's Guide, Mark specializes in covering all things mobile, having reviewed dozens of smartphones and other gadgets. He has spoken at key industry events and appears regularly on TV to discuss the latest trends, including Cheddar, Fox Business and other outlets. Mark was previously editor in chief of Laptop Mag, and his work has appeared in Wired, Popular Science and Inc. Follow him on Twitter at @mspoonauer.

-

shane.brysch I'd like to see a match up of the Huawei Mate 10 Pro vs the Pixel 2 XL. Because of Apple's push to have their own proprietary means of product distribution along with limitations that have come from that through the products' growth cycles, there are plenty of people that will just use an android based OS. However, Samsung, along with several other brands, push lots of bloatware onto the consumer to begin with. That coupled with a carrier's bloatware really makes the whole experience suck.

That said, Huawei seems to be sticking to a more stock Android experience as well with just a few things added rather than an all out "load the boat" mentality. I'd presume that the performance for the Mate 10 Pro is going to be quite impressive on these marks as well.

-

mount.victor.oz Look at the detail differences between the Pixel 2 and the iPhone 8 in both the Lego and Raccoon pictures. While the iphone is brighter, it's overexposed. The outside environment (looking out of the window) shows that the iphone simply has the exposure incorrect. The finer details in the raccoon's fur are also quite noticeable. If you ratchet up the exposure on the Pixel, the images would have similar brightness but the details would be present in the Pixel where they are not in the iPhone.

-

Mark Spoonauer Good point on the cameras. We'll be doing a more in-depth camera face-off.

Agree on detail on raccoon but not Lego. Also worth keeping in mind that only iPhone 8 has true 2x optical zoom, and you can actually preview the Portrait Mode effect when shooting.

-

tolkien00 I agree with Anonymous' response to Mark Spoonaur, I'm glad someone point it out and feel that the iPhone has way more color saturation that while will make what you're aiming at will pop more, the rest of the picture just looks blah. Balance needs work, possibly could get handled software-level hopefully.

-

Linked_1 The pixel 2 is a straight up scam phone.

It always rushes about 2 months to get Oreo out before other phones have it so that it can have a temporary edge. If it took the additional 2 months it wouldnt have all the issues it has but it also wouldn't seem as impressive since all the devices will be a lot smoother, snappier and efficient once they're all on the same developmental cycle.

The pixel also doesn't have the hardware integration and optimization that galaxies have and that takes time. The audio, display, security, radio, etc all have hardware optimized functionality and capabilites that the pixel doesn't have.

It forgoes all that to have a bare framework that is much easier to have drop less frames and also much easier to patch.

There isn't any coverage of the hardware optimization that goes into galaxies so users are often surprised when they switch over to stock and find that so much of it is less refined and restrictive..

If all you do is use basic apps then you might not notice as much but you'll still miss out on all the audio video security radio and general customization refinements.

You also miss out on things like the pressure sensitive home button and app rescaler, auto hiding nav bar and lots of stuff like that. All that takes time.

Google can also get away with rushing out updates and then just patch up issues that users have later on

-

origsquig linked sounds like an s8 sales guy, pixel kills the s8 period, the tests prove it, the performance proves it.

-

tntom I just bought two Pixel 2 phones (5 days). My wife and I love them. Since I was coming from the LG G3 (LineageOS 14.1) I was repulsed by the large bezels but after seeing it in person and holding it in hand it is a total non-issue. Going from 5.5" screen to a 5" is what is difficult for me. It is just too small and cramps my hand to hold on to it. A protective case may help add some bulk. So it is going back to be traded in for the Pixel 2 XL. For my wife it is perfect as she is coming from the Lumia 640. I just installed Square Home and she is thrilled to have working apps.