Tom's Guide Verdict

Amazon's Alexa-powered camera that tells you what to wear is a neat idea, but it's not for everyone.

Pros

- Easy to use
- Good photos
- Quick feedback
- Useful app features

Cons

- Bad audio
- Style feedback is very general
- Creepy-looking

When it comes to fashion, I'm close to clueless. I buy clothing based on what others (particularly the celebrities and influencers I stalk on social media) seem to be wearing. I shop at T.J. Maxx, Nordstrom Rack and whatever other department store I happen to pass on my way home from work.

As a New Year's resolution, I decided to step up my fashion game with the help of Amazon's Echo Look.

This $199 selfie camera has Alexa built in, meaning you can use it to call an Uber, check the weather and do everything else Amazon's voice assistant can do. But the Echo Look's primary role is as an AI-powered stylist. Stand in front of this device and say, "Alexa, take a photo," and the Look will count down, lights will flash and a full-body photo of you will appear in the Echo Look's companion app.

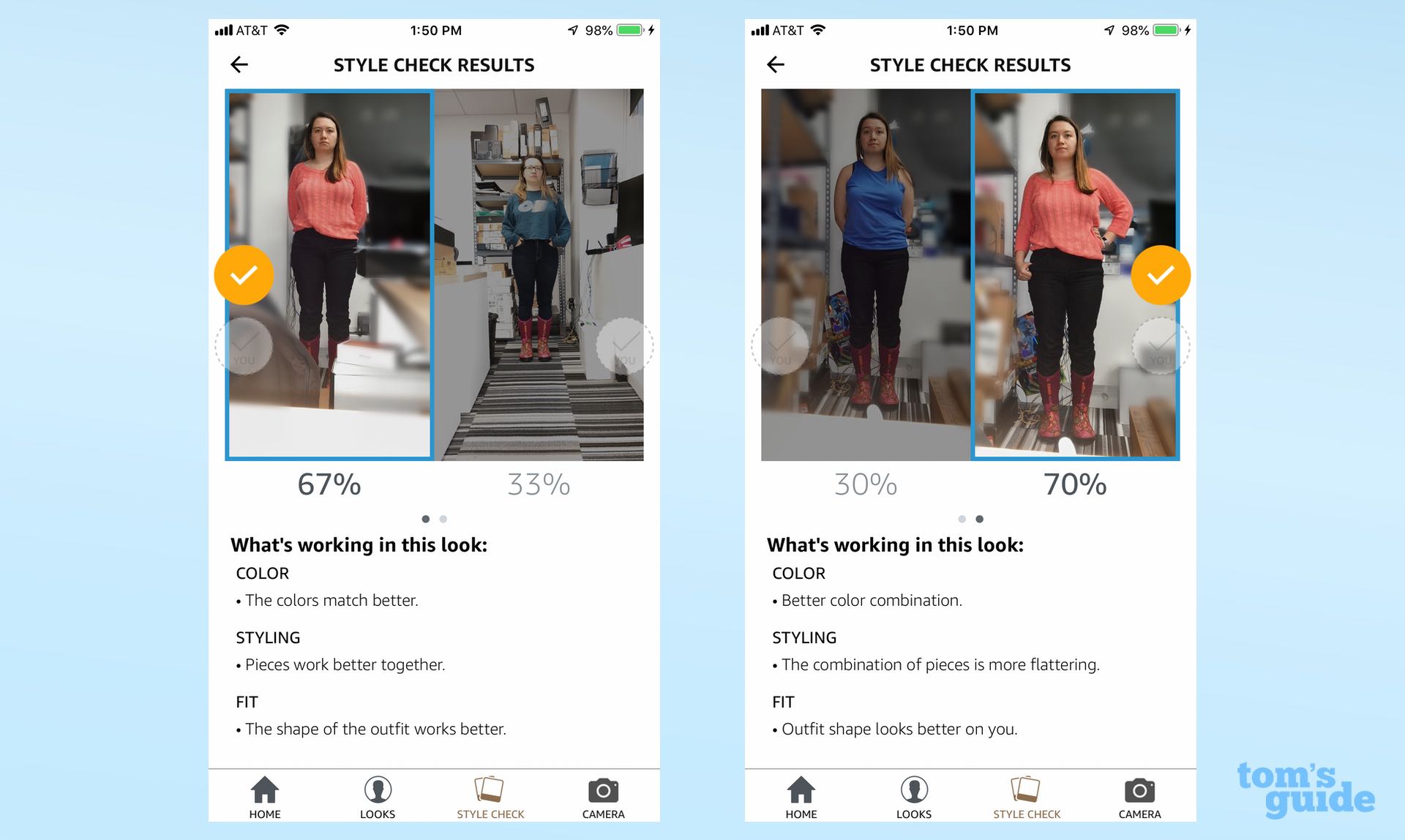

The app is where the real fun begins. Upload two photos of you wearing two different outfits, and Alexa will tell you which one looks better, using an algorithm informed by human fashion experts. (You can upload photos from any camera, but good luck getting a full-body selfie on your own.)

I spent a week taking fashion advice from the Echo Look. There were a lot of things I liked — and a few that I didn't.

Style assistant first, smart speaker second

First things first — while this is an Alexa-enabled device, don't look at it as a smart speaker. I used it to play some music, and it wasn’t ideal.

A couple of tracks by 'N Sync and the Spice Girls sounded tinny and thin, and the audio doesn't get nearly as loud as that of the Look's counterparts in the Echo lineup (including the relatively small Echo Spot and Echo Dot). What's more, there aren't any physical buttons on the device (apart from the one that mutes the camera), meaning you need to open the Alexa app to change the Look's volume.

Second, anyone who is creeped out by a camera in their room should steer clear of this speaker. I barely notice my laptop's exposed webcam, but even I was uneasy with this thing staring at me as I went about my day. The Look is so periscope-y, and its camera so pronounced, that it really looks like this device is watching you (even though the camera isn't on until you ask it to take a picture). I found myself turning it around before I went to sleep.

But the Echo Look served its intended purpose just fine: It helped me dress. Each morning I submitted two outfits, and each morning the app told me which one to wear.

What I like about Echo Look

With a 5-megapixel camera, the Look is no DSLR. The selfies weren't premium-quality and probably not something I'd share on Instagram. But that's not their purpose.

What this product does really well, with the help of Intel RealSense SR300 depth-sensing technology, is blur out the background behind you, portrait-mode style. The camera never once mistook something else for the subject of the photo. Even when my environment was quite busy, the Look put the focus entirely on my outfits.

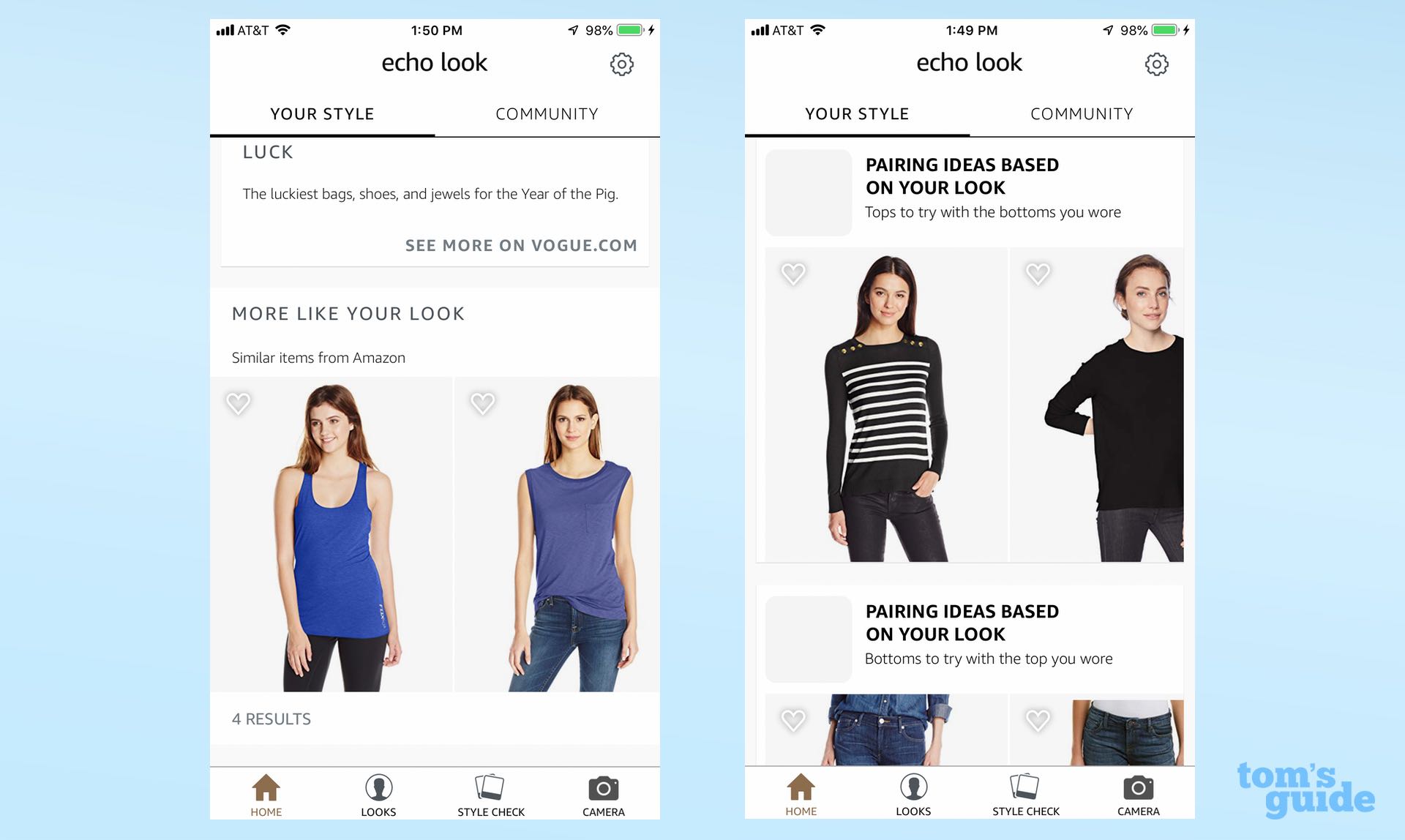

I found many of the app's other features useful. I like that you can sort your outfits into collections based on weather and season and that Alexa shows you your top colors each month. Most of all, I like that the app recommends items of clothing based on your past looks; I was surprised that it actually showed me things I would wear and things I could afford.

Cookie-cutter advice?

I did feel that the Echo Look was pushing me toward certain trends. It encouraged brighter colors, nudging me toward sweaters and away from tank tops and crop tops, toward looser pants and away from tight jeans. Those are understandable preferences, but I would've liked to have known why.

The app gives reasons, but they're very cookie-cutter: "Outfit shape looks better on you," or "The combination of pieces is more flattering." But what do those phrases mean? Surely qualities like "better" and "flattering" are at least somewhat subjective when it comes to clothing on a body.

As VentureBeat discovered, the stylists who trained the Look's AI tend to be young women from the Seattle area. Perhaps this algorithm is excellent for that demographic, and perhaps it can even be generalized to young women in New York or New Yorkers in general. But I wonder how it could possibly meet everyone's needs — atypical body shapes, non-Western countries, odd dress codes, varying cultures and customs — without inadvertently pushing or enforcing some sort of biased norm along the way. Without more context and more about the reasoning behind Alexa's choices here, it's hard to know.

I contacted Amazon about my concern, and in response, a spokesperson said that the Echo Look "takes into account the characteristics of each customer to make the holistic assessment. Two customers wearing the same outfit may receive different responses depending on how it looks on them."

Bottom Line

I don't think I will continue to use the Echo Look, mainly because it doesn't quite suit my lifestyle. I just don't have the time to put on two distinct outfits each morning, take full-body photos and wait for Alexa to get back to me before heading out the door.

I'd rather have an app that could offer feedback on just one outfit. ("Try a darker shirt," or "It's too cold for a tank top, you idiot.") I'm sure something like that is more difficult to program, but if an Echo Look 2 with such a feature comes out in the future, count me in.

But I like the idea of this device, and the Look works well for what it's trying to do. If you're the target audience for this device — and you probably know who you are — the Look may be worth a look.

Credit: Tom's Guide

Monica Chin is a writer at The Verge, covering computers. Previously, she was a staff writer for Tom's Guide, where she wrote about everything from artificial intelligence to social media and the internet of things. She had a particular focus on smart home, reviewing multiple devices. In her downtime, you can usually find her at poetry slams, attempting to exercise, or yelling at people on Twitter.