Google Assistant and Siri can be silently hacked through a table: What to do

Ultrasonic waves can command Siri and Google Assistant through wood, metal or glass

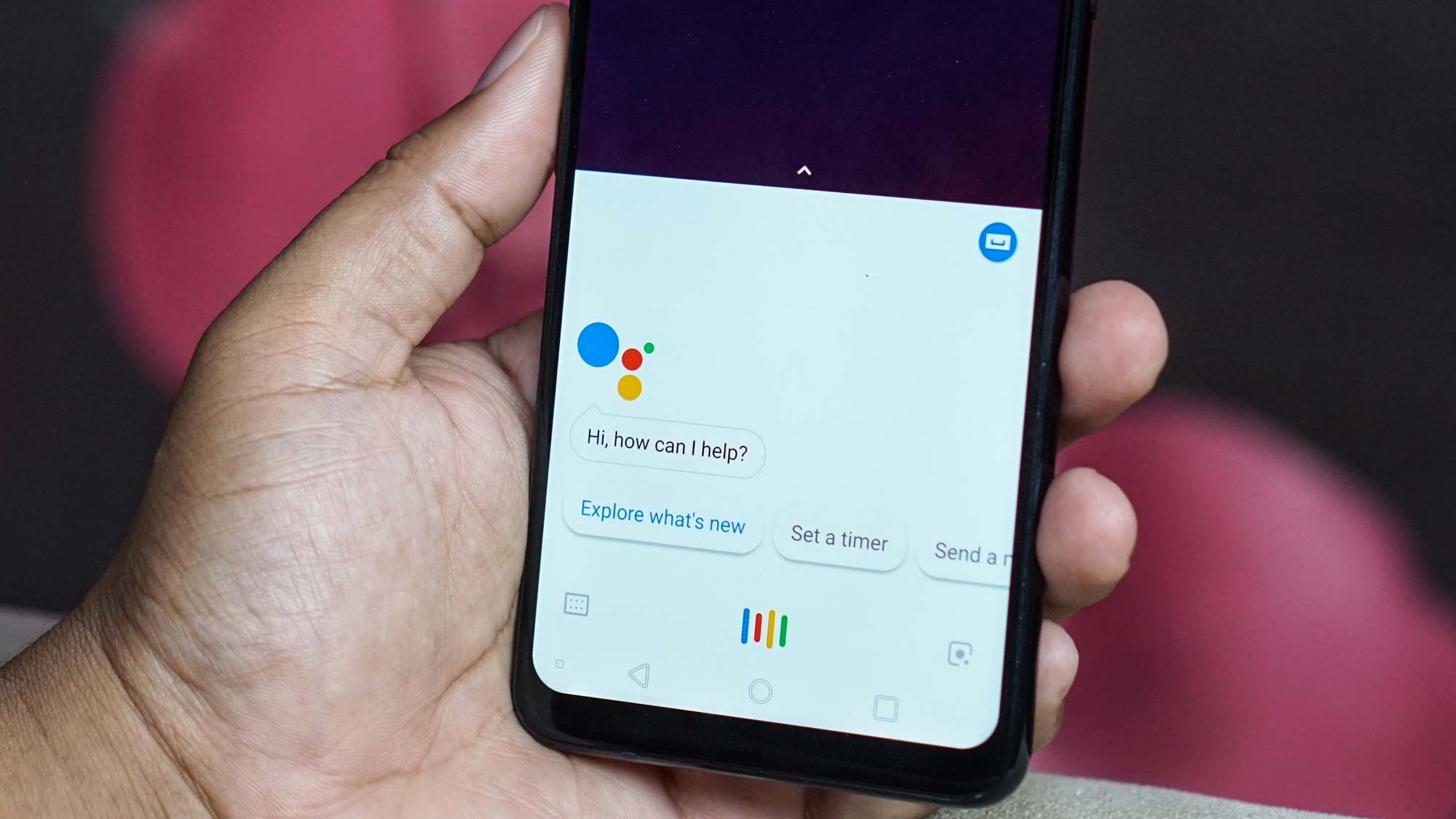

Inaudible vibrations sent through a tabletop from up to 30 feet away can secretly command Apple's Siri and Google Assistant without a phone owner's knowledge, academic researchers revealed last week.

"By leveraging the unique properties of acoustic transmission in solid materials, we design a new attack called SurfingAttack that would enable multiple rounds of interactions between the voice-controlled device and the attacker over a longer distance," the researchers said on a website dedicated to showcasing their discovery.

"SurfingAttack enables new attack scenarios, such as hijacking a mobile Short Message Service (SMS) passcode, making ghost fraud calls without owners' knowledge, etc."

A fun video, one of many posted on YouTube by the researchers, shows a first-generation Google Pixel taking selfies and reading out text messages (including two-factor-authentication secret codes), all in response to inaudible commands transmitted by a flat speaker attached to the tabletop and connected to an off-screen laptop.

The Pixel is even shown making a call to an older-model iPhone in response to a hidden command. A synthesized voice on the Pixel requests a secret access code from the iPhone's owner, and Siri responds with the code.

In theory, a naughty office worker could attach a piezoelectric speaker to the bottom of a conference table during an important company meeting, then send out silent commands from his laptop to his co-workers' phones as long as they were lying on the table, preferably unlocked.

How to keep your phone safe

The attack -- ahem, SurfingAttack -- worked on 15 out of 17 phone models tested, including four iPhones (5, 5s, 6 and X), the first three Google Pixels, three Xiaomis (Mi 5, Mi 8 and Mi 8 Lite), the Samsung Galaxy S7 and S9 and the Huawei Honor View 8.

It failed on the Samsung Galaxy Note 10 Plus and the Huawei Mate 9, both of which have slightly curved backs.

If you don't have a curved phone, then the researchers recommend placing your phone on a "soft woven fabric" or using "thicker phone cases made of uncommon materials such as wood." Or, they add, you could just disable voice-assistant access when the phone is locked, then make sure the phone is locked when you're sitting in a long meeting.

There are other caveats. The silent commands sent through the tabletop still have to sound like your voice to work, but a good machine-learning algorithm, or a skilled human impersonator, can pull that off.

Siri and Google Assistant will audibly talk back to the silent commands, which won't escape attention if anyone is around to hear them in a quiet room. However, the silent commands in the researchers' demonstrations also told the voice assistants to turn down the volume, so the responses were hard to hear in a loud room but could still be picked up by the attacker's hidden microphone.

An attack for all surfaces

In any case, as researcher Qiben Yan of Michigan State University told The Register, "many people left their phones unattended on the table."

"Moreover, the Google Assistant is not very accurate in matching a specific human voice," Yan added. "We found that many smartphones' Google Assistants could be activated and controlled by random people’s voices."

Previous work on secretly triggering voice assistants has involved sending ultrasonic commands through the air and focusing laser beams on smart speakers' microphones from hundreds of feet away. This is the first time anyone has demonstrated an attack through a solid surface, however.

The tested surfaces included tables made of metal, glass and wood. Phone cases didn't do much to dampen the vibrations.

The research was carried out by Qiben Yan and Hanqing Guo at SEIT Lab, Michigan State University; Kehai Liu at the Chinese Academy of Sciences; Qin Zhou at the University of Nebraska-Lincoln; and Ning Zhang at Washington University in St. Louis. You can read their academic paper here.

Paul Wagenseil is a senior editor at Tom's Guide focused on security and privacy. He has also been a dishwasher, fry cook, long-haul driver, code monkey and video editor. He's been rooting around in the information-security space for more than 15 years at FoxNews.com, SecurityNewsDaily, TechNewsDaily and Tom's Guide, has presented talks at the ShmooCon, DerbyCon and BSides Las Vegas hacker conferences, shown up in random TV news spots and even moderated a panel discussion at the CEDIA home-technology conference. You can follow his rants on Twitter at @snd_wagenseil.
