Intel RealSense 3D: What It Is and What You Do With It

Here are 10 ways you can use Intel's new RealSense 3D (depth-sensing) camera technology, which is appearing in a lot of new PCs and tablets.


We've been talking about Intel's RealSense 3D depth-sensing cameras for years, but the technology finally made its debut in mainstream products at CES 2015. More than half a dozen PCs and tablets from the likes of Acer, Dell, Lenovo and HP are now shipping with RealSense, and more devices are on the way, so many consumers and business users will be getting systems with the technology this year. But just what does it do? Here's a quick guide.

Depth-Sensing Technology

RealSense cameras combine three components: a standard 2D camera for regular photos and video, an infrared camera and an infrared laser projector. The infrared parts let RealSense measure how far away objects are, separating them from the background layers behind them and allowing for much better object, facial and gesture recognition than a traditional camera. The cameras come in three flavors: front-facing, rear-facing and Snapshot.

Right now, front-facing cameras are the most common. They appear on a number of new PCs and support gaming, gesture control and communication apps. Rear-facing cameras will arrive on tablets later this year and will focus more on 3D scanning. The Dell Venue 8 7000 tablet is the first device with RealSense Snapshot, which is made just for still photography and lets you change the focus or measure real distances in a photo after it has been taken.


RealSense Uses So Far

The chipmaker has shown off several ingenious demos of its depth-sensing technology. These are the 10 best uses of RealSense 3D so far, but many more are sure to come.

1. Realistic Avatars

RealSense is really good at scanning faces and turning them into 3D models that can be used not only for 3D printing but also in software. I tested FaceShift, an application that replaces your real face with an avatar for video chats.

After I leaned in for a few seconds so that the software could scan my face, I was able to chat as a robot, a red-haired boy or an ogre. As I moved my eyes, eyebrows, lips and cheek muscles, the avatar followed my expressions closely. Unfortunately, it couldn't stick out its tongue when I did, because the camera doesn't detect the inside of your mouth.


FaceShift is cute but not particularly useful, and it reminded me a lot of the silly Talking Tom Cat app that many people have used on Android and iOS with their phones' standard 2D cameras. However, the technology has real potential for serious conversations with people who want to remain anonymous. Hopefully there will be a "shadowy face" avatar at some point so journalists can interview secret sources this way.

Another app, called 3Dme, scans your face and puts it on a digital body, which can be printed as a figurine. If that avatar could also be used in games or virtual worlds, it could be even more compelling than a chat ogre.

2. 3D Scanning for 3D Printouts

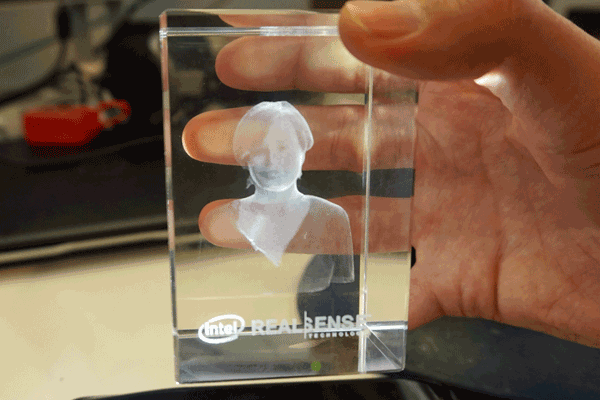

Though you won't be able to buy a system with a rear-facing RealSense camera until later in the year, Intel has a prototype tablet that can scan people's torsos and then 3D print them as decorative crystal paperweights.

The potential is there to create not just interesting paperweights but fully functional 3D-printed objects. Just imagine 3D scanning one screw so you can print out another one.

Intel product manager Anil Nanduri told me that front-facing RealSense cameras can perform 3D scanning when used with the right software. Of course, the ergonomics of pointing your laptop webcam at an object present a challenge. You'd have to lower the lid to just the right angle, place a small object in front of the deck and then carefully rotate it, or use a rotating platform, to capture all sides.

3. Green Screening Without a Screen

If you're doing a video chat and want someone to see your face but not the messy living room behind you, a program called Personify will automatically remove the background and replace it with a graphic or a plain white space. In the past, you needed to film against a solid-color background to separate your torso from the objects behind you, but RealSense's depth sensors can tell the difference on their own.

Imagine doing a podcast about outer space with animated stars behind you instead of your basement paneling. Picture yourself on a video call from home with your boss, showing a blank white space behind you rather than the wall your toddler just scribbled on.
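Personify's own method isn't described here, but the basic idea behind depth-based "green screening" can be sketched in a few lines: keep the pixels whose depth reading is closer than some cutoff and paint everything else a fill color. This is a hypothetical illustration, not Personify's or Intel's code. It assumes the color and depth frames are already aligned pixel for pixel, that depth is in millimeters, that a reading of 0 means "no data," and that 1.2 m is a reasonable cutoff for someone sitting at a laptop.

```python
from typing import List, Tuple

Pixel = Tuple[int, int, int]  # (R, G, B)


def remove_background(
    color: List[List[Pixel]],
    depth_mm: List[List[int]],
    cutoff_mm: int = 1200,          # hypothetical cutoff: roughly arm's length from a laptop
    fill: Pixel = (255, 255, 255),  # plain white replacement, as in the article
) -> List[List[Pixel]]:
    """Keep pixels closer than cutoff_mm; replace the rest with fill.

    Assumes color and depth frames are the same size and already aligned.
    """
    out: List[List[Pixel]] = []
    for color_row, depth_row in zip(color, depth_mm):
        new_row: List[Pixel] = []
        for pixel, d in zip(color_row, depth_row):
            # Assumption: a depth of 0 means "no reading", so treat it as background.
            if 0 < d <= cutoff_mm:
                new_row.append(pixel)   # foreground: keep the original color
            else:
                new_row.append(fill)    # background: swap in the fill color
        out.append(new_row)
    return out


# Tiny 2x2 example: top-left and bottom-right pixels are close, the others are not.
color = [[(10, 20, 30), (40, 50, 60)],
         [(70, 80, 90), (100, 110, 120)]]
depth = [[800, 2500],
         [0, 1100]]
print(remove_background(color, depth))
# [[(10, 20, 30), (255, 255, 255)], [(255, 255, 255), (100, 110, 120)]]
```

A single hard cutoff like this tends to leave jagged edges around hair and shoulders, so a shipping product would likely need extra cleanup that this sketch leaves out.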

4. Measuring Distances in Pictures

The Dell Venue 8 7000 tablet has the RealSense Snapshot camera, which adds depth information to the photos it takes. With photos taken on the tablet, I was able to measure the distance between two points by drawing a line on the picture with my finger. For example, the software told me that the diameter of a bike wheel in a photo was 27 inches.

Unfortunately, the software can't calculate everything. When I tried drawing measurement lines on a photo of an ice cream stand, the software informed me that many of my lines represented distances that were "too far" to measure.
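The article doesn't say how Dell's software turns a line drawn on a photo into inches. A common general approach, shown here as a minimal sketch, is to back-project each endpoint into 3D with a pinhole camera model and take the straight-line distance between the two resulting points. The intrinsics (`fx`, `fy`, `cx`, `cy`), the meter units and the 3 m `max_range_m` limit are all assumptions for illustration, not Snapshot specs. The range check mimics the "too far" message by refusing to measure when a depth reading is missing or beyond the assumed usable range.

```python
import math
from typing import Optional, Tuple

Point3D = Tuple[float, float, float]


def deproject(u: float, v: float, depth_m: float,
              fx: float, fy: float, cx: float, cy: float) -> Point3D:
    """Pinhole model: turn pixel (u, v) plus its depth into camera-space meters."""
    x = (u - cx) * depth_m / fx
    y = (v - cy) * depth_m / fy
    return (x, y, depth_m)


def measure_distance(p1: Tuple[float, float], d1: float,
                     p2: Tuple[float, float], d2: float,
                     fx: float, fy: float, cx: float, cy: float,
                     max_range_m: float = 3.0) -> Optional[float]:
    """Distance in meters between two tapped pixels, or None if either is unmeasurable.

    max_range_m is a hypothetical usable range, not a published RealSense spec.
    """
    for d in (d1, d2):
        # No reading (0) or beyond the usable range: the "too far" case.
        if d <= 0 or d > max_range_m:
            return None
    a = deproject(p1[0], p1[1], d1, fx, fy, cx, cy)
    b = deproject(p2[0], p2[1], d2, fx, fy, cx, cy)
    return math.dist(a, b)  # Euclidean distance (Python 3.8+)


# Made-up intrinsics for a 640x480 image; both points are 1 m from the camera.
print(measure_distance((320, 240), 1.0, (570, 240), 1.0, 500, 500, 320, 240))  # 0.5
print(measure_distance((320, 240), 1.0, (570, 240), 5.0, 500, 500, 320, 240))  # None
```

Multiplying the result by about 39.37 converts meters to inches. Because the distance comes from back-projected 3D points rather than raw pixel counts, two objects that look the same size in a photo can measure very differently if one is farther from the camera.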

5. Photo Layer Editing and Focusing

The Venue 8's camera also allows you to change which layers of a photo are in focus, long after you capture the image. You can even use filters that transform the color of some layers while leaving others untouched.

When I opened a photo of a vegetable stand and selected "Refocus" from the editing menu, I was able to blur or sharpen each set of vegetables, just by tapping on them.

I also enjoyed applying filters to the different layers on an ice cream stand photo so that the ice cream and the torch lights above the stand were in full color, but the buildings behind it were grayscale.

6. Holographic Navigation

Intel has shown a prototype PC with a virtual nav bar that appeared to float in midair in front of the lower screen bezel. RealSense didn't create the hologram itself, but it let me interact with the virtual buttons by judging whether my fingers were at the right height to tap them.


7. Accurate Gesture Control

You can do gesture control with a regular 2D camera, but it doesn't always work well, particularly if your hand blends into the background or you put one hand in front of the other. RealSense can easily distinguish all your fingers from each other and from your body and the world behind you.

At CES 2015, Intel had a giant wall of LED displays with a snow scene displayed on them and a series of RealSense cameras on top. As I raised my hand and moved my arms, the snow on the screen wall moved to shadow me.

8. Gaming With Your Body

A good use case for RealSense's gesture control is gaming. I got to play a spaceship game that relied on my movements for turning and firing.

RealSense games can use other body parts as controls, too. In a LEGO racing game I tried, I steered a speedboat left and right by tilting my head. When I drove up a ramp and jumped into the air, I could lift my hands to grab power-ups.

9. 3D Gaming with Oculus

RealSense can add hand recognition to existing gaming systems such as the Oculus Rift VR headset. Editor Mike Andronico played a game of volleyball on the headset, sticking his hands out in front of him to hit the ball. While Oculus has always offered immersive virtual worlds, playing games in them normally requires a controller. Adding Intel's depth-sensing cameras lets players use their hands instead.


10. Object Avoidance

How do you make sure your new drone doesn't run into someone else's drone when you take it for a flight in the park? Intel showed off a sample drone with RealSense cameras on each side, allowing it to see how far it is from other objects and adjust its course to avoid a collision.

Imagine using this same technology in a self-driving car or to assist you when you're parallel parking your own vehicle.
