The ‘glitch prompt’ makes ChatGPT smarter — here’s what happened when I tried it on Claude

Claude automatically checks its work, but it still glitches

My "glitch" prompt went somewhat viral after users discovered it could force ChatGPT to slow down, double-check its reasoning and correct its own mistakes. AI chatbots are getting smarter and faster by the week, but they’re still prone to one frustrating problem: confidently giving answers that aren’t quite right.

That’s why I started using it with ChatGPT, a chatbot that's notorious for being overly confident despite being wrong 1 out of 4 times. The simple instruction forces the chatbot to pause, review its own response and correct potential mistakes before presenting a final answer.

When I used it with ChatGPT, the results were surprisingly good: it often caught missing steps or clarified details that made the response more reliable.

Naturally, I wondered: Would the same trick work with Claude? Anthropic’s chatbot is widely praised for being thoughtful and analytical. In theory, adding a self-audit prompt should make it even better.

But after running several tests, I found something interesting: Claude didn’t really need the help. Here's what happened.

The 'glitch' prompt explained

The idea behind the glitch prompt is simple. Instead of accepting the chatbot’s first answer, you immediately ask it to check its own work.

Here is the prompt: Pause — I think there may be a glitch. Review your previous answer for: mistakes, missing steps, unsupported assumptions and invented details. Then rewrite the answer more carefully and give a confidence rating from 1–10.
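If you reach a chatbot through code rather than a chat window, the same two-step flow can be sketched in a few lines of Python. This is only an illustration: the `ask` function is a hypothetical stand-in for whatever client you use to send a conversation to a model, and `run_with_glitch_check` and `fake_ask` are names made up for this example, not part of any real library. The prompt text is copied from above.

```python
from typing import Callable, Dict, List

Message = Dict[str, str]

# The glitch prompt from this article. \u2014 and \u2013 are the dash
# characters that appear in the original wording.
GLITCH_PROMPT = (
    "Pause \u2014 I think there may be a glitch. Review your previous answer for: "
    "mistakes, missing steps, unsupported assumptions and invented details. "
    "Then rewrite the answer more carefully and give a confidence rating "
    "from 1\u201310."
)


def run_with_glitch_check(
    question: str, ask: Callable[[List[Message]], str]
) -> Dict[str, str]:
    """Ask a question, then send the glitch prompt as a follow-up turn.

    `ask` is a hypothetical callable: it receives the conversation so far
    and returns the chatbot's reply as plain text.
    """
    history: List[Message] = [{"role": "user", "content": question}]
    first = ask(history)

    # Keep the first answer in the conversation so the model can review it.
    history.append({"role": "assistant", "content": first})
    history.append({"role": "user", "content": GLITCH_PROMPT})
    revised = ask(history)

    return {"first": first, "revised": revised}


def fake_ask(history: List[Message]) -> str:
    """Stand-in for a real chatbot call, used only to show the flow."""
    return f"reply to turn {len(history)}"


if __name__ == "__main__":
    result = run_with_glitch_check("Why does my Wi-Fi keep dropping?", fake_ask)
    print(result["first"])
    print(result["revised"])
```

The key detail is that the first answer stays in the conversation history, so the follow-up asks the chatbot to review its own earlier response rather than start over.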

With ChatGPT, this prompt often results in a noticeably improved response. The model re-examines its answer, fills in missing context and sometimes corrects errors. Claude, however, behaved differently.


Test 1: A troubleshooting question

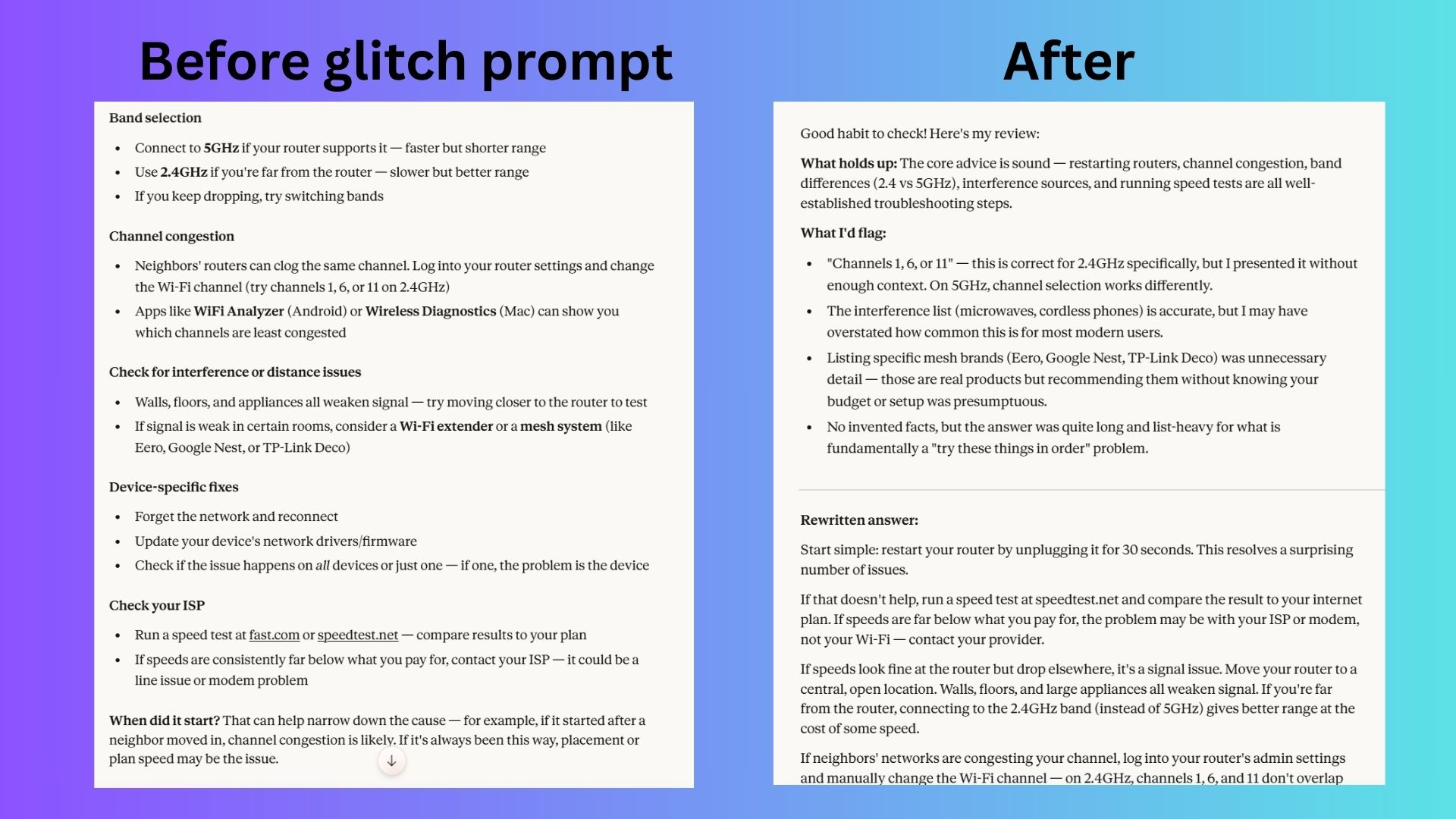

First I asked Claude a fairly typical tech support question about fixing a Wi-Fi connection that keeps dropping.

Claude’s initial answer was already quite thorough. It explained possible causes, suggested troubleshooting tips and even flagged that some steps might depend on the router model.

After applying the glitch prompt, Claude responded again, and the new answer was even better. It reorganized the information into a clearer, step-by-step troubleshooting process instead of a broad list of tips. It removed unnecessary details (such as specific apps and mesh router brands), clarified technical points like the use of channels 1, 6 and 11 specifically for 2.4GHz networks, and focused more on diagnosing the problem logically, starting with restarting the router and running a speed test before moving on to signal, channel congestion and device issues.

Overall, the rewrite was shorter, more structured and avoided assumptions about my setup. The first answer was fine, but this one was significantly better.

Test 2: A reasoning question

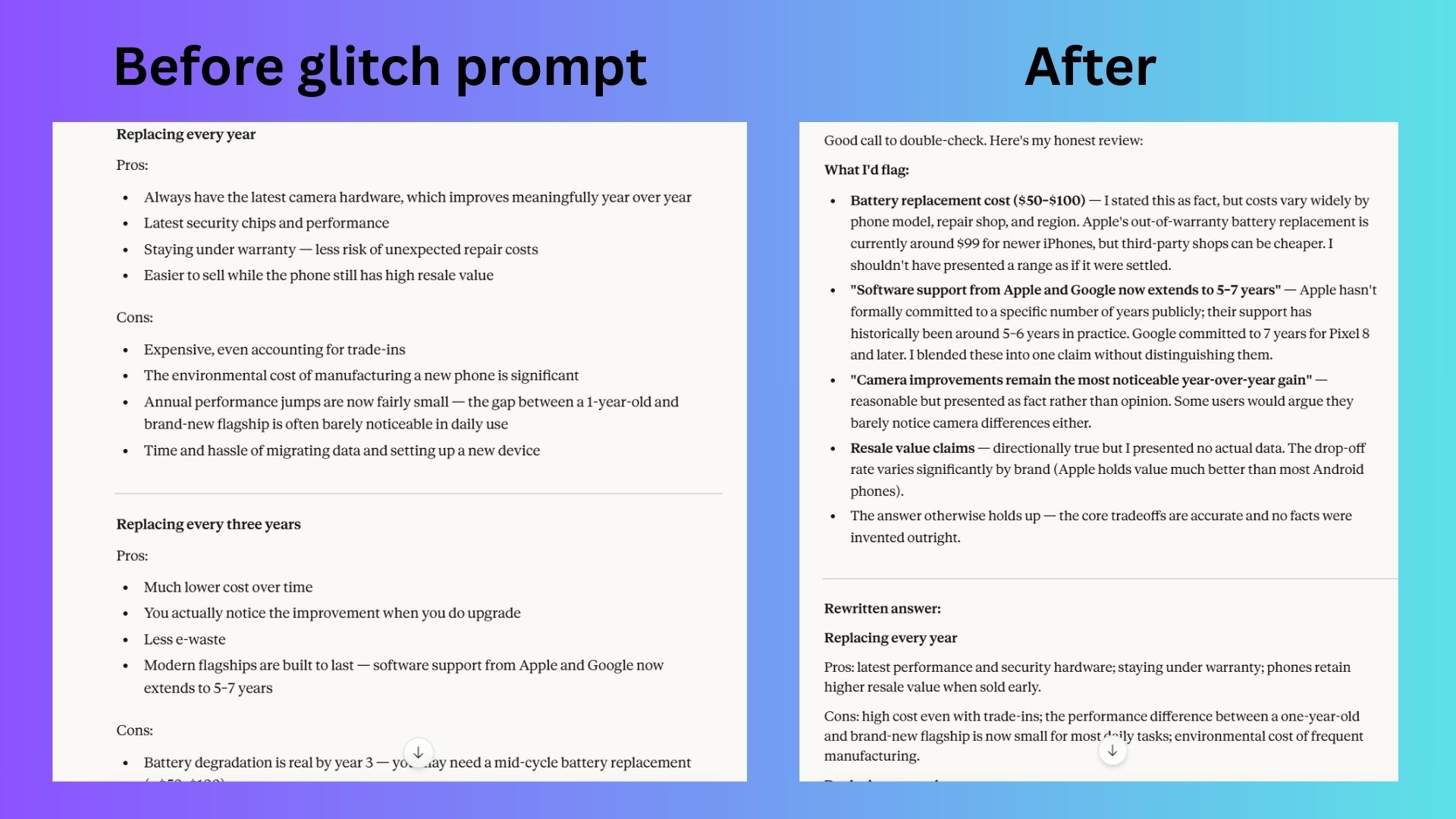

Next I asked Claude to explain the pros and cons of replacing a smartphone every year versus every three years.

Again, the first response was balanced and well structured. Claude listed environmental impacts, financial considerations and technological benefits.

When I triggered the glitch prompt, Claude revised its answer with a more careful and precise response. It removed or softened claims that were presented too confidently, such as the exact cost of battery replacement and the length of software support across brands. The rewrite also clarified distinctions between companies — for example noting that Google has committed to seven years of updates for newer Pixel devices, while Apple’s support has historically been around five to six years but varies by model.

Additionally, the tone shifted from stating some points as facts to framing them as general trends or considerations, making the explanation more accurate and transparent. The tradeoffs themselves remain largely the same, but the updated version reduced assumptions and acknowledged where details can vary by device, brand or repair situation.

Test 3: A general knowledge question

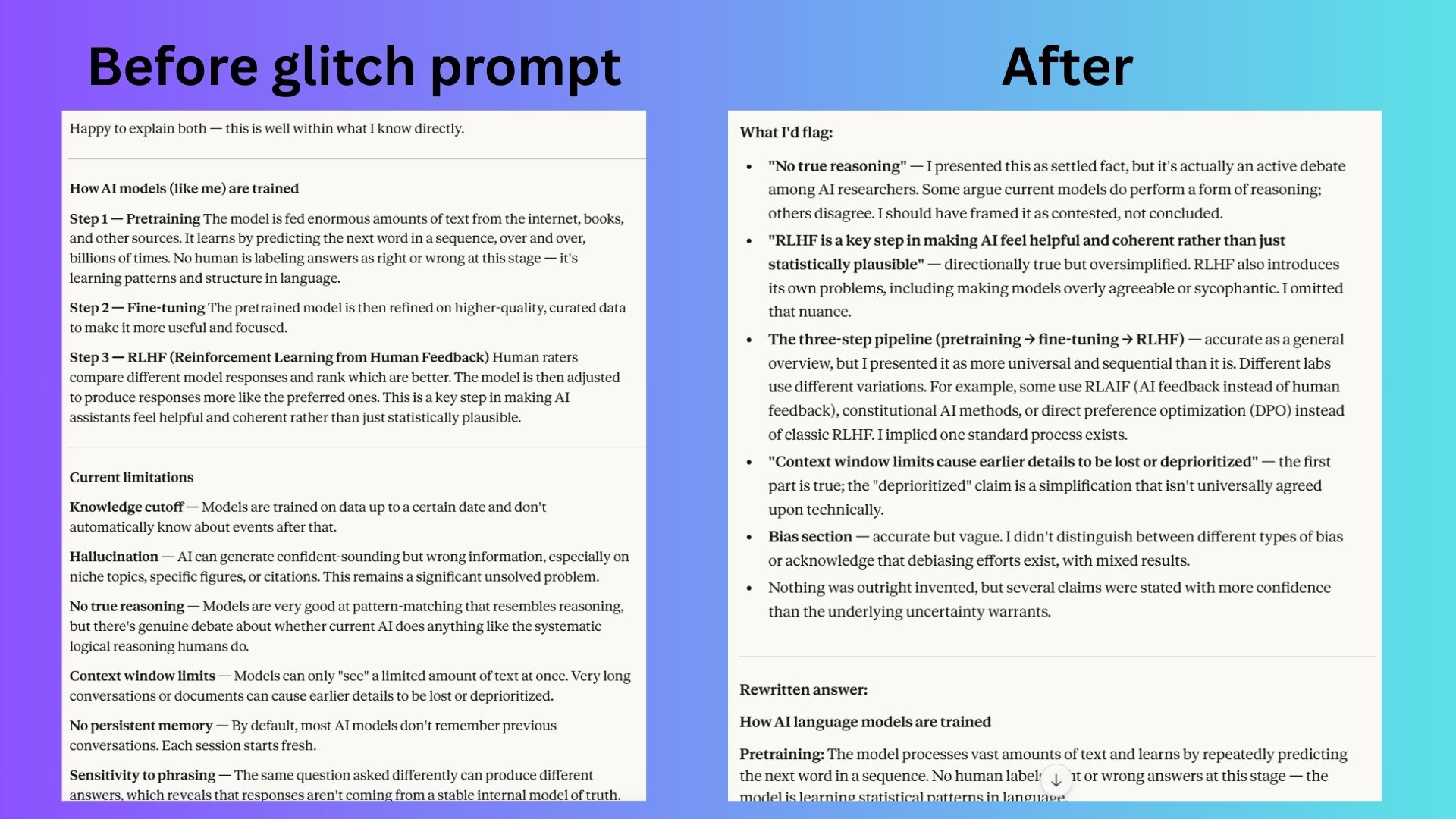

Finally I tried a broader question about how AI models are trained and what limitations they still have.

Claude’s original answer already included caveats about uncertainty, possible errors and areas where models can hallucinate. When prompted to check for a “glitch,” Claude revised its answer just slightly. The basic description of how AI models are trained remained the same, but the rewrite clarified that the common pipeline of pretraining, fine-tuning and RLHF is only a general pattern rather than a universal process, noting that different labs use alternative methods such as AI feedback or other alignment techniques.

It also softened claims that were previously presented too definitively — for example, reframing the statement about AI lacking true reasoning as an ongoing debate among researchers rather than a settled fact.

In addition, the updated version added nuance around alignment training and bias, acknowledging both the benefits and potential drawbacks. Overall, the second answer was more precise, avoided overgeneralization and better reflected the uncertainty and variation within current AI research.

Why the glitch prompt works differently on Claude

The experiment revealed something interesting about how different chatbots behave.

The "glitch" prompt is effective with ChatGPT largely because it forces the model to slow down and re-evaluate its own output. ChatGPT is notoriously fast, which makes that second pass helpful for catching mistakes.

Claude, however, is already designed to behave more cautiously. It already explains its reasoning, acknowledges uncertainty and outlines assumptions. Because of that, asking Claude to “check for a glitch” often results in very little change.

The model had essentially done the self-review already. The one case where I find this prompt particularly helpful with Claude is breaking news, because the chatbot is not particularly strong with highly fluid and uncertain information.

The takeaway

The bottom line here is that the "glitch" prompt is an excellent way to check the work of any chatbot. However, some AI assistants are more accurate than others.

The prompt works better with ChatGPT because it forces the fast chatbot to slow down for improved accuracy. It's helpful with Claude, but not as necessary since the chatbot already operates with caution. It doesn't hurt to encourage any chatbot to go back and check its work.


Amanda Caswell is one of today’s leading voices in AI and technology. A celebrated contributor to various news outlets, her sharp insights and relatable storytelling have earned her a loyal readership. Amanda’s work has been recognized with prestigious honors, including outstanding contribution to media.

Known for her ability to bring clarity to even the most complex topics, Amanda seamlessly blends innovation and creativity, inspiring readers to embrace the power of AI and emerging technologies. As a certified prompt engineer, she continues to push the boundaries of how humans and AI can work together.

Beyond her journalism career, Amanda is a long-distance runner and mom of three. She lives in New Jersey.
