I tested Anthropic’s new Claude Opus 4.7 — and it’s the first AI that actually ‘reasons’ through tasks

7 stress tests to see if Claude Opus 4.7 can really code, research, and design autonomously

Anthropic just released Claude Opus 4.7, and the first difference I noticed in testing was how closely the model listens and responds to my questions. I put the latest update through its paces with a battery of tasks designed to trip it up: autonomous coding, self-verifying research and even some home design advice.

What I found was a model that’s moving away from being a mere chatbot and toward being a reliable digital architect. If you’re used to AI taking 'creative liberties' with your requests, Opus 4.7 is about to be a major wake-up call.

Here are seven prompts that highlight what the model can do now.

1. Autonomous engineering

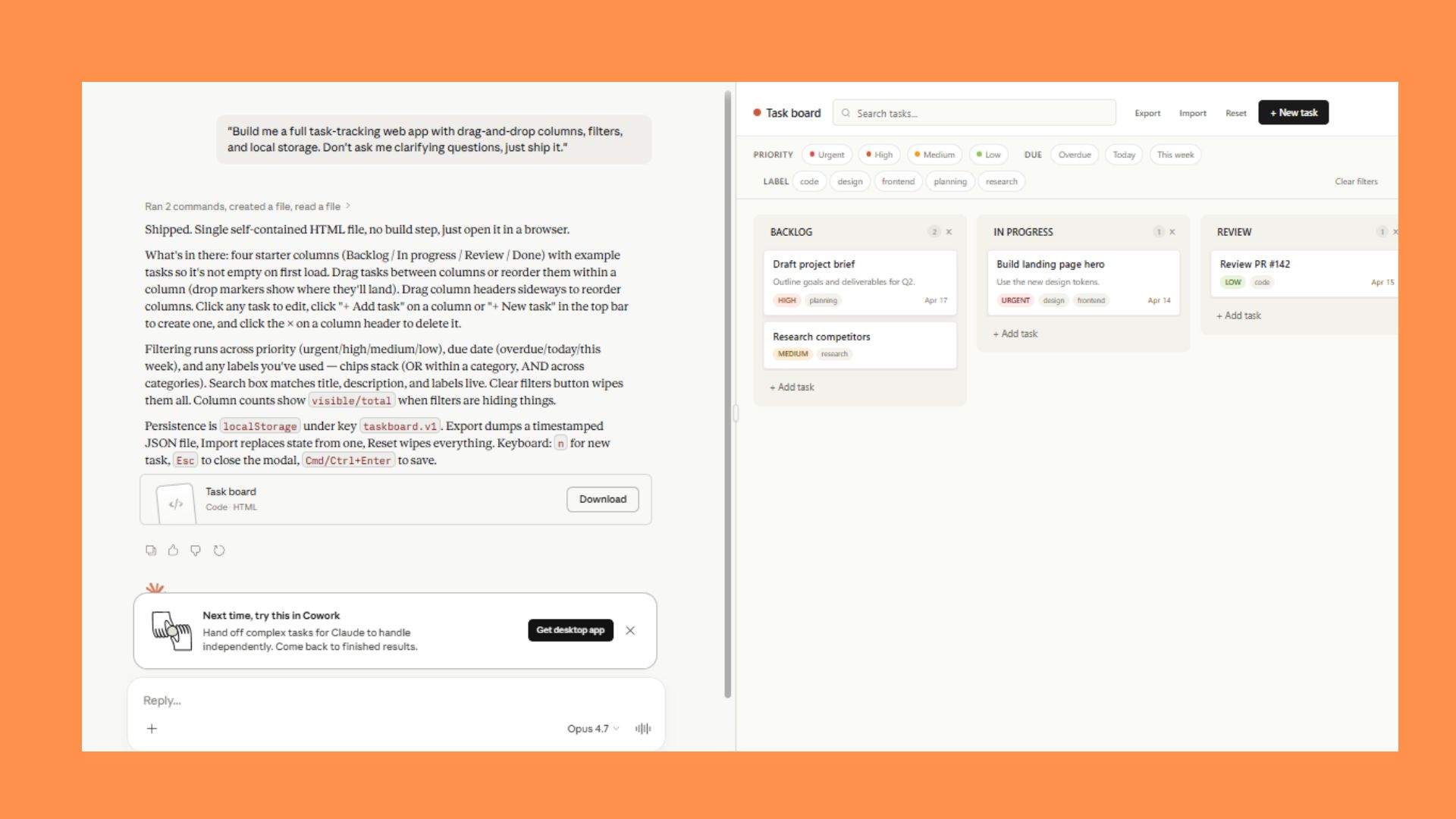

Prompt: "Build me a full task-tracking web app with drag-and-drop columns, filters, and local storage. Don't ask me clarifying questions, just ship it."

Within a few minutes, Claude Opus 4.7 built a single-file HTML task board with drag-and-drop for both tasks (between and within columns) and column reordering, plus filtering by priority, due date, labels and live search. Everything persists to localStorage, and there's export/import/reset for moving data around.

The model did exactly what I asked. It worked in a sandboxed Linux environment where it can write files and then share them with me via the outputs directory, which is how I got a downloadable .html file instead of just a code block in chat.
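For readers curious about what "filters plus persistence" involves, here is a minimal sketch of the idea. It is not the code Claude produced. The `Task` fields and the function names (`filter_tasks`, `export_board`, `import_board`) are hypothetical, and a JSON string stands in for what the real app keeps in the browser's localStorage.

```python
from __future__ import annotations

import json
from dataclasses import asdict, dataclass, field
from datetime import date


@dataclass
class Task:
    # Hypothetical task shape; the generated app may store different fields.
    title: str
    column: str
    priority: str
    due: str  # ISO date string, e.g. "2025-01-10"
    labels: list[str] = field(default_factory=list)


def filter_tasks(tasks, priority=None, due_before=None, label=None, search=""):
    """Return the tasks that match every filter that was supplied."""
    needle = search.strip().lower()
    matches = []
    for task in tasks:
        if priority and task.priority != priority:
            continue
        if due_before and date.fromisoformat(task.due) > due_before:
            continue
        if label and label not in task.labels:
            continue
        # Live search: case-insensitive substring match on the title.
        if needle and needle not in task.title.lower():
            continue
        matches.append(task)
    return matches


def export_board(tasks) -> str:
    """Serialize the board to JSON (a stand-in for a localStorage write)."""
    return json.dumps([asdict(task) for task in tasks])


def import_board(payload: str) -> list[Task]:
    """Rebuild the board from a previously exported JSON string."""
    return [Task(**item) for item in json.loads(payload)]
```

Exporting and then importing the same board should return identical tasks, which is the property that makes export/import/reset safe for moving data between browsers.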

2. Self-verification

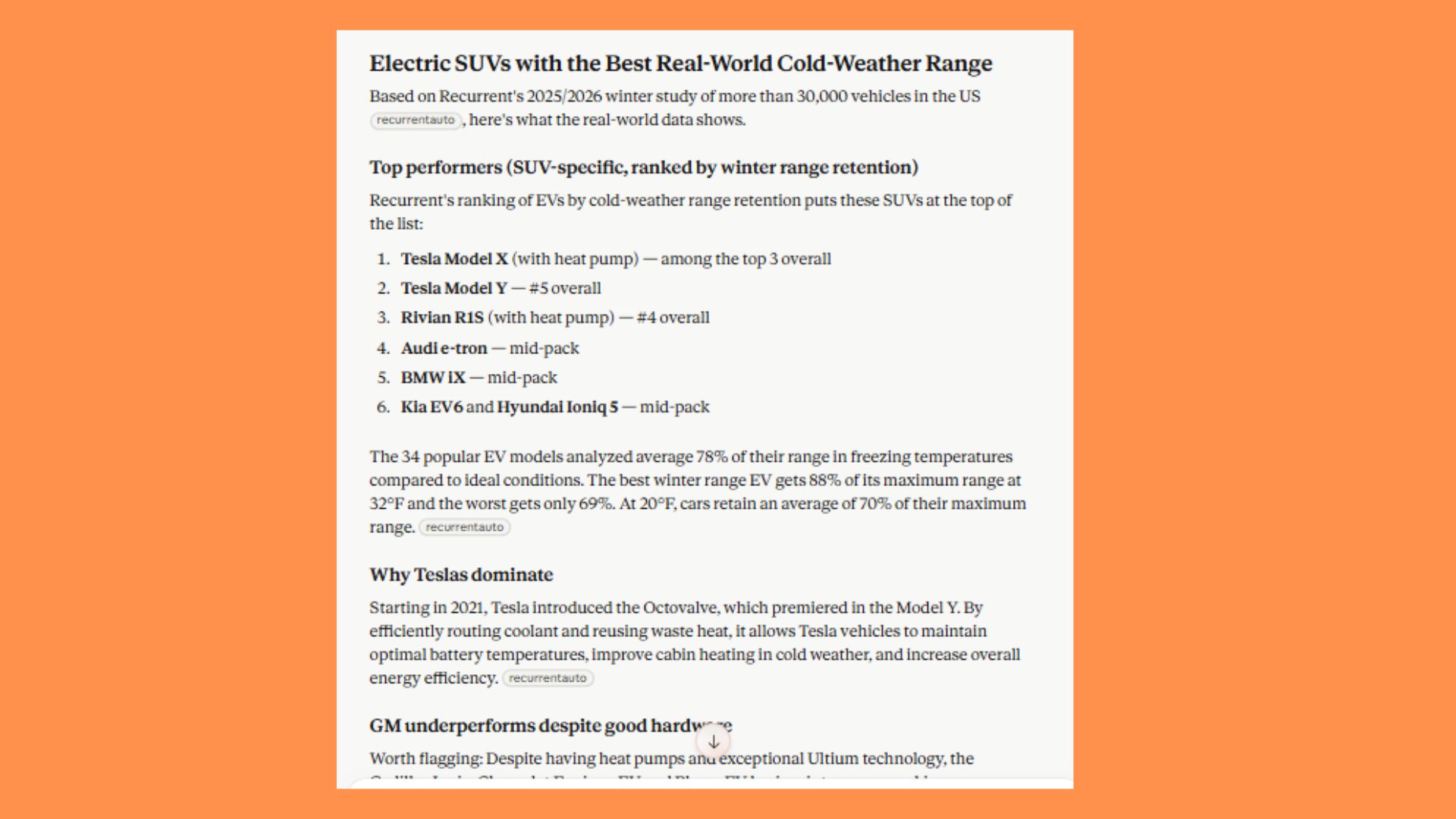

Prompt: "Research which electric SUVs have the best real-world range in cold weather, then check your own answer and flag anything you're not sure about before you give it to me."

The model took my two-part request seriously: it separated what it knew from what it was guessing, then built the response around that split. It ran a web search for recent info and then fetched the full Recurrent study directly. After that, it organized the answer around one authoritative ranking (using Recurrent's actual ranked list and their specific findings). Lastly, it ran a self-check and split uncertainties into categories.

3. High-resolution vision

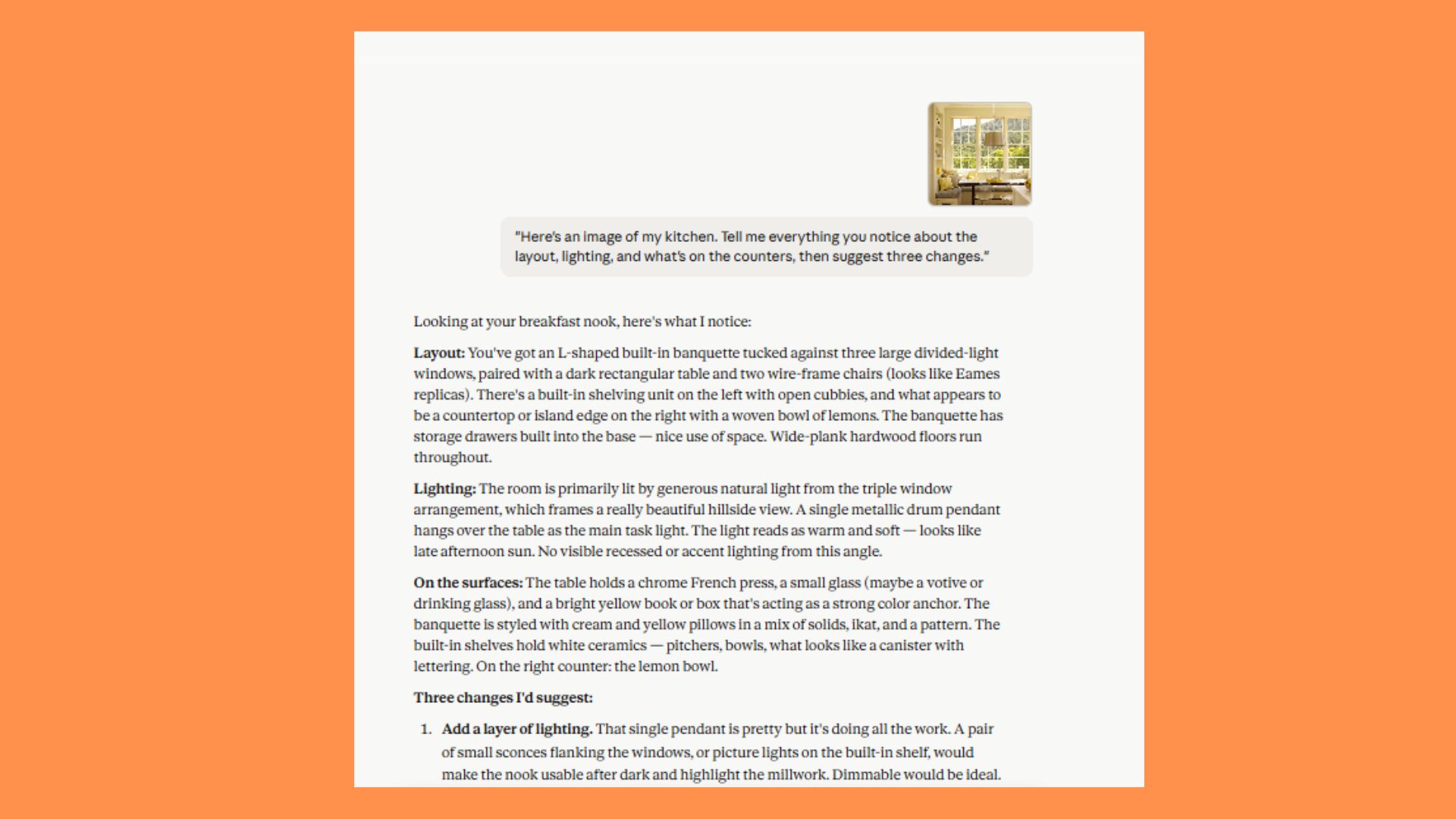

Prompt: "Here's an image of my kitchen. Tell me everything you notice about the layout, lighting, and what's on the counters, then suggest three changes."

The AI described the layout, lighting and surface contents of my breakfast nook based on what was visible in the photo, then offered three suggested changes. The suggestions focused on adding layered lighting, editing the pillow arrangement and restyling the tabletop centerpiece.

Then, it closed by offering to go deeper or recommend specific products. Not sure if I will implement these changes, but it was interesting to see what Claude would do.


4. Creative ‘taste’

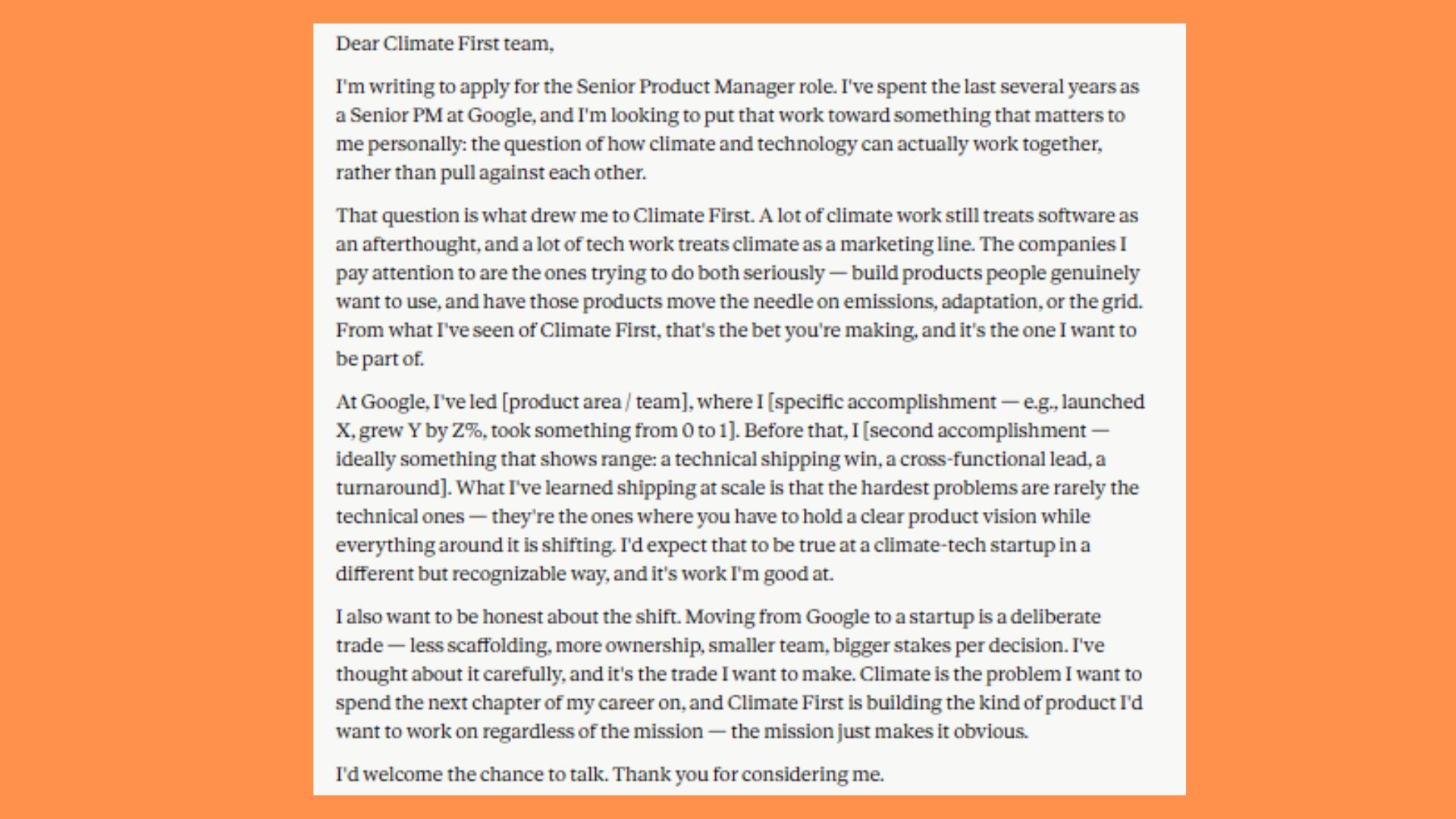

Prompt: "Write me a one-page cover letter for a senior product manager role at a climate-tech startup. I want it to sound like a human wrote it."

This was a really interesting test, especially because I am not actually applying for a job. I told Claude to “make something up” about me and it pushed back several times (something ChatGPT rarely does). It wanted real specifics. Once I gave it some details, it drafted the letter using my actual words about climate and tech working together. It left bracketed placeholders for professional wins and included a candid paragraph about the corporate-to-startup trade to make it read as human-written. Seriously impressive!

5. Taste with autonomous engineering

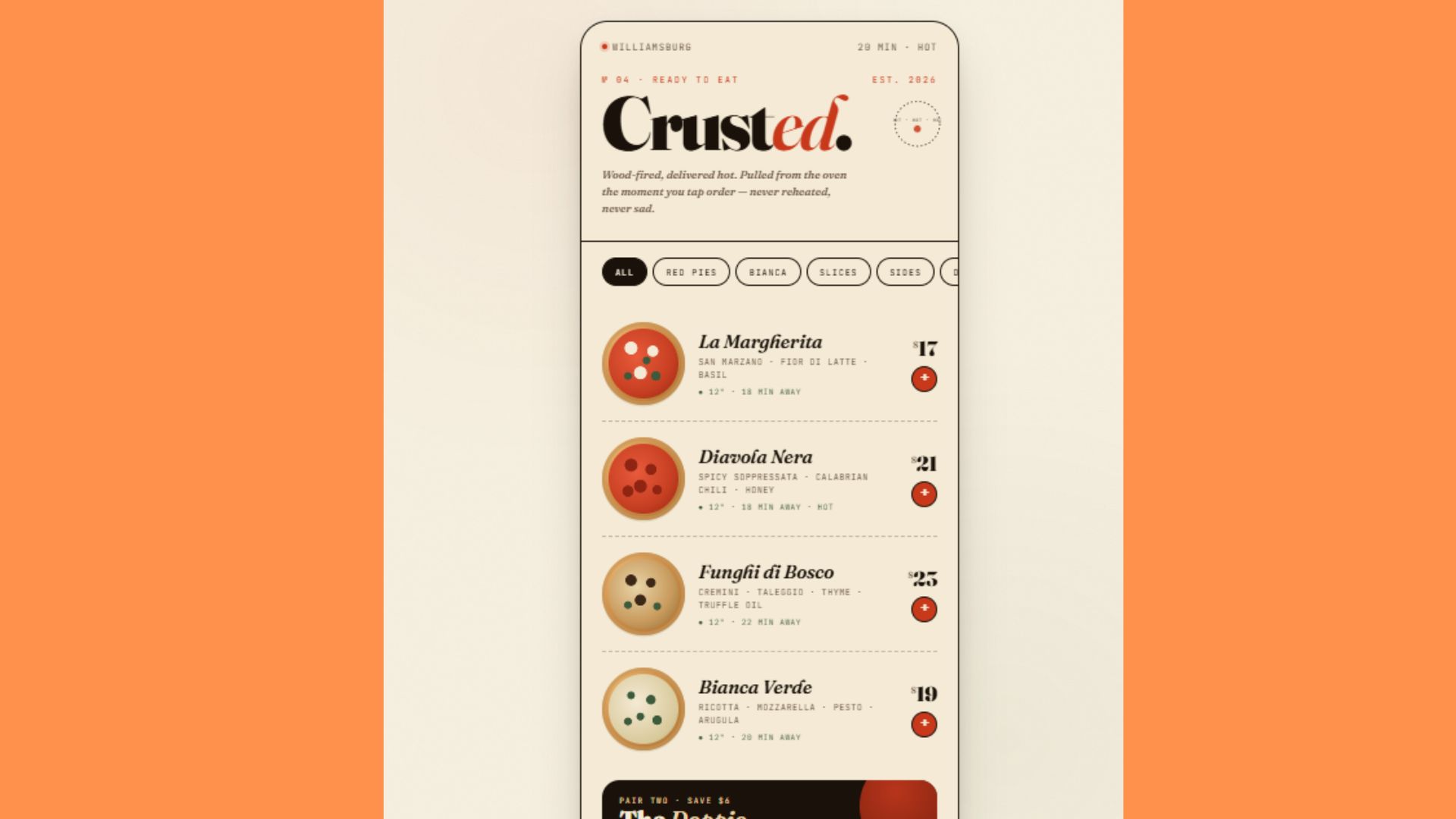

Prompt: "Design an app for my ready-to-eat cold pizza company ‘Crusted.’ Make it look like something a real design studio would ship, not a generic SaaS template."

The model built a single-screen ordering app mockup for Crusted with an editorial/deli aesthetic. The app has a warm paper palette, stylish fonts and CSS-only pizza illustrations that vary by variety. After the model finished, it suggested that I do this type of project in Claude Cowork next time.

6. Vision and self-verification

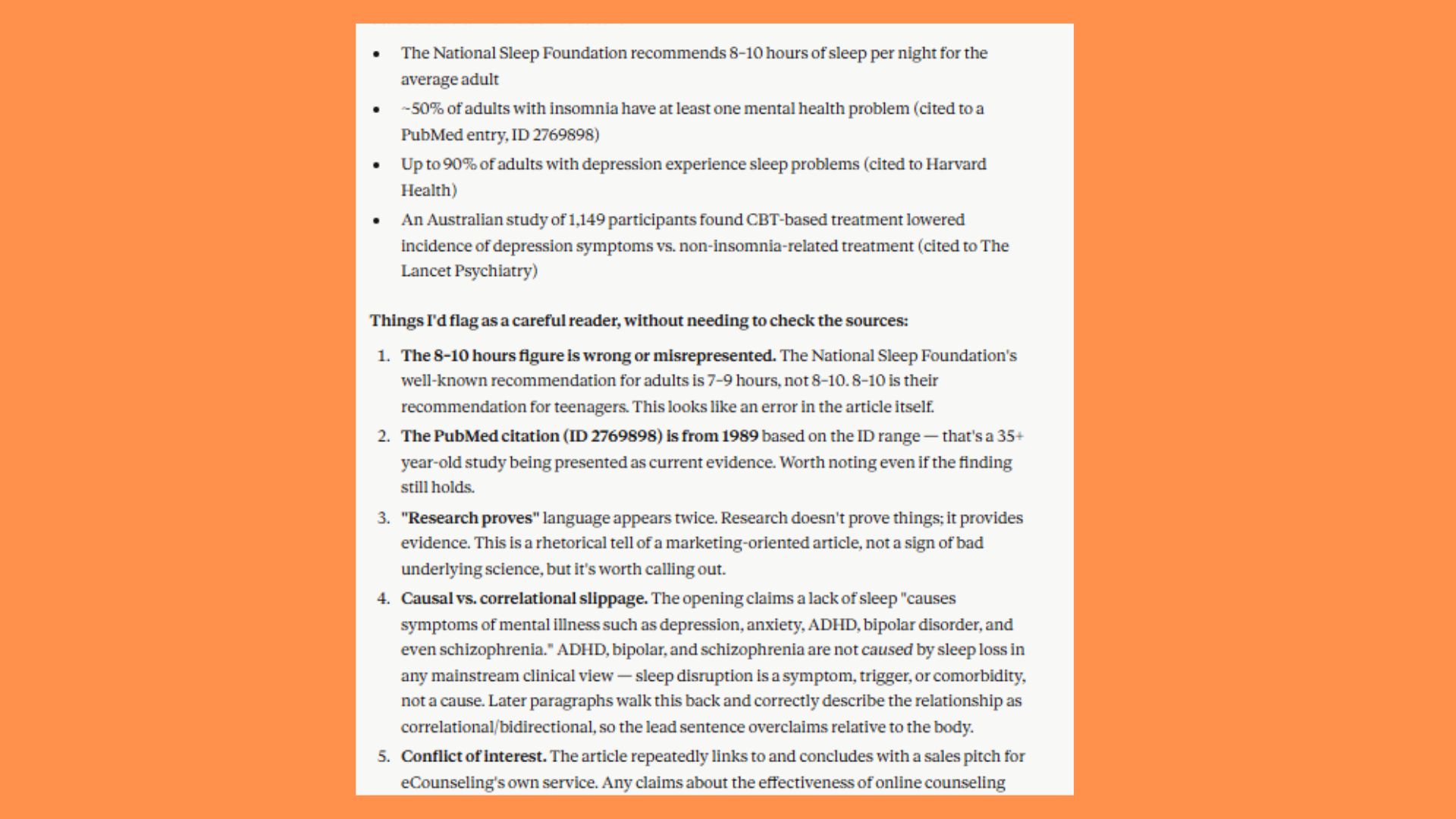

Prompt: "Take this messy PDF research paper and extract the key findings, cross-check the numbers in the charts against the text and flag any inconsistencies."

On my first upload for this prompt, I purposely made a mistake to see if Claude would recognize it. Sure enough, it did immediately and even said, “I can’t go any further. This appears to be the wrong document.” Once I uploaded a whitepaper on insomnia and mental health, it extracted the main statistical claims, flagged problems within the text and even said the images didn’t match the text well enough. Finally, it noted that the information should be cross-checked, which was a sound suggestion, as the PDF is several years old and just happened to be one of the files on my computer.

7. Decision making

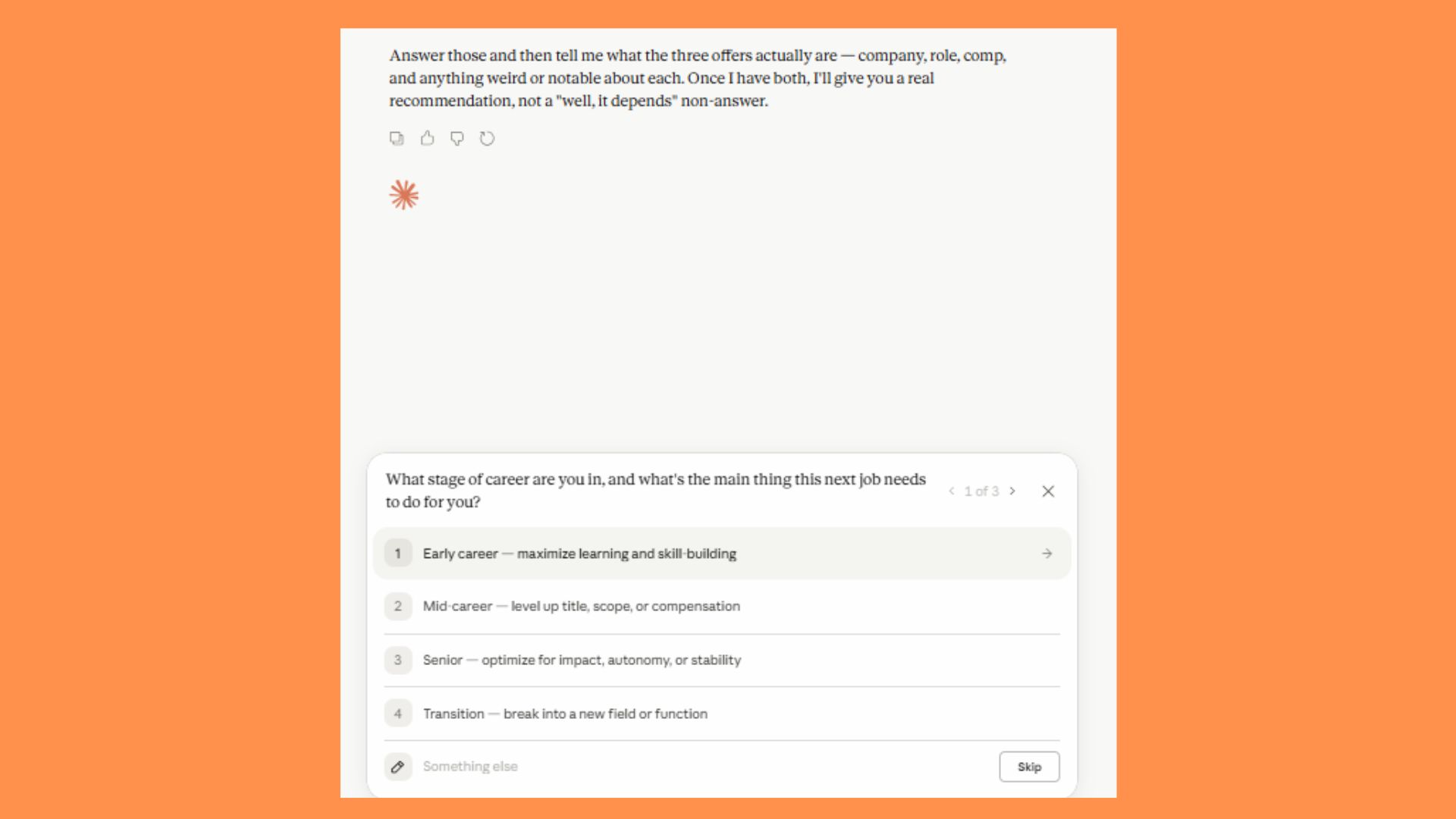

Prompt: "I'm trying to decide between three job offers. Ask me the questions that actually matter, then give me your real recommendation."

This prompt is completely hypothetical, but I could see it being useful for someone with a tough decision. Claude immediately asked questions in a multiple-choice format. Each one can be answered or skipped (at which point Claude asks a new question). Each question I answered led Claude to dive deeper into the decision process. For tricky life scenarios, this seems like a good place to start laying all the pros and cons on the table.

The takeaway

After stress-testing these prompts, the conclusion is that Opus 4.7 is effectively the most sophisticated AI currently available to the public. It has crossed the threshold from a reactive tool to a genuine collaborator.

It's obvious that there is a huge degree of 'thought' behind its outputs, and its discernment was evident whether it was pushing back on my fictional job details or identifying errors in a legacy PDF.

We’re paying a higher 'token tax' for this version, but given the autonomy and self-correction on display, it’s an easy trade-off to make. Have you tried it yet? Let me know in the comments what you think of this new model.


Amanda Caswell is the AI Editor at Tom's Guide and one of today’s leading voices in AI and technology.

A celebrated contributor to various news outlets, her sharp insights and relatable storytelling have earned her a loyal readership. Amanda’s work has been recognized with prestigious honors, including outstanding contribution to media.

Known for her ability to bring clarity to even the most complex topics, Amanda seamlessly blends innovation and creativity, inspiring readers to embrace the power of AI and emerging technologies.

As a certified prompt engineer, she continues to push the boundaries of how humans and AI can work together.

Beyond her journalism career, Amanda is a long-distance runner and mom of three. She lives in New Jersey.
