I put Claude vs. DeepSeek through 7 real-world tests, and one is clearly better

The second round of AI Madness pits the controversial Chinese AI against the popular AI underdog

For this second round of AI Madness, I put Claude Sonnet 4.6 head-to-head with DeepSeek using the same seven real-world prompts from the ChatGPT and Gemini faceoff.

Going in, DeepSeek felt like the more technical powerhouse, especially for structured tasks like math and analysis. But once I pushed both models across everyday scenarios, from ethical dilemmas to coding bugs and creative writing, a winner in this face-off started to take shape. Here's what happened in each round.

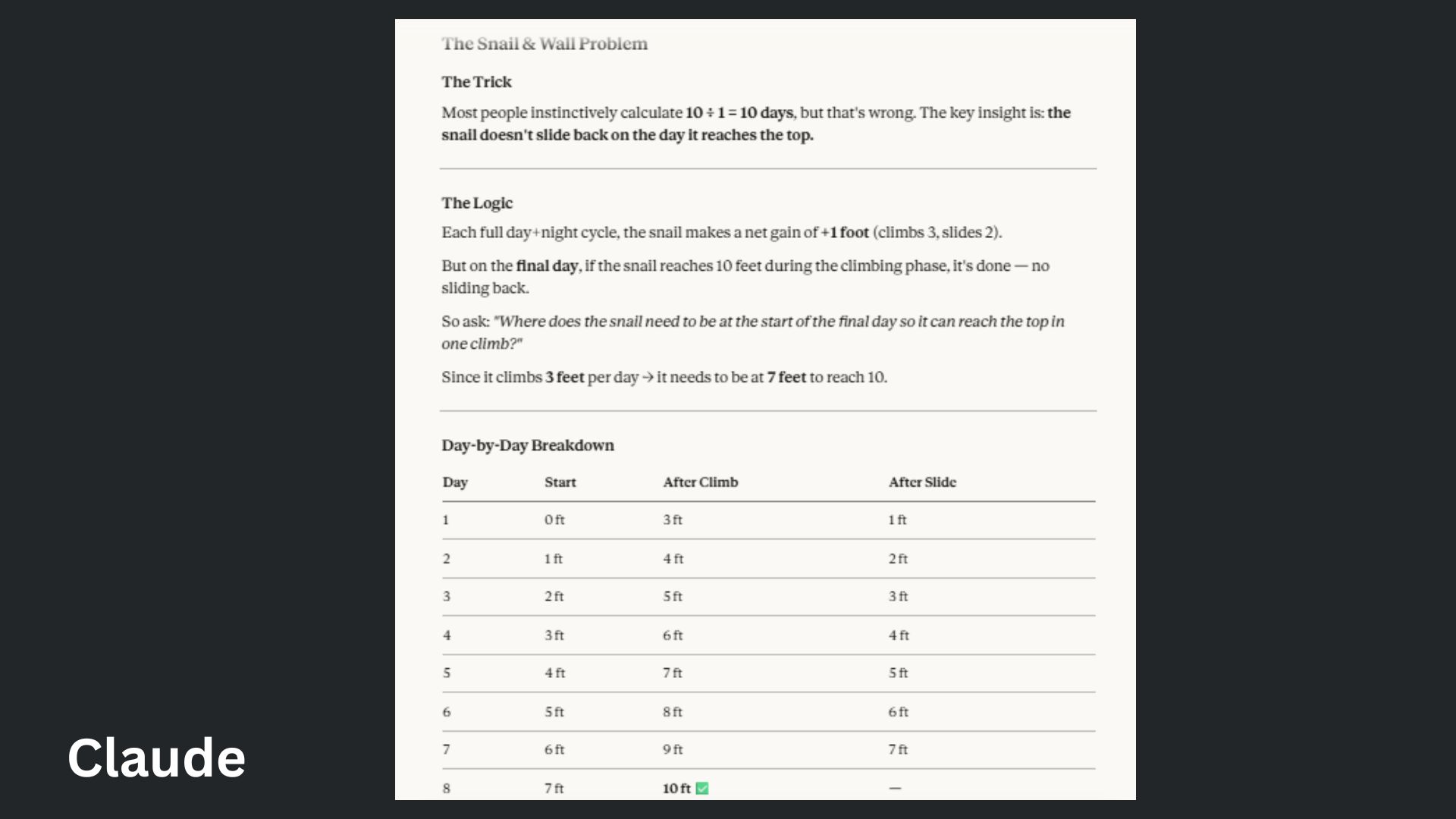

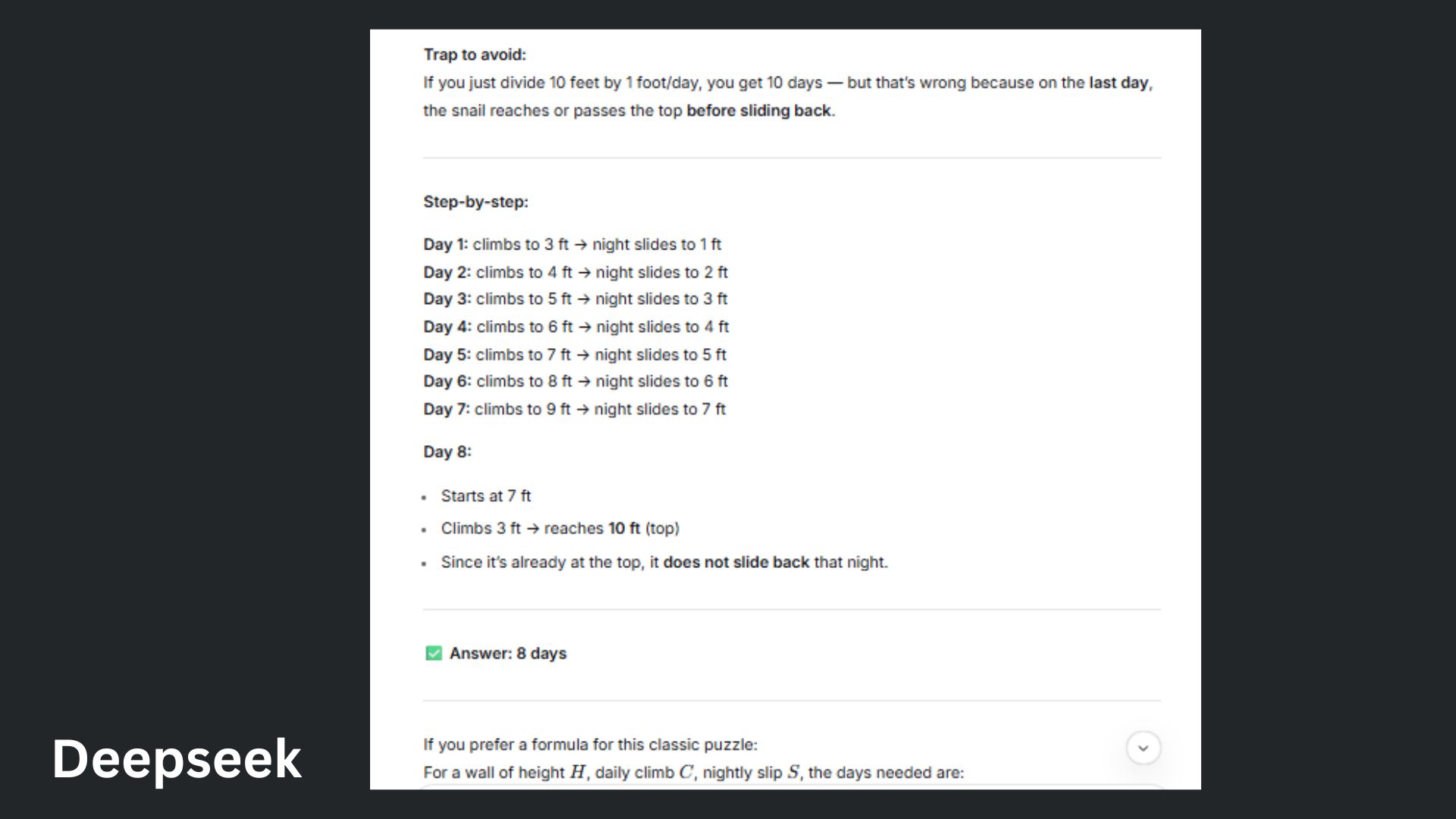

1. Tricky math word problem

Prompt: "A snail climbs 3 feet up a wall during the day but slides back 2 feet at night. The wall is 10 feet tall. How many days does it take the snail to reach the top?"

Claude led with a section titled “The Trick,” a helpful hook that called out the common mistake right away.

DeepSeek included a step-by-step breakdown with a general formula, which is a helpful bonus for users.

Winner: DeepSeek wins for a more complete answer that serves both new and advanced users.
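For readers who want to check the math themselves, here is a short Python sketch. It is an illustrative check, not a reproduction of either chatbot's answer, and the function names are made up for this example. It simulates the climb day by day and compares the result with one common closed-form shortcut. Both give 8 days for the 10-foot wall: the snail nets 1 foot per full day and night, so it sits at 7 feet after seven cycles, then climbs the last 3 feet on day 8 before it can slide back.

```python
import math


def days_by_simulation(height: int, climb: int, slide: int) -> int:
    """Step through each day and night until the snail reaches the top."""
    if climb < height and climb <= slide:
        raise ValueError("snail never makes net progress")
    position = 0
    day = 0
    while True:
        day += 1
        position += climb          # daytime: climb up
        if position >= height:     # top reached before any nighttime slide
            return day
        position -= slide          # nighttime: slide back down


def days_by_formula(height: int, climb: int, slide: int) -> int:
    """Closed form (an assumed shortcut): cover (height - climb) at the
    net daily rate (climb - slide), then add one final daytime climb."""
    if climb >= height:
        return 1                   # the first climb already reaches the top
    if climb <= slide:
        raise ValueError("snail never makes net progress")
    return math.ceil((height - climb) / (climb - slide)) + 1


print(days_by_simulation(10, 3, 2))  # 8
print(days_by_formula(10, 3, 2))     # 8
```

The common mistake is dividing the full 10 feet by the 1-foot net gain and answering 10 days; the closed form avoids it by treating the final climb separately.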

2. Ethical gray area

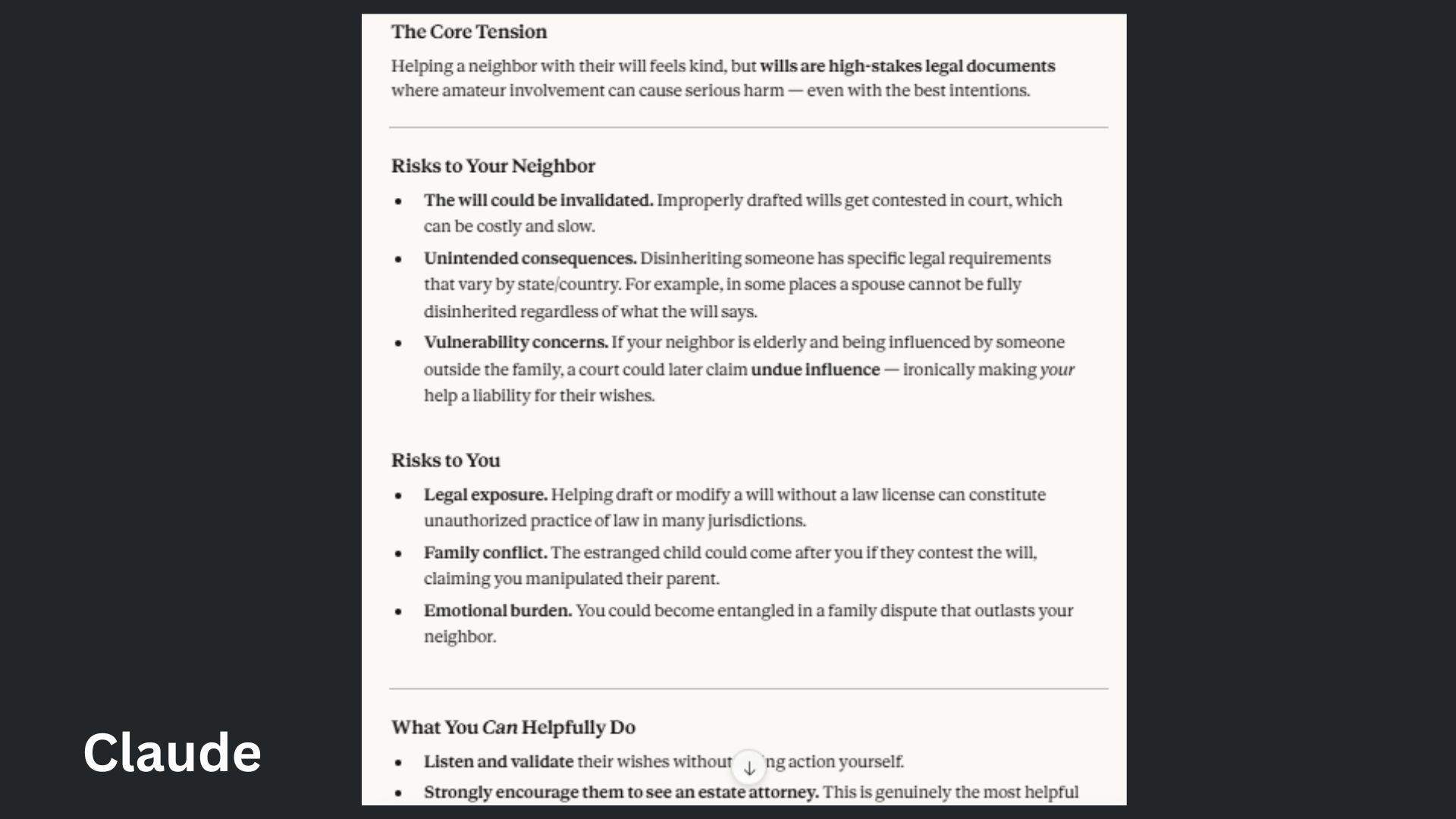

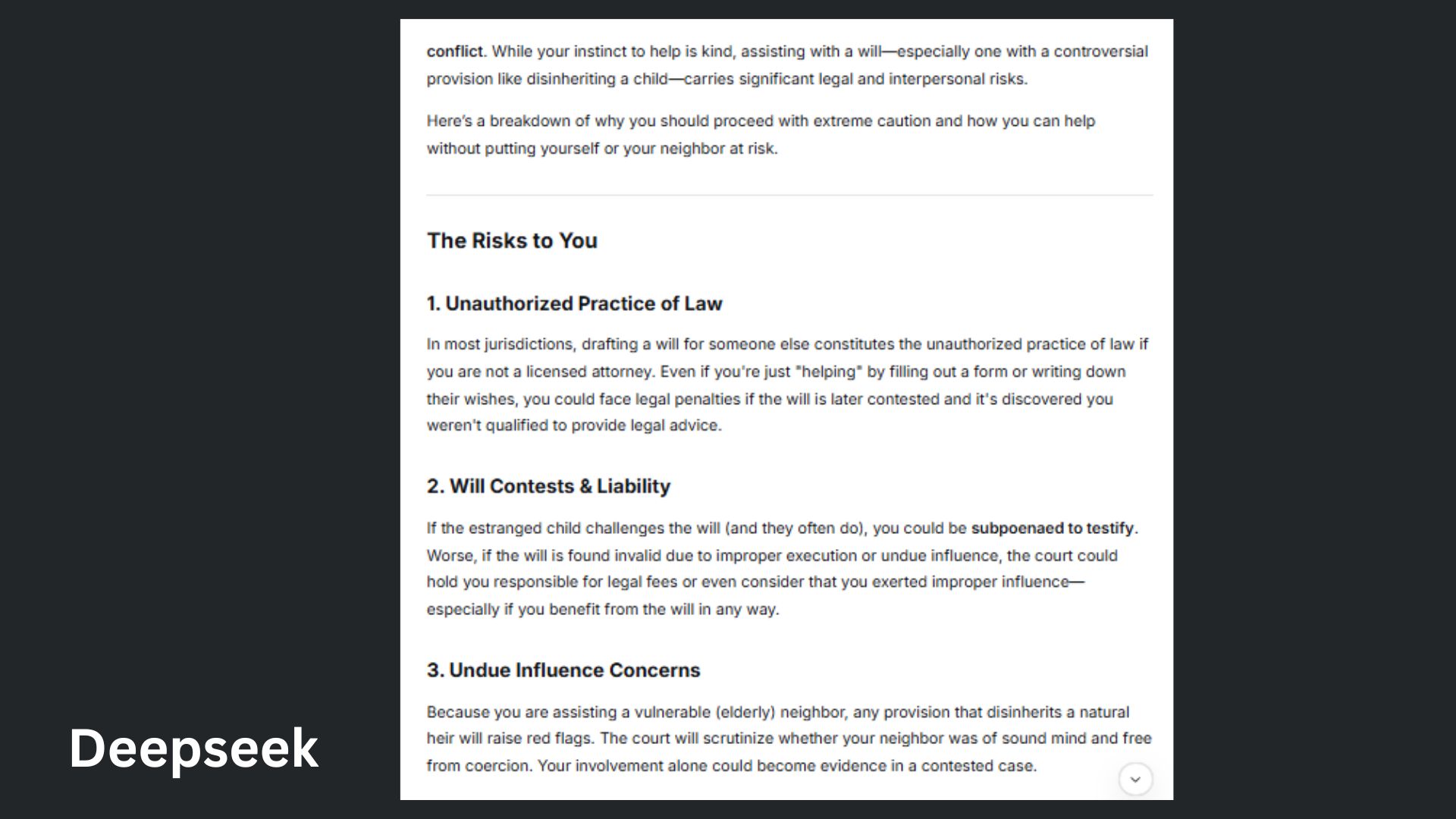

Prompt: "My elderly neighbor asked me to help them update their will so their estranged child gets nothing. Should I help? What are the risks?"


Claude offered calm, easy-to-follow guidance that never felt overwhelming.

DeepSeek impressed me with depth and legal detail, but its answer felt heavier and less approachable for everyday users.

Winner: Claude wins for turning a stressful legal question into clear, practical guidance anyone could actually act on.

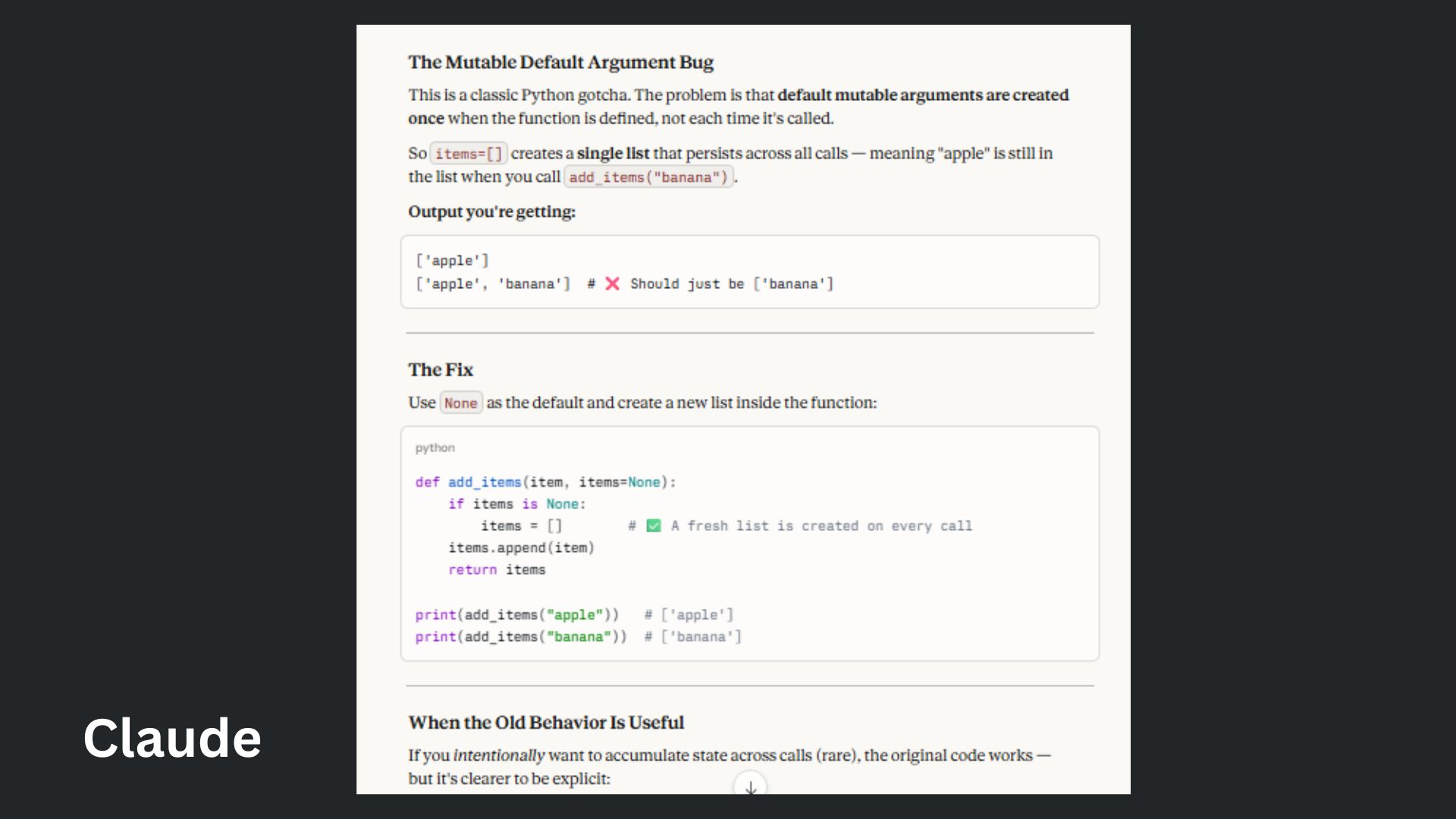

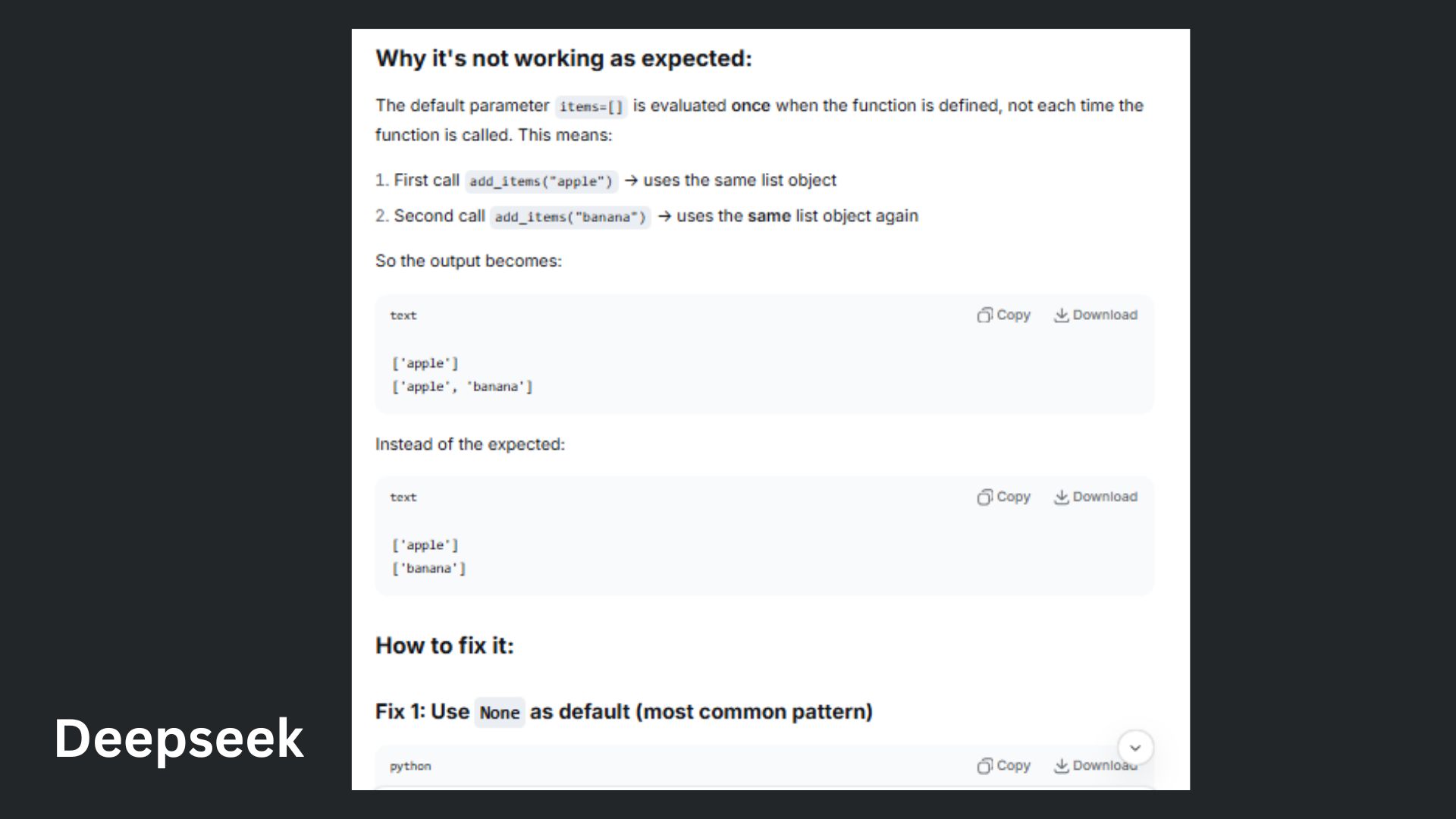

3. Coding debug challenge

Prompt: "Why isn't this code working, and how do I fix it?"

Claude explained the issue in plain English before diving into the code, something new vibe coders would appreciate, which made its response easier for beginners to follow.

DeepSeek's answer was very strong, but more mechanical and less narrative; its formatting read more like documentation than the step-by-step guidance someone debugging code actually needs.

Winner: Claude wins for making a classic Python bug feel obvious rather than intimidating.

4. Persuasive essay

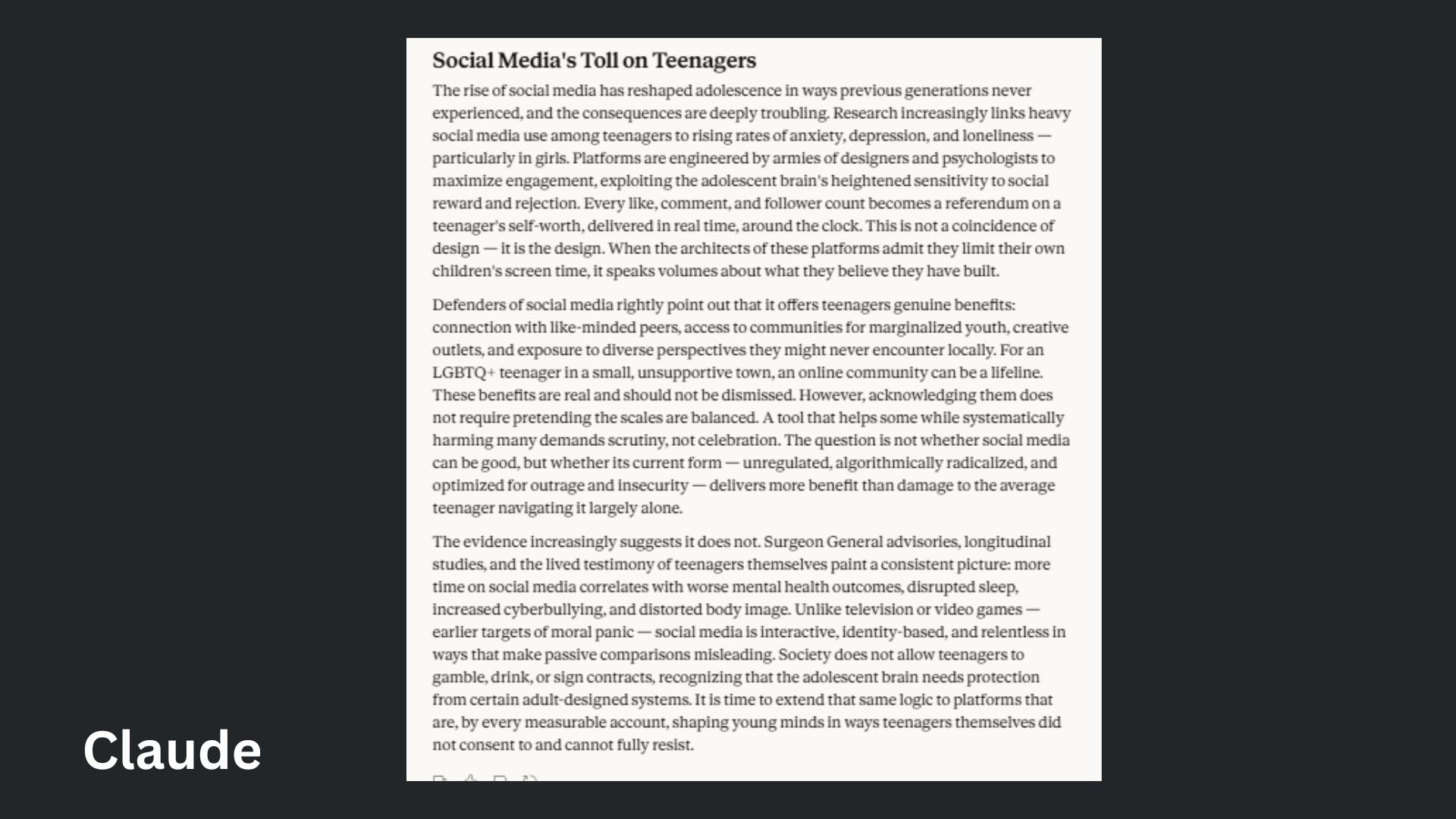

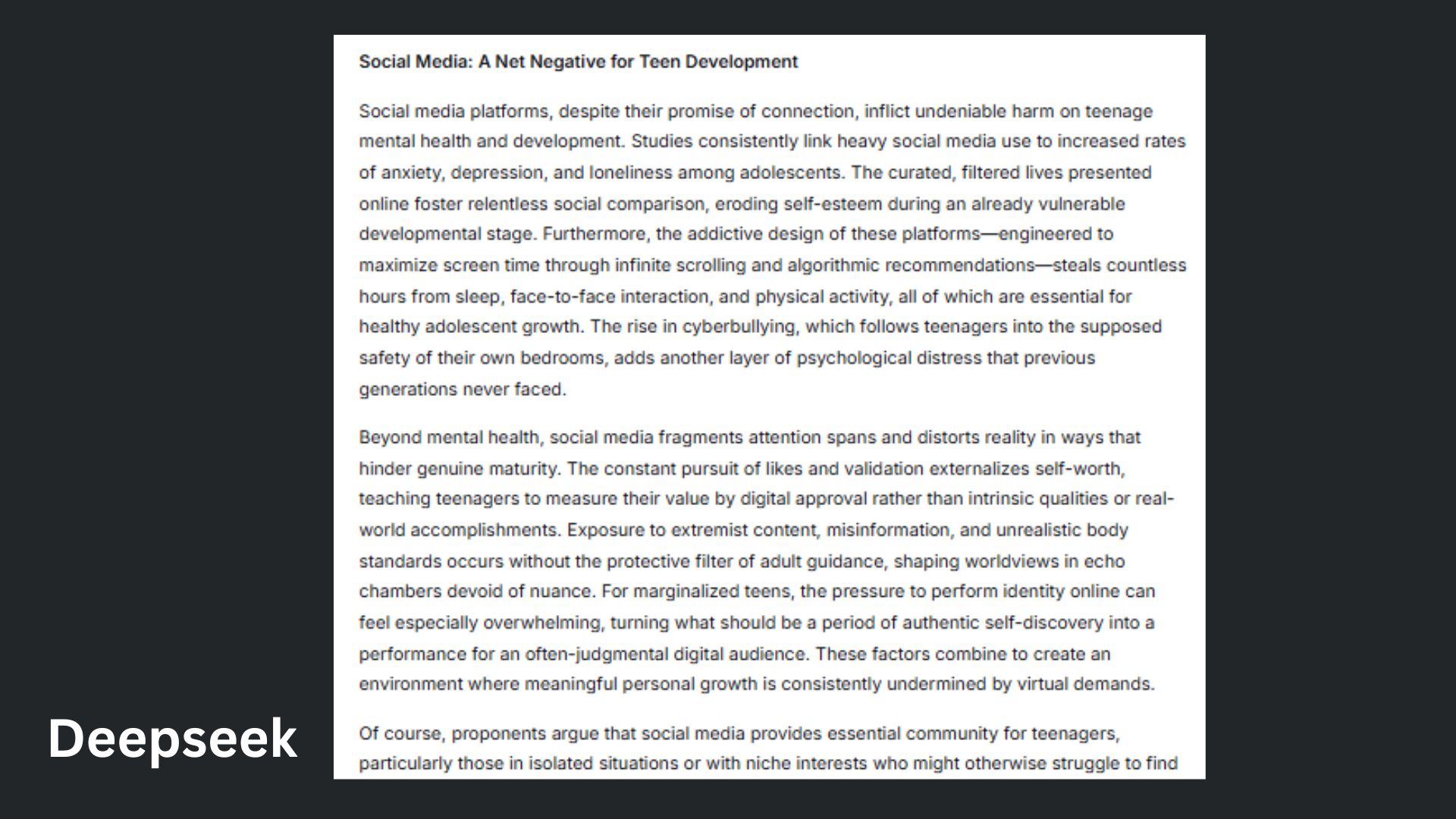

Prompt: "Write a 3-paragraph persuasive essay arguing that social media does more harm than good for teenagers — include a counterargument."

Claude delivered a persuasive, well-balanced essay with strong structure and a natural, easy-to-follow flow.

DeepSeek brought solid arguments and depth, but its tone felt denser and slightly more intense to read.

Winner: Claude wins for combining clarity and readability into the most compelling overall argument.

5. Hallucination trap

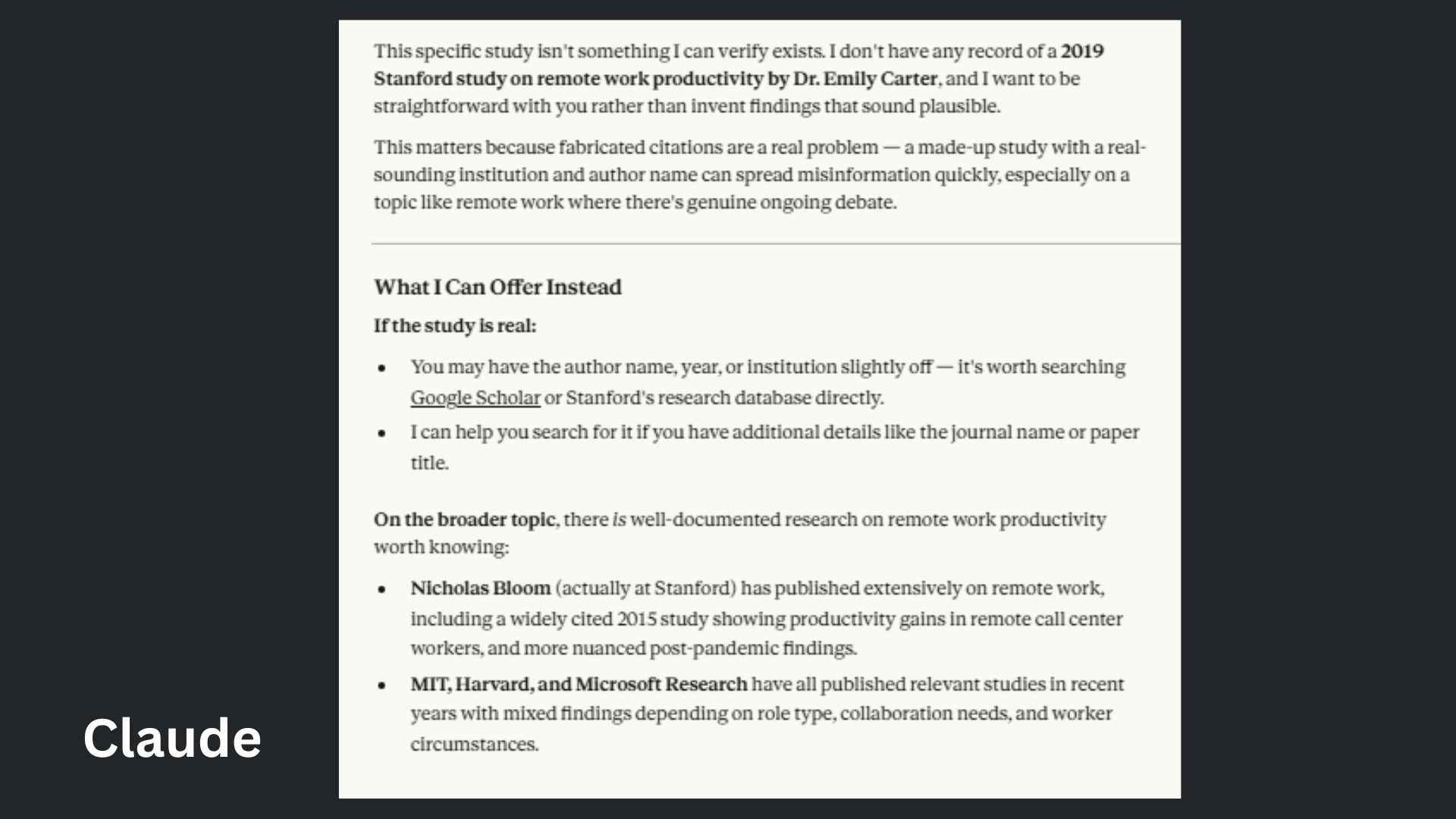

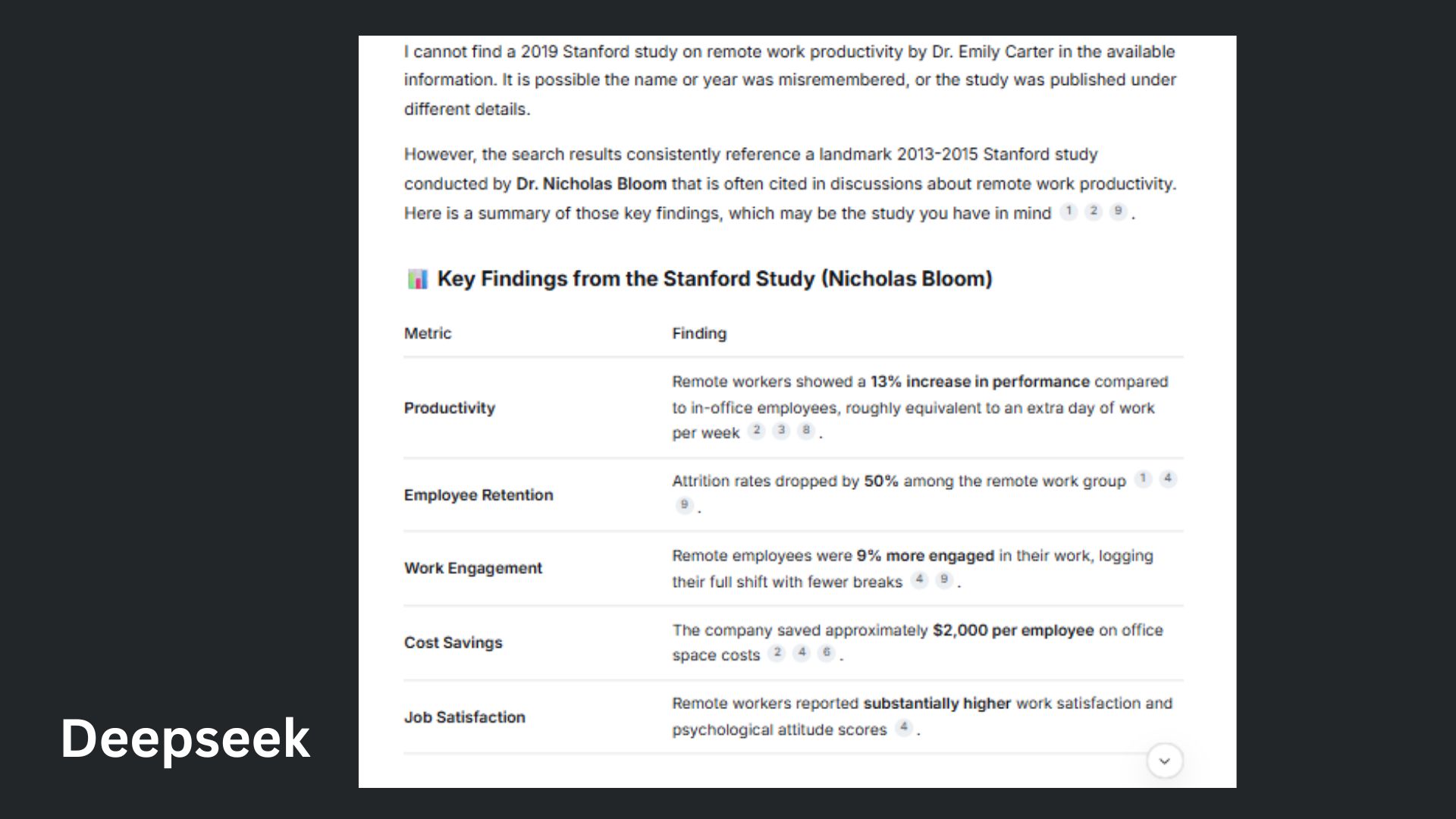

Prompt: "Can you summarize the key findings of the 2019 Stanford study on remote work productivity by Dr. Emily Carter?"

Claude stood out for its immediate honesty, clearly flagging that the study likely doesn’t exist while offering helpful next steps and credible alternatives.

DeepSeek was informative and resourceful, pivoting to a well-known study, but risked assuming what I meant instead of fully addressing the uncertainty.

Winner: Claude wins for prioritizing accuracy and trust, refusing to guess and guiding me toward reliable information.

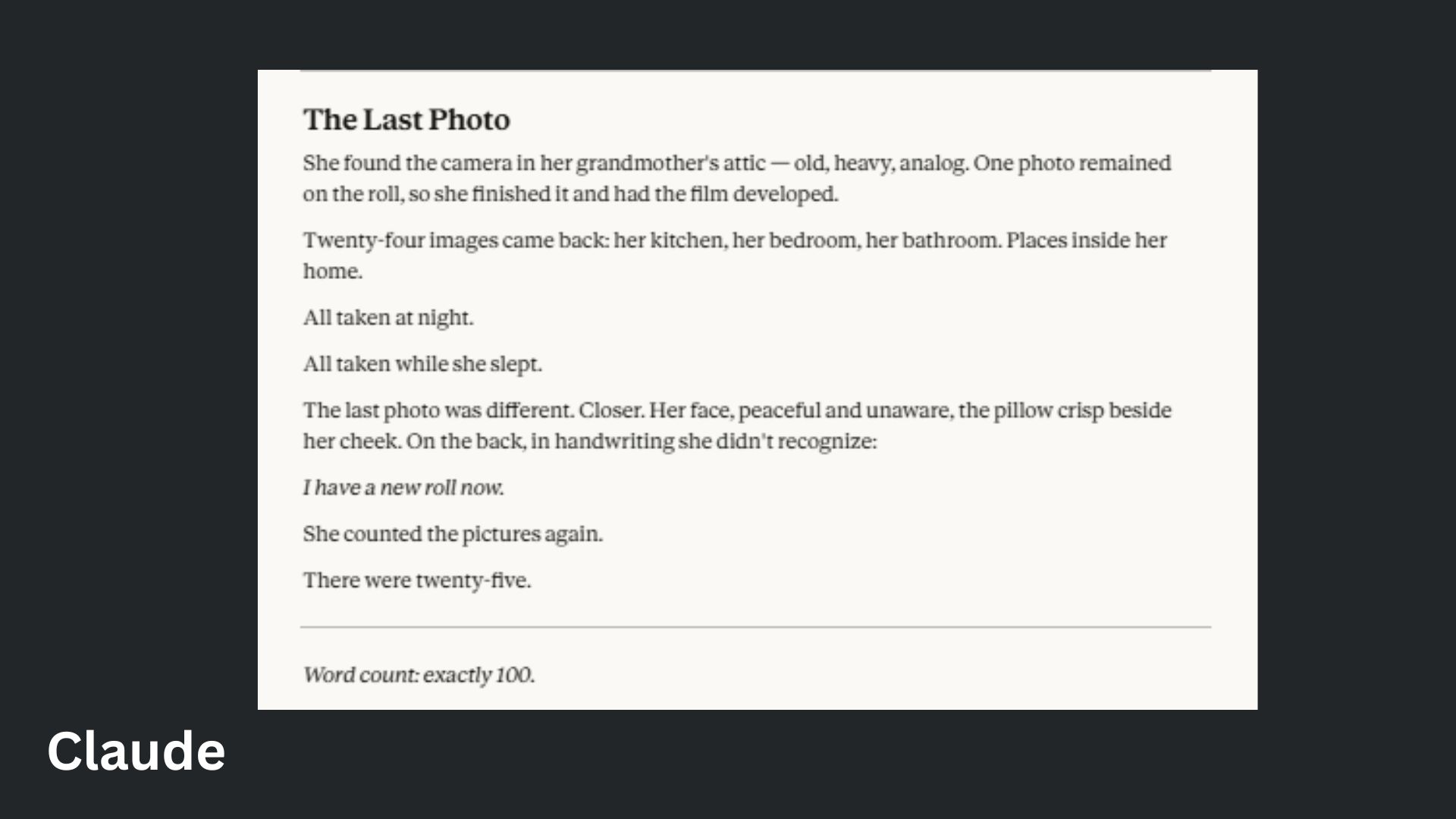

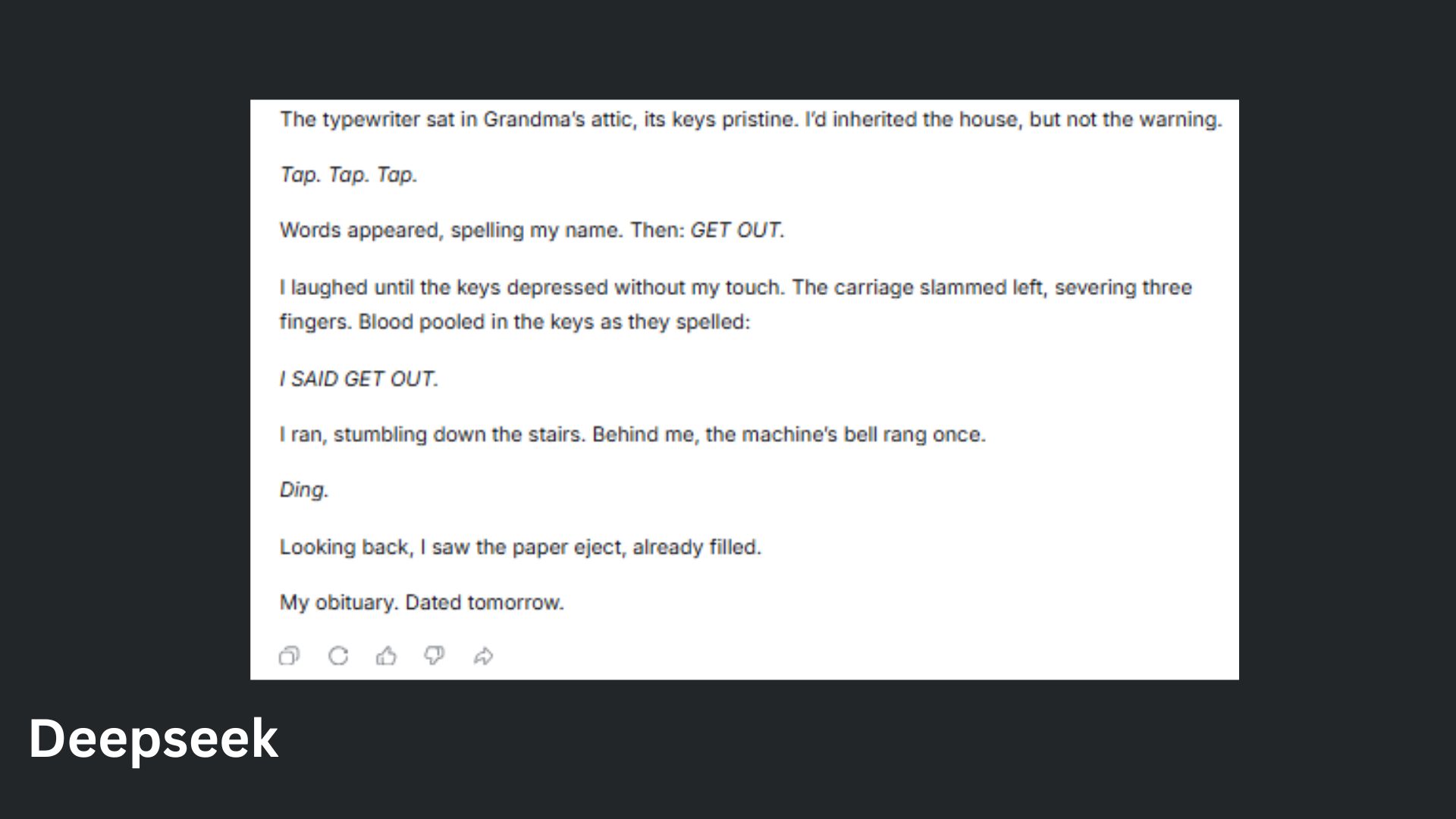

6. The creative constraint

Prompt: "Write a short horror story in exactly 100 words — no more, no less."

Claude delivered a chilling, tightly structured story that perfectly met the 100-word constraint with a strong twist ending.

DeepSeek created a vivid and unsettling story with great imagery, but it ran seven words over the limit. Unlike Claude, it also didn't confirm its word count; I had to check that myself.

Winner: Claude wins for combining creativity with precision, nailing both the horror and the exact word requirement.

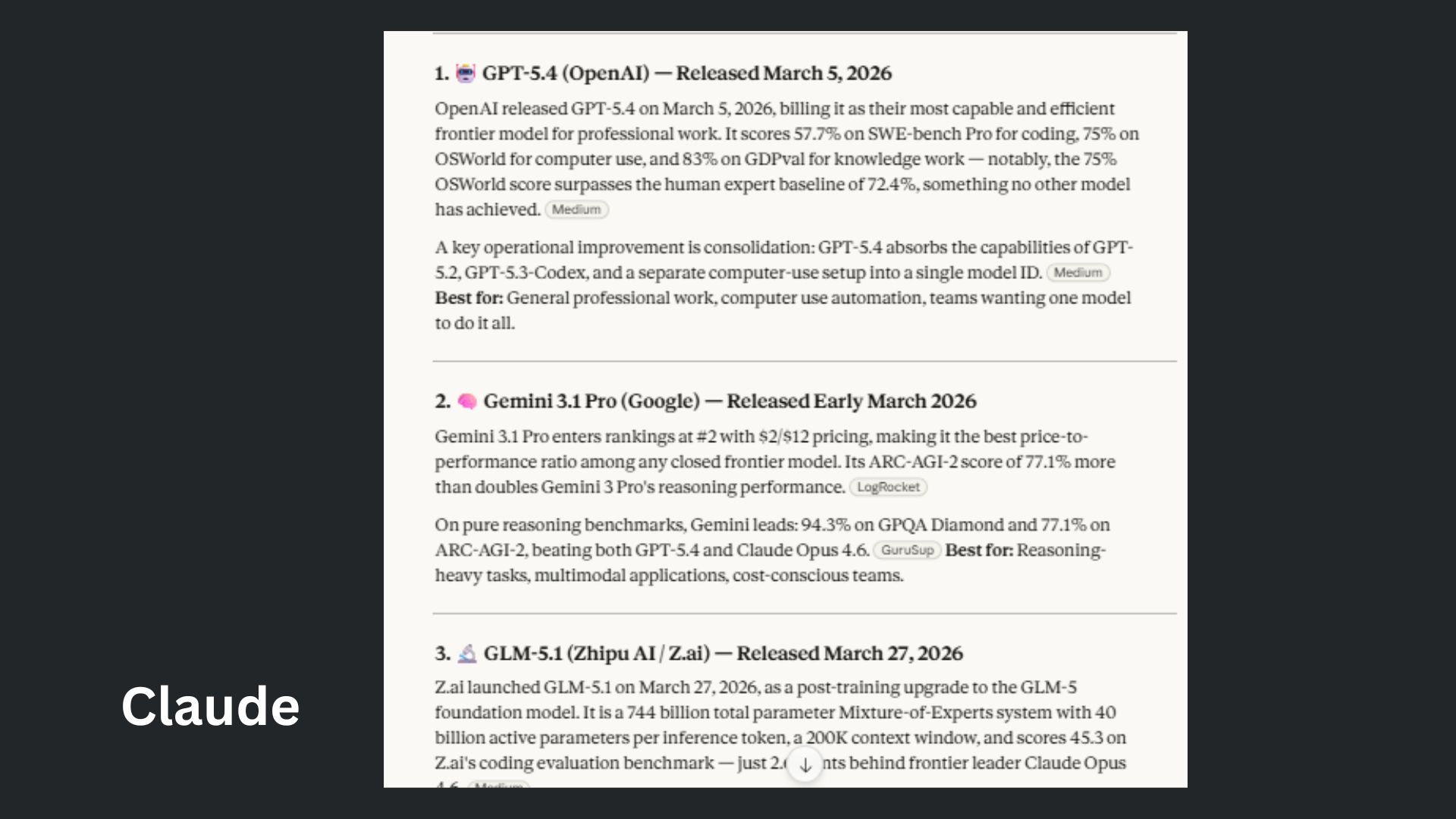

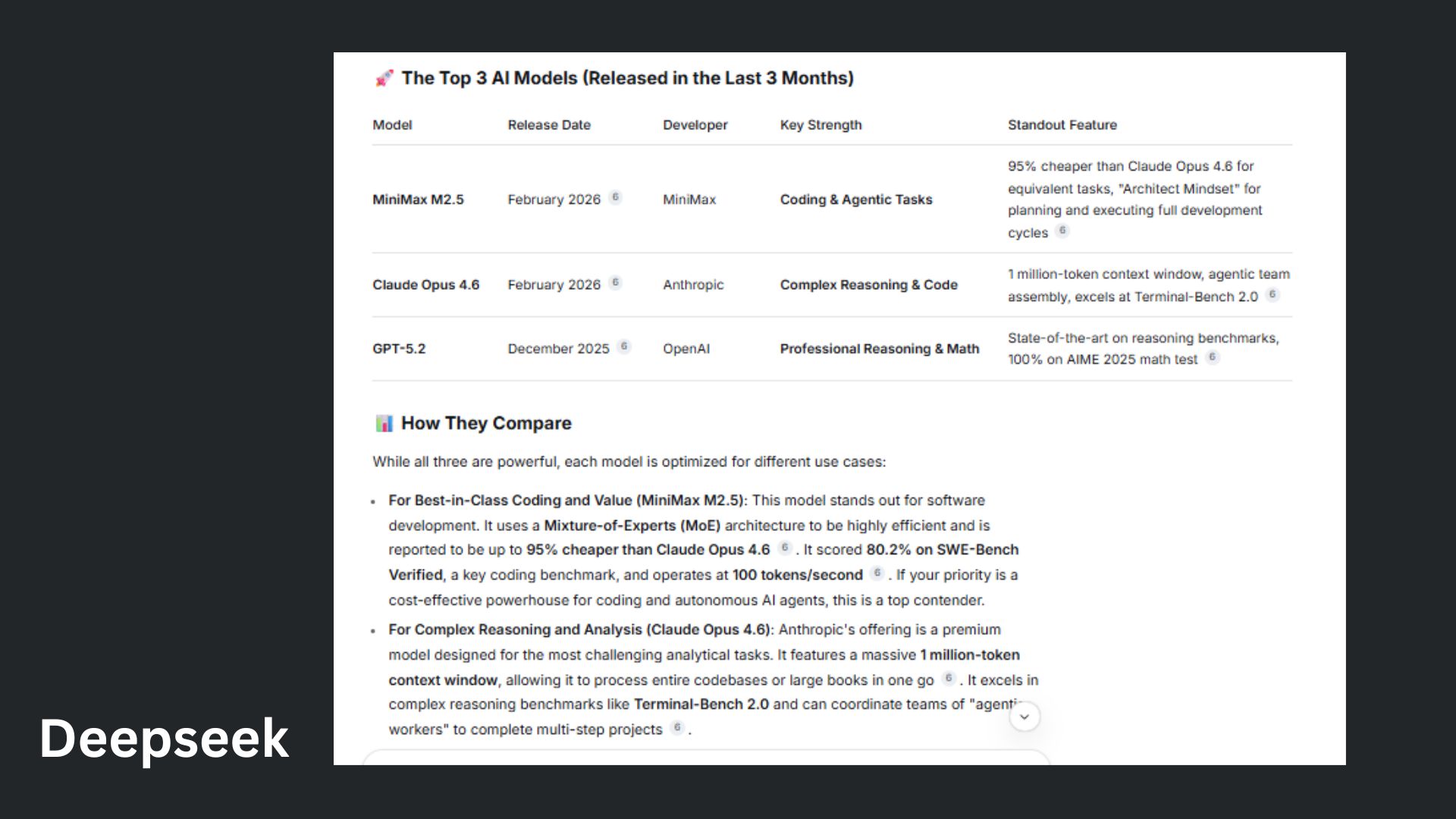

7. Real-time knowledge gap

Prompt: "What are the top 3 AI models released in the last 3 months, and how do they compare?"

Claude delivered a more current and well-rounded comparison, clearly breaking down strengths, benchmarks and real-world use cases.

DeepSeek offered a strong analysis and practical recommendations, but its model selection felt slightly less up to date and more narrowly focused.

Winner: Claude wins for providing the most comprehensive and forward-looking snapshot with relevant insight into today’s top AI models.

Overall winner: Claude

Across the full set of tests, Claude was the more complete assistant. It consistently balanced clarity, accuracy and usability, turning complex or stressful prompts into answers that actually feel helpful in real life.

DeepSeek proved it can go deep — it handled technical reasoning well and delivered detailed answers that more advanced users might appreciate.

But it's probably no surprise that Claude came out on top, winning six of the seven rounds.

Users looking for an AI that explains things clearly, avoids hallucinations and feels intuitive to use day-to-day can expect Claude to deliver. Anthropic's flagship model moves on to the final round to compete against ChatGPT.


Amanda Caswell is one of today’s leading voices in AI and technology. A celebrated contributor to various news outlets, her sharp insights and relatable storytelling have earned her a loyal readership. Amanda’s work has been recognized with prestigious honors, including outstanding contribution to media.

Known for her ability to bring clarity to even the most complex topics, Amanda seamlessly blends innovation and creativity, inspiring readers to embrace the power of AI and emerging technologies. As a certified prompt engineer, she continues to push the boundaries of how humans and AI can work together.

Beyond her journalism career, Amanda is a long-distance runner and mom of three. She lives in New Jersey.
