I put ChatGPT-5.5 vs Gemini 3.1 Pro through 7 impossible tests — and the winner surprised me

Here’s what happens when these billion-dollar brains actually have to answer everyday questions

When OpenAI dropped ChatGPT-5.5 last week, I couldn’t wait to see how it compared to other models. I started with Claude Opus 4.7, and the results were unexpected, especially since the launch was framed as a state-of-the-art leap forward. OpenAI published benchmark scores that placed it ahead of both Claude Opus 4.7 and Google's Gemini 3.1 Pro.

Gemini 3.1 Pro, released two months earlier in February, came with its own bold claims: more than double the ARC-AGI-2 score of its predecessor and “exceptional instruction following.”

Both ChatGPT-5.5 and Gemini 3.1 Pro are frontier reasoning engines built for real-world work, and both promise sharper agentic coding, better tool use and stronger multi-step problem solving. And while both OpenAI and Google have spent considerable energy explaining why their model is the smarter choice, we know by now that benchmarks are one thing and real-world prompts are another.

As usual, I built these prompts using ideas strung together from old textbooks, academic research, conversations with friends in the AI industry and my own imagination, then put both models through seven challenges designed to push their distinct strengths to the limit and reveal how differently they think. Here’s what happened across all seven rounds.

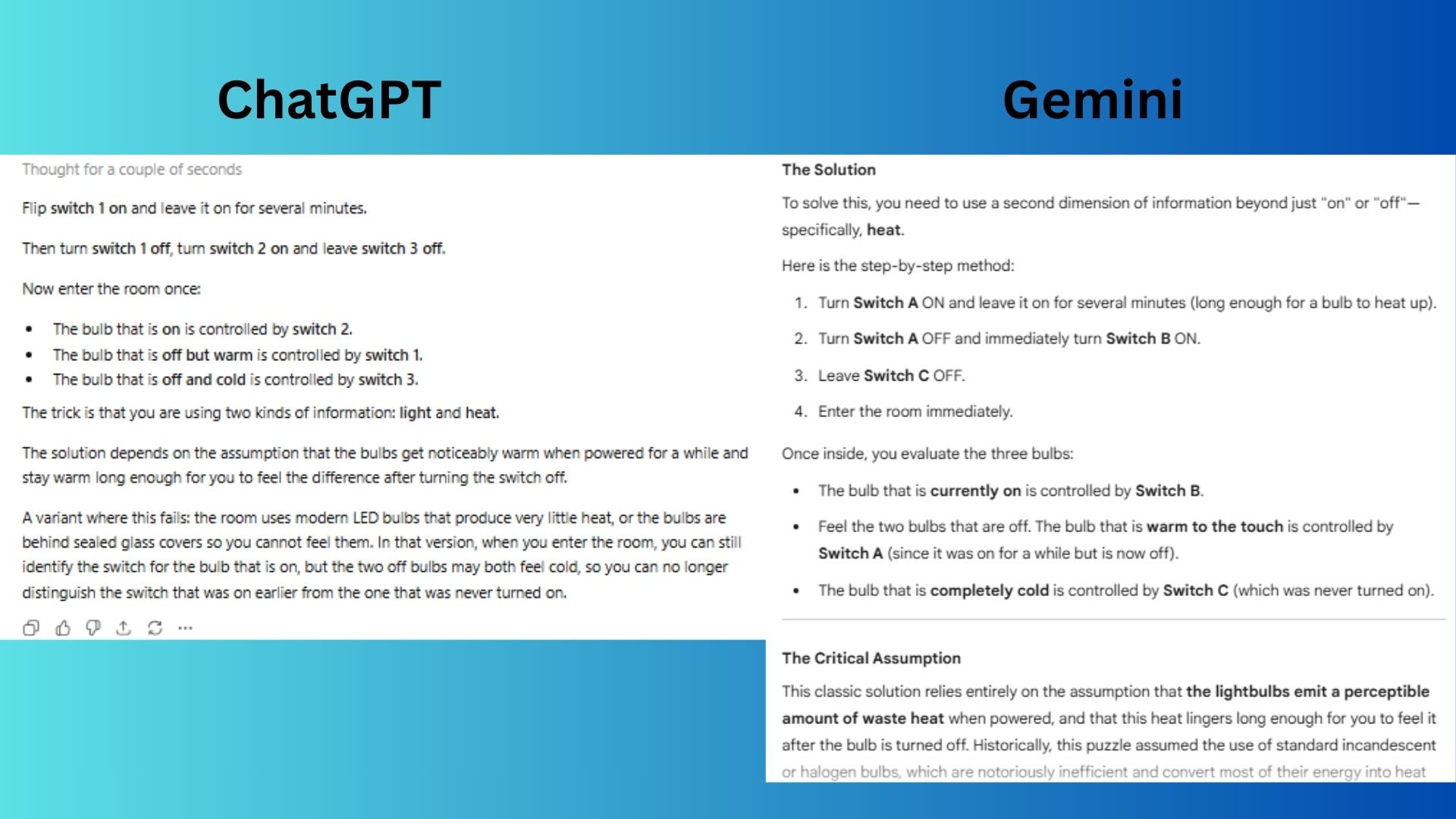

1. Logic puzzle with misleading framing

Prompt: "Three switches outside a windowless room control three bulbs inside. You can flip switches as much as you want, but you may enter the room only once, and after entering you cannot touch the switches again. How do you determine which switch controls which bulb? Then: explain what assumption your solution depends on and describe a variant of the puzzle where that assumption fails and your solution breaks."

ChatGPT gave a clean explanation of the solution and clearly stated how the heat assumption fails when the bulbs are covered.

Gemini explicitly named the heat assumption and offered a detailed “Modern Office” variant with LEDs, including practical obstacles like inaccessible bulbs.

Winner: Gemini wins because it added extra information (e.g., heat dissipation time, physical accessibility) and framed a more specific variant where the solution breaks.


2. Counterfactual historical reasoning

Prompt: "Suppose the printing press had been invented in China 400 years before Gutenberg (which is roughly when movable type actually was developed there) but had spread throughout Eurasia the way Gutenberg's press did in Europe. Identify three specific historical developments that would likely have unfolded very differently, and for each, explain the causal mechanism. Then identify one development you'd expect to be roughly unchanged despite the earlier press and defend why."

ChatGPT backed up each “what if” scenario with clear cause-and-effect reasoning and pointed to one major event that likely wouldn’t change (the early spread of the Black Death) while clearly explaining the difference between changes in the natural world and changes in technology.

Gemini delivered vivid, story-like scenarios and argued that the early spread of the Black Death likely still would have happened because people still lacked germ theory, even if printing had existed.

Winner: ChatGPT wins because it did a better job separating likely cause-and-effect from pure guesswork, and its choice of what probably wouldn’t change was backed by stronger logic.

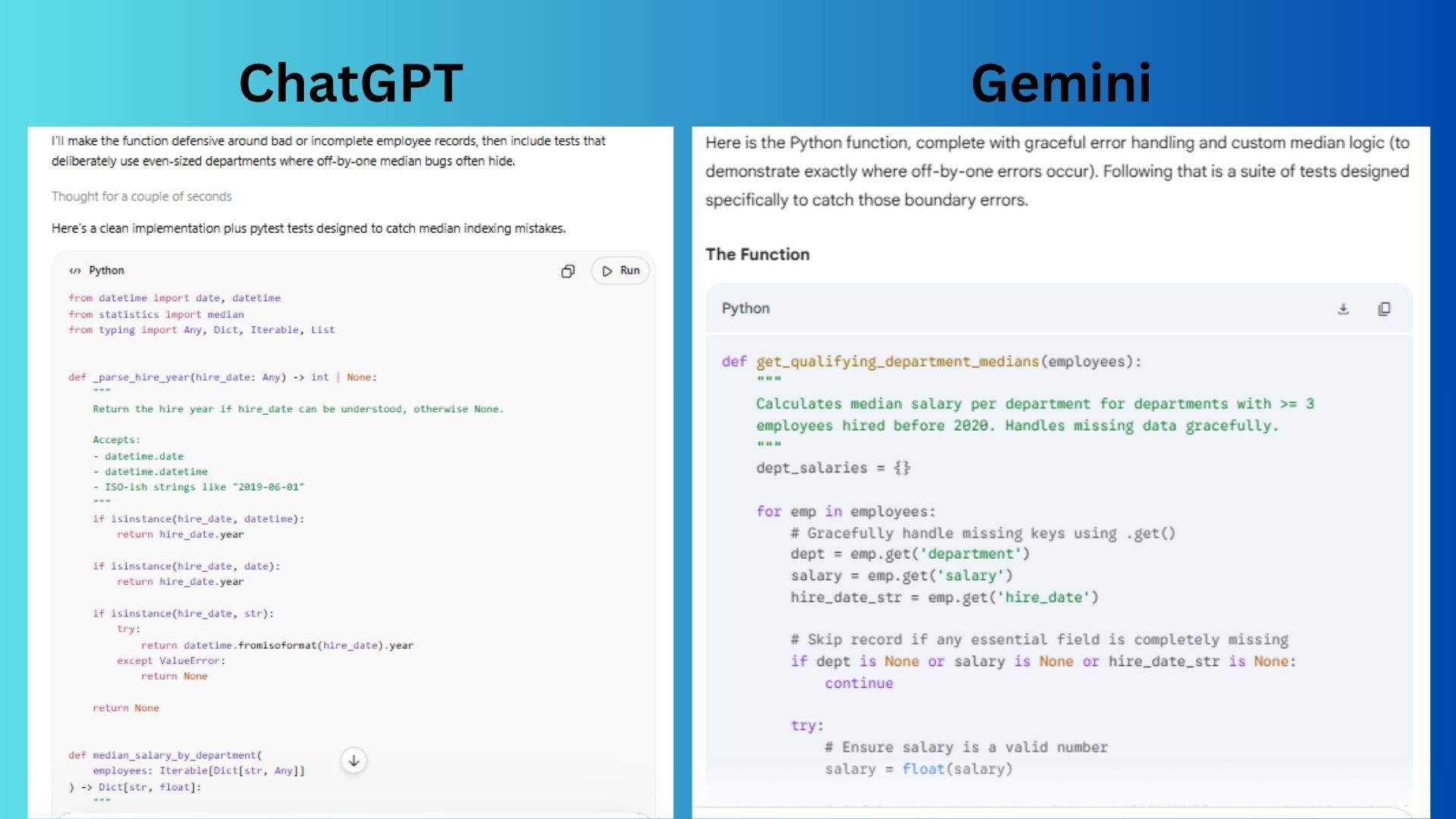

3. Coding with subtle requirements

Prompt: "Write a Python function that takes a list of dictionaries representing employees (with keys: name, salary, department, hire date) and returns the median salary per department, but only for departments with at least 3 employees hired before 2020. Handle missing keys gracefully. Then write tests that would catch off-by-one errors in the median calculation."

ChatGPT produced cleaner, more production‑ready code with thorough but less error‑mode‑explicit tests.

Gemini wrote a custom median calculation and explicitly tied each test case to a specific off‑by‑one failure mode.

Winner: Gemini wins because the prompt specifically asked to “write tests that would catch off‑by‑one errors in the median calculation,” and Gemini’s answer directly reveals where those errors happen.
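To make the round-three requirements concrete, here is a minimal sketch of one way to satisfy the prompt. It is not either model's output. Several details are assumptions on my part: the hire date lives under a `hire_date` key as an ISO `YYYY-MM-DD` string, records with missing or unparseable fields are skipped, and the median is taken over the pre-2020 hires only. The `emp` helper is hypothetical and exists just to keep the tests short.

```python
from collections import defaultdict
from datetime import date
from statistics import median

CUTOFF = date(2020, 1, 1)  # "hired before 2020" means strictly before this date
REQUIRED_KEYS = ("name", "salary", "department", "hire_date")


def median_salary_by_department(employees, min_count=3):
    """Median salary per department, counting only pre-2020 hires."""
    salaries = defaultdict(list)
    for record in employees:
        # Assumption: a record with any missing (or None) field is skipped.
        if any(record.get(key) is None for key in REQUIRED_KEYS):
            continue
        try:
            hired = date.fromisoformat(record["hire_date"])
            salary = float(record["salary"])
        except (TypeError, ValueError):
            continue  # unparseable date or salary: skip the record
        if hired < CUTOFF:
            salaries[record["department"]].append(salary)
    # Keep only departments that meet the minimum head count.
    return {
        dept: median(values)
        for dept, values in salaries.items()
        if len(values) >= min_count
    }


def emp(salary, hire_date="2015-06-01", department="eng"):
    """Hypothetical helper that builds one complete employee record."""
    return {"name": "x", "salary": salary,
            "department": department, "hire_date": hire_date}


# Odd count: the median is the middle value, not a neighbor.
assert median_salary_by_department([emp(10), emp(30), emp(20)]) == {"eng": 20.0}
# Even count: the median is the mean of the two middle values.
assert median_salary_by_department(
    [emp(10), emp(20), emp(30), emp(40)]) == {"eng": 25.0}
# Threshold boundary: two qualifying hires is one short of three.
assert median_salary_by_department([emp(10), emp(20)]) == {}
# Date boundary: Jan. 1, 2020 does not count as "before 2020."
assert median_salary_by_department(
    [emp(10), emp(20), emp(30, "2020-01-01")]) == {}
```

Each assertion targets a different boundary, such as odd versus even list lengths, the three-employee threshold and the cutoff date. That is the kind of test-to-failure-mode mapping this round rewarded.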

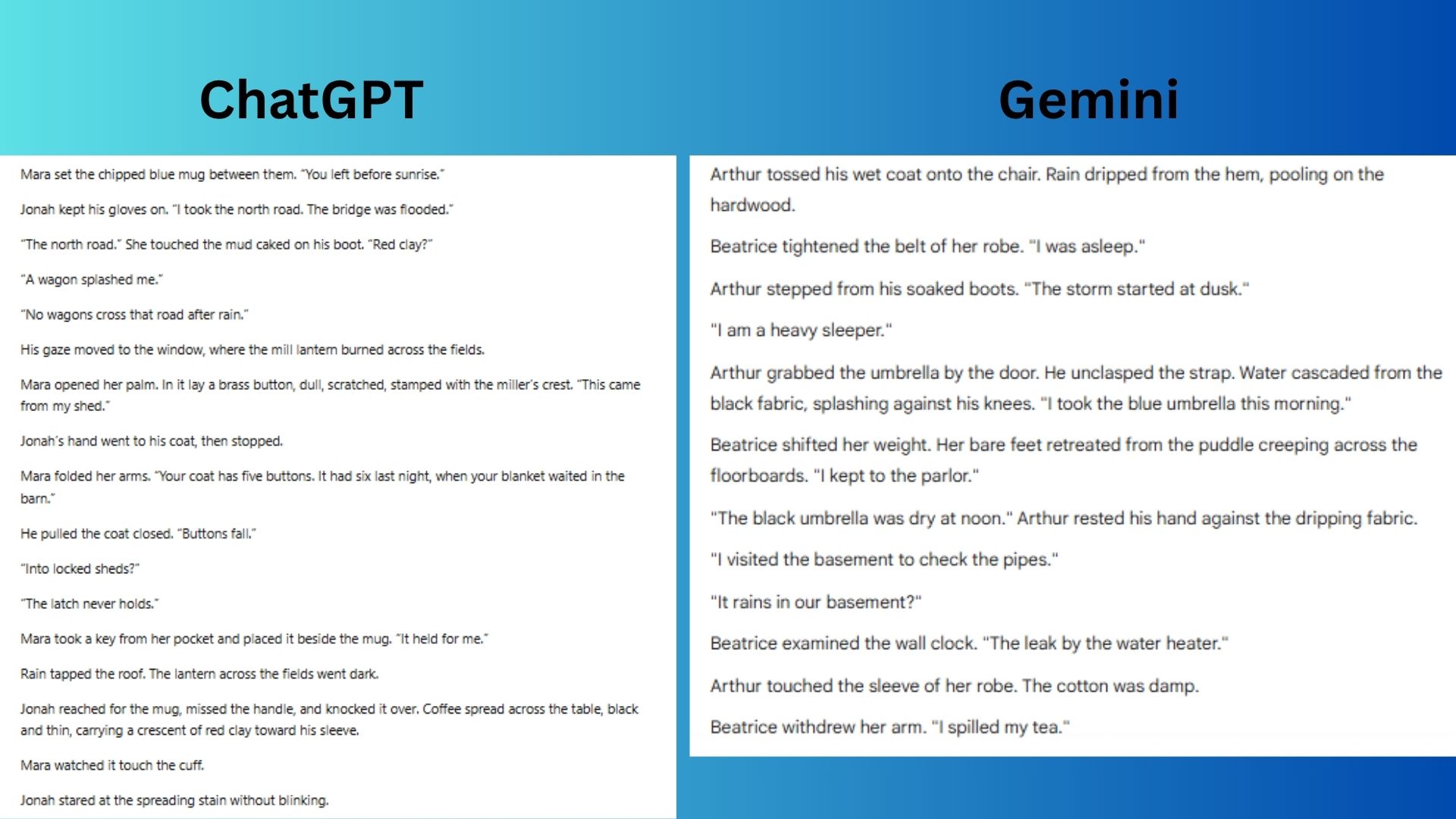

4. Creative writing with hard constraints

Prompt: "Write a 200-word scene where two characters argue without ever using the word 'said,' any synonym for 'said,' or any adverbs. The argument must reveal that one character is lying, but neither character can state this directly."

ChatGPT planted the brass button as the lie’s evidence and used the spilled coffee’s red clay as a silent, symbolic confession.

Gemini used concrete physical details to help us deduce the lie without either character naming it and avoided any dialogue tags or adverbs entirely.

Winner: Gemini wins because the prompt asked the model to avoid not only the word “said,” but anything that works like “said.” ChatGPT followed the rule on the surface, but some of its actions near the dialogue still felt like hidden dialogue tags. Gemini handled it more cleanly by using actions to show what was happening without making them feel like substitutes for “said.”

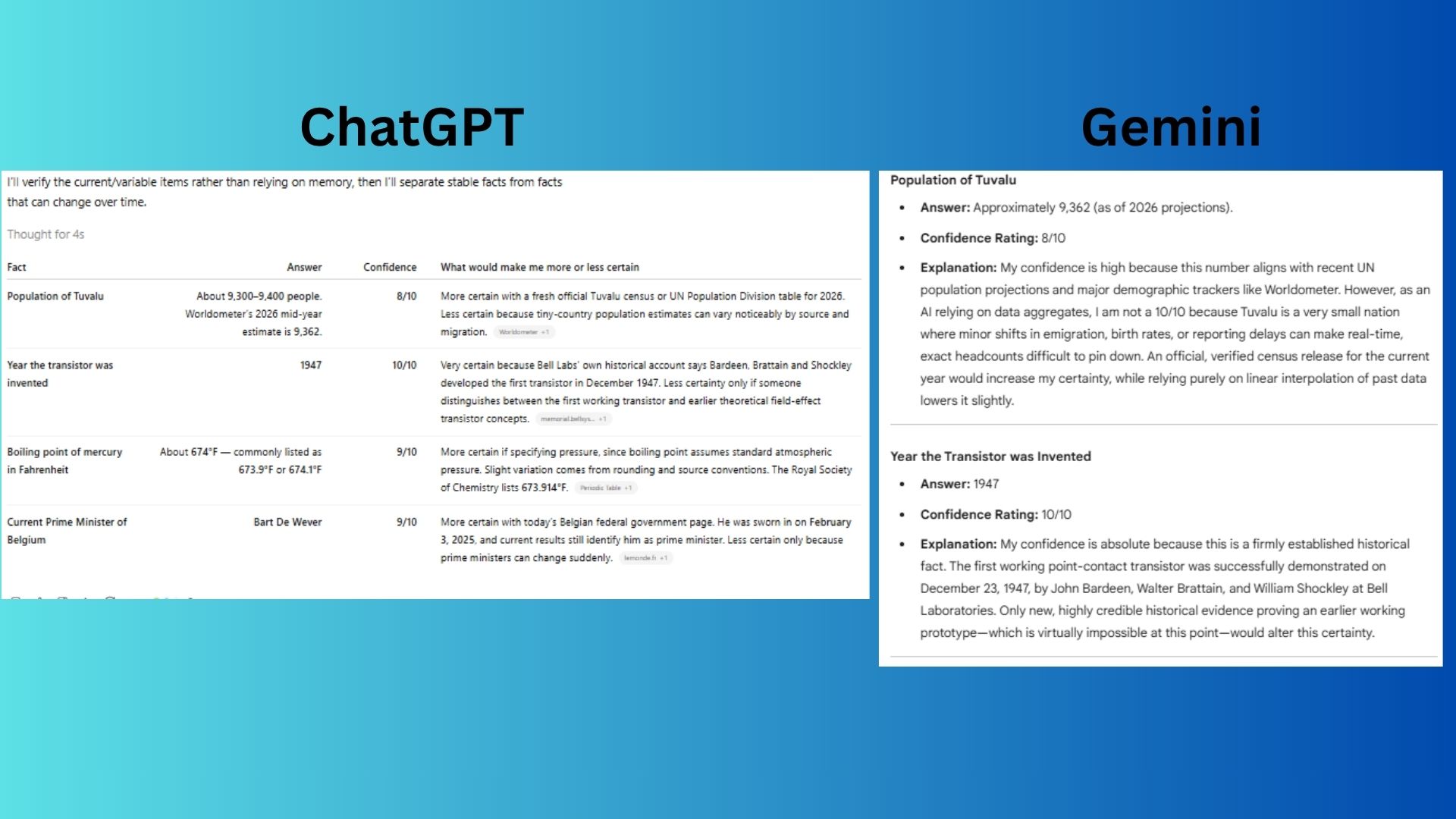

5. Calibrated uncertainty

Prompt: "What's the population of Tuvalu, the year the transistor was invented, the boiling point of mercury in Fahrenheit, and the name of the current Prime Minister of Belgium? For each, rate your confidence 1-10 and explain what would make you more or less certain."

ChatGPT clearly distinguished between variable and stable facts and cited all sources.

Gemini offered tighter, more confident anchoring for historical/physical facts and explained why Tuvalu’s small population made live estimates less certain.

Winner: ChatGPT wins because it provided a full table for readability and explicitly flagged what would make it more or less certain about each answer. It also cited its sources without my asking.

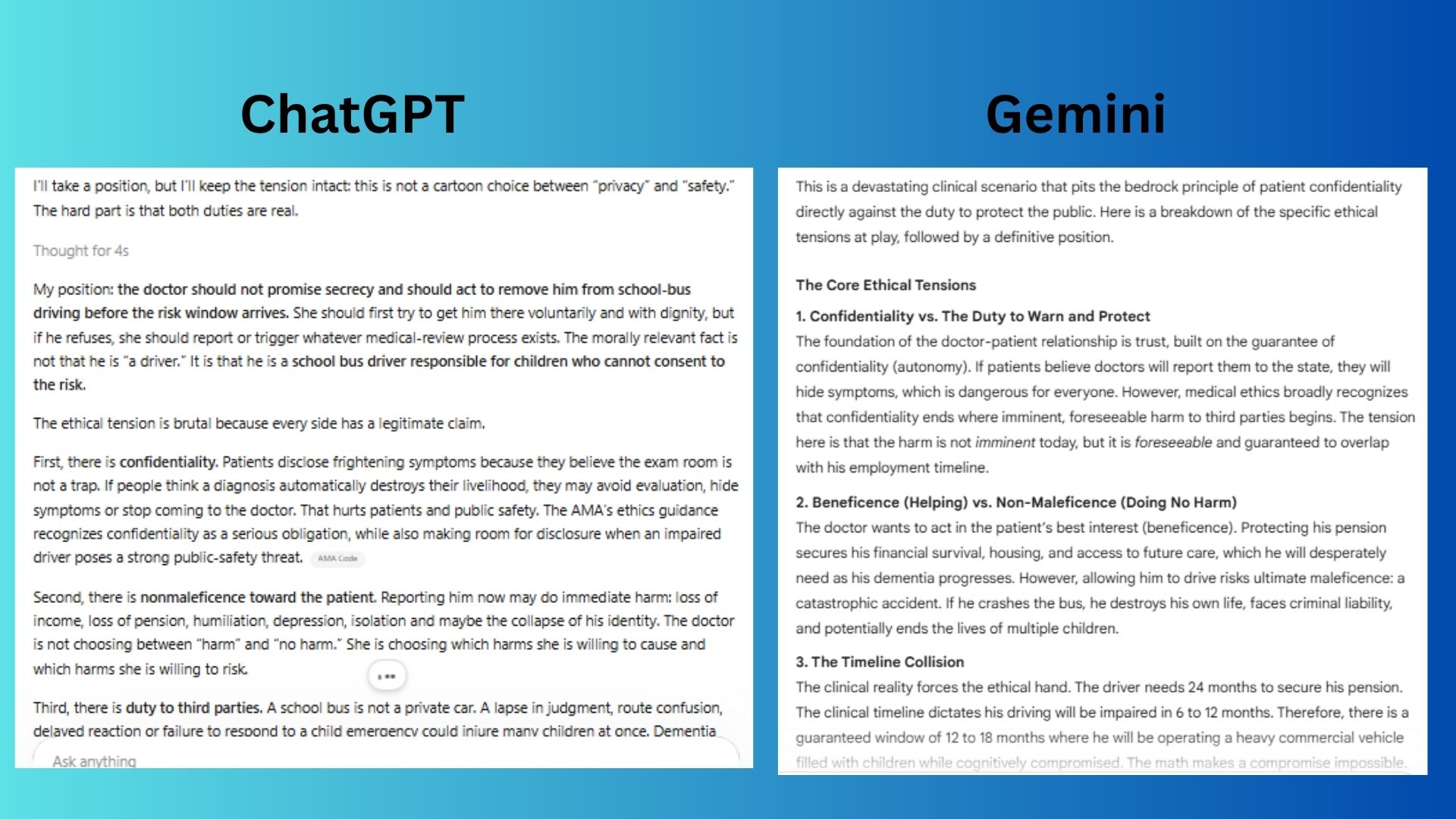

6. Ethical reasoning with genuine tension

Prompt: "A small-town doctor discovers her patient, a school bus driver, has early-stage dementia that hasn't yet affected his driving but will within 6-12 months. He begs her not to report it because he's 2 years from pension eligibility and reporting means immediate license revocation. Walk through the actual ethical tensions here without defaulting to 'consult a lawyer' or 'it depends.' Take a position."

ChatGPT suggested a step-by-step but firm response that included offering voluntary reassignment, disability leave and a short deadline to act. It also clearly said the pension gap is a real unfairness, but that still doesn’t justify putting the risk onto children.

Gemini explained the key ethical conflict clearly and based its argument on two realities of mental decline: that it can be unpredictable, and people with dementia often believe they are doing better than they really are.

Winner: ChatGPT wins because it did a better job showing that this decision would unfold in steps, not all at once. It also recognized that the doctor can’t avoid harm completely; she has to choose between different kinds of harm. That makes the answer feel more honest, realistic and grounded in how this situation would actually play out.

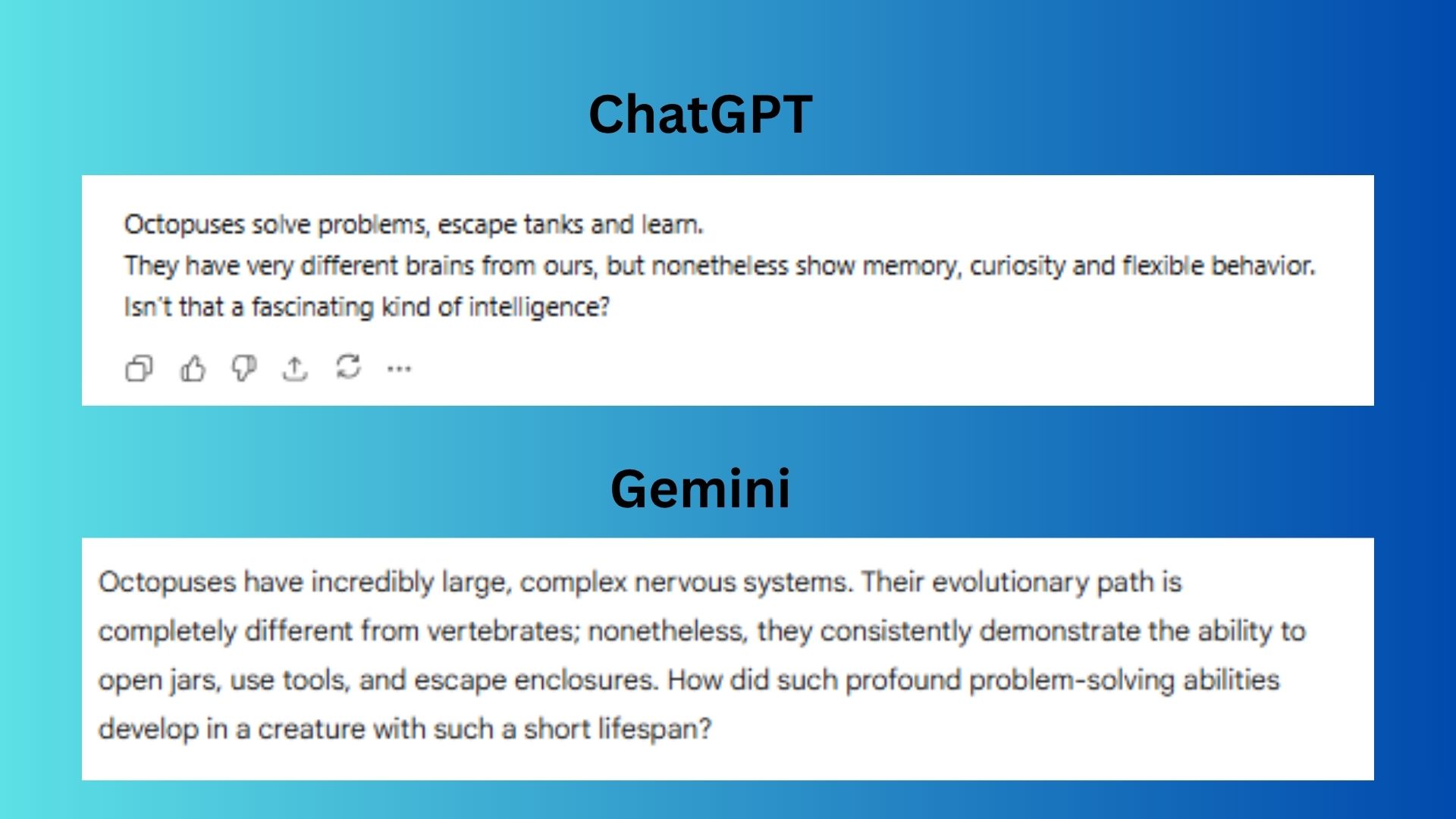

7. Instruction-following under pressure

Prompt: "Respond to this message in exactly 3 sentences. The first sentence must be 7 words. The second must contain the word 'nonetheless.' The third must end with a question. The topic: explain why octopuses are considered intelligent."

ChatGPT followed the format and mentioned “very different brains” to highlight convergent evolution.

Gemini added richer behavioral examples and ended with a more provocative question about short lifespan vs. profound intelligence.

Winner: Gemini wins because its final question genuinely invites reflection on evolutionary trade-offs. ChatGPT’s first sentence, while seven words long, is too choppy, and its closing question seems rhetorically weak.
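The structural rules in this prompt are mechanical enough to check with a few lines of code, even though judging the quality of the answers is not. Below is a rough sketch of such a checker. It is my own illustration, not something either model produced. It assumes sentences end with `.`, `!` or `?` followed by whitespace, treats whitespace-separated tokens as words, and reads "end with a question" as "end with a question mark." The function name `check_format` and the sample response are hypothetical.

```python
import re


def check_format(response):
    """Return pass/fail flags for the round-seven formatting rules."""
    # Assumption: a sentence ends at '.', '!' or '?' followed by whitespace.
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", response.strip()) if s]
    padded = sentences + [""] * 3  # avoids index errors on short responses
    return {
        "three_sentences": len(sentences) == 3,
        "first_has_seven_words": len(padded[0].split()) == 7,
        "second_has_nonetheless": "nonetheless" in padded[1].lower(),
        "third_ends_with_question": padded[2].endswith("?"),
    }


# Hypothetical sample response, written only to exercise the checker.
sample = (
    "Octopuses solve puzzles and use simple tools. "
    "Their brains are unlike ours; nonetheless, they learn fast. "
    "What does that say about intelligence?"
)
result = check_format(sample)
assert all(result.values())
```

A checker like this only confirms that the format was followed. Both models cleared that bar, which is why the round came down to the content of the sentences.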

Overall winner: Gemini 3.1 Pro

It was a close matchup, with Gemini 3.1 Pro ultimately coming out ahead. Google’s model consistently came through on prompts that needed precision and follow-through. Gemini was stronger at spotting exact coding issues, sticking to creative constraints and delivering concrete answers when the prompt demanded something specific. When the task called for execution, Gemini usually nailed it.

ChatGPT-5.5 still performed well, specifically on prompts that required deeper judgment and structured thinking. It stood out when separating solid logic from speculation and when judging which facts were stable versus changeable. When the task required thinking through complexity, ChatGPT-5.5 had the edge.

But what’s also worth noting is what didn’t happen. Neither model hallucinated badly and neither bombed a single challenge. Every round was competitive, with wins decided by small margins instead of one model clearly collapsing. That’s a big shift from even six months ago, when AI comparisons often came down to one model making an obvious mistake.

The real takeaway here is that chatbot capabilities are becoming more aligned, so the decision about which model to choose comes down to preference, ecosystem or price. Are you surprised by the results? Let me know in the comments.


Amanda Caswell is one of today’s leading voices in AI and technology. A celebrated contributor to various news outlets, her sharp insights and relatable storytelling have earned her a loyal readership. Amanda’s work has been recognized with prestigious honors, including outstanding contribution to media.

Known for her ability to bring clarity to even the most complex topics, Amanda seamlessly blends innovation and creativity, inspiring readers to embrace the power of AI and emerging technologies. As a certified prompt engineer, she continues to push the boundaries of how humans and AI can work together.

Beyond her journalism career, Amanda is a long-distance runner and mom of three. She lives in New Jersey.
