Camera Wars: Why Autofocus is the New Megapixel

As the camera megapixel wars end, the autofocus battle begins, closing the gap between DSLRs, mirrorless models and even smartphone cameras.

After introducing a slew of mirrorless cameras recently, Sony returned to DSLRs this month with the Alpha a77 II. This $1,200 camera's headline feature is the ability to shoot 12 photos per second for up to five seconds, making it one of the fastest DSLRs available. Key to that speed is a rapid autofocus (AF) system based on Sony's "translucent mirror" version of DSLR technology. Meanwhile, last summer Canon introduced its $1,999 EOS 70D camera with an AF innovation called "dual pixel" that locks focus on video subjects.

With the megapixel wars tapering off, autofocus has become the new front for advancing camera performance, promising better ability to capture the fleeting milliseconds of everything from pro sports to hyperactive children.

Fine art photographers can take their time composing a shot and manually adjusting focus. Everyone else, from selfie shooters to war correspondents, relies on autofocus. And no matter how good AF technology gets, people still miss shots or get blurry video from time to time.

The autofocus challenge

The advances in autofocus are due to a fundamental shift from a sluggish AF technology, called contrast detection, to a faster one, called phase detection. To understand how important the shift is, we need a little background.

Traditionally, contrast detection has been used in any camera that provides a live preview of a shot on its LCD, such as smartphones, point-and-shoots and older mirrorless cameras. To understand the premise, think of a silver coin on a dark tabletop. If the image is in focus, the line between bright silver and dark wood is clear, producing high contrast. If the image isn't in focus, the coin has a hazy edge. To judge focus, contrast detection moves the camera lens back and forth, using the imaging sensor itself to measure the contrast each time, until it finds the spot with the greatest contrast and therefore the sharpest focus.
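To make the back-and-forth search concrete, here is a minimal Python sketch of contrast detection. It is a toy model, not any camera maker's firmware: the function names, the linear blur model and the lens positions are all assumptions made for illustration. The point it shows is that the camera has to try many lens positions and score each one before it knows which is sharpest.

```python
def blurred_edge(lens_pos, true_focus, width=21):
    """Simulate one row of pixels across the coin/table edge (toy model).

    Assumption: blur grows linearly with the lens's distance from the
    position of true focus, turning the hard edge into a wider ramp.
    """
    blur = abs(lens_pos - true_focus)  # 0 means perfectly sharp
    center = width // 2
    row = []
    for x in range(width):
        d = x - center + 0.5  # signed distance from the edge
        if blur == 0:
            row.append(1.0 if d > 0 else 0.0)  # hard edge: dark, then bright
        else:
            t = 0.5 + d / (2.0 * blur)  # hazy edge: gradual ramp
            row.append(min(1.0, max(0.0, t)))
    return row


def contrast(row):
    """Score sharpness as the sum of squared differences between neighbors."""
    return sum((b - a) ** 2 for a, b in zip(row, row[1:]))


def contrast_detect_focus(true_focus, positions):
    """Try every lens position and keep the one with the highest contrast."""
    best_pos, best_score, steps = None, -1.0, 0
    for pos in positions:
        steps += 1  # each step is a physical lens move plus a sensor readout
        score = contrast(blurred_edge(pos, true_focus))
        if score > best_score:
            best_pos, best_score = pos, score
    return best_pos, steps


best, moves = contrast_detect_focus(true_focus=7, positions=range(15))
print(best, moves)
```

In this sketch the search finds the right position, but only after stepping through every candidate, which is why the approach feels sluggish on a moving subject.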

In phase detection, light from different points on the camera lens is reflected onto several tiny sensors, each consisting of a row of pixels. If the light from two points of the lens hits a particular pixel on each sensor row, those rows are considered to be "in phase." The camera's processor knows that when these light rays pass through the camera lens they will converge right on the image sensor. If the light hits different spots on the two sensor rows, the camera can instantly calculate how far and in which direction to move the lens and nail focus.
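The idea can be sketched in a few lines of Python. This is an illustrative model only: the two lists stand in for the two sensor rows, and `steps_per_pixel` and the sign convention for lens direction are hypothetical calibration choices, not values from any real camera. The sketch compares the two rows once, finds how far apart the same pattern landed, and turns that offset into a single lens move.

```python
def find_shift(row_a, row_b, max_shift=5):
    """Return the shift s (in pixels) at which row_b[i + s] best matches row_a[i].

    A shift of 0 means the two rows are "in phase", i.e. the lens is focused.
    """
    best_shift, best_err = 0, float("inf")
    n = len(row_a)
    for s in range(-max_shift, max_shift + 1):
        err, count = 0.0, 0
        for i in range(n):
            j = i + s
            if 0 <= j < n:  # compare only the overlapping pixels
                err += abs(row_a[i] - row_b[j])
                count += 1
        err /= count  # average so smaller overlaps are not favored
        if err < best_err:
            best_shift, best_err = s, err
    return best_shift


def lens_correction(row_a, row_b, steps_per_pixel=2.0):
    """Convert the measured shift into a signed lens move.

    Assumption: steps_per_pixel is a made-up calibration constant, and a
    positive shift is taken to mean the lens must move in the negative direction.
    """
    return -find_shift(row_a, row_b) * steps_per_pixel


# The same bright spot lands two pixels apart on the two rows: out of focus.
row_a = [0, 0, 0, 1, 5, 1, 0, 0, 0, 0, 0, 0]
row_b = [0, 0, 0, 0, 0, 1, 5, 1, 0, 0, 0, 0]
print(find_shift(row_a, row_b), lens_correction(row_a, row_b))
```

Unlike the trial-and-error search of contrast detection, one comparison here yields both the distance and the direction of the correction.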

Phase detection had until recently been the exclusive realm of DSLRs, and one of the best reasons to buy one. The same mirror mechanism that directs light into a viewfinder and a light meter also passes some light to a phase detection sensor. But there's a weak point: Once you press the shutter and the mirror flips out of the way to expose the image sensor, AF ceases, and a fast-moving subject may come out blurry.

Sony's translucent mirror breakthrough

The Sony Alpha a77 II ($1,200) is the company's latest model that seeks to remedy the autofocus dilemma using its translucent mirror system. Most of the light goes through this fixed piece of glass and onto the image sensor, but a small amount bounces onto a phase detection sensor. The mirror needn't move to take a photo, so the camera can monitor focus for every shot, 12 times per second. The only DSLRs that come close in speed cost several times more: Canon's $6,800 EOS 1D X shoots 14 fps, and Nikon's $6,500 D4s captures 11 fps.

But even these superfast Canons and Nikons fall apart with video, when DSLRs flip up the mirror to keep the image sensor exposed at all times. That means no phase detection AF; the cameras have only sluggish contrast detection via their main image sensors. Relying on autofocus in this case means that the subject will drift in and out of focus. Any serious filmmaker has to master the art of manual focus to keep a video sharp. Sony's translucent mirror cameras avoid the problem by constantly shining light into the phase detection sensor, even when shooting video.

Canon's dual-pixel sensor

Canon recently introduced its own remedy with the "dual-pixel" sensor in its 70D camera. Eighty percent of the pixels on the 20-MP sensor serve double duty — both capturing images and collecting phase detection data. Using these phase sensors, plus the image processor to analyze action, the 70D can be set to lock on any subject and follow its movements, precisely adjusting focus the whole time.

But if the phase detection sensors can be right on the imaging chip, why even bother with the complex mirror system and separate AF sensor? Many camera makers have decided there is no need to: Fujifilm, Nikon, Olympus, Samsung and Sony all make at least some mirrorless cameras with phase detection on the image sensor, using it alongside contrast detection to refine its results — a system called hybrid AF.

The smartphone camera autofocus breakthrough

The DSLR vs. mirrorless camera debate is becoming an academic one. Both camera types can take good photos. And while DSLRs are bigger, neither model type will fit in your pocket. But a smartphone will, which is why it has become the number one camera for many people.

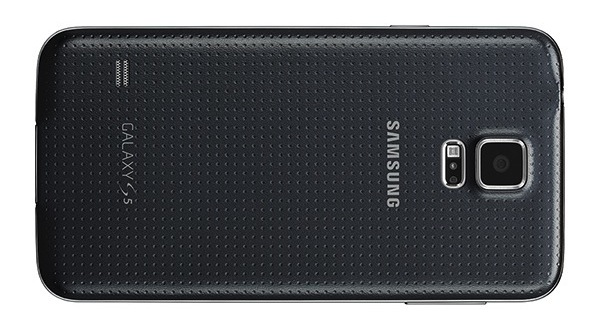

The smartphone camera won't beat out a DSLR or mirrorless model for performance yet. But it's getting closer. Phone makers will probably never install a mirror mechanism and optical viewfinder in a cameraphone, but they can install an imaging chip with DSLR-style phase detection sensors. In fact, they just have, starting with the new Samsung Galaxy S5, which claims a 0.3-second autofocus time.

The S5 is alone, but probably not for long. Archrival Apple is expected to introduce an iPhone 6 by the fall. Given the importance Apple places on its cameras, and its fierce rivalry with Samsung, a phase detection AF sensor is a likely upgrade for the iPhone 6 camera. Smartphone rivals HTC, LG, Nokia and Sony probably won't be far behind with the technology.

With DSLR-grade autofocus in everyone's pocket, there will be one fewer reason for hobbyist photographers to lug a big camera around.

Sean Captain is a freelance technology and science writer, editor and photographer. At Tom's Guide, he has reviewed cameras, including most of Sony's Alpha A6000-series mirrorless cameras, as well as other photography-related content. He has also written for Fast Company, The New York Times, The Wall Street Journal, and Wired.

dstarr3 This is just a temporary trend. For a while, people were distracted by wanting the most zoom in the smallest camera, then people wanted f/2.8 lenses or faster in cameras too small to benefit, and now people are pleased to have better autofocus. But, in between, before, and after all of these trends, people just wanted more megapixels, even though the average consumer doesn't need more than 8MP. It's nice that periodically customers are interested in improving these other technologies that make a better camera when things get a little dated, but once camera technology plateaus, they always go back to demanding more megapixels, just because it's a simple number to look at and assume it has anything to do with quality.

bujuki I don't know why my previous comment doesn't show up. You should watch Peter Gregg's Youtube video "My new Panasonic GH4 Camera Arrives" (watch?v=Y26VQXB49mM) and add another important aspect, low light AF. That camera is simply awesome.

bujuki @dstarr3 : Sony decided to give their new full frame mirrorless, the A7S, only 12.2 MP - lower than the 13 MP on many smartphones - but upped the ISO performance to a whopping 409600. Just watch the video test on Youtube : watch?v=XgbUgNiHfXM. So my thought is that if the A7S is a success, there will be a low light performance race for quite a few years ahead.

gm0n3y Modern smart phones take pretty good photos, but I'd like to see them improve on low light / motion performance.

annymmo The future is liquid lenses (can focus much faster) and electrically tunable metamaterial lenses where you change the focus, refractive index by changing a voltage over very small elements that control the light going through it. (electrically tunable metamaterials focus even faster than liquid lenses)
