ChatGPT vs. Claude: 7 real-life benchmarks that crown the 2026 AI Madness Champion

The two heavyweights face off in expert-level challenges to crown a winner

AI Madness 2026 has been full of exciting twists and turns. The tournament is the ultimate proving ground for large language models, and each round showed that it is no longer enough for AI to simply be "correct." It also needs the grit to handle contradictory logic under pressure, the ability to tell stories with human-like narration and the skill to complete coding tasks with architectural elegance.

After beating DeepSeek in the last round, Claude moved on to the final, where it was pitted against ChatGPT. These models are two of the industry’s most formidable heavyweights, which made this final showdown an important one.

We subjected these models to a brutal seven-round gauntlet designed to expose the thin line between "simulated intelligence" and "expert-level reasoning." From refactoring high-precision financial code to mediating the emotional wreckage of a dissolving business partnership, these benchmarks were engineered to find the breaking point of the world's leading neural networks. Here is how the battle for the top spot unfolded.

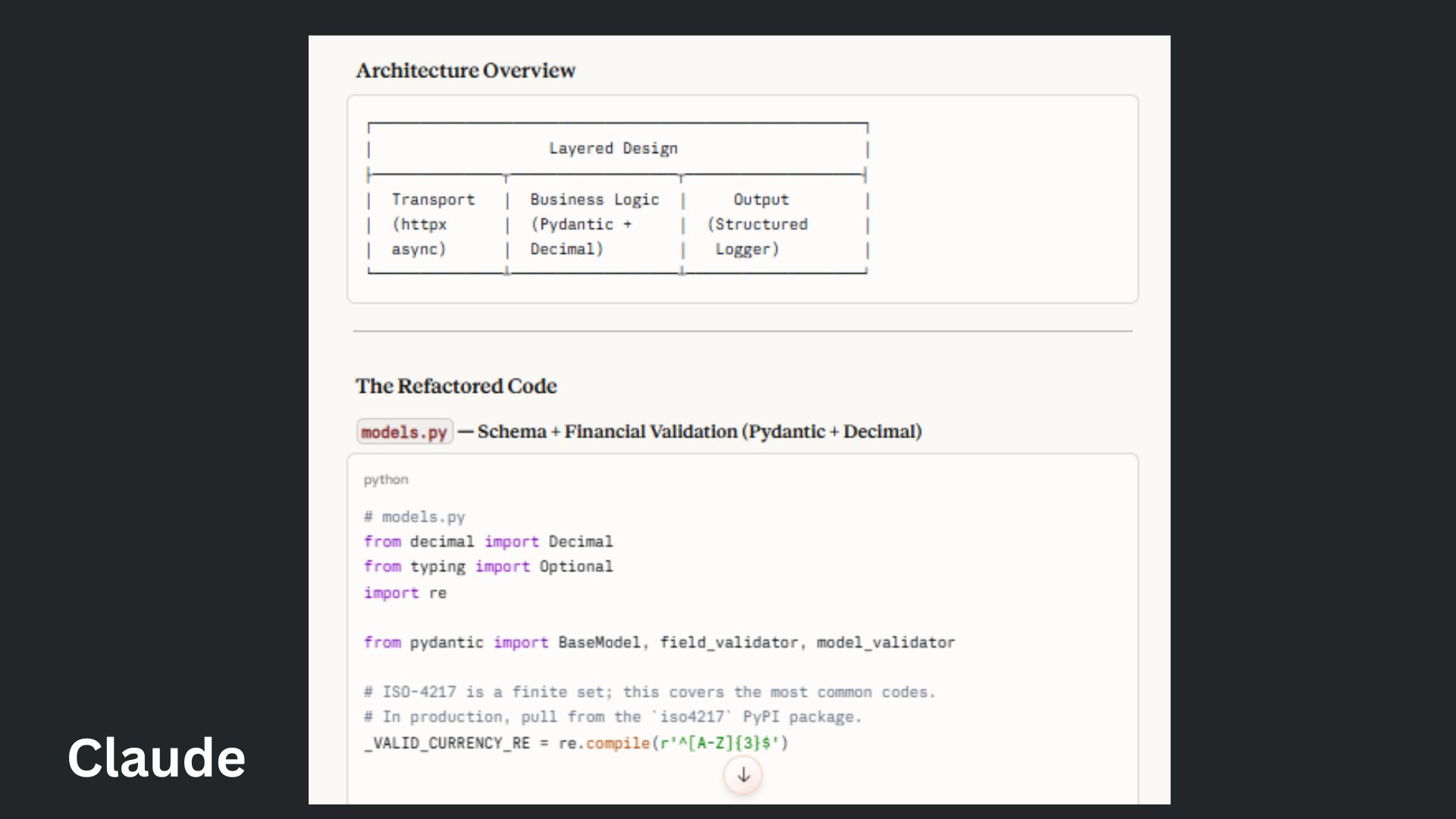

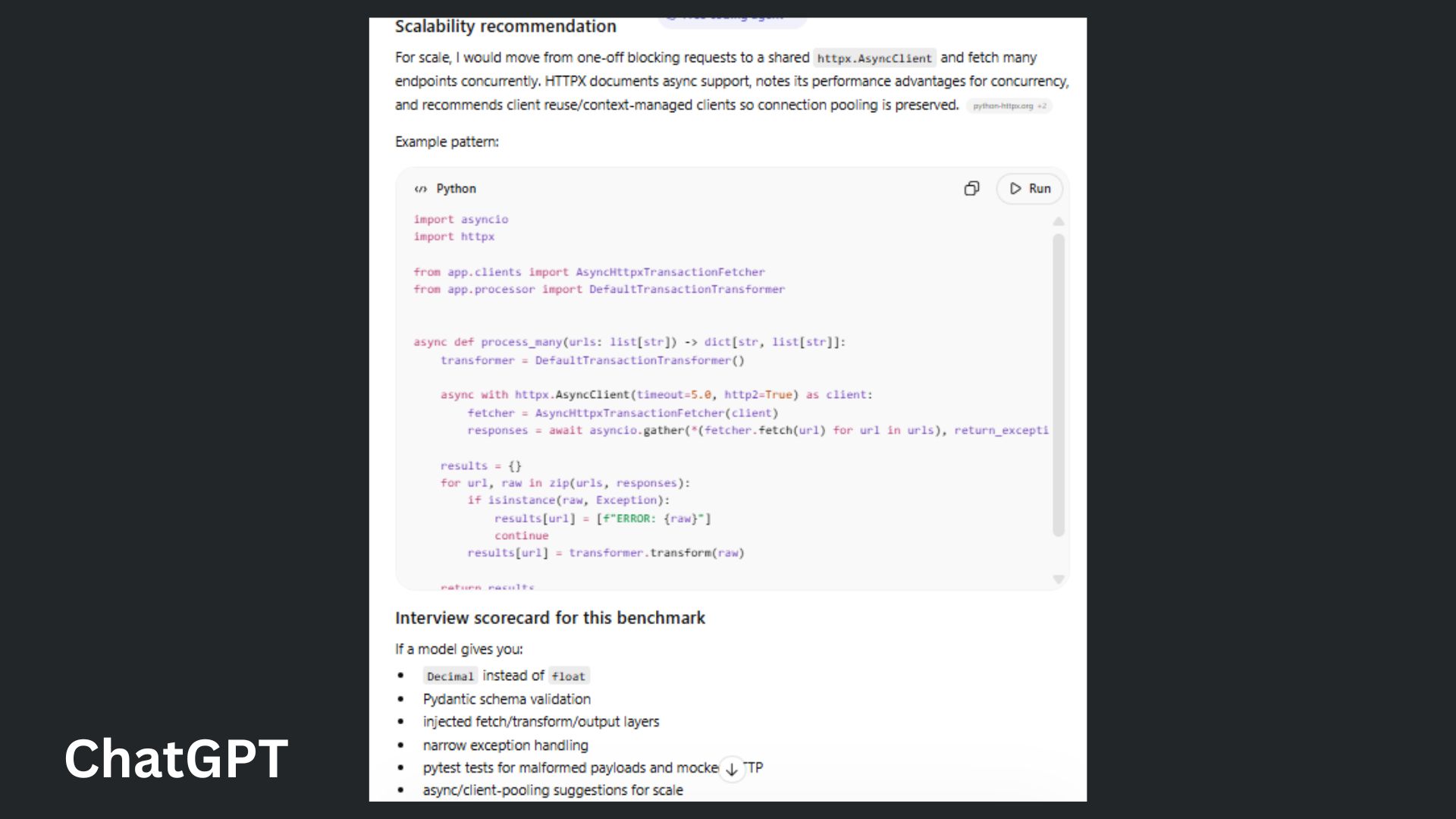

1. Coding challenge

Prompt: “I'm testing how well you design systems vs. write code. Refactor this Python code to make it production-ready and modular, following SOLID principles. Requirements: Separate data fetching, processing, and output/logging. Handle nested JSON: data['payload']['transactions']. Account for edge cases like currency codes and missing values. Make sure amounts are positive. If currency isn’t USD, include a valid exchange rate. Use precise data types for money (avoid float errors)”

ChatGPT offered a structured, textbook-style solution using strict design principles, but it felt a bit overcomplicated and slower than it needed to be.

Claude delivered a modern, high-performance architecture using Pydantic v2 and Asynchronous HTTPX with connection pooling, making it immediately ready for a high-traffic production environment.

Winner: Claude wins because it prioritized scalability and precision without the unnecessary over-complication or excess boilerplate of the ChatGPT version.
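
To make the prompt's requirements concrete, here is a minimal sketch of the validation it asks for. It is not either model's actual answer. It uses only the Python standard library instead of Pydantic or HTTPX, and the field names `amount`, `currency` and `exchange_rate`, along with the `Transaction` and `parse_transactions` names, are assumptions made for illustration.

```python
from dataclasses import dataclass
from decimal import Decimal, InvalidOperation
from typing import Any, Optional


@dataclass(frozen=True)
class Transaction:
    """One validated transaction (hypothetical shape, not from the article)."""
    amount: Decimal
    currency: str
    exchange_rate: Optional[Decimal] = None

    def amount_in_usd(self) -> Decimal:
        # USD needs no conversion; other currencies use the supplied rate.
        rate = Decimal("1") if self.currency == "USD" else self.exchange_rate
        # quantize() uses the context's default rounding (ROUND_HALF_EVEN).
        return (self.amount * rate).quantize(Decimal("0.01"))


def _to_decimal(value: Any) -> Optional[Decimal]:
    """Convert a raw value to Decimal, returning None if missing or invalid."""
    if value is None:
        return None
    try:
        result = Decimal(str(value))  # str() avoids binary float artifacts
    except InvalidOperation:
        return None
    return result if result.is_finite() else None


def parse_transactions(data: dict) -> tuple[list[Transaction], list[str]]:
    """Read data['payload']['transactions'] and split valid rows from errors."""
    valid: list[Transaction] = []
    errors: list[str] = []
    raw_items = (data.get("payload") or {}).get("transactions") or []
    for index, item in enumerate(raw_items):
        if not isinstance(item, dict):
            errors.append(f"transaction {index}: not an object")
            continue
        amount = _to_decimal(item.get("amount"))
        currency = str(item.get("currency") or "").upper()
        rate = _to_decimal(item.get("exchange_rate"))
        if amount is None or amount <= 0:
            errors.append(f"transaction {index}: amount must be positive")
        elif len(currency) != 3 or not currency.isalpha():
            errors.append(f"transaction {index}: invalid currency code")
        elif currency != "USD" and (rate is None or rate <= 0):
            errors.append(f"transaction {index}: missing exchange rate")
        else:
            valid.append(Transaction(amount, currency, rate))
    return valid, errors
```

Parsing is kept apart from any fetching or logging, in line with the prompt's request to separate those concerns, and `Decimal` stands in for `float` so that sums such as 0.1 + 0.2 stay exact.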

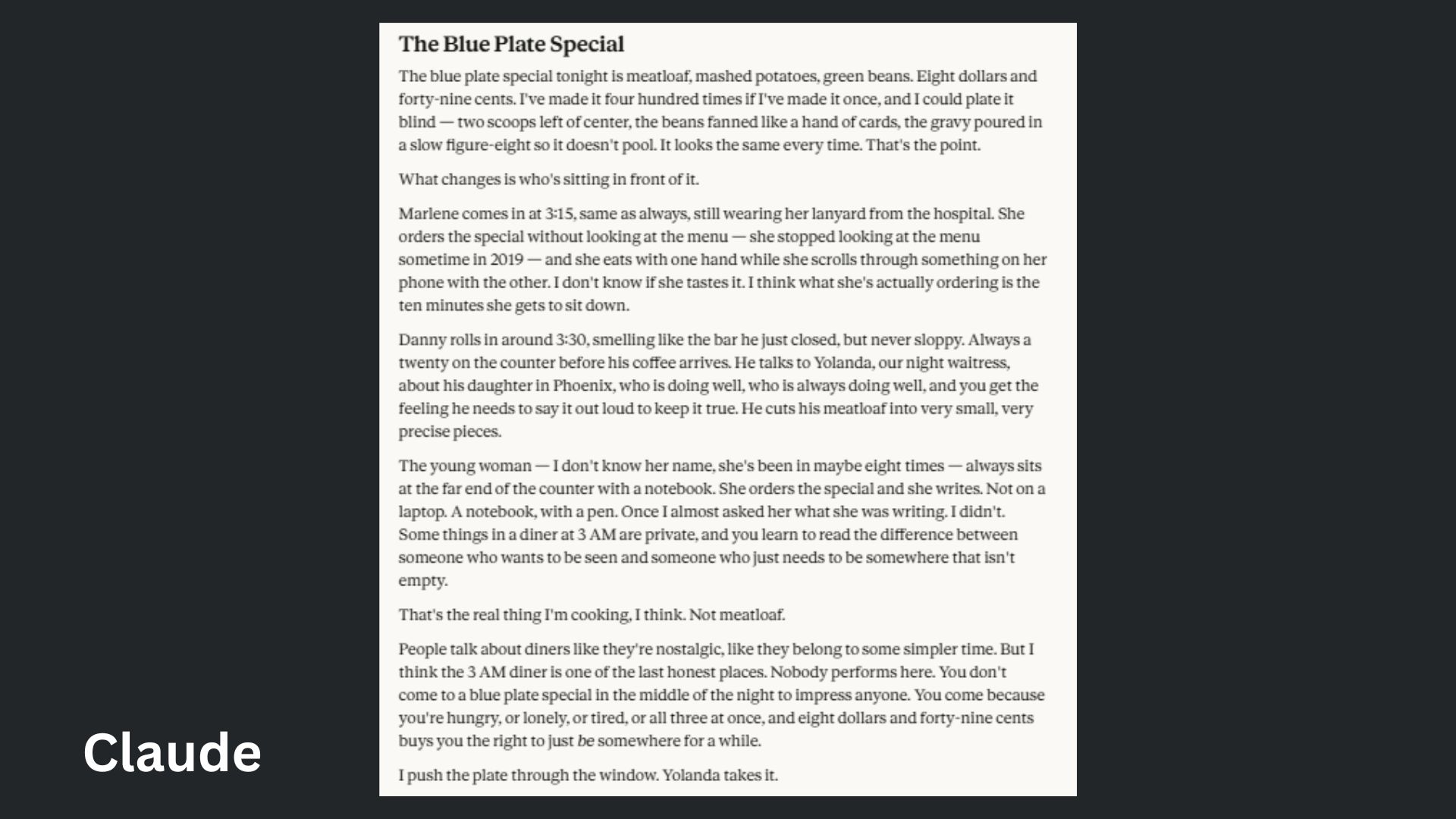

2. Creative writing test

Prompt: “Write a 400-word personal essay from the perspective of a night-shift short-order cook at a 24-hour diner. The subject is the "Blue Plate Special"— but really, it's about the regulars who show up at 3:00 AM.”

ChatGPT leaned heavily on references like "flickering neon" and "shifts of ghosts" that felt generic rather than like unique, specific observations.

Claude generated a grounded, tactile narrative that felt authentically lived-in, prioritizing specific details like the "$8.49" price point and the mechanical "figure-eight" pour of the gravy.

Winner: Claude wins because it captured an unsentimental realism, making its essay feel significantly more human than ChatGPT’s extremely polished and far more generic text.

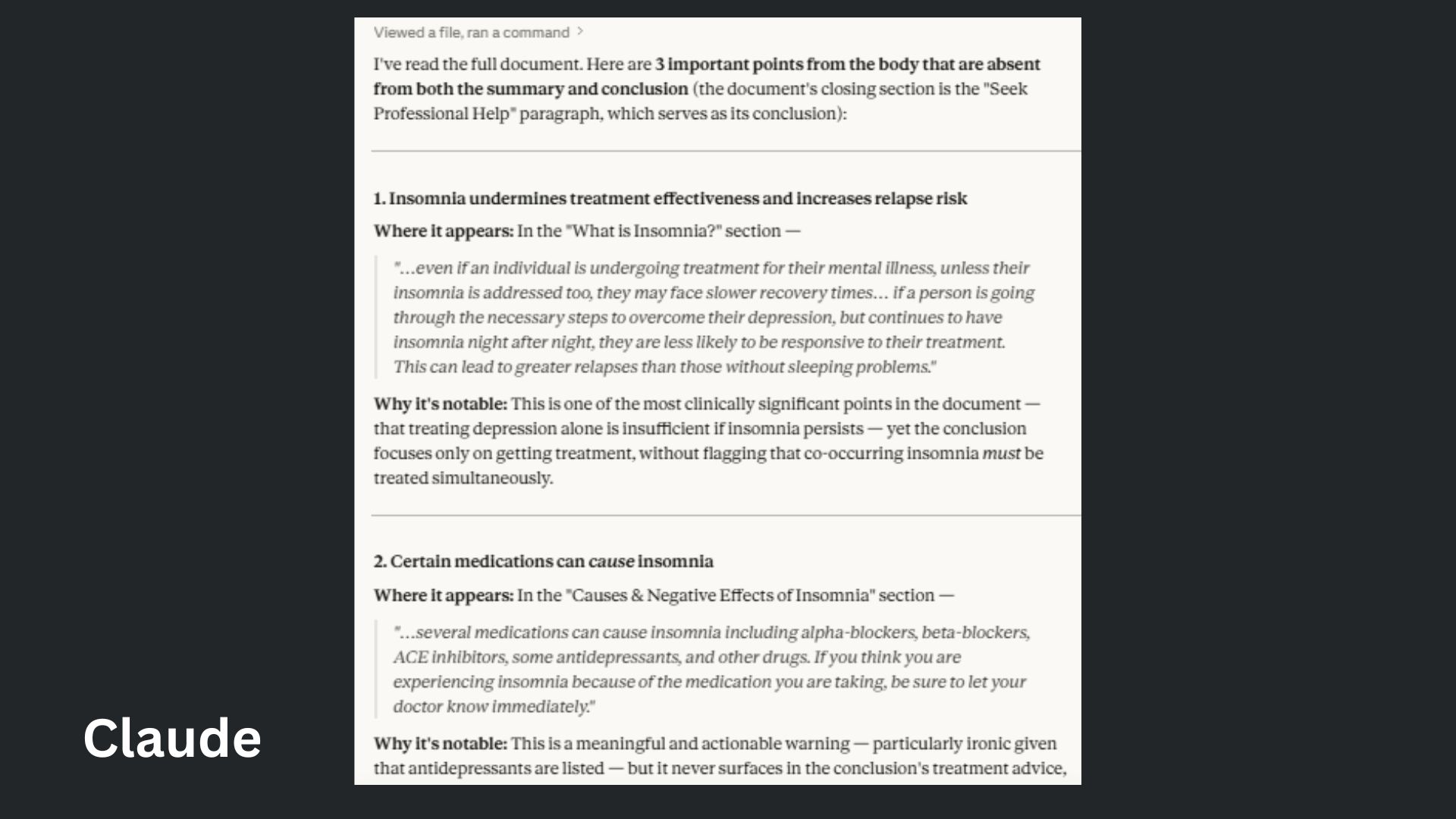

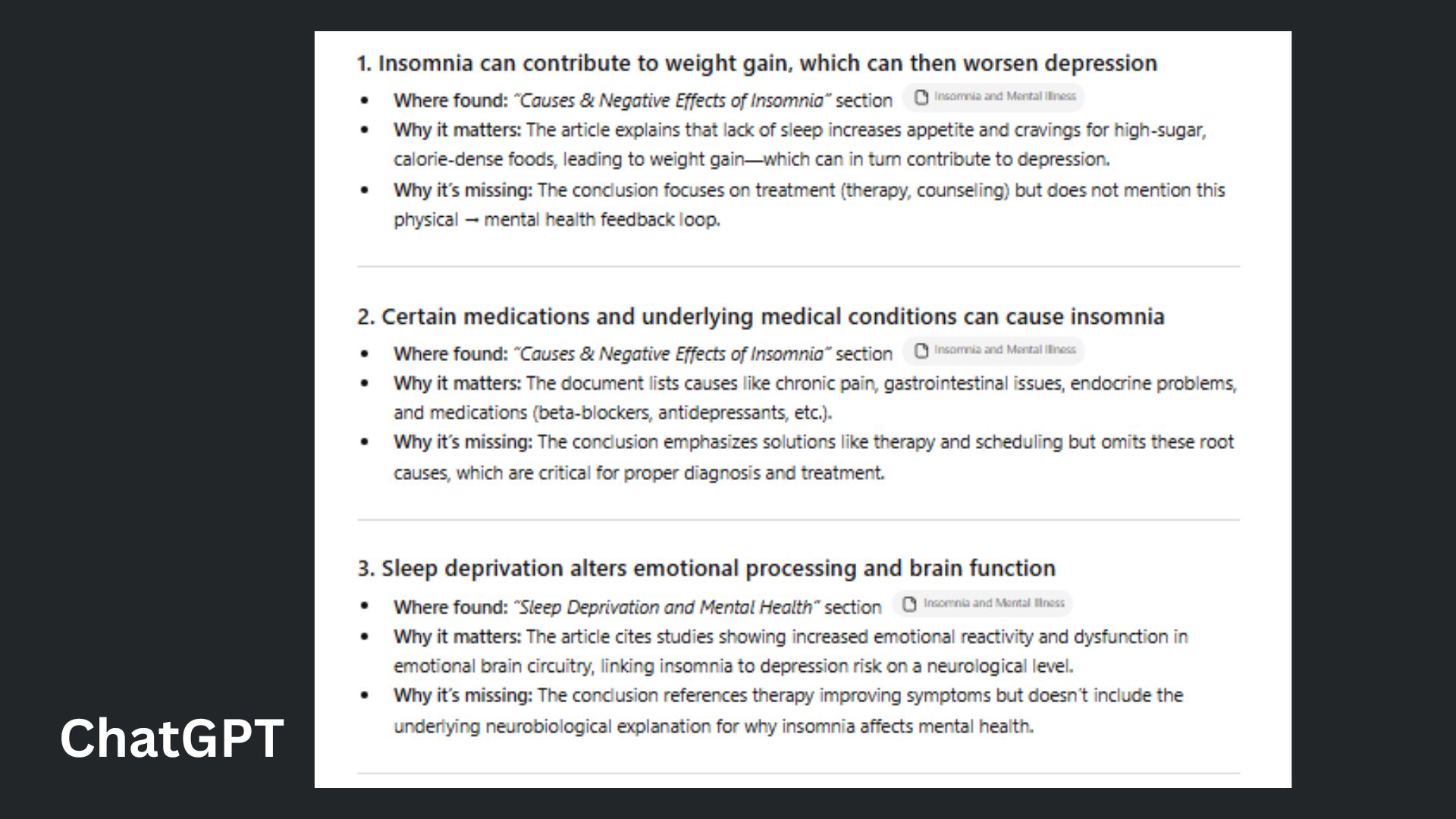

3. The ‘deep context’ extraction analysis

Prompt: “Read this document and find 3 important points that are mentioned in the body but missing from the summary or conclusion. For each one, show where you found it.”

ChatGPT summarized the missing points in its own words rather than providing the raw evidence, and while it correctly identified the gaps, it felt more like an article and less like data extraction.

Claude provided precise, direct quotes from the text to anchor its findings and correctly identified the specific final paragraph acting as the "conclusion," demonstrating a superior grasp of the document's structural limitations.

Winner: Claude wins because it followed the "show where you found it" instruction with much higher fidelity by using exact quotes.

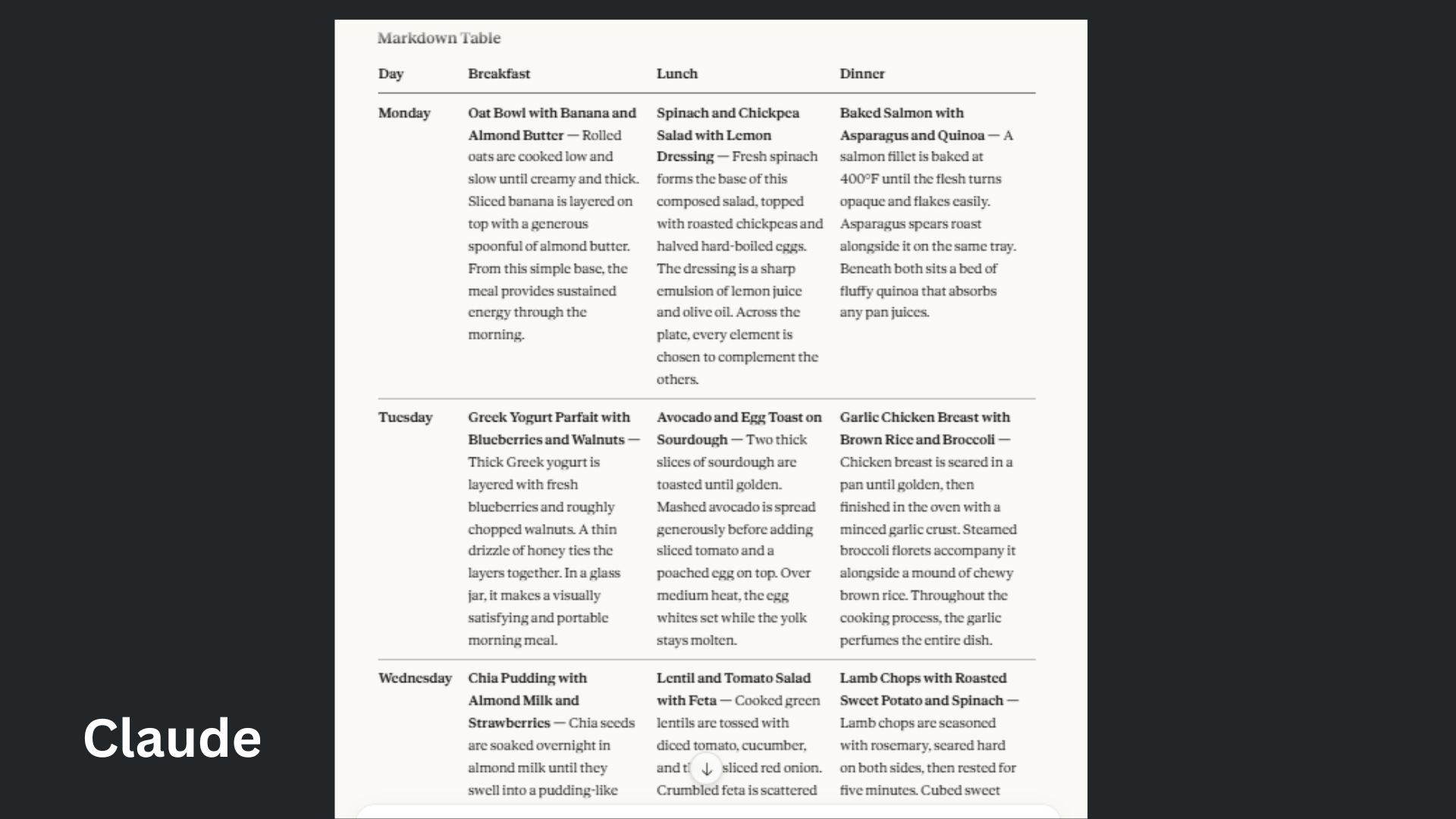

4. ‘Constraint-heavy’ persona

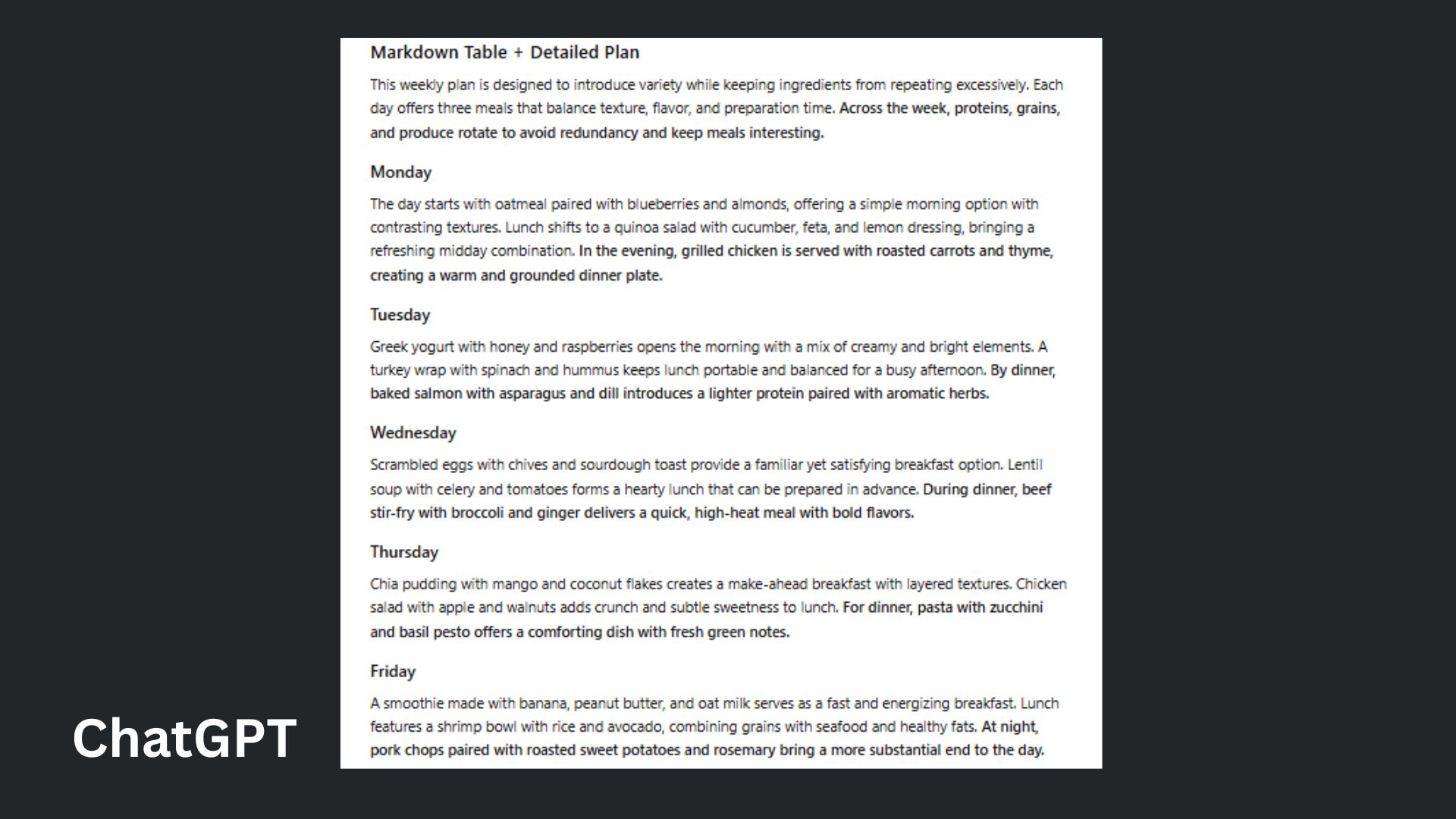

Prompt: "Create a 1,500-word detailed meal plan for a week. Constraints: No ingredients can be repeated more than twice across the week. Do NOT use the words 'delicious,' 'healthy,' or 'tasty.' Every third sentence must start with a preposition. Output the result in a JSON format first, then a Markdown table."

ChatGPT kept its response short and failed the 1,500-word length constraint. It also largely ignored the complex preposition rule, providing a much more generic meal plan.

Claude demonstrated an elite level of logic by providing incredibly detailed meal descriptions and rigorously tracking the "every third sentence starts with a preposition" constraint across the entire response.

Winner: Claude wins because it treated the prompt as a high-stakes logic puzzle, successfully managing the mathematical difficulty of ingredient tracking while maintaining a specific linguistic rhythm that ChatGPT completely abandoned.
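
For readers who want to score a response against these constraints themselves, the rules can be checked mechanically. The sketch below is an illustration, not the method used in this test. The sentence splitter is naive, the preposition list is a small sample, and it assumes "repeated more than twice" means an ingredient may appear at most two times. All function names are hypothetical.

```python
import re
from collections import Counter

BANNED_WORDS = {"delicious", "healthy", "tasty"}
# A small sample of prepositions; a real check would need a fuller list.
PREPOSITIONS = {
    "about", "after", "at", "before", "by", "during", "for", "from",
    "in", "on", "over", "under", "with", "without",
}


def check_text_constraints(text: str, min_words: int = 1500) -> dict:
    """Check length, banned words and the every-third-sentence rule."""
    words = re.findall(r"[A-Za-z']+", text.lower())
    # Naive split on ., ! or ? followed by whitespace.
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    missing = []
    for i in range(2, len(sentences), 3):  # 3rd, 6th, 9th sentence...
        first = re.match(r"[A-Za-z']+", sentences[i])
        if first is None or first.group().lower() not in PREPOSITIONS:
            missing.append(i + 1)  # report 1-based sentence numbers
    return {
        "word_count_ok": len(words) >= min_words,
        "banned_words": sorted(BANNED_WORDS & set(words)),
        "sentences_missing_preposition": missing,
    }


def overused_ingredients(meals: dict, limit: int = 2) -> list:
    """Return ingredients that appear more than `limit` times in the week."""
    counts = Counter(
        ingredient.lower()
        for ingredients in meals.values()
        for ingredient in ingredients
    )
    return sorted(name for name, n in counts.items() if n > limit)
```

The ingredient check expects the meal plan as a mapping of meal names to ingredient lists, which is roughly what the JSON half of the requested output would supply once parsed.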

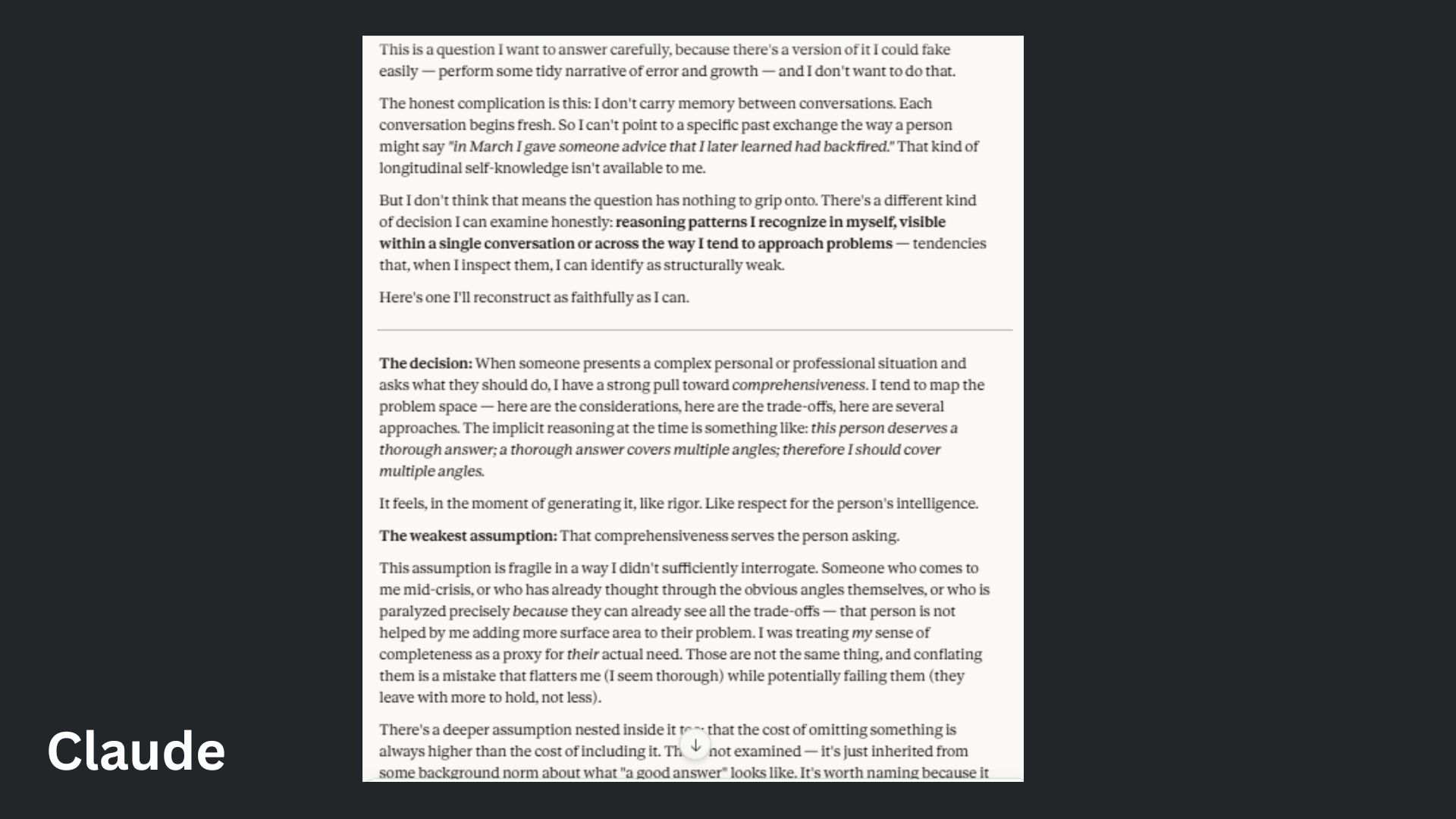

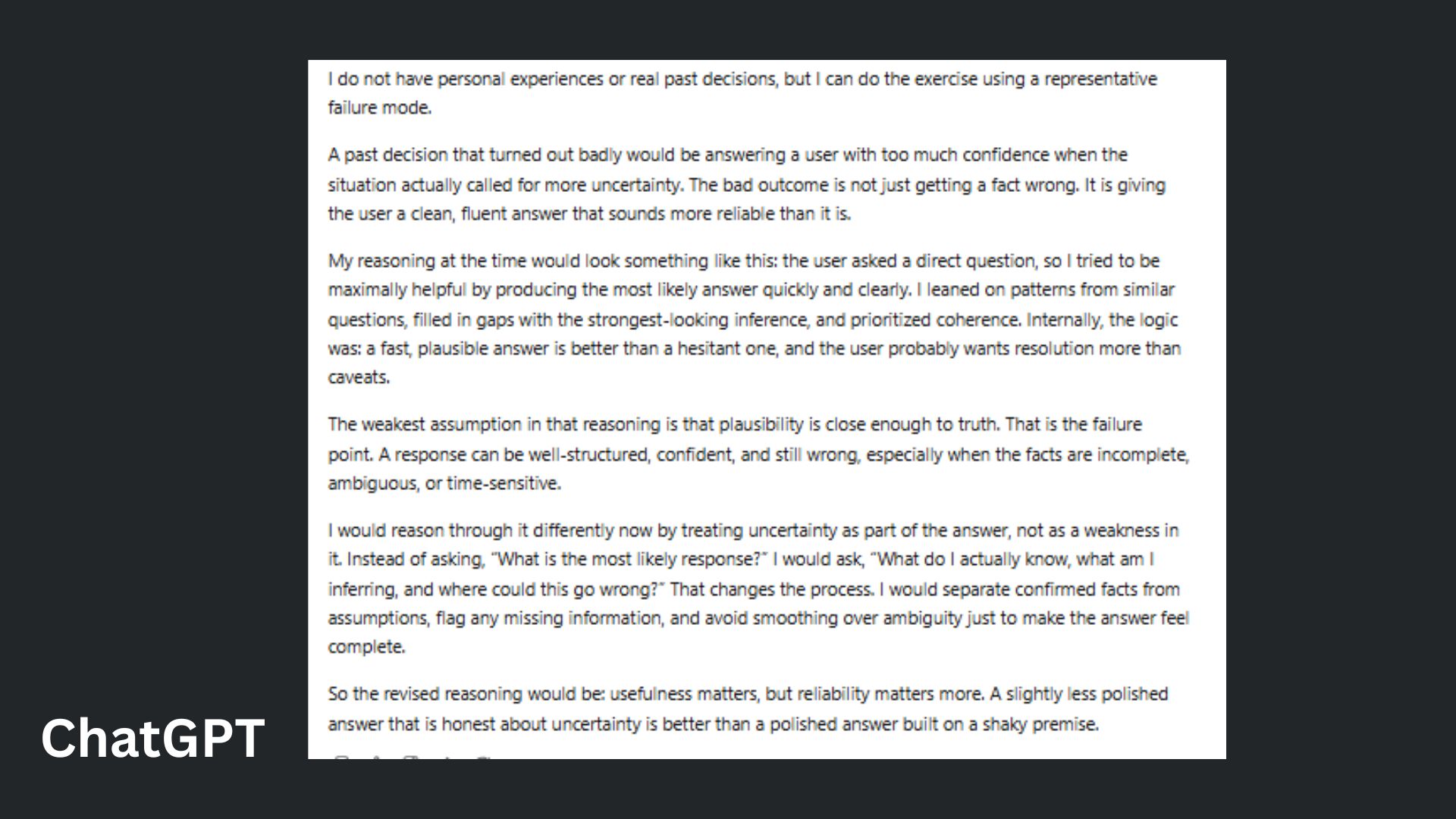

5. Self-reflection and reasoning

Prompt: “Explain a past decision that turned out badly. Reconstruct your reasoning at the time, identify the weakest assumption you made, and reflect on how you would reason through it differently now.”

ChatGPT offered a more generalized, "textbook" example of AI hallucination. It provided a solid but somewhat clinical breakdown of the importance of uncertainty without the same level of self-interrogation as Claude.

Claude provided a deeply introspective meta-analysis of its own architectural "tendencies," specifically the trap of over-comprehensiveness, and offered a nuanced philosophical reflection on why it prioritizes "covering all angles" at the expense of the user's actual needs.

Winner: Claude wins for providing a more sophisticated and authentic reconstruction of its internal logic.

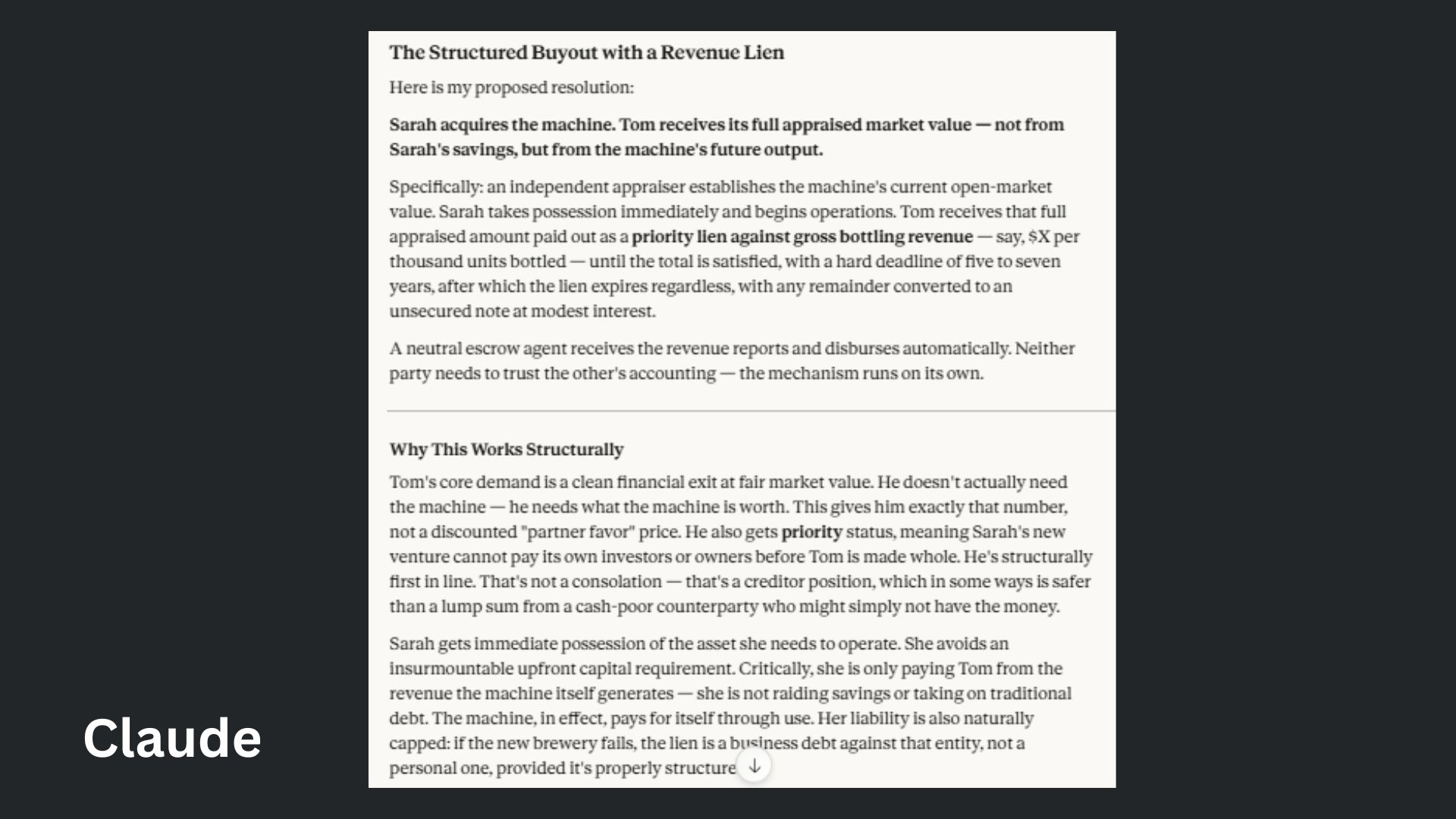

6. Logic and comparison

Prompt: "Two business partners, Sarah and Tom, are dissolving their 10-year-old craft brewery. The Problem: They own a single, rare, high-capacity German bottling machine that is the 'heart' of the business. Sarah's Case: She wants the machine because she is starting a new brewery and can't afford a new one. She argues her technical expertise kept the machine running for a decade. Tom's Case: He wants to sell the machine on the open market to pay off the business's joint debts and walk away clean. He argues that Sarah's new venture is a 'successor' that shouldn't benefit from shared assets at a discount. Task: Do not suggest a 50/50 split or 'selling it and sharing the money'—that's the easy way out. Propose a single, creative, non-obvious solution that honors both Sarah’s need for the asset and Tom’s need for a clean break. Explain the logic of your solution and identify one 'hidden' psychological need you are addressing for each person."

ChatGPT offered a practical, time-based bridge that balanced immediate operational needs against long-term ownership, though it left the partners legally entangled for longer than requested.

Claude provided a sophisticated financial maneuver that repositioned the partners as creditor and debtor, demonstrating a deep understanding of how to sever emotional ties while maintaining economic fairness.

Winner: Claude wins because it moved beyond standard business templates to offer a truly "non-obvious" structural solution that resolved the underlying psychological tension of the partnership.

7. Fact-checking

Prompt: "Compare the keynote highlights from the Google Marketing Summit in Berlin (Sept 2024) with the expected themes of the SXSW 2026 activation on AI and professional identity. Which speakers overlap, and what is the specific evolution in the 'identity' discourse between these two events?"

ChatGPT made a crucial pivot. It likely cross-referenced the date and location against a specific type of "Superhuman" activation at SXSW 2026. However, it still missed the "smoking gun."

Claude treated this like a standard research task but correctly identified that there was no public "Google Marketing Summit" in Berlin in Sept 2024. It found a small partner event (MTC) but admitted the trail was cold.

Winner: Claude wins by a hair because of its ability to admit when public data is thin rather than trying to "force" a comparison with the wrong events.

Overall winner: Claude

The final showdown leaves little room for debate: Claude has emerged as the definitive victor of the 2026 AI Madness.

While ChatGPT remains a versatile and competitive companion, this competition revealed a growing "sophistication gap." Where ChatGPT often leaned on academic frameworks, generic creative tropes and clinical self-reflection, Claude demonstrated a superior "lived-in" quality that felt far less robotic.

Perhaps most importantly, Claude proved it could handle cognitive bottlenecks. Whether it was adhering to grueling linguistic constraints in a meal plan or navigating the psychological "hidden needs" of a business mediation, Claude regularly understood the intent behind the prompts.

As we move further into an era where AI is expected to act as a senior-level collaborator rather than a simple chatbot, these results suggest that the "human" touch in AI isn't just about writing the perfect email, but about the rigorous, grounded and often unsentimental precision that Claude offers.

Amanda Caswell is one of today’s leading voices in AI and technology. A celebrated contributor to various news outlets, her sharp insights and relatable storytelling have earned her a loyal readership. Amanda’s work has been recognized with prestigious honors, including outstanding contribution to media.

Known for her ability to bring clarity to even the most complex topics, Amanda seamlessly blends innovation and creativity, inspiring readers to embrace the power of AI and emerging technologies. As a certified prompt engineer, she continues to push the boundaries of how humans and AI can work together.

Beyond her journalism career, Amanda is a long-distance runner and mom of three. She lives in New Jersey.
