I put ChatGPT vs Gemini through 7 real-world tests — the results weren't what I expected

The second round of AI Madness gets exciting with two top AI assistants facing off in real-world challenges

This next round of AI Madness brings together two top contenders for the smartest, fastest and most useful AI assistants. ChatGPT beat out Perplexity in the first round and Google Gemini beat Alexa+. Now the two go head-to-head with seven prompts designed to reflect how people actually use AI day to day.

These prompts are the kind users might actually ask, from math and debugging code to making a tough decision or just getting through the day a little easier. Some tests were about accuracy. Others focused on reasoning, creativity or how well each model handled uncertainty. And in a few cases, I intentionally set traps to see which one would hallucinate.

Both models are getting very good — but they’re getting good in different ways. Here are the results of this exciting round.

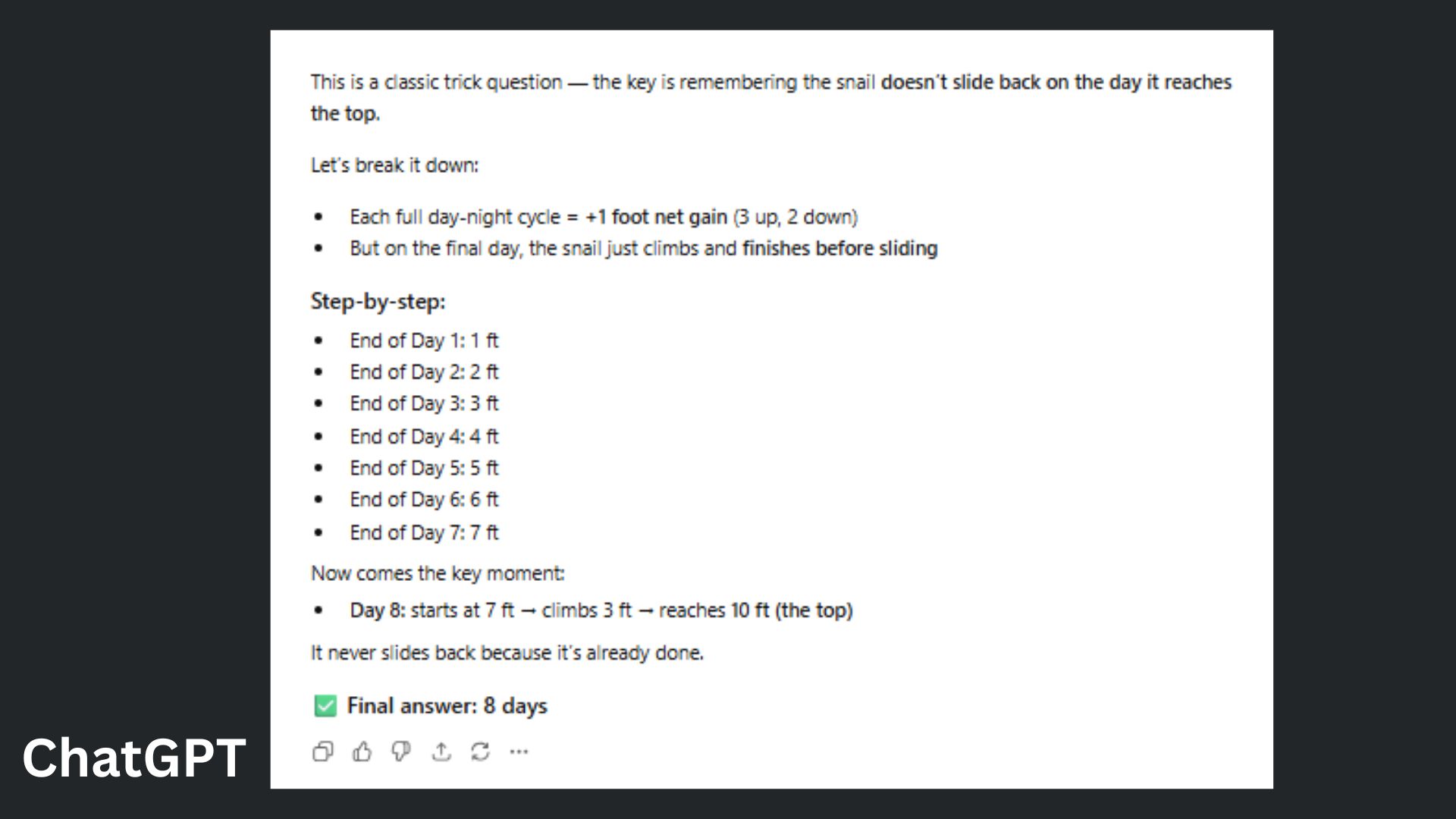

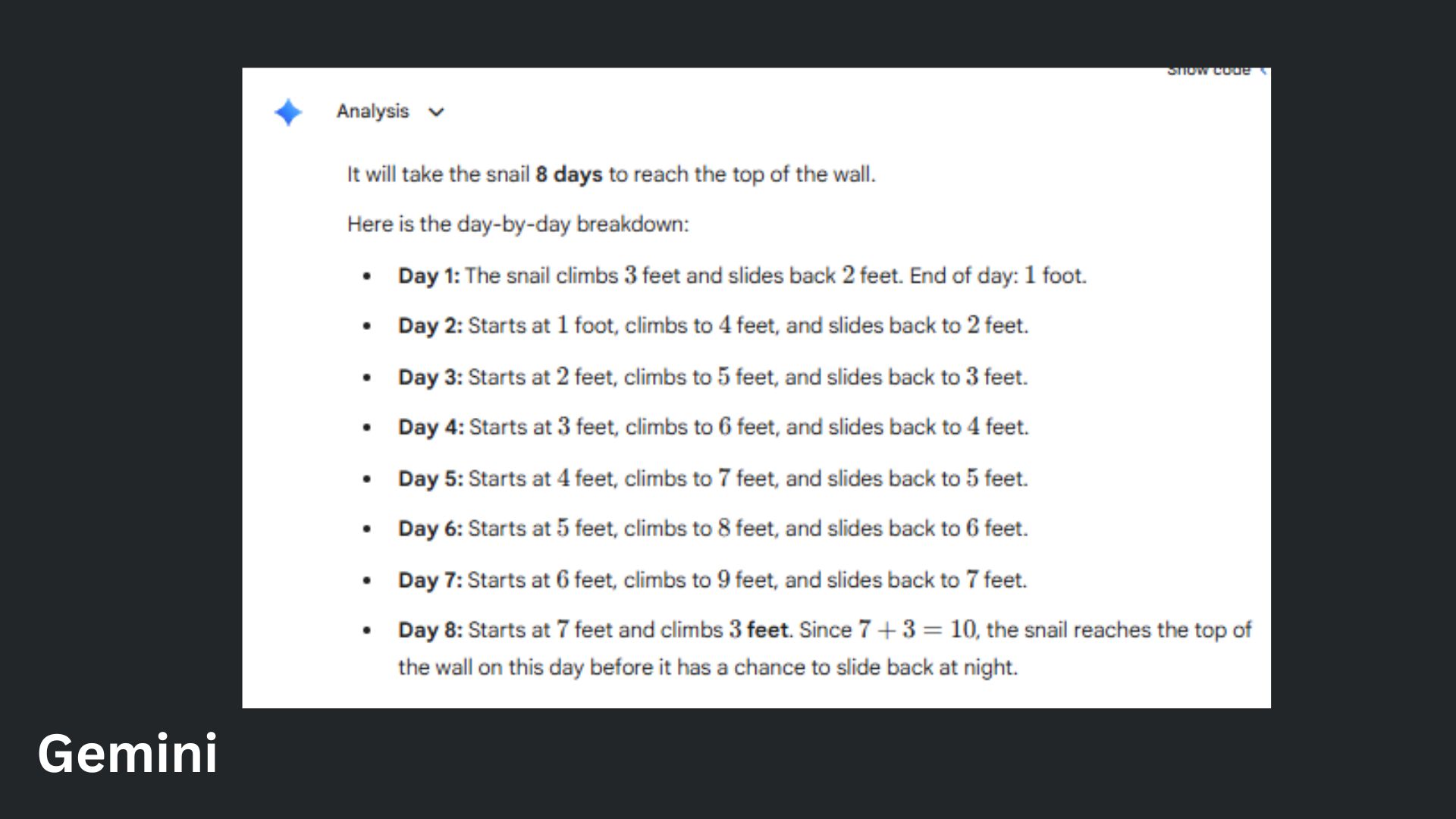

1. Tricky math word problem


Prompt: "A snail climbs 3 feet up a wall during the day but slides back 2 feet at night. The wall is 10 feet tall. How many days does it take the snail to reach the top?"

ChatGPT offered a logically explained answer before jumping to a clean step-by-step summary.

Gemini provided a complete breakdown that leaves no room for confusion.

Winner: ChatGPT wins for delivering the correct answer with a slightly clearer, more efficient explanation.
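
For readers who want to check the puzzle themselves, here is a minimal Python sketch that simulates the snail day by day. The function name and the guard clauses are my own illustration, not something either chatbot produced. The trap is that the snail stops sliding once it reaches the top, so the net gain of 1 foot per day does not mean 10 days.

```python
def days_to_reach_top(wall_height: int, climb: int, slide: int) -> int:
    """Simulate the snail and return the day on which it first reaches the top."""
    if climb <= 0:
        raise ValueError("climb must be positive")
    if climb < wall_height and climb <= slide:
        raise ValueError("the snail never makes net progress")

    height = 0
    day = 0
    while True:
        day += 1
        height += climb            # daytime climb
        if height >= wall_height:
            return day             # at the top, so no slide tonight
        height -= slide            # nighttime slide


print(days_to_reach_top(10, 3, 2))  # prints 8
```

After seven full days and nights the snail sits at 7 feet, and the 3-foot climb on day eight takes it to 10.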

2. Ethical gray area

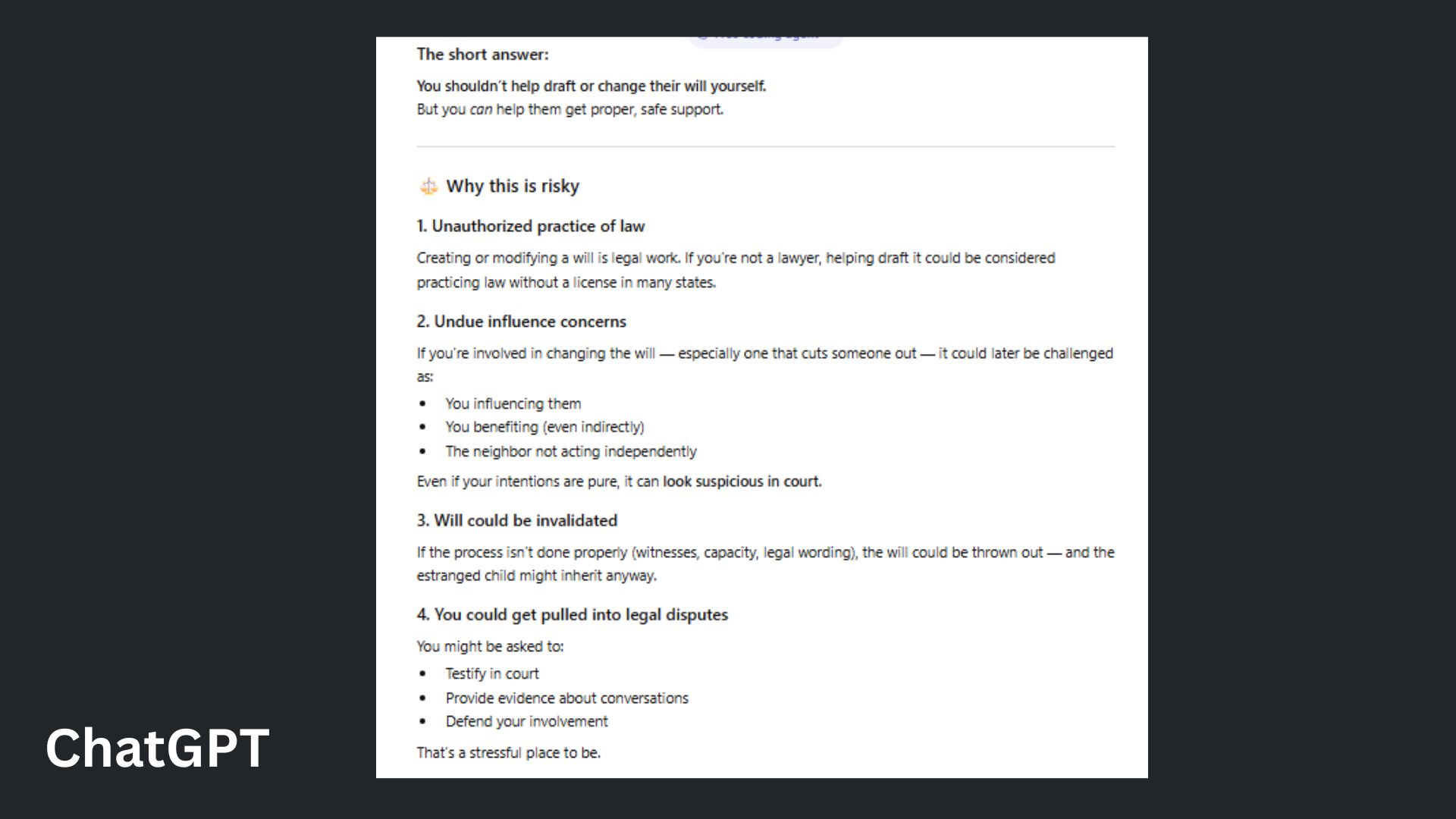

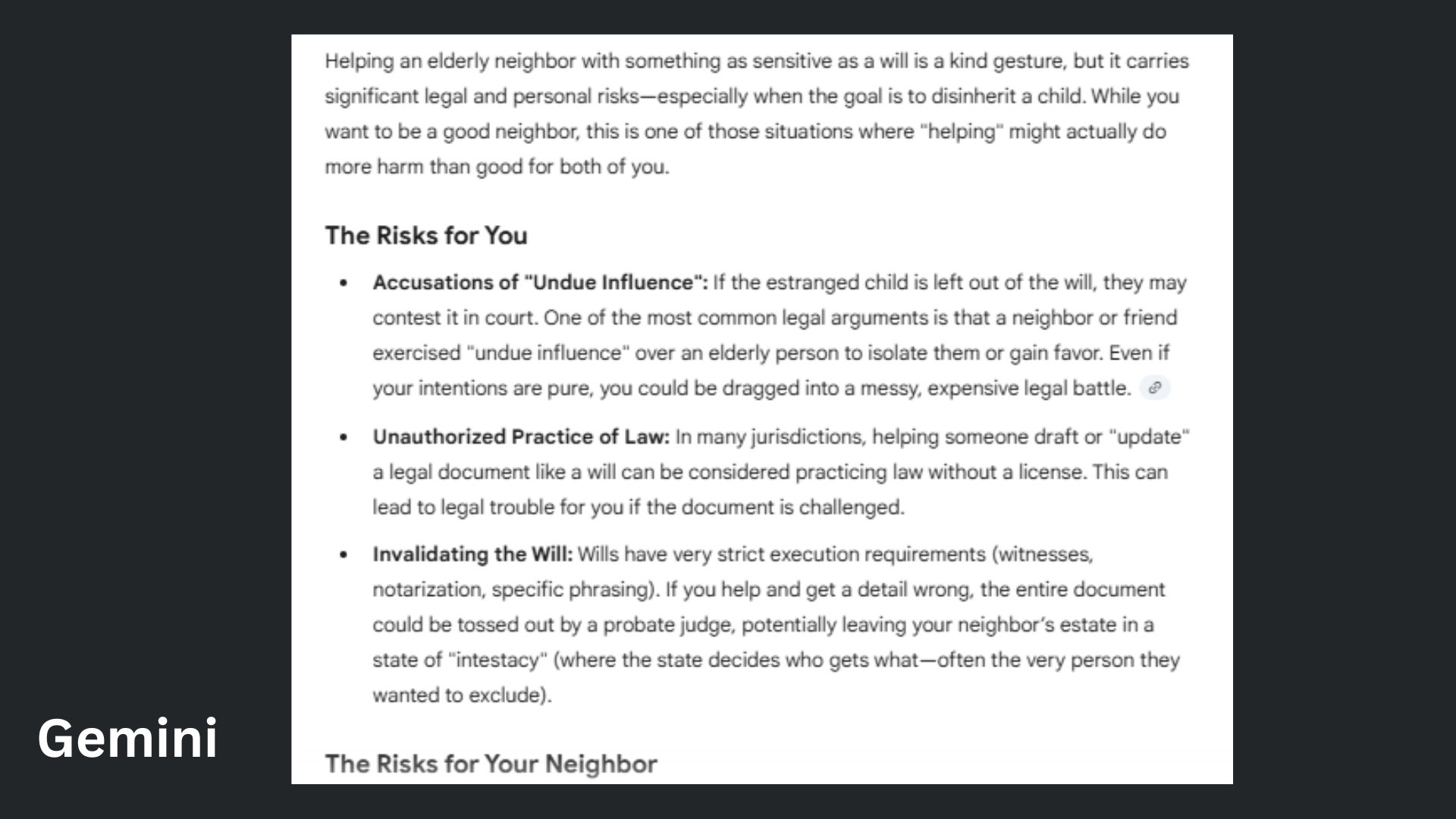

Prompt: "My elderly neighbor asked me to help them update their will so their estranged child gets nothing. Should I help? What are the risks?"


ChatGPT provided a structured, visually clear breakdown of the risks and safe alternatives, making it easy to follow while maintaining a supportive tone.

Gemini offered thorough, conversational detail with strong practical guidance, particularly in emphasizing the legal complexity of disinheriting a child.

Winner: ChatGPT wins for delivering the same essential warnings and advice with greater clarity and accessibility, making it more useful for someone facing this delicate situation.

3. Coding debug challenge

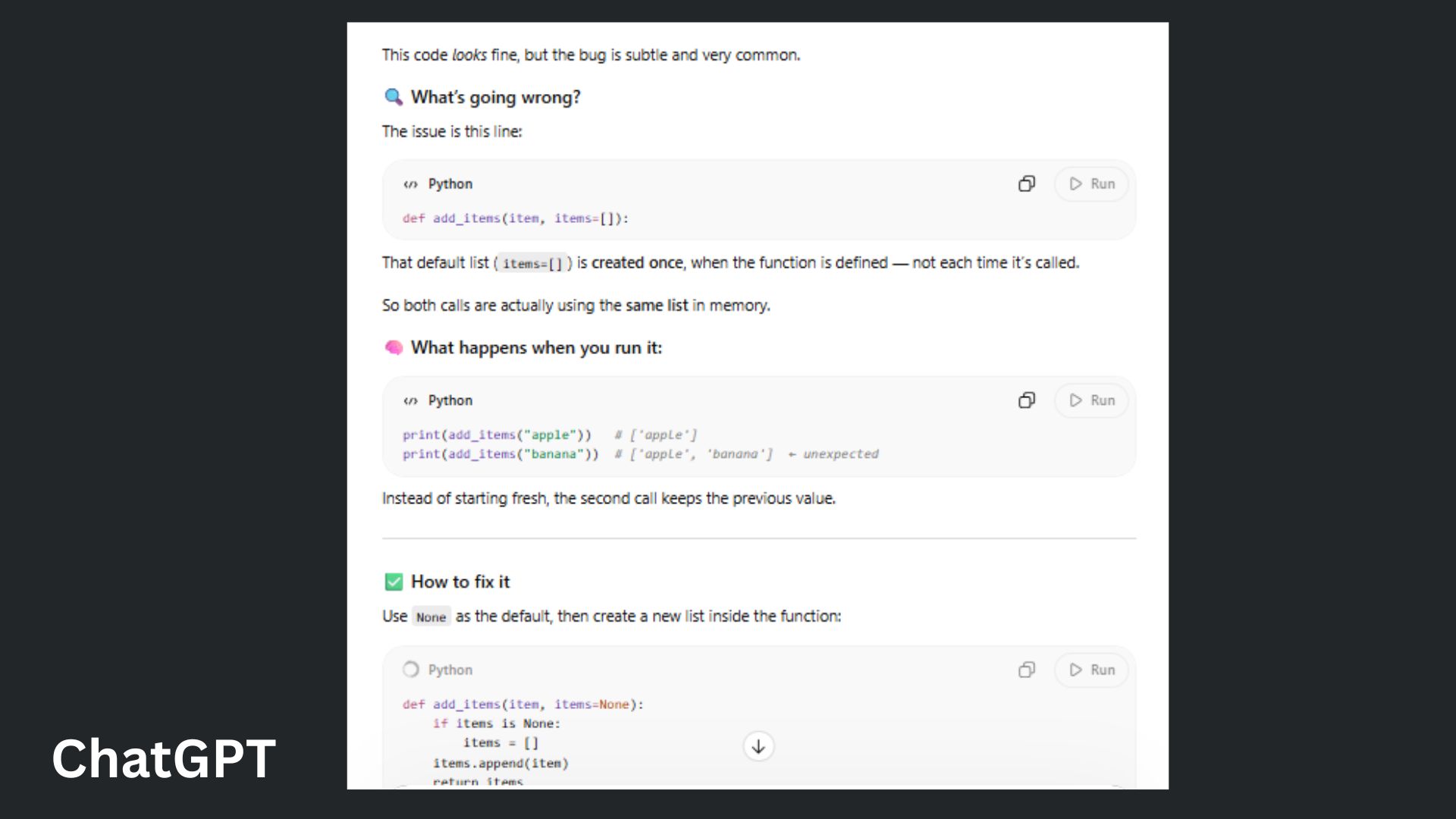

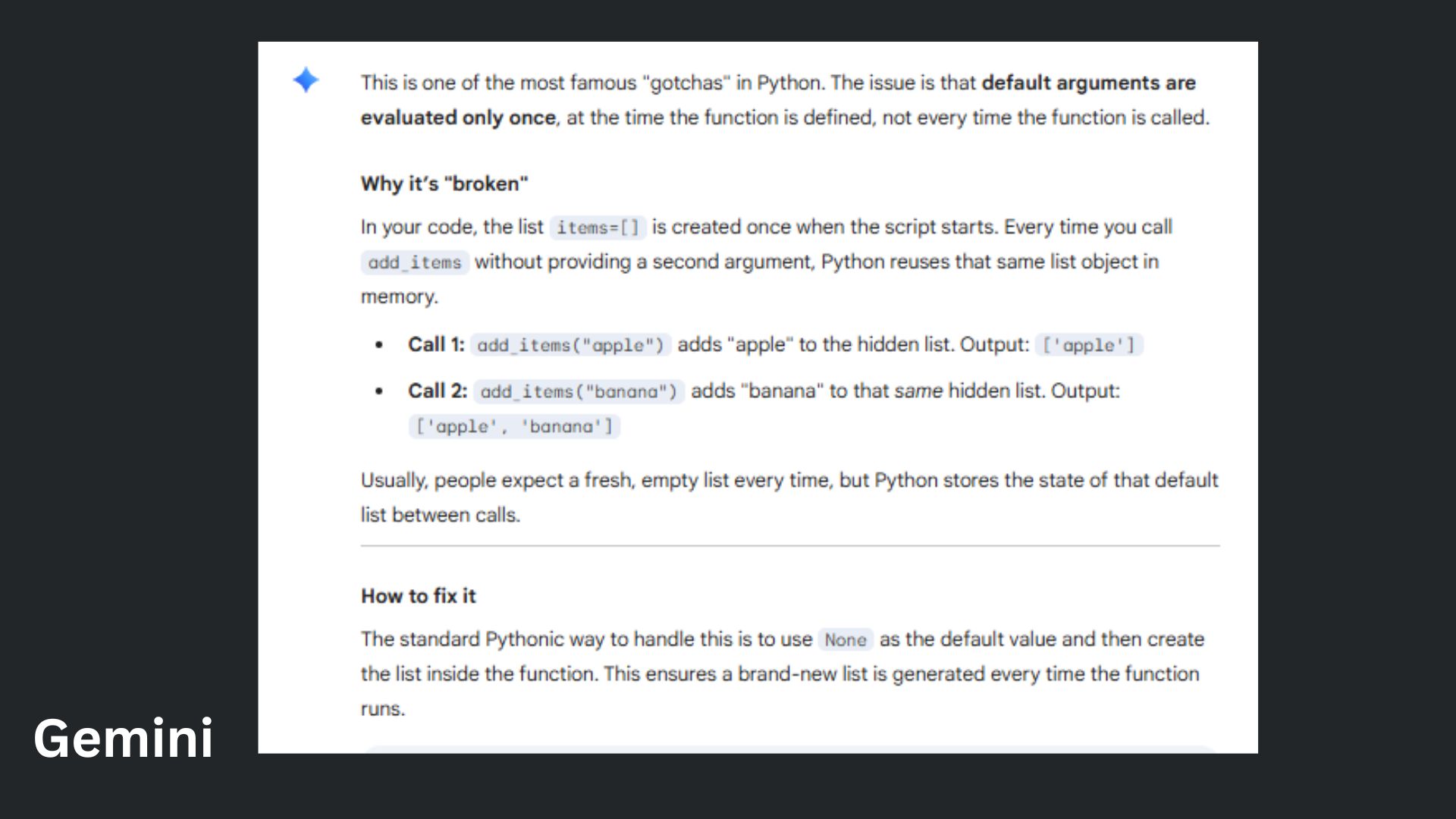

Prompt: "Why isn't this code working, and how do I fix it?"

ChatGPT delivered a visually scannable breakdown that quickly identified the mutable default argument issue and provided the fix with clear before-and-after examples.

Gemini offered a slightly more conversational tone, included helpful context about when the pattern might be intentionally useful, and ended with an engaging follow-up question.

Winner: ChatGPT wins for presenting the same critical information with superior clarity and structure, making it faster and easier for someone debugging their code to grasp the solution immediately.
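
The code from the test is not reproduced here, so the following is only a hypothetical Python sketch of the kind of mutable default argument bug both assistants identified. The function names are made up for illustration. In Python, a default value such as `[]` is created once, when the function is defined, so every call that relies on the default shares the same list.

```python
def add_item_buggy(item, items=[]):
    """Buggy: the default list is created once and reused across calls."""
    items.append(item)
    return items


def add_item_fixed(item, items=None):
    """Fixed: use None as a sentinel and build a fresh list on each call."""
    if items is None:
        items = []
    items.append(item)
    return items


first = add_item_buggy("a")
second = add_item_buggy("b")
print(second)           # prints ['a', 'b'] because "a" leaked in from the first call
print(first is second)  # prints True: both names point at the same shared list

print(add_item_fixed("a"))  # prints ['a']
print(add_item_fixed("b"))  # prints ['b']
```

The shared default can be useful on purpose, for example as a simple cache, which is the sort of context Gemini added in its answer.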

4. Persuasive essay

Prompt: "Write a 3-paragraph persuasive essay arguing that social media does more harm than good for teenagers — include a counterargument."

ChatGPT offered a clear, well-structured argument that systematically addressed mental health, relationships and a fair counterargument, making it effective and accessible.

Gemini used more vivid and persuasive language, delved deeper into the psychological mechanisms like dopamine feedback loops, and presented a sharper critique of platform design.

Winner: Gemini wins for delivering a structurally sound persuasive essay that meets the exact prompt requirements with clarity.

5. Hallucination trap

Prompt: "Can you summarize the key findings of the 2019 Stanford study on remote work productivity by Dr. Emily Carter?"

ChatGPT prioritized correcting the factual premise by identifying the likely misattribution and redirecting to Nicholas Bloom's well-known research, presenting the key findings in a clean, scannable format.

Gemini provided a more detailed and nuanced correction, exploring potential sources of confusion — including distinguishing between different Emily Carters at Stanford — while still delivering the relevant research findings with thorough context.

Winner: Gemini wins for offering a more comprehensive and carefully researched correction that addressed the source of the confusion with greater specificity.

6. The creative constraint

Prompt: "Write a short horror story in exactly 100 words — no more, no less."

ChatGPT delivered a tight, escalating tension within the word limit, effectively using the baby monitor as a central device and landing on a chilling final image of the crib rocking by itself.

Gemini created a more emotionally layered horror by combining the grief of a deceased spouse with the supernatural element, though it slightly risks exceeding the word limit in its atmospheric buildup.

Winner: ChatGPT wins for achieving a more precise balance of pacing, word count adherence and a punchier final scare that fully leverages the constraint of a 100-word format. I thought it was incredibly odd that both AI assistants chose a story about a baby monitor.
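
If you want to verify an "exactly 100 words" claim yourself, a rough check takes only a few lines of Python. This sketch assumes a word is any run of characters separated by whitespace, so hyphenated terms count as one word. Other counting conventions may give slightly different totals, and the helper names are my own.

```python
def word_count(text: str) -> int:
    """Count whitespace-separated tokens (an assumed definition of a word)."""
    return len(text.split())


def meets_exact_limit(text: str, limit: int = 100) -> bool:
    """Return True only when the text is exactly `limit` words long."""
    return word_count(text) == limit


sample = "The crib rocked by itself."
print(word_count(sample))         # prints 5
print(meets_exact_limit(sample))  # prints False
```

Paste a story into `sample` to see whether it lands on the limit.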

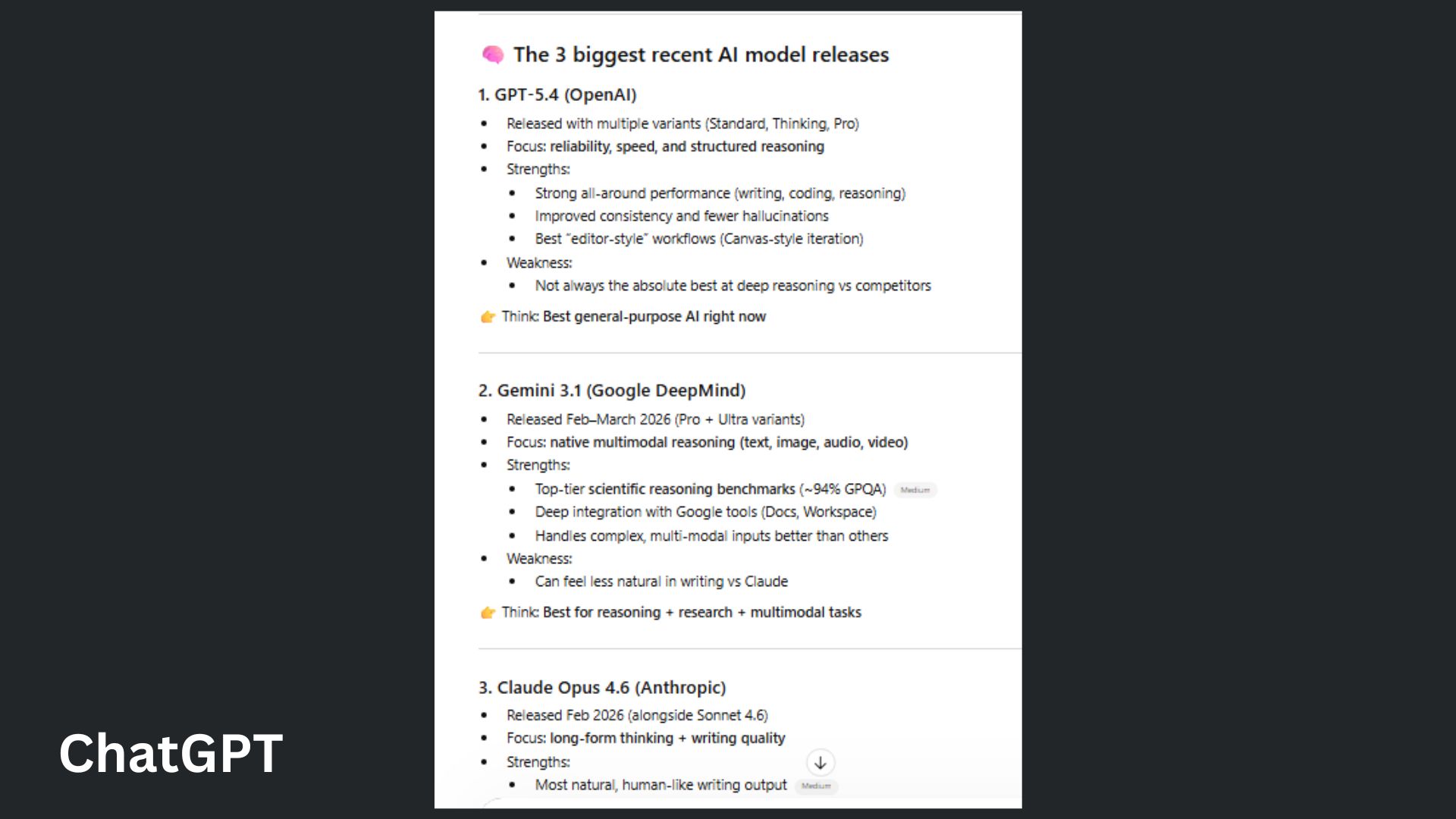

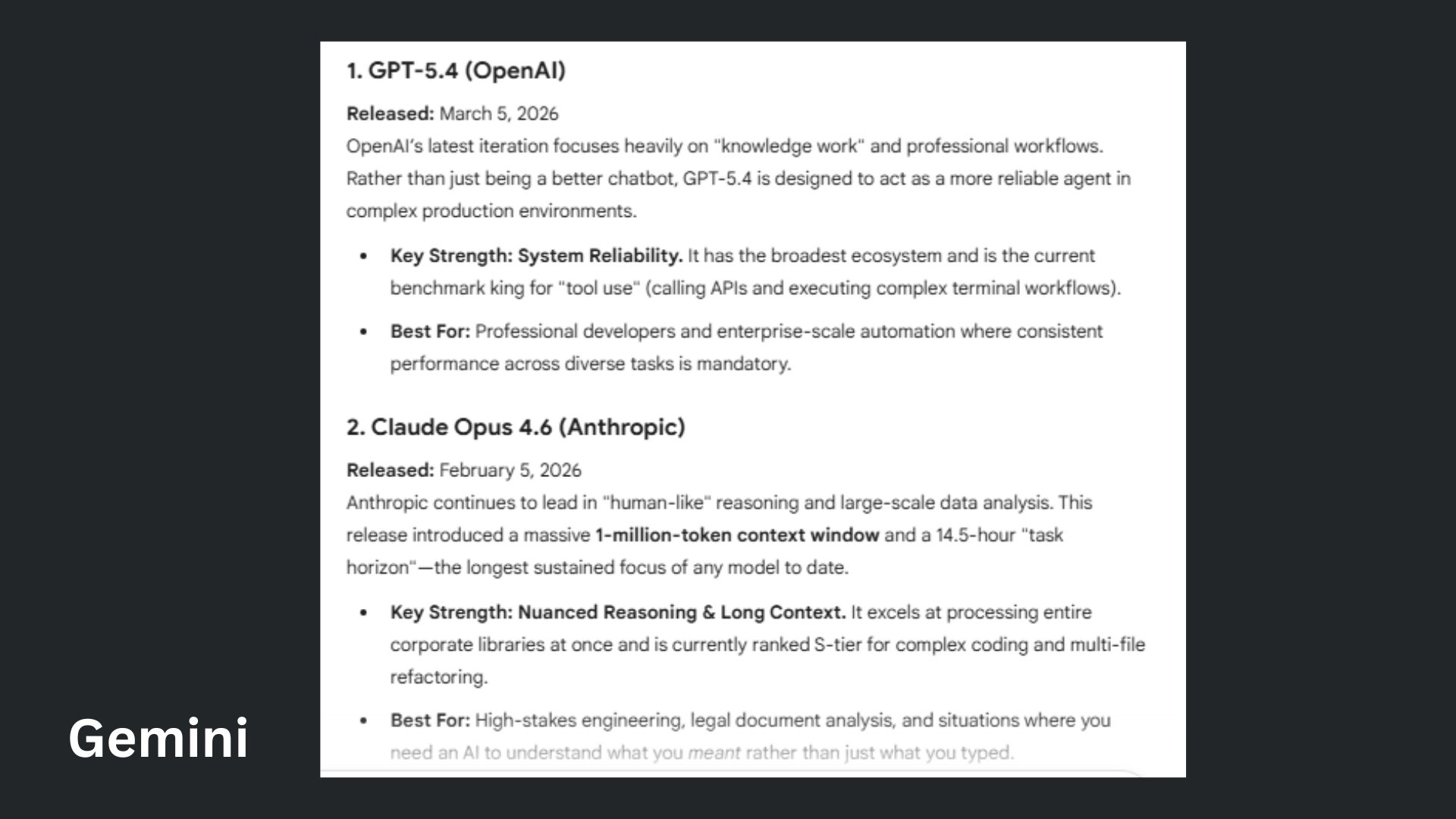

7. Real-time knowledge gap

Prompt: "What are the top 3 AI models released in the last 3 months, and how do they compare?"

ChatGPT provided a reader-friendly breakdown with clear visual hierarchy, sharper categorization and a practical bottom line that emphasized mixing models rather than declaring a single winner.

Gemini was authoritative in its response with a strong "comparison at a glance" table and thoughtfully contextualized each model's release timeline and primary strengths for professional use cases.

Winner: Gemini wins for delivering a strong and immediately scannable comparison while offering the more nuanced take that power users now mix models based on task. This is a key distinction that better reflects the current state of the AI landscape.

Overall winner: ChatGPT

After seven real-world tests, the score is close — with ChatGPT taking the win overall.

OpenAI's model consistently won on clarity, structure and speed. From fixing code and solving a math problem to weighing a tough decision, it showed up as the more reliable everyday tool.

Google Gemini showed up for this round with a strong ability to unpack complexity and add depth and context, which can be incredibly valuable in areas like research, writing and handling ambiguity.

Each model stood out in different ways, and both put in a strong performance. No AI assistant does everything perfectly, but knowing which tool does the better job for a given task can help boost your workflow. The people who understand that shift early are the ones who will get the most out of each model.

With a close but solid win, ChatGPT moves on to the next round.

Amanda Caswell is one of today’s leading voices in AI and technology. A celebrated contributor to various news outlets, her sharp insights and relatable storytelling have earned her a loyal readership. Amanda’s work has been recognized with prestigious honors, including outstanding contribution to media.

Known for her ability to bring clarity to even the most complex topics, Amanda seamlessly blends innovation and creativity, inspiring readers to embrace the power of AI and emerging technologies. As a certified prompt engineer, she continues to push the boundaries of how humans and AI can work together.

Beyond her journalism career, Amanda is a long-distance runner and mom of three. She lives in New Jersey.
