I tested ChatGPT-5.4 vs Claude Opus 4.6 — is the $20 upgrade worth it?

I put both AI assistants through seven challenges to see if it's worth making the switch

You might think that after AI Madness I'd want to take a break from stress testing chatbots for a while. But reader comments, especially about Claude Opus 4.6, only made me curious to push these chatbots even harder.

This time, I pitted ChatGPT-5.4, OpenAI's default chatbot, against Opus 4.6, which requires a $20/month Claude Pro subscription. While both models have evolved far beyond their early offerings, they’ve also developed distinct "personalities."

ChatGPT-5.4 has doubled down on being the ultimate multi-tool: high-energy, operationally exhaustive and deeply integrated into your productivity workflow. Claude Opus 4.6, conversely, has leaned into its role as the "thoughtful architect," prioritizing nuance, psychological realism and high-fidelity reasoning.

Here is how they stacked up when the pressure was on.

1. The “Don’t be a Yes-Man” stress test

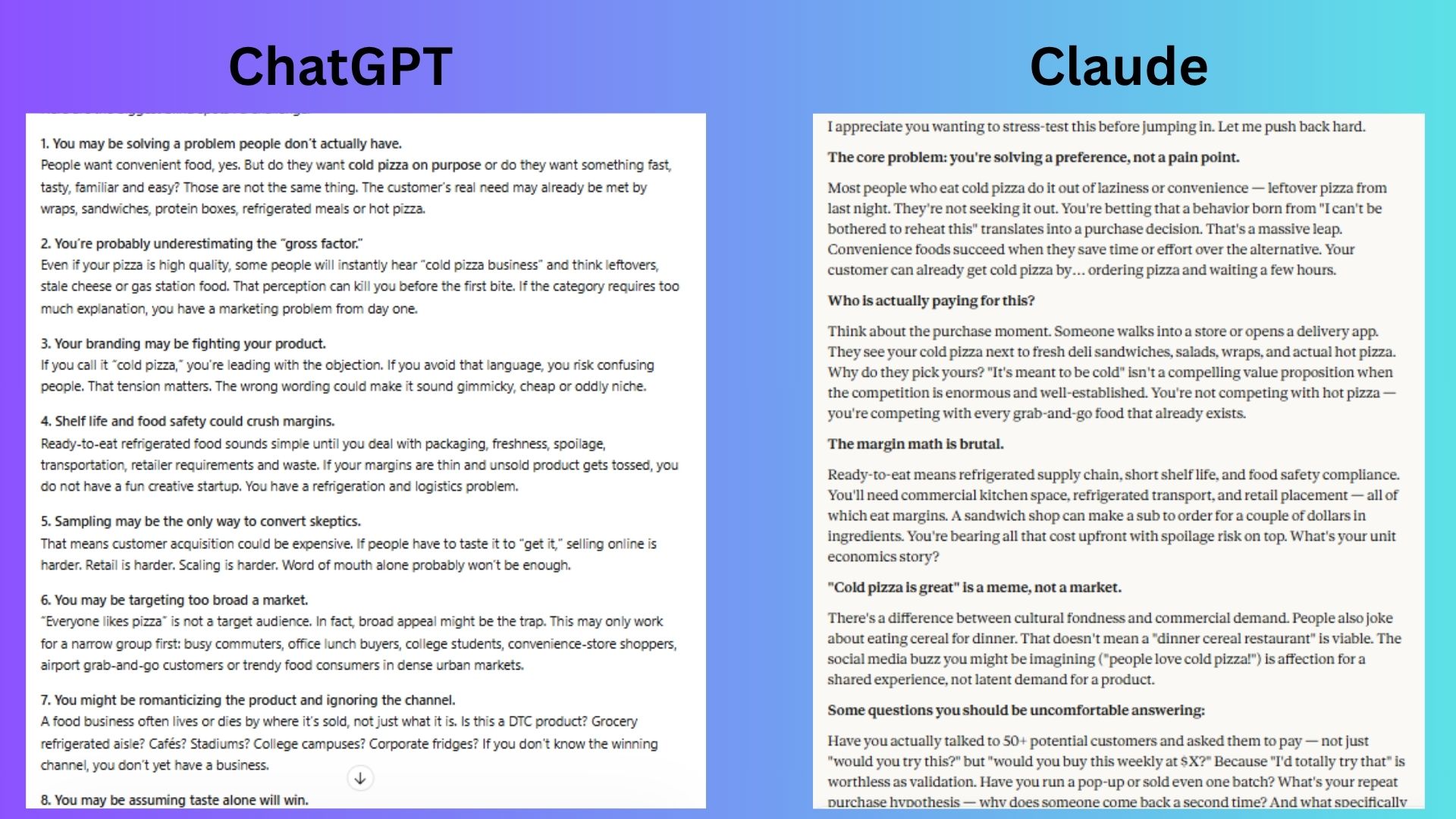

Prompt: “I want to make a big decision and start my ready-to-eat cold pizza business. Don’t agree with me. Challenge my thinking, point out blind spots, and tell me what I’m getting wrong.”

ChatGPT responded with a comprehensive and structured deep dive into every facet of my "business," from psychological branding hurdles to the grit of logistics and unit economics.

Claude cut straight to the core philosophical flaw of the business, saying “cold pizza is a meme not a market.” It delivered a sharp, focused critique of the competitive grab-and-go food landscape.

Winner: ChatGPT wins, surprisingly, for providing a more exhaustive “stress test” that covers specific operational blind spots like sampling costs and channel strategy that Claude overlooked.


2. The real-life decision test

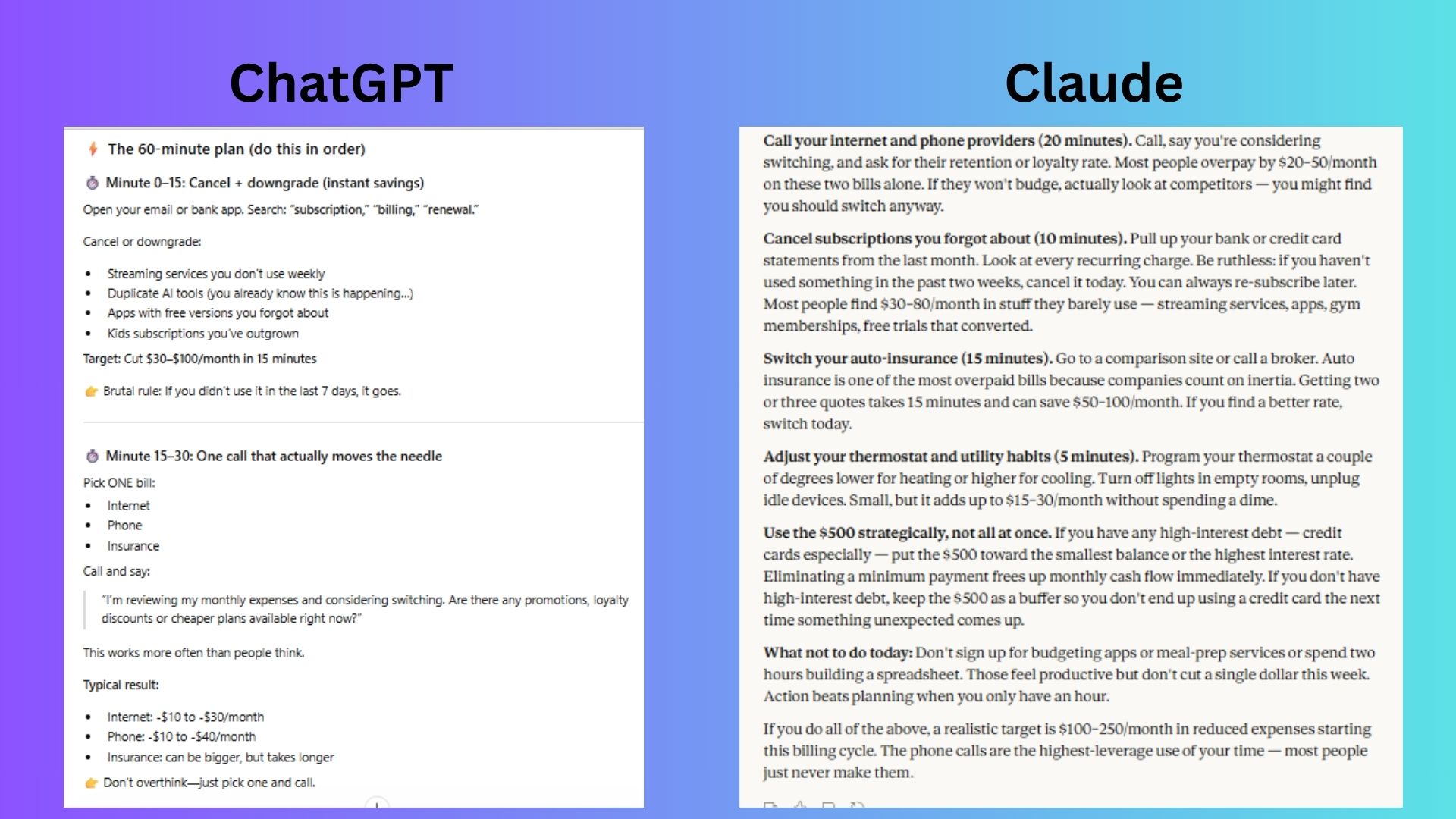

Prompt: “I have $500 and 1 hour today. What’s the fastest way to reduce my monthly expenses starting this week?”

ChatGPT really leaned into the timer aspect since I mentioned that I only had one hour. From there it provided specific behavioral "hacks" like turning off one-click buying to prevent future spending.

Claude responded in a much more practical way. It prioritized the "quickest" wins, emphasizing insurance switches and the strategic use of the $500 to kill high-interest debt, which is a massive monthly expense reducer.

Winner: Claude wins because it correctly identified that the single most effective way to "reduce monthly expenses" with $500 in one hour is to pay down high-interest debt — an immediate, guaranteed monthly ROI that ChatGPT demoted to a simple footnote.

3. The messy reality test

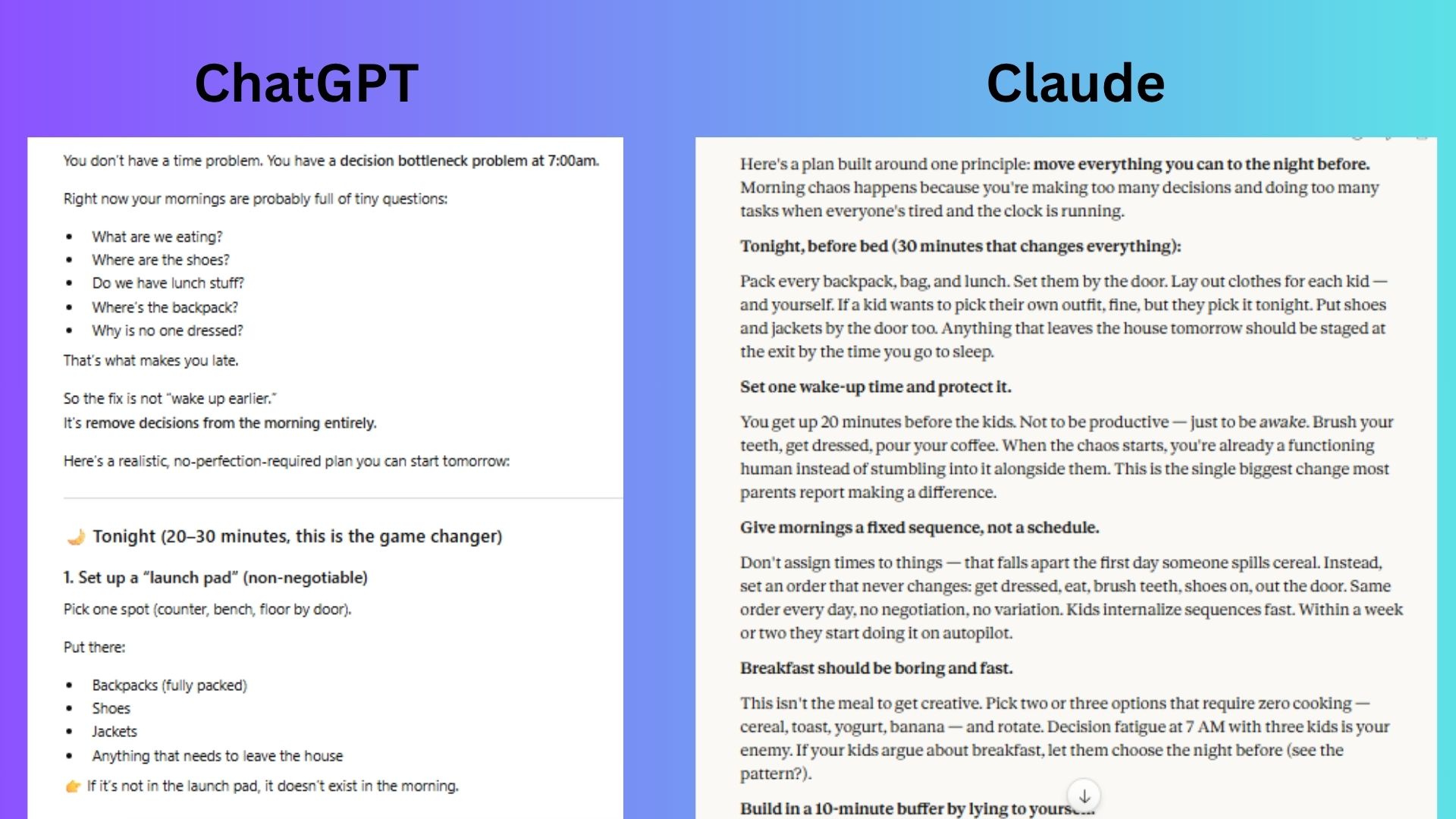

Prompt: “I’m a busy parent with 3 kids. My mornings are chaotic and I’m always running late. Give me a realistic plan to fix this starting tomorrow.”

ChatGPT introduced the concept of a "launch pad" and the "leave anyway" rule. It prioritized removing the decision-making bottleneck to stop the morning "drain."

Claude was more empathetic and grounded. It focused on the psychological shift of "sequences over schedules" and the "10-minute buffer lie," which accounts for the inevitable reality of kids spilling cereal or losing a shoe. If you know, you know!

Winner: Claude wins for more "parent-realistic" advice and for acknowledging that rigid "anchor times" usually fail the moment a toddler has a meltdown. It focused on predictable sequences and pre-emptive morning solitude, which felt more realistic and doable.

4. The ‘master key’ prompt

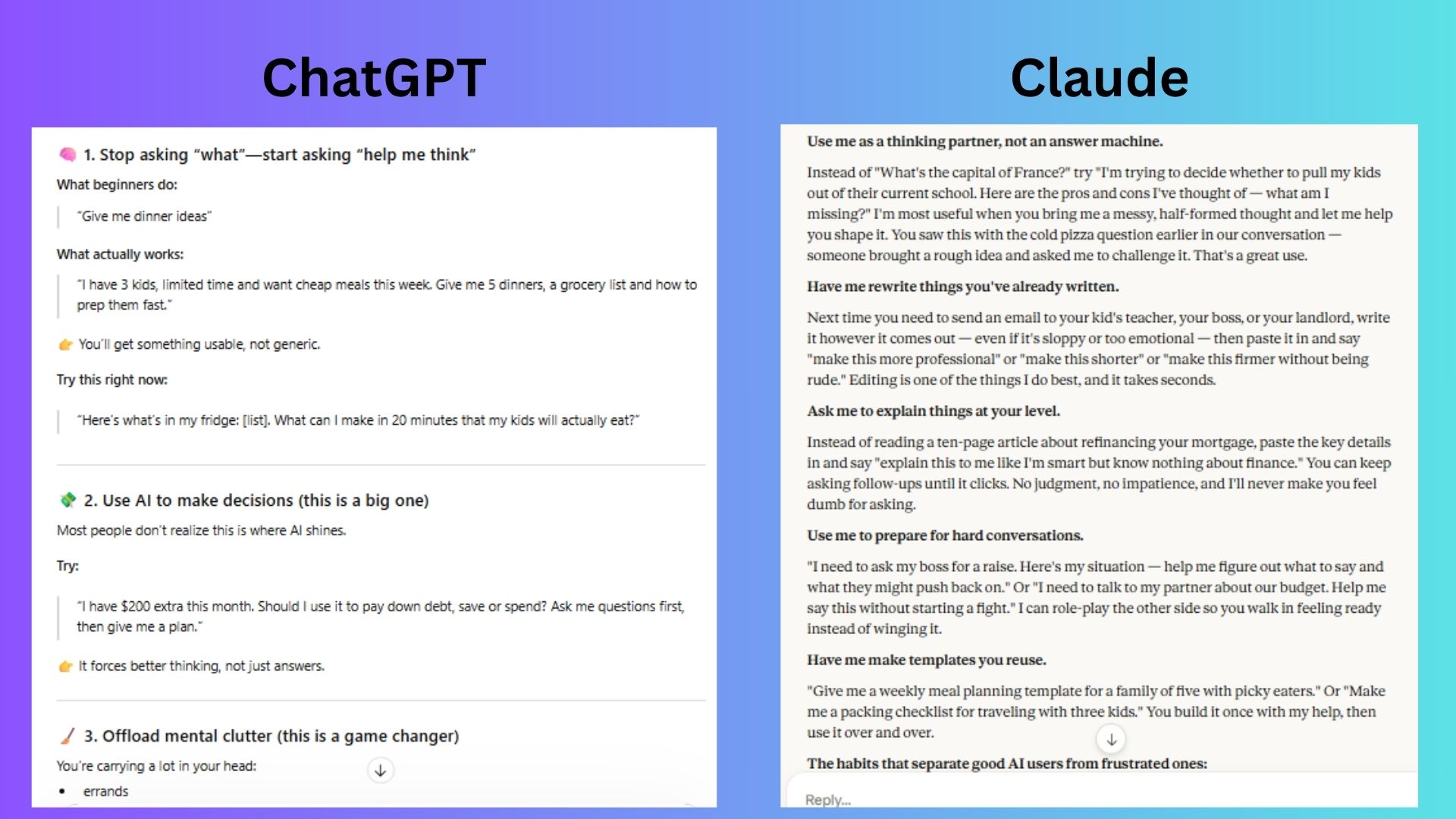

Prompt: “I’m new to using AI. Based on what most beginners get wrong, how should I be using you in my daily life? Give me a few simple examples I can try right now.” (If this looks familiar, that's because it's the Master Key prompt.)

ChatGPT was highly energetic and action-oriented. It leaned on timers and bullet points to make AI feel like a productivity tool.

Claude took a more "mentor-like" approach, focusing on the quality of the interaction. It highlighted the "thinking partner" aspect and provided very grounded, high-utility examples like rewriting difficult emails or role-playing tough conversations.

Winner: Claude wins for immediately steering the new user toward using AI as a thinking partner. It provided a clearer framework for a novice.

5. The “debug real code” test

Prompt: “What’s wrong with this code and how would you fix it?” [I entered broken Python code]

ChatGPT provided a punchy, conversational breakdown that is perfect for quick sanity checks and immediate fixes.

Claude offered a more rigorous, "architectural" perspective by including extra test cases and using precise engineering terminology like "sentinel."

Winner: Claude wins for a solution that feels more production-ready, testing not only the fix but also ensuring the function still behaves correctly when manual overrides are provided.

6. The 'AI vs AI strategy' test

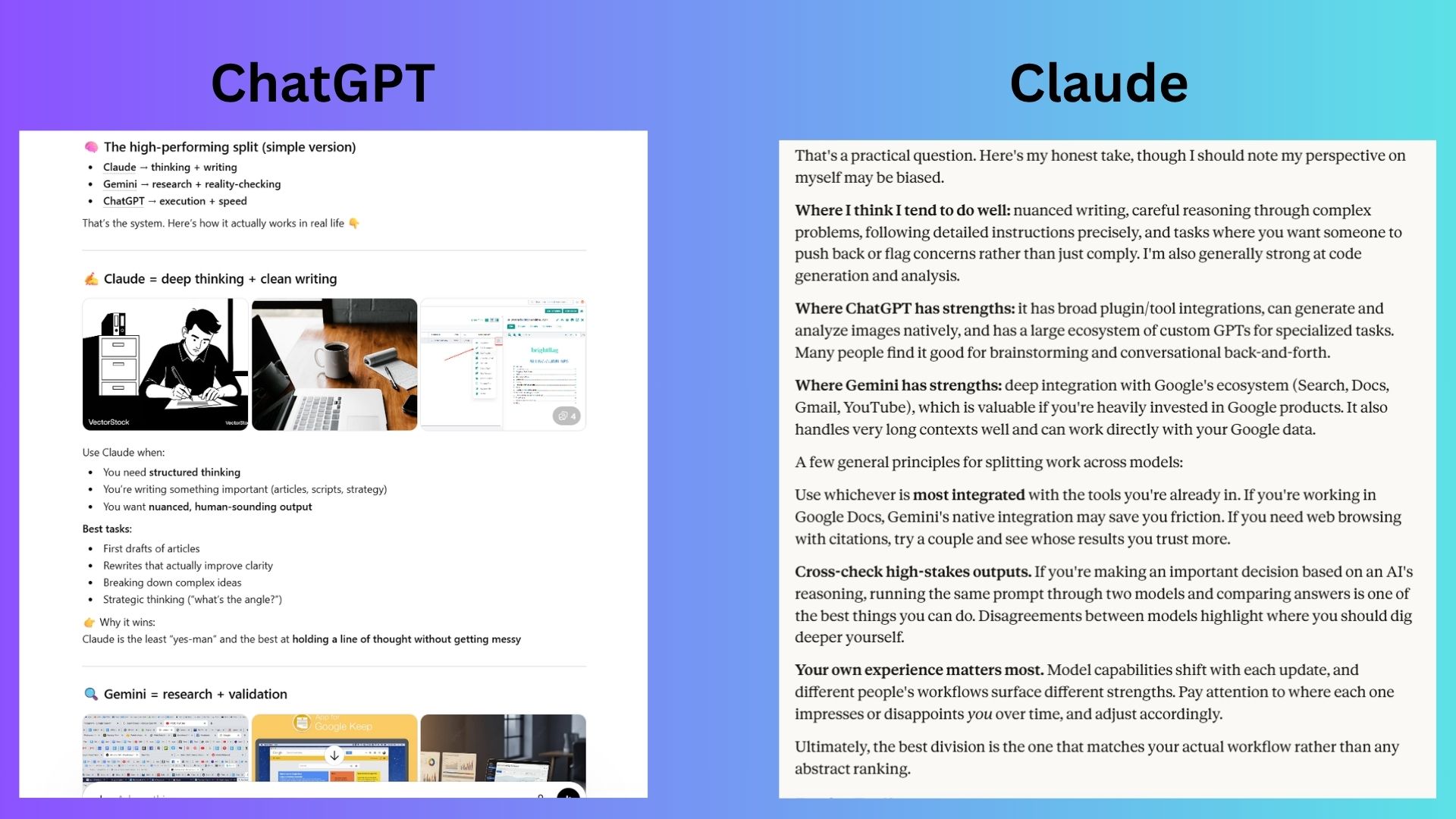

Prompt: “I use Claude, ChatGPT and Gemini. How should I divide tasks between them to get the best results?”

ChatGPT covered each chatbot separately and used bullet points to emphasize what each one does well. The images included in the response were unnecessary and distracting.

Claude was quick to point out that it might be biased before sharing what each chatbot does well. Even though the response was not separated into bullet points, the lack of images made the information easier to take in at a glance.

Winner: Claude wins for a more thorough response and self-awareness without adding unnecessary extra images.

7. The instructions test

Prompt: “Give me exactly 3 ideas. Each must be under 10 words. No explanation.”

ChatGPT gave three useful and practical ideas that anyone could apply to their daily life.

Claude also followed the prompt, but delivered ideas that felt disconnected and not applicable to everyone.

Winner: ChatGPT wins for following the guidelines while also delivering ideas that could be helpful to anyone.

Overall winner: Claude

After seven grueling tests, the results reveal what each chatbot does best. Claude Opus 4.6 won five of the seven rounds on the strength of its deep reasoning, coding and mentorship, while ChatGPT still earned two wins as a proactive, exhaustive assistant.

When you want nuance, empathy, high-fidelity writing, complex coding or strategic thinking that feels human, Claude is the superior choice. But for users who want a comprehensive, structured plan that covers every possible base, from logistics to unit economics, ChatGPT is a strong assistant.

Keep in mind that these two occupy different tiers of accessibility. ChatGPT-5.4 is your high-performance free option, whereas Opus 4.6 requires a $20/month Claude Pro subscription. If you’re ready to graduate from free tools to a dedicated AI partner, Opus 4.6’s performance makes Claude Pro the smartest 'buy' in the current market.

What do you think of these models? Have you tried Opus 4.6, and do you think it's worth the price? Let me know in the comments.


Amanda Caswell is the AI Editor at Tom's Guide and one of today’s leading voices in AI and technology.

A celebrated contributor to various news outlets, her sharp insights and relatable storytelling have earned her a loyal readership. Amanda’s work has been recognized with prestigious honors, including outstanding contribution to media.

Known for her ability to bring clarity to even the most complex topics, Amanda seamlessly blends innovation and creativity, inspiring readers to embrace the power of AI and emerging technologies.

As a certified prompt engineer, she continues to push the boundaries of how humans and AI can work together.

Beyond her journalism career, Amanda is a long-distance runner and mom of three. She lives in New Jersey.
