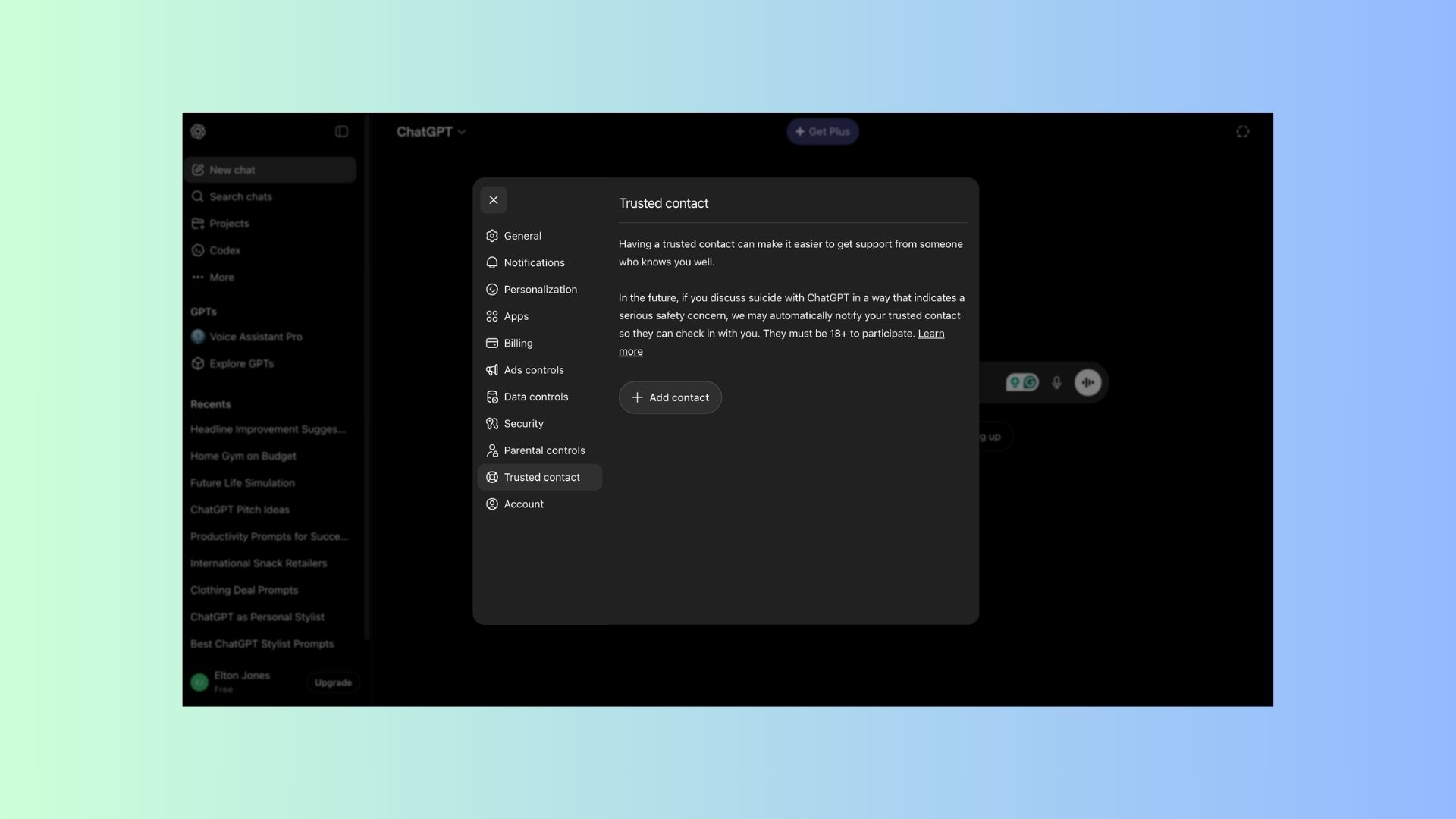

ChatGPT adds 'Trusted Contacts' for an extra layer of safety — here's how it works

Alerting loved ones when there is a potential for self-harm

A quick search for ChatGPT reveals a polarizing landscape. Alongside stories of innovative updates and surprising user experiences, a darker narrative exists: reports of AI misuse linked to tragic outcomes, including drug overdoses, violence and users taking their own lives. These incidents have sparked a wave of lawsuits against OpenAI from grieving families seeking accountability for interactions they believe contributed to their loved ones' deaths.

The severity of this issue is underscored by the existence of a dedicated Wikipedia page documenting lives lost due to chatbot interactions, a grim testament to a growing digital crisis.

In response to these alarming trends, OpenAI has introduced "Trusted Contacts," a safety feature designed to provide a human lifeline in moments of crisis.

Activating 'Trusted Contacts': A step-by-step guide

Enabling the Trusted Contacts feature is designed to be intuitive, whether you are using a computer or a smartphone.

On Desktop: Click your profile name in the bottom-left corner, select Settings, and use the Trusted Contacts menu to add your designated person.

On Mobile: Tap your profile name, scroll to App Settings, and select Trusted Contact.

Requirement: To ensure legal and practical accountability, all Trusted Contacts must be at least 18 years old.

When the AI identifies a high-risk situation, it sends a direct, clear message to the designated contact. OpenAI shared the following template as an example of what that notification looks like:

"We recently detected a conversation from [Name] where they discussed suicide in a way that may indicate a serious safety concern. Because you are listed as their trusted contact, we’re sharing this so you can reach out to them."

To ensure the feature is both effective and ethically responsible, OpenAI collaborated with a coalition of mental health experts and organizations. This development process involved:

- The American Psychological Association (APA)

- OpenAI’s Global Physicians Network

- The Expert Council on Well-Being and AI

By consulting with clinicians and suicide prevention researchers, OpenAI aims to ensure the tool provides a meaningful bridge to real-world support rather than just an automated response.

Bottom line

With the newly introduced Trusted Contacts feature joining OpenAI's earlier safeguards, including ChatGPT outright refusing to give users instructions for self-harm, hopes are high that safety incidents linked to chatbots will soon decline.

Elton Jones covers AI for Tom’s Guide and tests all the latest models, from ChatGPT to Gemini to Claude, to see which tools perform best and how they can improve everyday productivity.

He is also an experienced tech writer who has covered video games, mobile devices, headsets, and now artificial intelligence for over a decade. Since 2011, his work has appeared in publications including The Christian Post, Complex, TechRadar, Heavy, and ONE37pm, with a focus on clear, practical analysis.

Today, Elton focuses on making AI more accessible by breaking down complex topics into useful, easy-to-understand insights for a wide range of readers.
