Google is completely shaking up how we search — what you need to know

Google Search jumps onto the short-video trend

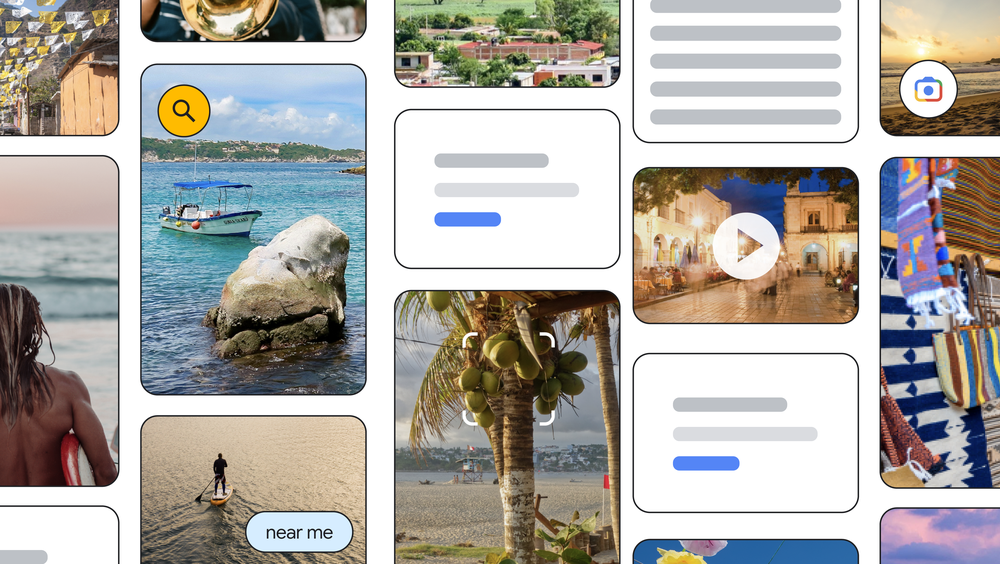

At its recent Search On event, Google announced that it is reinventing how its search engine works. As the world moves toward a more visual-first approach with apps like TikTok and Instagram, Google is finding new ways for people to search and discover visually.

With Google Search, we could already search by voice or with an image, but the tech giant is expanding this to more content types and many more interactive features. One of the biggest changes is that image and video content will no longer appear in separate boxouts; instead, it will be merged into the search results themselves.

Google is looking at making its search engine more fluid and interactive. On the company blog, Cathy Edwards, who is the Vice President of Search, says, “we’re working to make it so you’ll be able to ask questions with fewer words — or even none at all — and we’ll still understand exactly what you mean”.

For example, the Google app on iOS will now show shortcuts under the search bar so users are not left wondering how to search for something. These include options like “identify song by listening,” “solve homework with camera,” “shop for products using screenshots” and “translate text with camera.”

Other handy additions include a more developed autocomplete, plus keyword and topic suggestions that pop up to help you narrow down what you are looking for.

‘Stories’ in Google Search

The big change is in how Google will present some results using different types of media. Once you have found the right words for your search, Google will highlight “the most relevant and helpful information, including content from creators on the open web”.

This means that if you search for a specific city, for example, you will see stories and short videos from content creators that highlight things like tips on how to explore the city or things to do.


Google says the information will come from a variety of sources and won’t be classified separately as text, image or video. The reimagined search will be a lot more interactive and will also help users “discover” more information. The company says this feature will be available in the “coming months.”

Google is using advanced machine learning for some of its new translation features as well. There is a new Lens translation update where you can point your camera at a poster in another language and it will translate the text and overlay it on the image itself.

Easy search for specific food items

Google is also making it much easier to find specific food items near you. The new “multisearch near me” feature will let users take a picture of a dish, or use a screenshot, and instantly find it nearby.

Multisearch launched earlier this year; it lets people use Lens to search for a specific item from a screenshot. Now the tool is expanding, which should save us some time browsing through menus for exactly what we want to eat.

Most of the new features will first make their way to US users on the Google app for iOS in the coming months, before a wider rollout.


Sanjana loves all things tech. From the latest phones to quirky gadgets and the best deals, she's in sync with it all. Based in Atlanta, she is the news editor at Tom's Guide. Previously, she produced India's top technology show for NDTV and has been a tech news reporter on TV. Outside work, you can find her on a tennis court or sipping her favorite latte in Instagrammable coffee shops in the city. Her work has appeared on NDTV Gadgets 360 and CNBC.
