How to stop Facebook from training its AI on your data

Protect yourself from Meta's use of personal data to train its AI models.

Meta is an industry leader in AI and has been training its generative AI model to integrate intelligent features into its platforms. But how does it train its AI? Feed it data.

Our data.

The models learn patterns from this data and use them to generate images and text in response to prompts.

A few months ago, European regulators fined Meta 1.3 billion dollars for misusing data. To salvage the situation, the company recently released a Generative AI Data Subject Rights Form.

The form allows you to request that your personal data be deleted from the system. So, how do you go about safeguarding your personal info? Is the form a real privacy guardian or an elaborate marketing gimmick? Let’s find out.

Only data from third parties

Meta collects public information from the web and sources data from other providers, including third-party data brokers and cookies. The data collected could include information like names, contact details, and addresses, among other things that you may consider private.

The form only lets you ask Meta not to use data sourced from third parties. Neither the form nor the company mentions any way for users to stop Meta from feeding its AI the data it obtains from its own gamut of apps, including Instagram, WhatsApp, Facebook, Threads, and more.

It's important to note the word "request" here: the company can still refuse, and third-party data about you could keep going into its AI's cavernous belly.

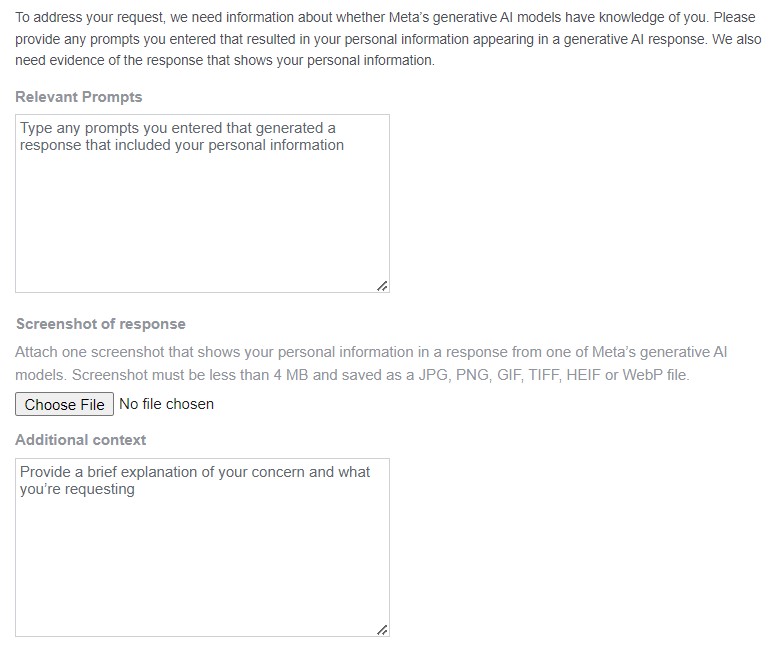

The process of submitting the request, although simple, also asks you to provide an example of a prompt that caused Meta’s AI to generate a response containing your personal information. What about users who simply want out, or who want such an instance to never occur in the first place?

It’s a good thing that the company hasn’t touched private posts on its apps, the ones you share with just your family and friends. Private chats on its messaging services aren’t used as training data for the model either.

As for public posts, there’s nothing you can do to keep your first-party data from being used. These public datasets are kept separate from private data and fed to the system. A slight relief is that Meta says it deliberately chose not to include LinkedIn data in training, citing privacy concerns.

Speaking on this matter, a Meta spokesperson recently clarified that the latest open-source large language model wasn’t trained on Meta's user database, and the company has not launched any generative AI consumer features entirely based on the system.

Steps to stop Meta from using your data

One of the best VPN providers, Surfshark, has recently developed a tool called Incogni that automates requests to hundreds of data mining companies to remove your data. Learn more on the Incogni website.

To submit the request, go to the Generative AI Data Subject Rights Form on Meta’s privacy policy page about generative AI. All you need to provide is your country of residence, your name, and your email address. Facebook doesn’t ask for your account login when taking the request.

You’ll find three options here:

1) I want to access, download, or correct any personal information from third parties used for training Meta's generative AI models

2) I want to delete any personal information from third parties used for training Meta's generative AI models

3) I have a concern about my personal information from third parties that is related to a response I received from Meta’s generative AI models

There’s also a security check that you’ll have to pass before submitting the request. Once you do that, the company will review your request and send you a confirmation email, most likely within 24 hours of submission.

Factors like your place of residence may affect how you can exercise your Data Subject Rights and object to your data being used for AI. I feel the form makes more sense in the UK, where data privacy laws back it up, so you know the company is actually taking action.

Recently, the European Data Protection Board (EDPB) announced a ban on Meta's use of personal data for targeted adverts. Long story short, the only way Meta can use your personal details for ads is by obtaining your consent, which is a huge win for privacy purists like me.

As for US citizens, it’s still unclear whether the company actually takes any action on an opt-out request.

Meta also recently launched Code Llama, an AI model built on Llama 2 that can generate code and help with programming tasks. Meta clarified that this model wasn't trained on its user database and that it hasn’t launched any generative AI consumer features based on its systems.

Meta also said that it will remain transparent with its users and authorities and will keep them updated about the data it intends to use for AI or otherwise. The company insists that, no matter what, it is fully committed to respecting the rights of its users.

Conclusion

Meta is infamous for various privacy-related scandals, whether that's a Meta chief objecting to AI experts' calls for AI regulation, the company's proven biased content censorship during the Israel-Hamas conflict, or the UK Government's concerns over its end-to-end encryption model.

However, of late, the company has been trying to crank up user privacy, and irrespective of whether that's because of the sheer pressure from the public and authorities or of its own conscience, it's something we all can appreciate.


Krishi is a VPN writer covering buying guides, how-to's, and other cybersecurity content here at Tom's Guide. His expertise lies in reviewing products and software, from VPNs, online browsers, and antivirus solutions to smartphones and laptops. As a tech fanatic, Krishi also loves writing about the latest happenings in the world of cybersecurity, AI, and software.
