DisplayPort vs. HDMI: Which connector is better for TV, PC gaming and more

Connector confusion? Here's what you need to know about DisplayPort and HDMI

What's the difference between DisplayPort and HDMI? Whether you're connecting a monitor and desktop PC, a TV to a game console, or setting up your home theater with a soundbar, you've probably wondered about these seemingly similar A/V connections. While DisplayPort and HDMI may look a lot alike, and are used for some of the same purposes, the two have some distinct differences.

This guide isn't going to require you to slog through the technical aspects of transmission modes, bit depth or color formats – it's rarely helpful to know this information unless you're wading into the deeper waters of complex A/V installations. Instead, we'll cover the most essential information for DisplayPort and High Definition Multimedia Interface (HDMI) connections. Specifically, we'll look at which connections are best for your gear and intended uses, which connection standards support the resolution and features you want, and which you're likely to find on your computer and home theater devices.

Connections

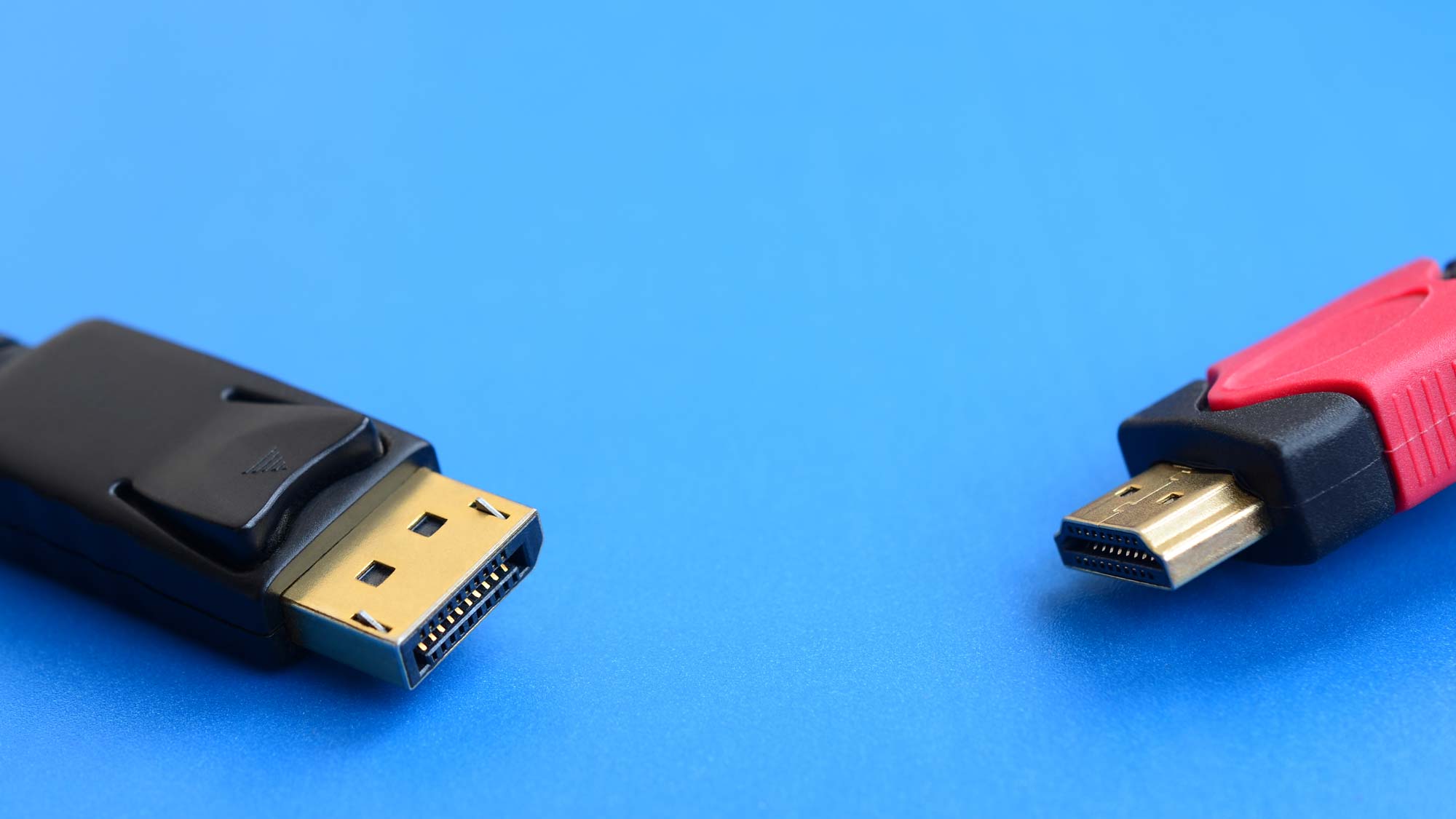

Let's start at the most basic and least technical difference between the two standards: The connector.


DisplayPort connections have an asymmetrical shape that looks like a rectangle with one corner lopped off. Inside, you'll find a 20-pin connector. The plugs frequently include a physical latch that secures the cable once it's plugged in, so you have to press a latch release to unplug it.

HDMI, on the other hand, has a symmetrical plug shape, with a 19-pin connector inside. It uses friction to stay plugged in, with no latching system. That does make it possible for HDMI cables to wiggle loose over time, particularly if you regularly move your devices or jostle the cables behind your desk.

Specs and standards

While these two plugs are both used for video connections, there are some pretty major differences between the two in terms of how they function and what data is transmitted. The biggest difference? DisplayPort is often video-only, while HDMI delivers video and audio in a single cable. But the differences don't stop there.

DisplayPort versions


There are four different versions of DisplayPort that may be found on monitors and graphics cards, each offering a slightly different mix of support for different resolutions and frame rates.

DisplayPort 1.2 has been in use since 2010, and offers 17.28 Gbps of bandwidth to handle 4K resolution video at 60Hz, as well as any lower resolutions, like Full HD (1920 x 1080) and Quad HD (2560 x 1440). It also outputs wider aspect ratios and resolutions that offer an expanded field of view. These formats are available on differing variations of DisplayPort, such as Mini DisplayPort and Thunderbolt connections, making this an especially handy format for laptop users.
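As a rough sanity check on those numbers, the sketch below estimates how much data the visible pixels of a video signal need and compares it with the 17.28 Gbps figure quoted above. It assumes 24 bits per pixel (8 bits per color channel) and ignores blanking intervals and other signaling overhead, so real-world requirements are somewhat higher. The function name is made up for this illustration.

```python
# Rough estimate only: counts visible pixels and ignores blanking
# intervals and link overhead, so actual requirements are higher.

DP_1_2_GBPS = 17.28  # DisplayPort 1.2 bandwidth quoted in the article


def active_pixel_rate_gbps(width, height, refresh_hz, bits_per_pixel=24):
    """Uncompressed data rate for the visible pixels, in Gbps.

    bits_per_pixel=24 assumes 8 bits per color channel (an assumption,
    not something the article specifies).
    """
    return width * height * refresh_hz * bits_per_pixel / 1e9


modes = {
    "Full HD @ 60Hz": (1920, 1080, 60),
    "Quad HD @ 60Hz": (2560, 1440, 60),
    "4K @ 60Hz": (3840, 2160, 60),
}

for label, (w, h, hz) in modes.items():
    rate = active_pixel_rate_gbps(w, h, hz)
    verdict = "fits" if rate <= DP_1_2_GBPS else "does not fit"
    print(f"{label}: about {rate:.2f} Gbps, {verdict} within {DP_1_2_GBPS} Gbps")
```

Under these assumptions, 4K at 60Hz works out to roughly 12 Gbps, which leaves DisplayPort 1.2 some headroom for the overhead the estimate leaves out.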

An updated version of the 1.2 specification, DisplayPort 1.2a, also added support for AMD FreeSync, which matches the display refresh rate to the frame-by-frame output of AMD graphics cards, allowing smoother gameplay without screen tearing.

DisplayPort 1.3 offers even better resolution support, with 32.4 Gbps bandwidth to handle 4K resolution at 120Hz or 8K at 30Hz. Introduced in 2014, it was the first single-cable option for 8K video, other than Ethernet.

DisplayPort 1.4 improved on this slightly, with 60Hz 8K support and the introduction of HDR10 metadata for high dynamic range (HDR) content. If you have an HDR-capable monitor but are still using an older DisplayPort standard, you're missing out on HDR gaming.

The 1.4 spec also adds audio transport, making it possible to share sound in addition to video, but support for this feature usually requires downloading additional drivers and enabling the feature in settings. Many monitors still don't have built-in speakers to take advantage of this capability.

DisplayPort 2.0 is the newest version of DisplayPort, and really ramps things up with wider bandwidth (77.37 Gbps) and support for 10K (10240 × 4320) and even 16K (15360 × 8640) resolution at 60 Hz, with varying levels of color and compression support. More importantly, it allows for multi-monitor support at high resolutions and frame rates, handling dual 8K displays at 120Hz or up to three 4K displays at 144Hz.
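To see why compression comes into play at these resolutions, here is a back-of-the-envelope sketch comparing uncompressed pixel data against the 77.37 Gbps figure above. It assumes 24 bits per pixel and counts only visible pixels, and the helper names are hypothetical. It is an illustration of the arithmetic, not a description of how DisplayPort actually allocates bandwidth.

```python
# Back-of-the-envelope comparison; ignores blanking and link overhead.

DP_2_0_GBPS = 77.37  # DisplayPort 2.0 bandwidth quoted in the article


def uncompressed_gbps(width, height, refresh_hz, displays=1, bits_per_pixel=24):
    """Total uncompressed rate for one or more identical displays, in Gbps.

    bits_per_pixel=24 (8 bits per channel) is an assumption.
    """
    return width * height * refresh_hz * bits_per_pixel * displays / 1e9


def compression_ratio_needed(required_gbps, link_gbps=DP_2_0_GBPS):
    """How many times the stream must shrink to fit the link.

    Returns 1.0 when the uncompressed stream already fits.
    """
    return max(1.0, required_gbps / link_gbps)


one_4k_144 = uncompressed_gbps(3840, 2160, 144)
three_4k_144 = uncompressed_gbps(3840, 2160, 144, displays=3)
one_16k_60 = uncompressed_gbps(15360, 8640, 60)

for label, rate in [
    ("One 4K display @ 144Hz", one_4k_144),
    ("Three 4K displays @ 144Hz", three_4k_144),
    ("One 16K display @ 60Hz", one_16k_60),
]:
    ratio = compression_ratio_needed(rate)
    print(f"{label}: about {rate:.1f} Gbps uncompressed, needs {ratio:.2f}x reduction")
```

By this estimate, a single 4K display at 144Hz fits comfortably, while the triple-4K and 16K cases exceed the raw link figure, which is where compression or reduced color sampling would have to make up the difference.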

The 2.0 standard was announced for 2020, but thanks to COVID-19 disruptions, 2.0-equipped monitors and graphics cards won't be sold until the second half of 2021.

HDMI versions

Similarly, the HDMI connection encompasses a number of different specs, with three primary HDMI standards in common use now.

The most commonly used HDMI connection is probably the most basic, HDMI 1.4. It's capable of delivering 720p or 1080p resolution, and when it was introduced back in 2009, it offered a respectable maximum video bandwidth of 8.16 Gbps. That made it the first HDMI version capable of delivering a 4K picture, but it was limited to 24Hz, matching the standard 24 frames per second used by most theater films released on UHD Blu-ray. If you've got a 4K TV that's more than 5 years old, it's probably still using HDMI 1.4.

The 1.4 standard was also a big change over previous versions, because it included 100 Mbps Ethernet connectivity, which was utilized by some early smart TVs and other connected video applications. While the majority of smart TVs rely on Wi-Fi and separate Ethernet connections for network connectivity, this additional two-way data flow was an important evolution in the HDMI standard that led to some of the handiest features available today in later revisions.

It's also the earliest HDMI version to support audio return channel (ARC), which lets you connect a soundbar using HDMI without running another cable between the TV and speaker set. It's a cool feature, and one that's only improved in later HDMI standards.

HDMI 2.0 is sometimes called HDMI UHD, and steps up the bandwidth to 14.4 Gbps, enabling 4K video at 60 Hz. While it's rarely labelled as such, this is a preferred format for many 4K enthusiasts, since it supports 4K 3D material and higher frame rates for 4K gaming. An updated version of the 2.0 standard, HDMI 2.0a, also adds support for high dynamic range (HDR) content, including HDR10 and Dolby Vision. The majority of 4K TVs on the market in the last five years use this updated 2.0a version.

The most recent, and most capable, version is HDMI 2.1. It includes support for 8K resolution – something that used to require multiple HDMI cables and specialized hardware and software that stitched a quartet of 4K inputs together. With a massive 48 Gbps of bandwidth, HDMI 2.1 actually supports resolutions up to 10K.

It's also capable of supporting 4K resolution at 120Hz, something that high-end TVs have been able to do for a while, but required playing video from attached storage or using complicated video-over-IP connections. The higher bandwidth also allows for all sorts of new features, like enhanced audio return channel (eARC), variable refresh rates (VRR) and automatic low-latency mode (ALLM). You can learn all about it in our guide What is HDMI 2.1?
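The bandwidth figures quoted for each HDMI version (8.16, 14.4 and 48 Gbps) can be turned into a simple lookup. The sketch below picks the first version whose quoted bandwidth covers a target resolution and refresh rate. It assumes 24 bits per pixel and counts only visible pixels, so treat it as a rough guide rather than a statement of what each spec officially supports. The function and table names are invented for this example.

```python
# Rough guide only: compares visible-pixel data rates with the
# bandwidth figures quoted in the article, ignoring signaling overhead.

HDMI_BANDWIDTH_GBPS = [
    ("HDMI 1.4", 8.16),
    ("HDMI 2.0", 14.4),
    ("HDMI 2.1", 48.0),
]


def minimum_hdmi_version(width, height, refresh_hz, bits_per_pixel=24):
    """Return the earliest listed HDMI version whose quoted bandwidth
    covers the uncompressed pixel rate, or None if none does.

    bits_per_pixel=24 (8 bits per channel) is an assumption.
    """
    required_gbps = width * height * refresh_hz * bits_per_pixel / 1e9
    for name, link_gbps in HDMI_BANDWIDTH_GBPS:
        if required_gbps <= link_gbps:
            return name
    return None


print(minimum_hdmi_version(3840, 2160, 24))   # 4K at 24Hz
print(minimum_hdmi_version(3840, 2160, 60))   # 4K at 60Hz
print(minimum_hdmi_version(3840, 2160, 120))  # 4K at 120Hz
```

With these assumptions, the three calls line up with the versions described above: 4K at 24Hz lands on HDMI 1.4, 4K at 60Hz on HDMI 2.0, and 4K at 120Hz on HDMI 2.1.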

What does this mean?

Beyond the basics of plug type, resolution and format support discussed above, there are all kinds of technical details that we could dig into. Both HDMI and DisplayPort support variations of color spaces, compression formats, encoding schemes and copy protection standards. And if you want to get into those details, there's more than enough information out there to read up on.

What you do need to know is that HDMI is the format of choice for home theater equipment and game consoles like the PS5 and Xbox Series X. When it comes to TVs, soundbars and console gaming, HDMI is king – just make sure you're using the right cables to get all of the features available to you. And if you're using an inexpensive monitor or a PC that doesn't have a discrete GPU, it's HDMI all the way.

Gaming-oriented PCs and monitors, however, have long relied on DisplayPort for its better resolution and frame rate support, especially as technologies like AMD FreeSync and Nvidia G-Sync have become more popular. Professionals who need exacting color for photo and video editing have also preferred DisplayPort for its higher technical standards. When visuals matter, pros and enthusiasts prefer DisplayPort.

But this all may change in the coming months, as HDMI 2.1 starts catching up to DisplayPort with its ability to handle 8K video and support those same gamer-friendly features on TVs. The first HDMI 2.1-equipped graphics cards have arrived with Nvidia's 30 series GPUs, and AMD cards are coming soon.

For now, however, the answer to which connection or cables you need will come down to what equipment you already have.

Brian Westover was Senior Editor at Tom's Guide, where he led the site's TV coverage for several years, reviewing scores of sets and writing about everything from 8K to HDR to HDMI 2.1. He also put his computing knowledge to good use by reviewing many PCs and Mac devices, and also led our router and home networking coverage. Prior to joining Tom's Guide, he wrote for TopTenReviews and PCMag.
