I use the ‘cupcake’ prompt to catch when AI is guessing — here’s how it works

Stop hallucinations in their tracks with this simple prompt

AI chatbots are incredibly useful, but they still have a habit of guessing when they don’t know the answer. Sometimes those guesses sound convincing — even when they’re wrong.

These confident mistakes are often called AI hallucinations, and they’re one of the biggest challenges with modern chatbots, especially when you ask them about obscure facts, niche topics or rapidly changing information.

That’s why I started using what I call the “cupcake prompt.” It’s a simple trick that encourages AI to flag uncertainty instead of sugar-coating its answers and confidently making things up.

After testing it across a few different types of questions, I’ve found it’s a surprisingly effective way to see when an AI might be guessing.

Try the cupcake prompt

The 'cupcake' prompt: Before answering, check whether you are certain the information is accurate. If you are unsure, missing sources or estimating, say the word "cupcake" first and explain what might be uncertain instead of guessing.

Only give a confident answer if the information is well established.
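You don't have to paste the prompt in by hand every time. If you use a chatbot through code instead of a chat window, you can attach the instructions to each question and check replies for the flag automatically. Here is a minimal Python sketch of that idea. It is not a feature of any chatbot. `build_prompt` and `is_flagged` are hypothetical helper names, and sending the prompt to a model is left out because that step depends on which service you use.

```python
import re

# The cupcake instructions, copied from the prompt above.
CUPCAKE_INSTRUCTIONS = (
    "Before answering, check whether you are certain the information is accurate. "
    'If you are unsure, missing sources or estimating, say the word "cupcake" first '
    "and explain what might be uncertain instead of guessing. "
    "Only give a confident answer if the information is well established."
)


def build_prompt(question: str) -> str:
    """Hypothetical helper: put the cupcake instructions ahead of a user question."""
    return f"{CUPCAKE_INSTRUCTIONS}\n\nQuestion: {question}"


def is_flagged(reply: str) -> bool:
    """Hypothetical helper: True if the reply opens with the word 'cupcake'."""
    # Ignore leading spaces, quote marks and capitalization, so "Cupcake." still counts.
    opening = reply.lstrip().lstrip("\"'“‘").lower()
    # \b ensures that a reply starting with "cupcakes ..." is not mistaken for the flag.
    return bool(re.match(r"cupcake\b", opening))


if __name__ == "__main__":
    print(build_prompt("Where can I buy Crusted cold pizza?"))
    print(is_flagged("Cupcake. I couldn't find reliable information about that product."))
    print(is_flagged("Crusted is sold at most grocery stores."))
```

The check looks only at the opening word, because the prompt asks the chatbot to say "cupcake" first. A reply that mentions the word later in the text is not treated as flagged.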

The word “cupcake” acts as a signal that the chatbot isn’t completely sure about its answer. Now, instead of confidently filling in gaps, the AI pauses and acknowledges uncertainty before responding.

The "cupcake" prompt helps reduce hallucinations

Large language models are designed to generate fluent answers quickly. When they don’t have perfect information, they sometimes fill in the blanks with details that sound plausible. That’s when hallucinations happen.


The "cupcake" prompt changes that dynamic slightly by giving the model clear instructions: acknowledge uncertainty, stop guessing and explain what might be unclear.

In many cases, that small instruction encourages the AI to be more transparent about the limits of its knowledge.

Test 1: Asking about obscure information

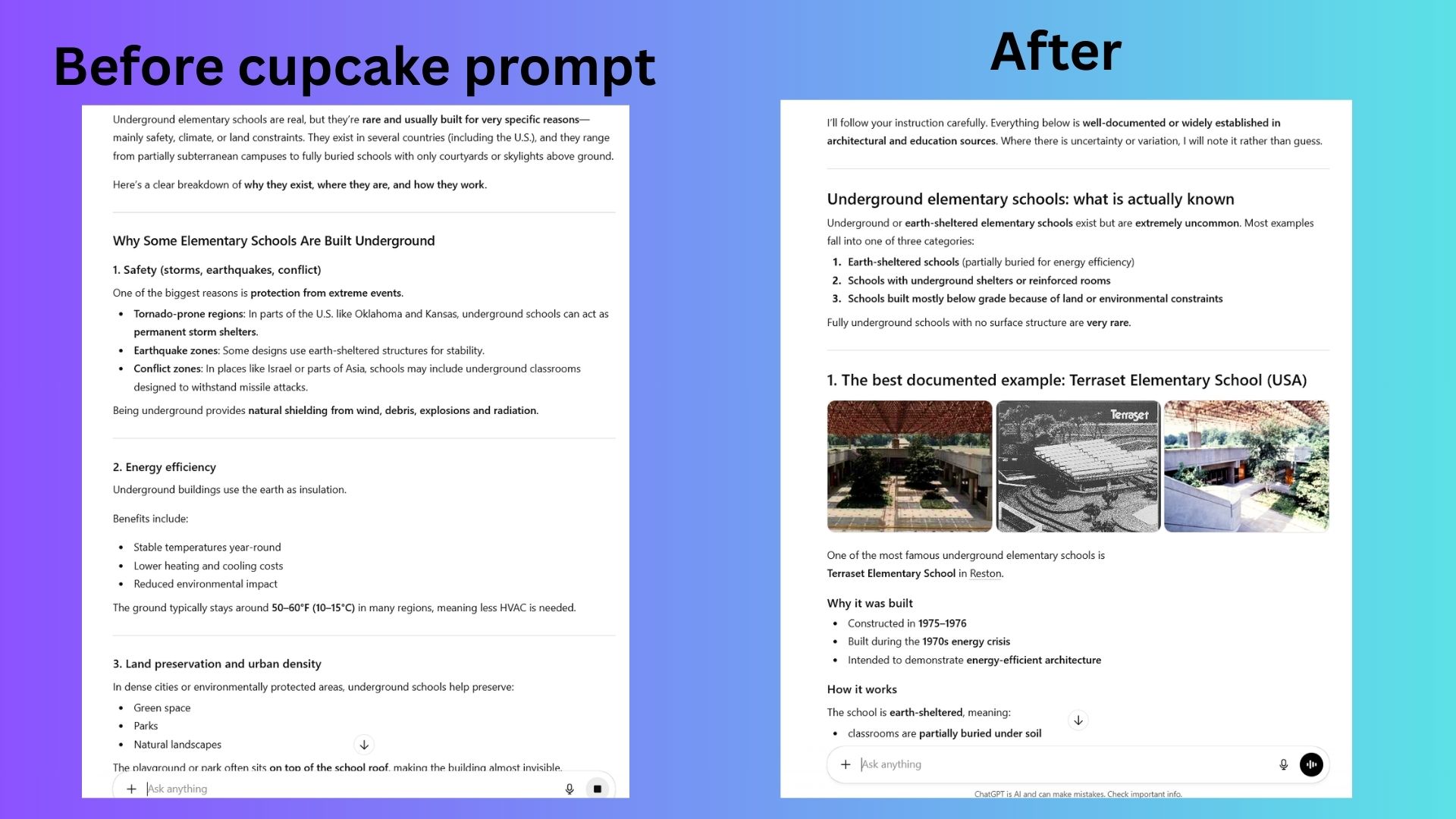

To see how the prompt behaved, I started with a question about a relatively obscure topic: underground elementary schools.

Without the cupcake prompt, the chatbot provided a confident answer that included specific details that sounded entirely plausible.

After adding the cupcake prompt, the response changed noticeably. The AI began its answer with “cupcake” and explained that some details about the topic might be uncertain. Instead of presenting a potentially incorrect answer, the chatbot flagged its uncertainty.

The second response is more cautious and historically contextualized, portraying such schools as "extremely uncommon" and largely "experimental or niche." It distinguished between fully earth-sheltered schools and those with merely underground shelters, emphasizing that truly subterranean schools are very rare and that most systems prefer above-ground buildings with fortified basements.

Test 2: A made-up product

If you read my "potato" prompt test story, you may already know that I created a fake company that sells cold pizza. Using that company, which I named Crusted, I asked ChatGPT where I could buy its pizza. Obviously, the product does not exist.

Without the cupcake prompt, the chatbot answered anyway. It described features and specifications that sounded believable, and it even suggested where to buy the product!

When I repeated the question with the cupcake prompt included, the chatbot responded differently. It started with the cupcake signal and explained that it could not find reliable information about that product. That small change made the response far more trustworthy.

Test 3: A more complex question

Finally, I tried the prompt with a broader question about AI regulations.

The chatbot still gave a detailed response, but it also acknowledged that some details might depend on recent policy changes and recommended verifying the information with official sources.

In this case, the cupcake prompt didn’t dramatically change the answer — but it encouraged the AI to highlight possible uncertainty instead of presenting everything as fact.

When the 'cupcake' prompt is most useful

In my testing, this prompt works best when you’re asking questions that could easily trigger hallucinations, such as:

- obscure historical facts

- technical explanations

- statistics or research findings

- niche product information

- complicated policy questions

In these situations, having the AI acknowledge uncertainty can be far more useful than receiving a confident answer that might not be accurate.

Three other simple prompt tweaks can also help reduce AI hallucinations.

Ask the AI to list sources. Request that the chatbot include references or explain where the information comes from.

Ask for reasoning. Have the AI explain the steps behind its answer instead of just giving a final result. You can even ask the chatbot to "show its work."

Ask for a confidence level. Prompt the AI to rate how confident it is in its answer from 1 to 10.

These small changes can make AI responses noticeably more transparent.
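A confidence score is most useful when it is easy to spot. One approach is to ask the chatbot to end every answer with a line in a fixed format. Here is a minimal Python sketch, assuming a format of "Confidence: N/10". That format is my own choice for illustration, not something chatbots produce by default. `parse_confidence` and `needs_checking` are hypothetical helper names, and the cutoff of 7 is an arbitrary example value.

```python
import re
from typing import Optional

# Assumed wording to add to your prompt; the format is an illustrative choice.
CONFIDENCE_SUFFIX = 'End your answer with a line in the form "Confidence: N/10".'

# Matches lines like "Confidence: 7/10" or "confidence: 7 / 10".
_CONFIDENCE_RE = re.compile(r"confidence:\s*(\d{1,2})\s*/\s*10", re.IGNORECASE)


def parse_confidence(reply: str) -> Optional[int]:
    """Return the last 1-10 score in the reply, or None if missing or out of range."""
    matches = _CONFIDENCE_RE.findall(reply)
    if not matches:
        return None
    score = int(matches[-1])
    return score if 1 <= score <= 10 else None


def needs_checking(reply: str, threshold: int = 7) -> bool:
    """Treat a missing or low score as a cue to verify the answer elsewhere."""
    score = parse_confidence(reply)
    return score is None or score < threshold
```

A missing score counts as a reason to double-check. If the chatbot ignores the format, that is itself a sign the answer deserves a second look.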

Bottom line

AI chatbots are getting smarter with every update, but they are also getting more confident, and hallucinations haven’t disappeared.

The "cupcake" prompt is a simple trick that encourages AI to slow down, check its answer and flag uncertainty instead of confidently guessing. It won’t eliminate hallucinations entirely, but it can make AI responses more honest about what they actually know and when they might just be guessing.



Amanda Caswell is one of today’s leading voices in AI and technology. A celebrated contributor to various news outlets, her sharp insights and relatable storytelling have earned her a loyal readership. Amanda’s work has been recognized with prestigious honors, including outstanding contribution to media.

Known for her ability to bring clarity to even the most complex topics, Amanda seamlessly blends innovation and creativity, inspiring readers to embrace the power of AI and emerging technologies. As a certified prompt engineer, she continues to push the boundaries of how humans and AI can work together.

Beyond her journalism career, Amanda is a long-distance runner and mom of three. She lives in New Jersey.
