Nvidia is teaming up with Span to install mini AI data centers right on the side of your house, turning residential neighborhoods into a distributed supercomputing network that actually pays homeowners for their unused electricity

We could see a future where powerful AI runs right inside your house

For the past few years, AI has lived "far away," or at least behind the scenes. When we ask ChatGPT a question, giant data centers packed with GPUs do the heavy lifting out of sight.

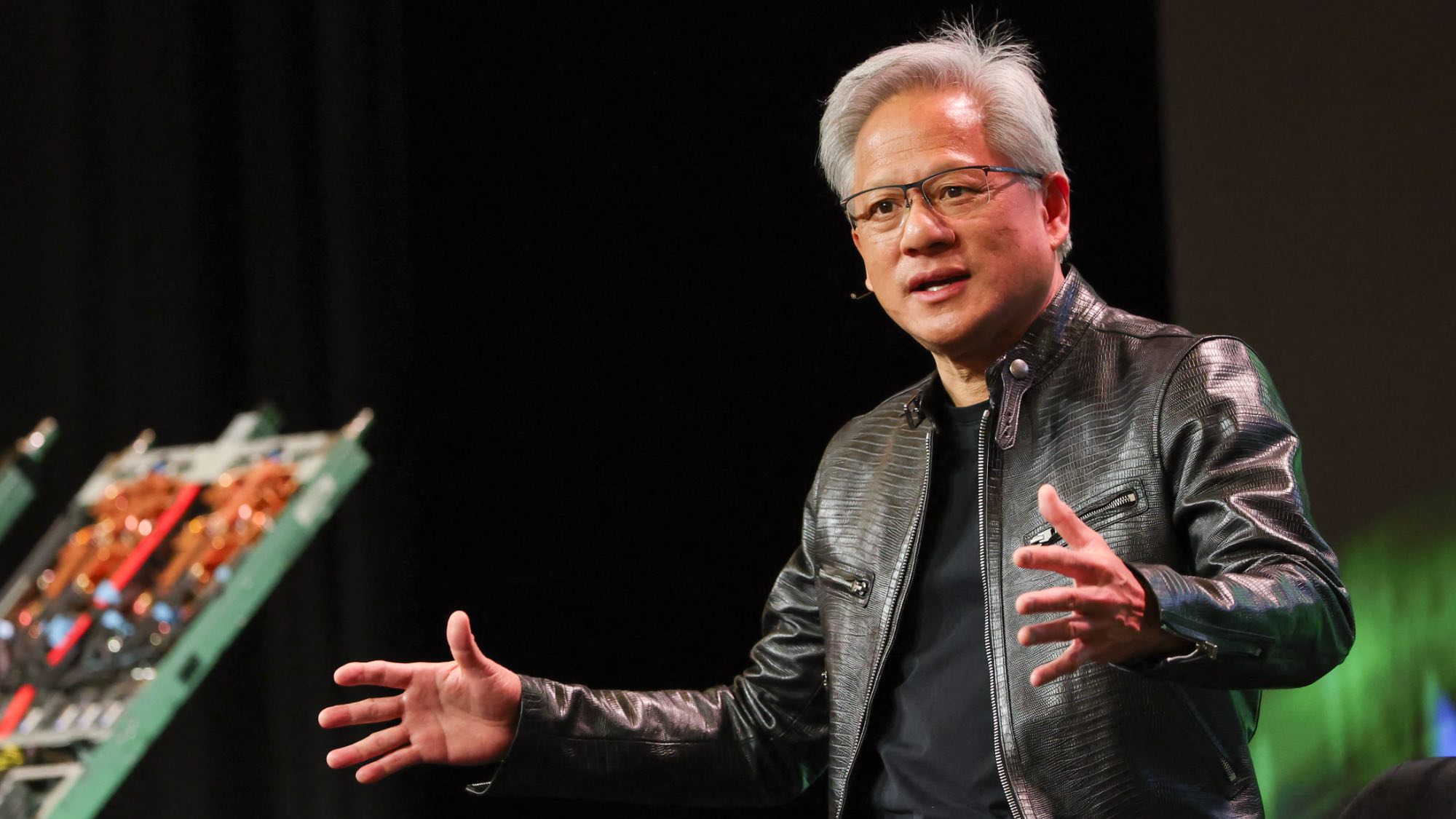

But Nvidia, the company powering much of the AI revolution, appears to be betting on something very different for the future: an AI supercomputer inside your home.

At least, that's the idea gaining traction after viral posts claimed Nvidia wants to turn your house into an AI data center. The idea might sound a little too sci-fi for most of us, but it's rooted in a very real shift happening across the tech industry: moving AI out of centralized cloud systems and directly onto personal devices. That shift may be closer than we think.

So what’s actually happening?

What seems like an outrageous claim stems from Nvidia’s push into what it calls “personal AI supercomputers,” compact but extremely powerful AI machines designed to run advanced models locally instead of relying entirely on the cloud.

One example is Nvidia's DGX Spark, a desktop-sized AI system the company literally describes as an “AI supercomputer on your desk.” It’s capable of running large AI models locally and is aimed at developers, researchers and high-end AI workflows.

According to Nvidia, these systems are designed for local AI inference, robotics, edge AI applications, autonomous agents, computer vision and smart systems. In other words, this is AI that runs closer to you instead of inside distant cloud infrastructure.

Why the industry could be moving in this direction

Right now, most AI tools depend heavily on giant cloud providers. That works, but it also creates some major problems, such as:


- AI is expensive. Running large AI models costs enormous amounts of money and energy. Data centers are expanding at a staggering pace and putting pressure on electrical grids worldwide. Shifting some of that processing onto local devices could reduce cloud costs and ease the strain on infrastructure.

- Privacy is a major concern. Local AI means fewer conversations sent to remote servers, better privacy and ultimately better control over your data. This is a huge selling point as people grow more cautious about how AI companies use personal information.

- Cloud AI adds latency. Sending every request to a remote server always introduces some delay, while local AI can power near-instant voice assistants, smart glasses, home robots, security systems and offline AI tools.

Your future home might quietly become an AI hub

That may sound far-fetched, but tests are already underway. A recent report from Inc. says Nvidia is partnering with startup Span to test "mini" AI data center units attached to homes and small businesses. The goal is to tap into unused residential electrical capacity to help power AI workloads.

The units, called XFRA nodes, are reportedly designed to sit alongside existing home infrastructure like HVAC systems and electrical panels. The idea is to distribute computing power across thousands of smaller locations instead of relying entirely on giant centralized facilities.

Nvidia isn't pushing the DGX Spark and the RTX 50-series just for faster chatbots. The bigger goal is autonomous AI agents. In 2026, we are moving away from simple assistants and toward agents that can manage your calendar, handle your finances and even control your smart home on their own.

To do this safely, the industry is pivoting toward local inference for three critical reasons:

- 24/7 autonomy: For an AI agent to monitor your home security or manage your household energy use, it needs to stay "awake" even if your internet goes down. Local hardware like the Grace Blackwell superchip allows these systems to run around the clock without a constant cloud connection.

- Zero-trace privacy: Sending your bank statements, private emails and home camera feeds to the cloud for an AI to "analyze" is a massive security risk. With tools like Nvidia OpenShell, these agents can operate within a "privacy sandbox" inside your own four walls, so your data doesn't have to leave your house.

- The 'Digital Twin' hub: High-end systems like the DGX Spark allow you to run a "Digital Twin" of your entire digital life. This hub acts as a local brain for your smaller devices, such as your phone, smart glasses and appliances, processing their data in one secure, high-speed location.

The takeaway

Nvidia probably doesn’t expect people to install rows of GPU racks in their basement. But your home could gradually fill with AI-powered systems that process data locally, and that's a major shift from the current cloud-first AI model.

And Nvidia isn’t alone here. Apple, Microsoft, Google and others are all aggressively pushing “on-device AI” and “edge AI” strategies.

Apple Intelligence, for example, emphasizes private on-device processing whenever possible. Microsoft is building AI directly into Windows PCs. Qualcomm is racing to create AI-ready chips for laptops and phones.

Just as gaming PCs slowly evolved from niche enthusiast machines into mainstream household tech, AI hardware may follow a similar path over the next decade. It will be interesting to watch AI move out of distant server farms and into our living rooms.

Follow Tom's Guide on Google News and add us as a preferred source to get our up-to-date news, analysis, and reviews in your feeds. Subscribe to Tom's Guide on YouTube and follow us on TikTok.


Amanda Caswell is one of today’s leading voices in AI and technology. A celebrated contributor to various news outlets, her sharp insights and relatable storytelling have earned her a loyal readership. Amanda’s work has been recognized with prestigious honors, including outstanding contribution to media.

Known for her ability to bring clarity to even the most complex topics, Amanda seamlessly blends innovation and creativity, inspiring readers to embrace the power of AI and emerging technologies. As a certified prompt engineer, she continues to push the boundaries of how humans and AI can work together.

Beyond her journalism career, Amanda is a long-distance runner and mom of three. She lives in New Jersey.
