Google just changed how we search with 'multisearch' — and you can try it now

Google's new multisearch tool combines text and images — here's how it works

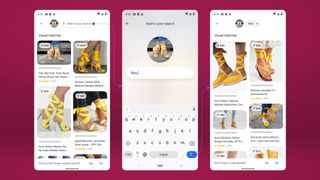

Searching in the Google app is about to get a lot more specific. A new multisearch feature, now available for both Android and iOS users, lets you combine pictures with text for more refined searches.

Google sees its new multisearch capability as ideal for shopping queries, particularly if you're looking for something that fits in with a particular style or home decor. By taking a picture using the Google Lens feature and then adding text to the search query, you can track down similar items in specific colors, designs or styles.

Among the examples Google gives for its new multisearch feature is taking a picture of an orange dress and using text search terms to find something similar in green. You can also take a picture of your dining set, Google says, and find a matching table by searching for the term "coffee table."

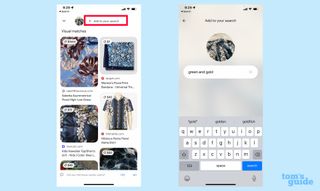

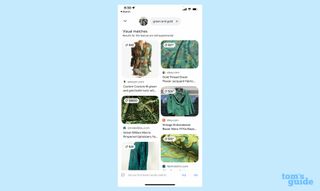

The feature is available now in beta form for English-language searches on either the Android or iOS version of the Google app, and it's pretty easy to use. For example, I was able to snap a photo of one of my favorite Hawaiian shirts to try and find that same style in green and gold.

While Google is touting this as an e-commerce tool at the moment, multisearch will become far more useful once it expands to other areas that benefit from combining words and pictures in a search. Cleaning tips, first-aid guidance and other how-to suggestions seem like a natural fit for multisearch.

How to use Google multisearch

You don't have to wait to try the feature out. Here's how to get started with multisearch in the Google app.

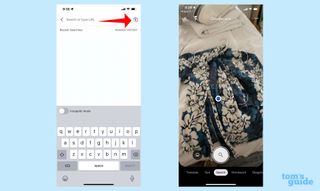

1. From the Google app, tap the Google Lens button. From there you can either search for a screenshot on your camera roll or snap a photo.

2. The Google app will list search results. Swipe up and tap the "+ Add to your search" button, then type in your search query.

You'll get a page of results based on your search term and photo.

Google says the new search capability is powered by advances in AI. The company is looking to improve results down the road by enhancing the feature with its Multitask Unified Model (MUM).

Philip Michaels is a Managing Editor at Tom's Guide. He's been covering personal technology since 1999 and was in the building when Steve Jobs showed off the iPhone for the first time. He's been evaluating smartphones since that first iPhone debuted in 2007, and he's been following phone carriers and smartphone plans since 2015. He has strong opinions about Apple, the Oakland Athletics, old movies and proper butchery techniques. Follow him at @PhilipMichaels.