How the Galaxy S10's Camera Can Beat the Pixel 3

Samsung needs to swing for the fences to make the Galaxy S10’s camera as great as it can be. Here’s how they can try.

Another February means another new Samsung flagship smartphone on the verge of release. But 2019 could go down as an especially memorable year for the Galaxy S family — both because it marks the 10th anniversary of the company's premium handsets, and because dramatic changes appear to be in store for this year's phones.

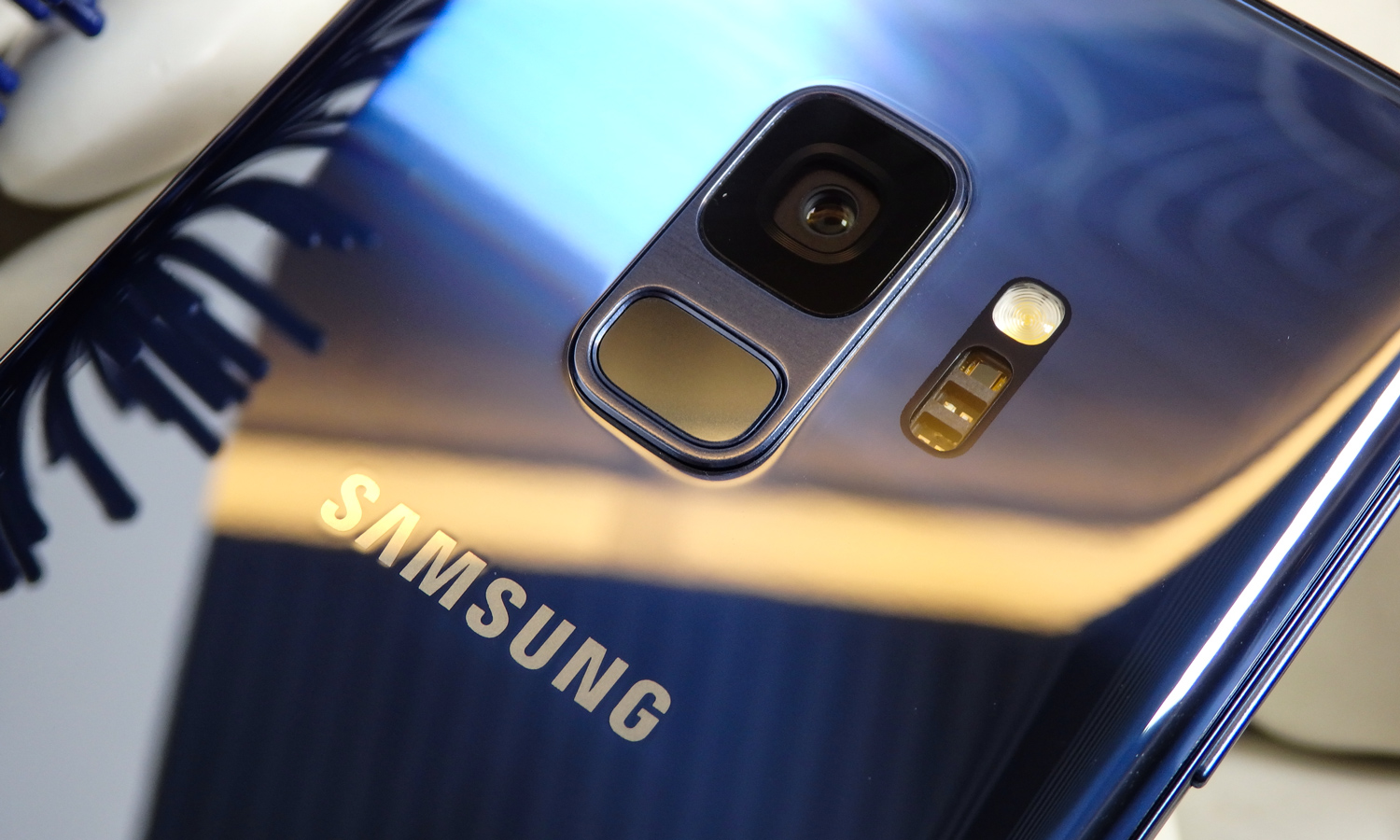

You've likely heard about the Galaxy S10's love-it-or-hate-it hole-punch display, as well as its ultrasonic in-screen fingerprint sensor. You may even be anticipating the heavily rumored 5G-capable special edition of the upcoming device, armed with up to 12GB of RAM, 1TB of storage and a whopping 5,000-mAh battery. But what's going to happen with the S10's cameras?

News on the photography front has actually been a little spotty, mostly because it seems Samsung has radical, sweeping plans for the S10's camera gear. While the Galaxy S9+ merely added a secondary telephoto shooter on the back for shallow depth-of-field portraits, some models of the S10 could sport up to three lenses on the back, along with two front cameras for better selfies.

Samsung's comparatively modest camera improvements to the S9 (it also added a variable aperture to last year's phones) resulted in good photos. But more recent releases from Google and Apple have left Samsung's phones behind when it comes to capturing photos. A serious overhaul to the S10's camera could be exactly what Samsung needs to turn the mobile-imaging tables. Here's how the Galaxy S10's cameras could challenge the other top camera phones.

Deliver Better Live Focus Portraits

Apple set the standard for bokeh-effect portraits when it introduced a secondary telephoto lens on the iPhone 7 Plus in 2016. Since then, phone makers have worked out numerous ways to produce dramatic shots with blurred backgrounds. But Samsung's Live Focus system has fallen behind.

The Galaxy S9+ and Note 9, much like the Note 8 before them, have a disappointing tendency to produce blurry, shaky portraits with poor metering. It's one of the great mysteries of Samsung's camera technology, which is otherwise stellar. And it's been dragging the company's phones down for too long; we called out Live Focus' performance ahead of the S9's launch last year, too.

Shallow depth-of-field portraits have become a litmus test for mobile-camera quality in recent years, and Samsung can't afford to lose more ground to competitors like Google, whose Pixel 3 can achieve gorgeous bokeh even without stereoscopic lenses.

Rumors surrounding the Galaxy S10 suggest that only the 6.4-inch S10+ will inherit the telephoto lens, while the cheaper 5.8- and 6.1-inch variants will miss out. The S10+ is also expected to double up on front-facing cameras, indicating the phone will be able to leverage two lenses for similarly stylish selfies, much as Google's Pixel 3 models have done.

Take Down Google's Night Sight

To its credit, the Galaxy S9 made for a very impressive camera when things got dim. Samsung's engineers set their sights on the iPhone X's low-light performance, and the results spoke for themselves. The S9 duo repeatedly trumped Apple's best efforts in the dark during many of our photo faceoffs last year.

That was partly thanks to Samsung's variable aperture system, which allowed the S9 and S9+ to automatically alternate between ƒ/1.5 and ƒ/2.4 depending on the requirements of the scene. The wider ƒ/1.5 setting let roughly two and a half times as much light reach the image sensor as ƒ/2.4, a necessity when shooting in unfavorable conditions, where every scrap of light is needed to achieve a decent shot.

Unfortunately for Samsung, however, the S9 didn't retain the lead for long. Huawei and Google stepped up later in 2018 with their respective modes designed for nighttime photography, which used artificial intelligence to stitch together multiple frames taken with long exposure times into a balanced, perfectly exposed result. The S9, excellent though it was in the dark, simply couldn't compete with that.

We'd love to see Samsung take a page from its fiercest rivals' playbook and add a similar feature to the S10's repertoire aimed at enhancing low-light shots. And chances are quite good we'll see exactly that: a rumor that emerged in December claimed that the S10 will introduce a new mode called Bright Night, which would ideally pair the versatility of Samsung's variable aperture mechanism with the sort of post-processing magic that has proved so successful in the Huawei Mate 20 Pro and Google Pixel 3.

Improve AI Scene Recognition

The proliferation of low-light modes highlights another truth about the state of phone cameras nowadays. Hardware alone doesn't cut it anymore. If Samsung wants the photography crown, it'll have to beef up its software game.

With the Note 9, Samsung introduced its Scene Optimizer feature, which uses AI to recognize the surroundings and tunes exposure parameters dynamically to fit the mood. For example, when shooting a flower, the camera might boost the saturation of greens, reds and yellows, or when capturing text, it might sharpen everything to ensure readability.

Samsung wasn't the first phone maker to do this, however, as Huawei baked AI into its P20 flagship's camera last year. In that handset's case, the Neural Processing Unit inside the P20's Kirin 970 chipset sped up the handling of machine-learning tasks.

We'd wager Samsung has some improvements lined up to make Scene Optimizer even more versatile and responsive going forward. As it stands now, Huawei's just-released Mate 20 Pro can recognize a total of 1,500 scenes categorized into 25 general scenarios. By comparison, the Note 9 covers 20 scenarios (the number of specific "scenes" is unknown). However, Huawei's now in the second generation of its AI imaging initiative, whereas the Note 9 only marked the beginning for Samsung.

To be fair, not all users particularly love the images that modes like Scene Optimizer produce. Sometimes, they result in overly processed or artificial-looking photos that seem almost as if they've been run through an Instagram filter. Yet, in an era when phone makers are trying to imbue their cameras with machine learning, it stands to reason these features will only get better and better over time, and Samsung would be wise to capitalize on the possibilities.

Where Samsung Needs to Step Up

Last year, Samsung said the Galaxy S9 would "reinvent the camera," though we'd contend that what it actually delivered was more of an evolution.

The S9's 960 frame-per-second Super Slow-Mo technology and variable aperture were welcome innovations, though their impact was limited. Shooting video at such a slow speed wasn't necessarily easy or convenient, and the aperture system's gains were only demonstrable in certain scenarios.

But Samsung's competitors appeared to take a different tack throughout the remainder of 2018, maximizing AI to minimize the level of effort required to take impressive photos.

Take Google's work with the Pixel 3, for example. The company's Top Shot feature bested the Flaw Detection functionality Samsung introduced in the Note 9. Whereas Flaw Detection simply alerted you to potential issues with your photo and suggested you try again, Top Shot actually captured frames before and after your result, and smartly picked out ones that were superior.

That's the sort of clever use of AI that the industry could use more of, and exactly the kind of invention that could make or break Samsung's next-generation cameras. After all, smartphones should democratize the ability to capture amazing moments and eliminate the need for most of us to carry around compact cameras or invest hundreds in expensive DSLRs.

Outlook

Even if the Galaxy S10 incorporates a ton of lenses and the best image sensors on the market, it will have to be intuitive, easy to shoot with and able to yield impressive results with minimal fuss. If Samsung can nail that, then it might actually reimagine the camera for real.

Adam Ismail is a staff writer at Jalopnik and previously worked on Tom's Guide covering smartphones, car tech and gaming. His love for all things mobile began with the original Motorola Droid; since then he’s owned a variety of Android and iOS-powered handsets, refusing to stay loyal to one platform. His work has also appeared on Digital Trends and GTPlanet. When he’s not fiddling with the latest devices, he’s at an indie pop show, recording a podcast or playing Sega Dreamcast.
