iOS 15 Live Text and Visual Look Up vs. Google Lens: How they compare

iOS 15 adds some smarts to your camera that sound a lot like what Google Lens does


iOS 15 brings some new intelligence to your phone in the form of Live Text and Visual Look Up. Both features draw heavily on the neural engine that's part of your iPhone's processor and work to turn the phone's camera into a device that doesn't just capture pictures but can also analyze what’s in the photos and let you do more with that information.

With iOS 15's Live Text, your camera can now capture and copy text in images, allowing you to paste the text into documents, messages and emails. You can even look up web addresses and dial phone numbers that appear in your photos. Meanwhile, Visual Look Up is a great way to learn more about the world around you, as you can swipe up from your photo to look up information on landmarks, paintings, books, flowers and animals.


If this sounds familiar, it's because Google has offered similar features through Google Lens, which is built into its own Photos app and has rolled out to many Android phones. Apple is never shy about adding features that rival companies have gotten to first, particularly if it can present those features in what it thinks is a better way.

We'll have to wait until the full version of iOS 15 comes out in the fall to see whether Apple succeeded. But using the public beta of iOS 15, we can see just how far along Live Text and Visual Look Up are, especially when compared to what's available on Android phones through Google. First, though, let's take a closer look at what Live Text and Visual Look Up bring to the iPhone in the iOS 15 update.

iOS 15 Live Text: What you can do and how it works

Before you get started with either Live Text or Visual Look Up, you'll need to make sure you have a compatible phone — and in this case being able to run iOS 15 isn't enough. Because both of these features rely so heavily on neural processing, you'll need a device powered by at least an A12 Bionic chip, which means the iPhone XR, iPhone XS, or iPhone XS Max, plus any phone that came out after that 2018 trio.

Live Text is capable of recognizing all kinds of text, whether it's printed text in a photo, handwriting on a piece of paper or scribbles on a whiteboard. The feature doesn't just work in Photos, but also in screenshots, Quick Look and even images you encounter when browsing in Safari. At iOS 15's launch, Live Text will support seven languages — in addition to English, it will recognize Chinese, Portuguese, French, Italian, Spanish and German.

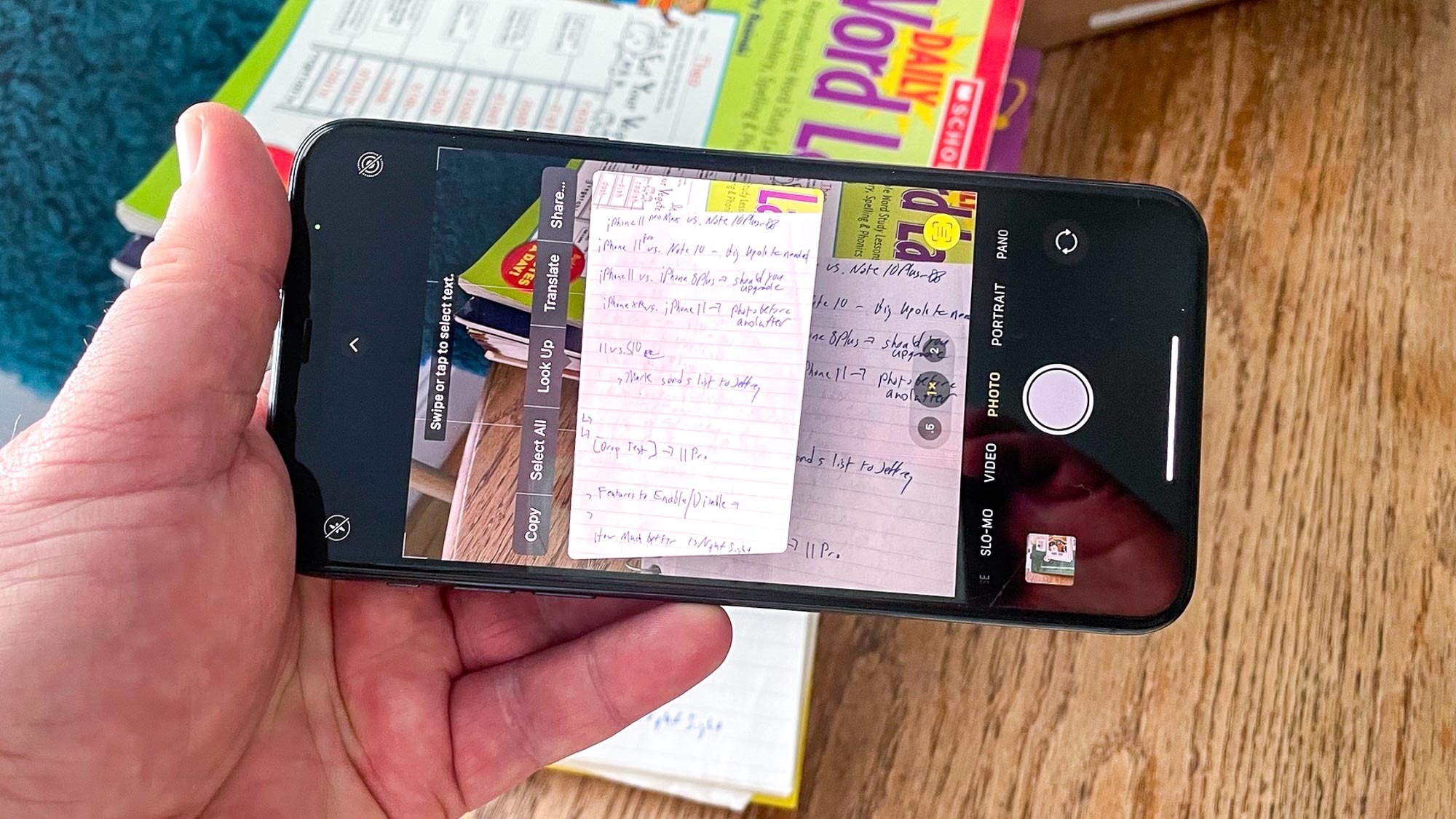

The Camera app in iOS 15 offers a preview of what Live Text is capturing; just tap the text icon in the lower right corner of the viewfinder. From there, you can select text to copy, translate, look up or share with other people. As useful as that is, I think the most practical use will be selecting text from photos already in your photo library.


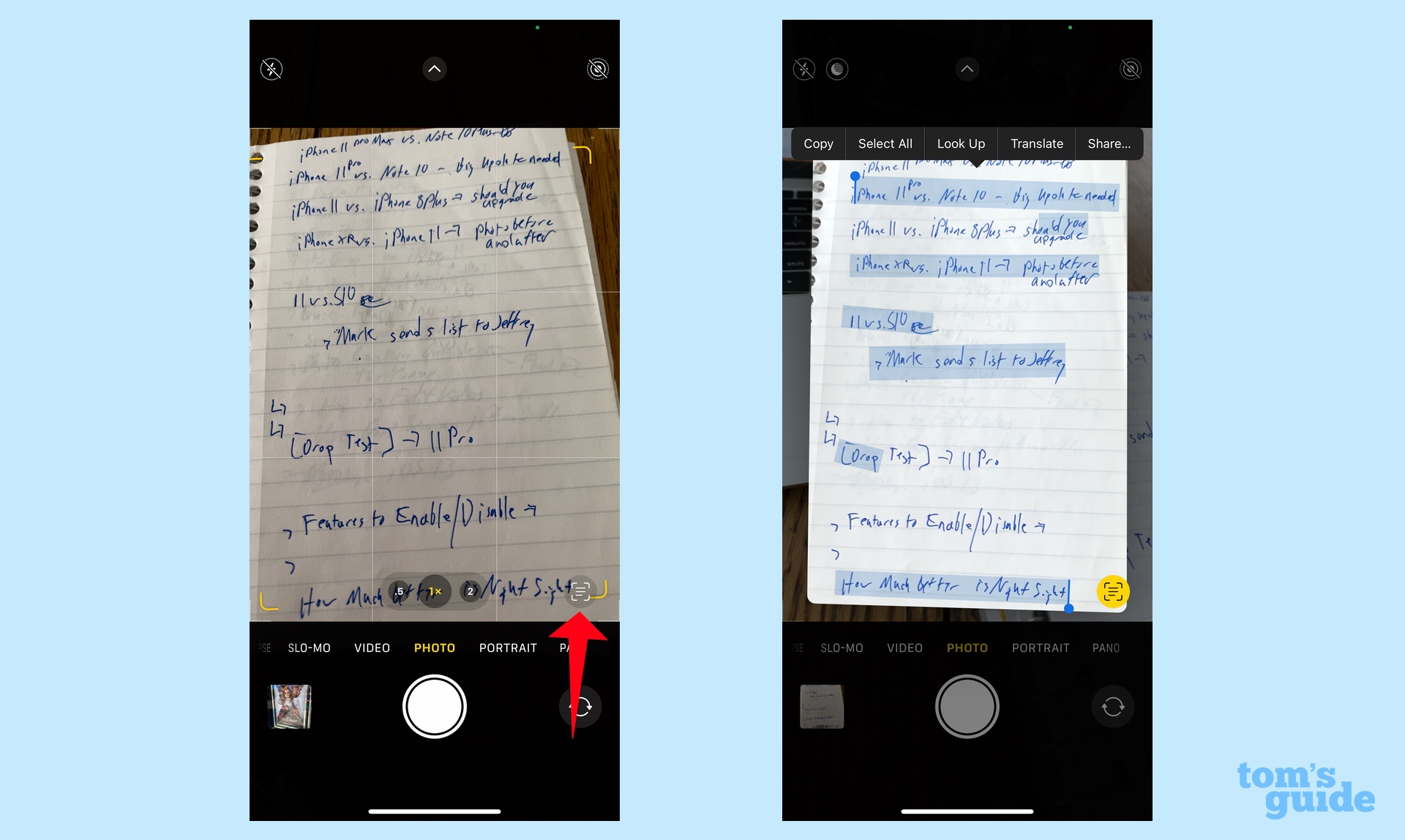

The feature captures more than just text. You can also collect phone numbers, and a long press on a phone number in a photo brings up a menu that lets you call, text or FaceTime with the number; you can also add that number to your Contacts app with a tap. (The feature works in the Camera app's live preview, too; just tap the text button in the lower corner, then long press on the number.) A similar thing happens when you long press on a web address (you have the option of opening the link in your mobile browser) or a physical address (you can get directions in Maps).

iOS 15 Visual Look Up: What you can do and how it works

Visual Look Up is a way to get more information on whatever it is you're taking pictures of. The feature comes in handy looking up background info on all the sights and points of interest you've visited on past vacations, but it's also helpful for finding out more about books, plants and other objects.

Go to the Photos app in iOS 15 and select a photo. Usually, you'll need to swipe up, which will reveal details about the image such as where you took it, on what device and so forth. But if Visual Look Up has something to add, an icon will appear on the photo itself. Tap that, and you'll see a collection of Siri links and similar images from the Web that can tell you more about what you're looking at.

The icon you tap changes based on what you're researching with Visual Look Up. A painting will produce an art-like icon, while a leaf indicates that Visual Look Up can share information on plants.

You don't need to have taken the photos recently or even with an iPhone for Visual Look Up to recognize them. An image of me standing in front of London's Tower Bridge, taken with a Canon PowerShot S200 12 years ago, was recognized. But Visual Look Up can be kind of hit-and-miss at this point as Apple fleshes out the feature for iOS 15's final release. Sometimes, you'll get info; sometimes, you won't.

iOS 15 Live Text and Visual Look Up vs. Google Lens: How they compare

To find out how Apple's initial efforts compare to what Android users enjoy right now, I took a walk around San Francisco, armed with an iPhone 11 Pro Max running the iOS 15 beta and a Google Pixel 4a 5G running Android 11. I snapped the photos, headed home and did my comparisons there rather than live in the field. Here's what I found.

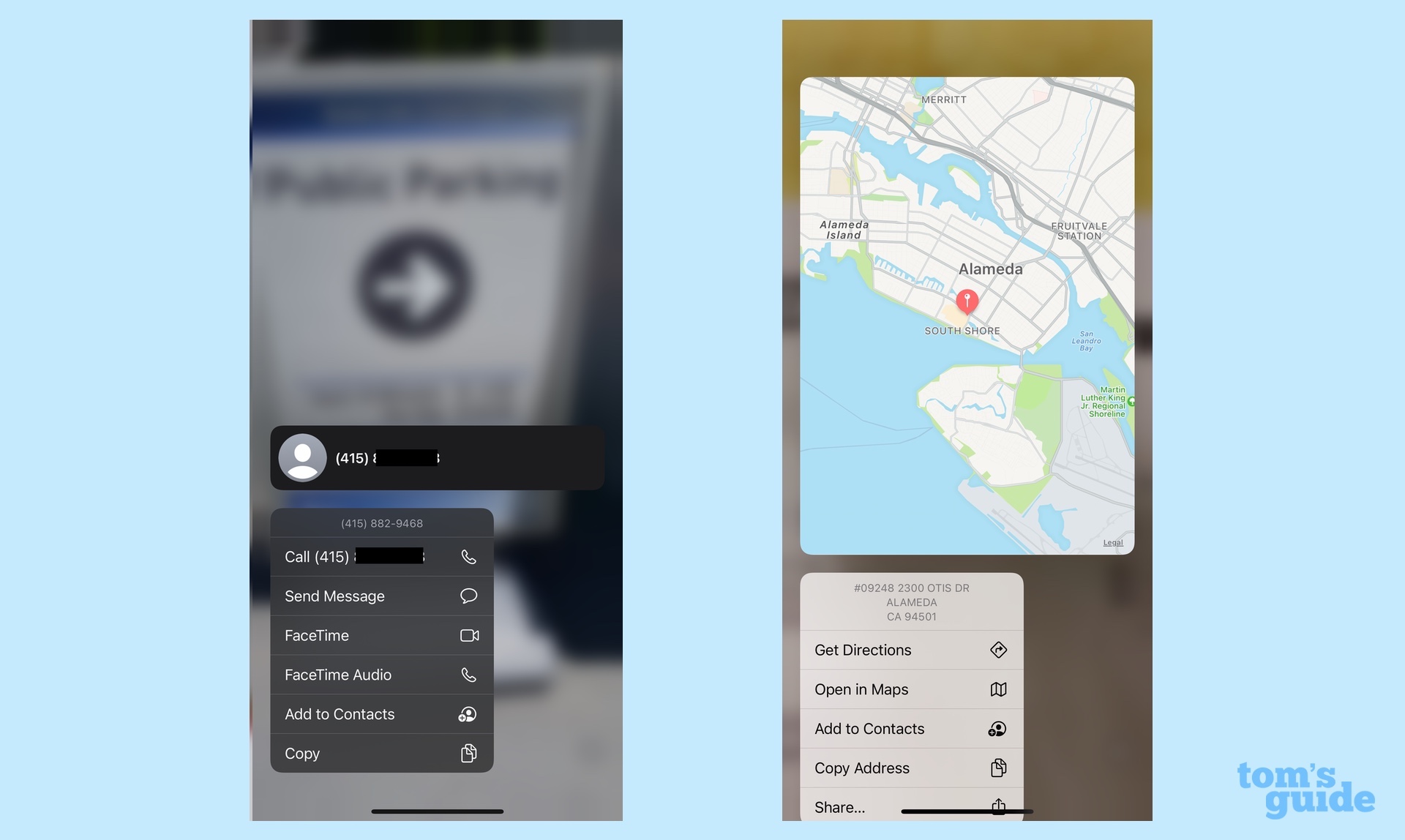

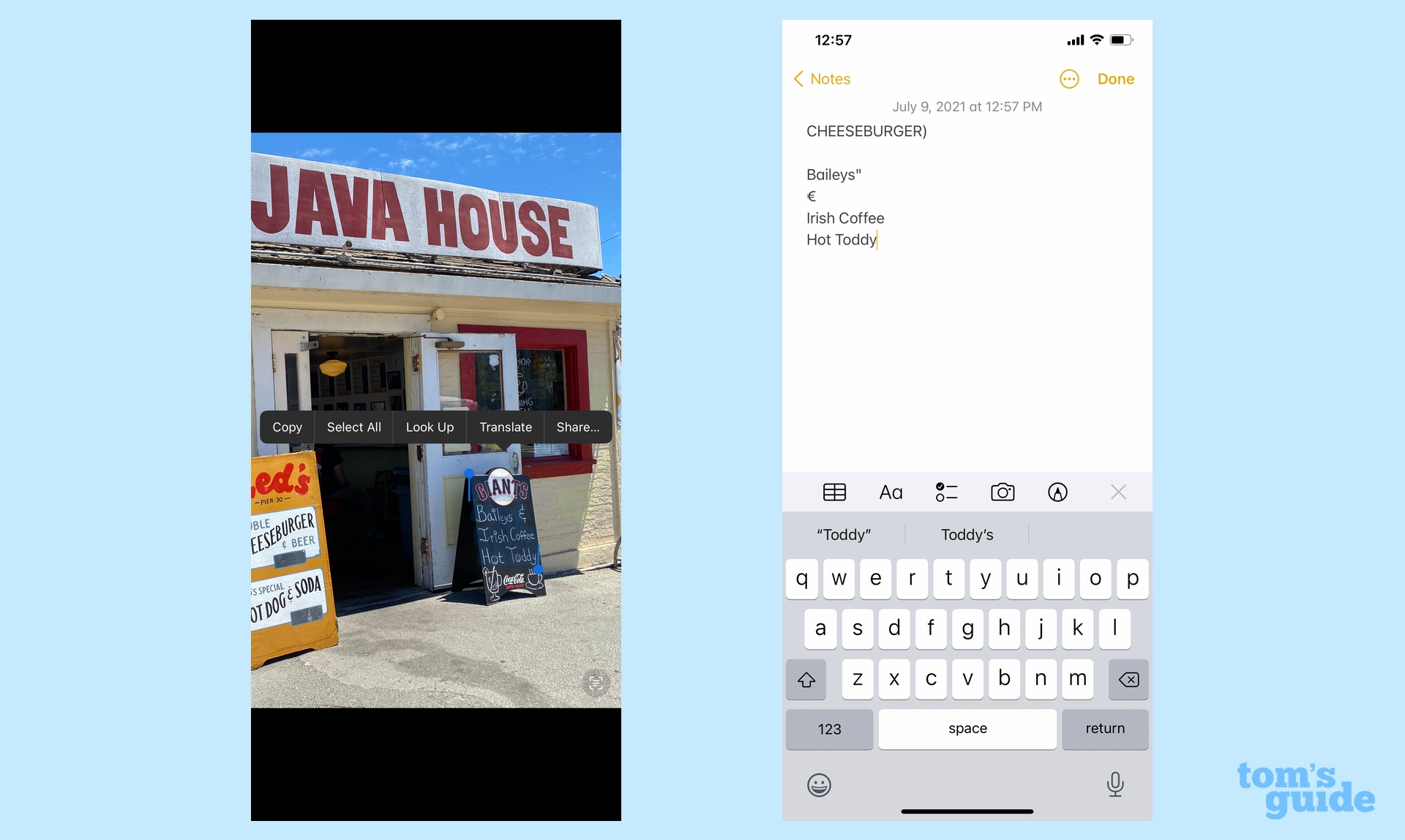

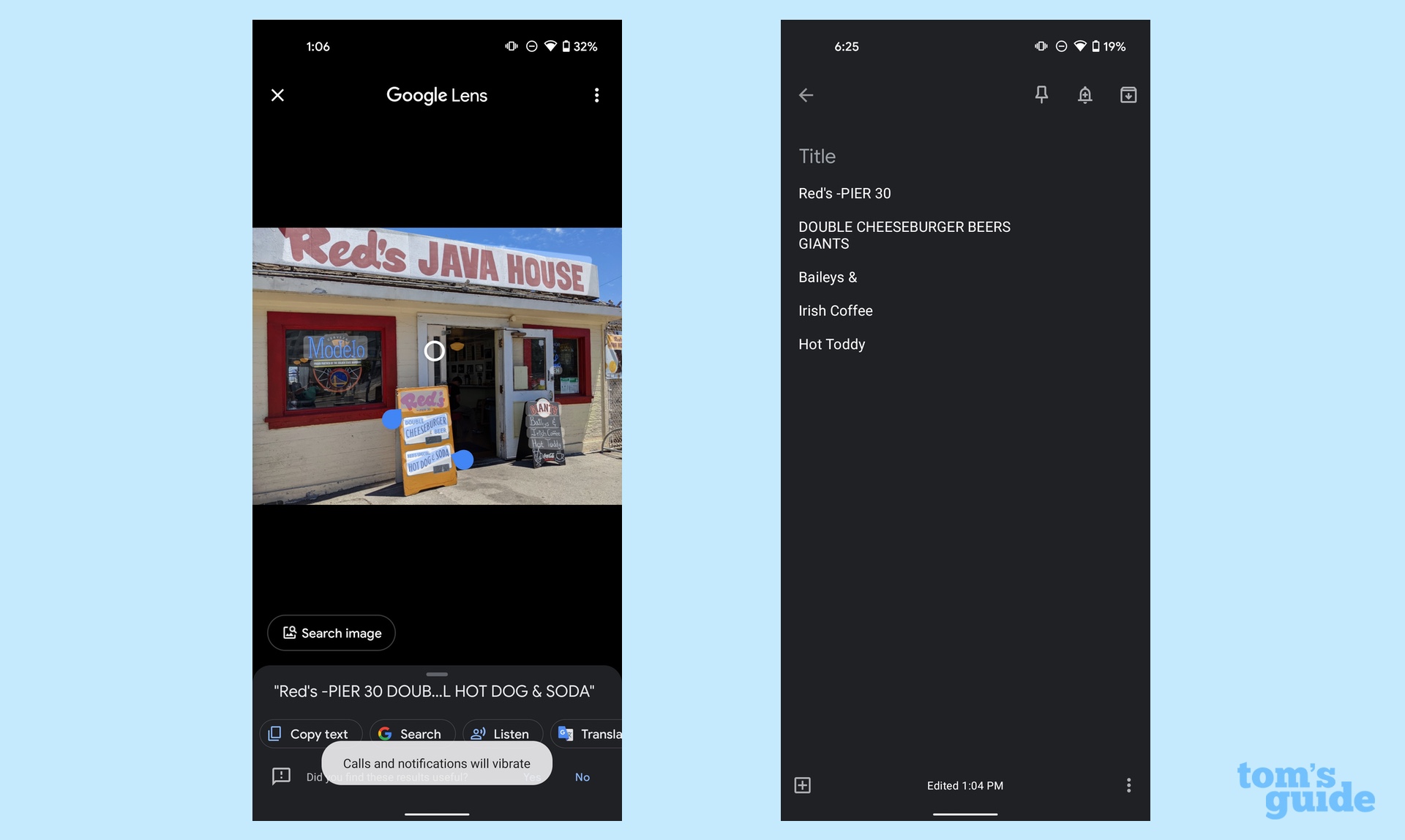

Text capture: Before stopping for lunch at one of my favorite eateries, I took a photo of the restaurant's exterior. I had to zoom in on the iPhone's photo to make sure I was capturing the intended text, but Live Text successfully copied the word Cheeseburger, even though that appears on a slant in the sign. (It added a superfluous parenthesis, but what can you do?) Live Text also grabbed the handwritten text promising Baileys & Irish Coffee Hot Toddy, though it stumbled translating that ampersand.

Google Lens successfully recognized the ampersand in the handwritten sign and also was able to capture the cheeseburger text. However, I found the text selection controls for this particular image to be less precise. Selecting the handwritten sign also copied the text from the Giants logo, and I had to copy the entire text of the Cheeseburger sign rather than a single word. In this test at least, Live Text holds its own against Google Lens.

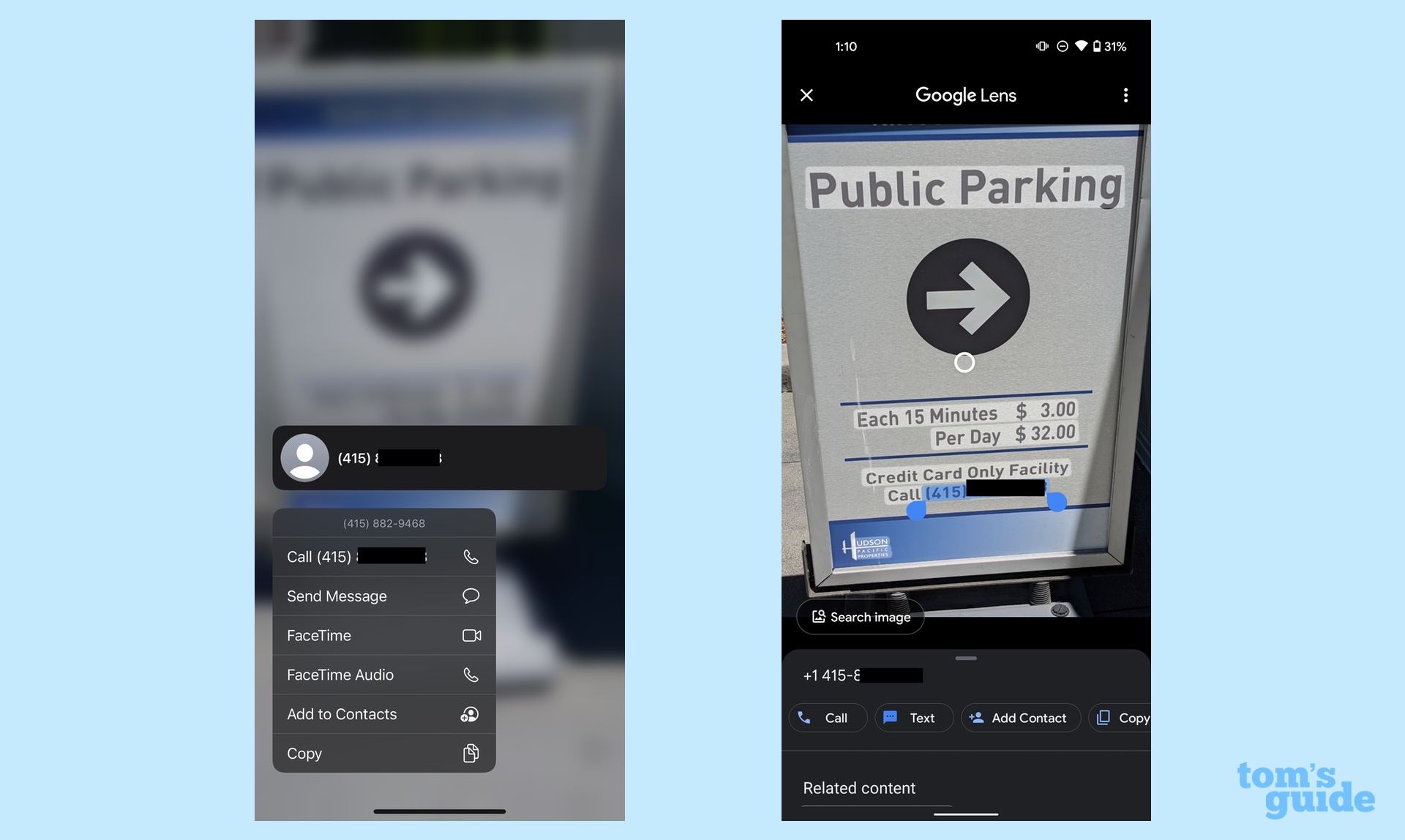

Phone numbers: To test out the ability to call phone numbers I had captured with my phone's camera, I snapped a shot of a parking lot sign. I don't find Live Text's long-press on the number to be a particularly intuitive feature, but the pop-up menu appeared without a problem. Google Lens was a little bit more fussy. I had to carefully select the phone number text, area code included — only then did a call option appear in the menu. I think Apple may have come up with a more elegant solution than Google here.

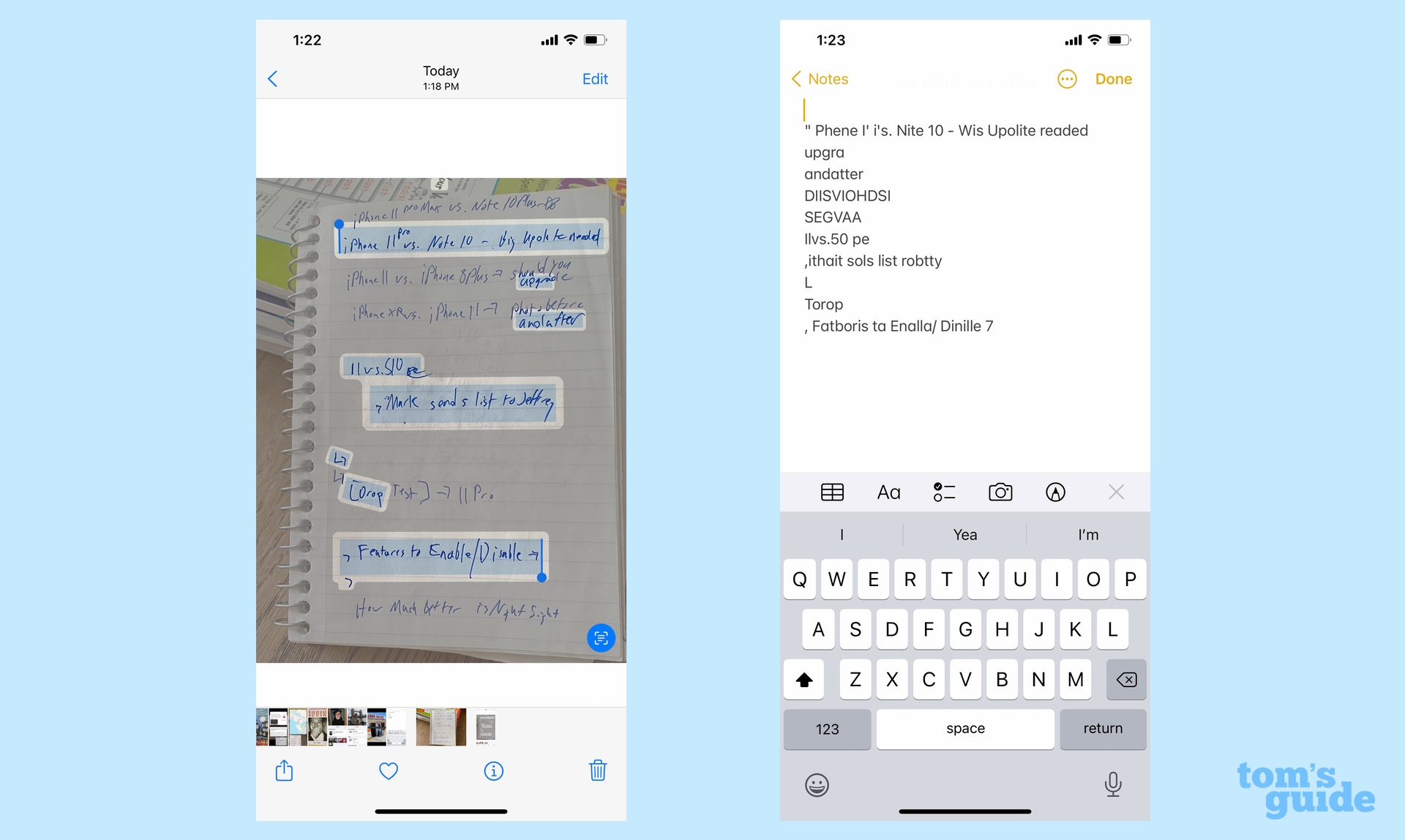

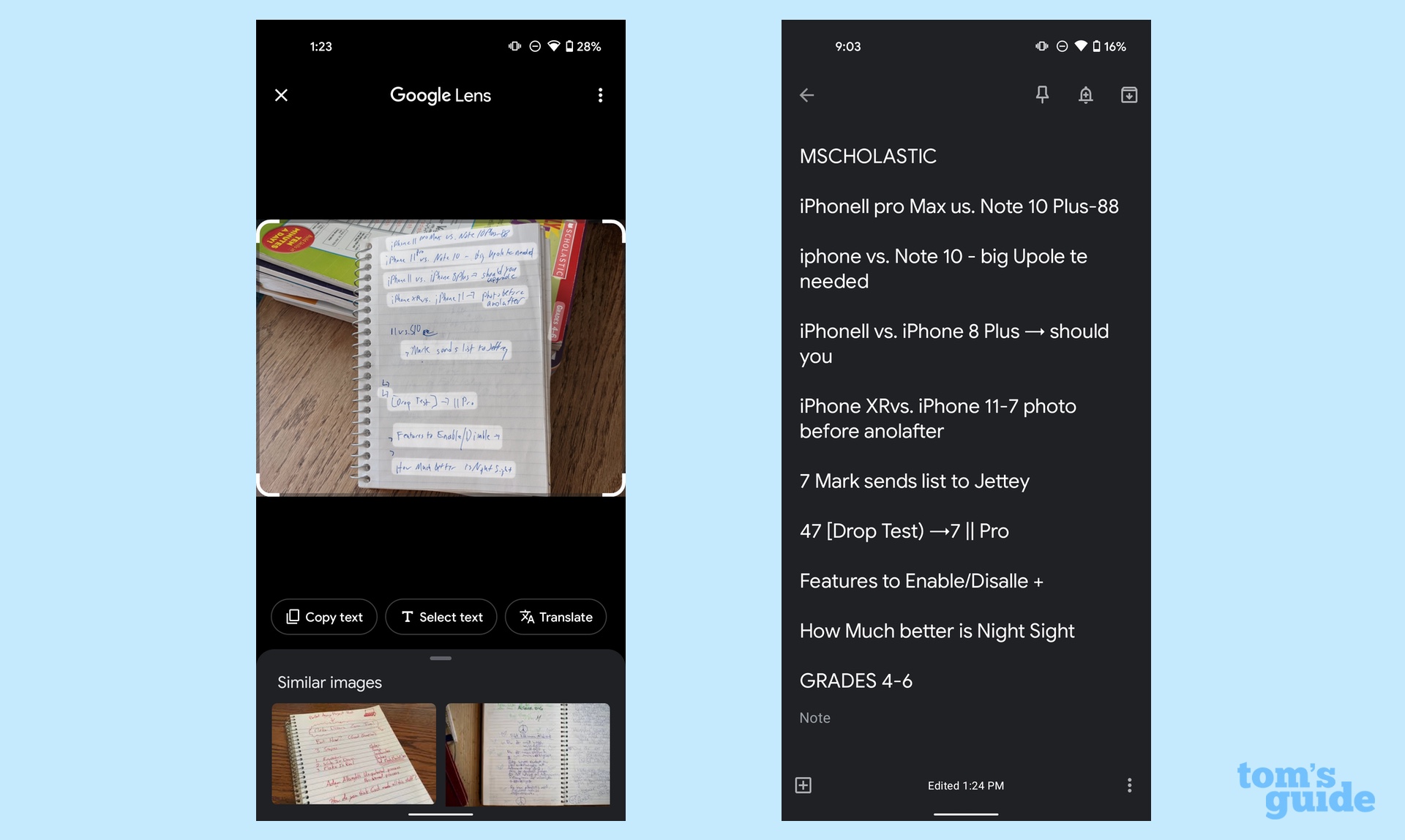

Handwriting: We've already seen Live Text and Google Lens show their stuff with written text on the Red's Java Hut signage, but what about actual handwriting? Knowing you can easily copy your writing into notes can be a real time-saver when it comes to transcribing notes from lectures, meetings or brainstorming sessions.

I would describe my own handwriting style as "hastily scrawled ransom note" so capturing it is quite a test for both Live Text and Google Lens. It's a task that Live Text simply isn't up to. First, as you can see from the screenshot, it only captured every other line from my notes on potential iPhone 11 stories from 2019. The text I was able to paste into Notes didn't include one actual word of what I had written down — somehow "Features to Enable/Disable" became "Fatboris ta Enalla/Dinlille 7." Back to the drawing board with this feature, I think.

Google Lens fares better here, though only just. It captures most, but not all, of the handwritten text (and some of the text from the books in the background). The pasted notes resemble actual human language, with some transcription errors. ("Disable" becomes "Disalle.") It's not perfect, but it's much farther along than what Apple has to offer.

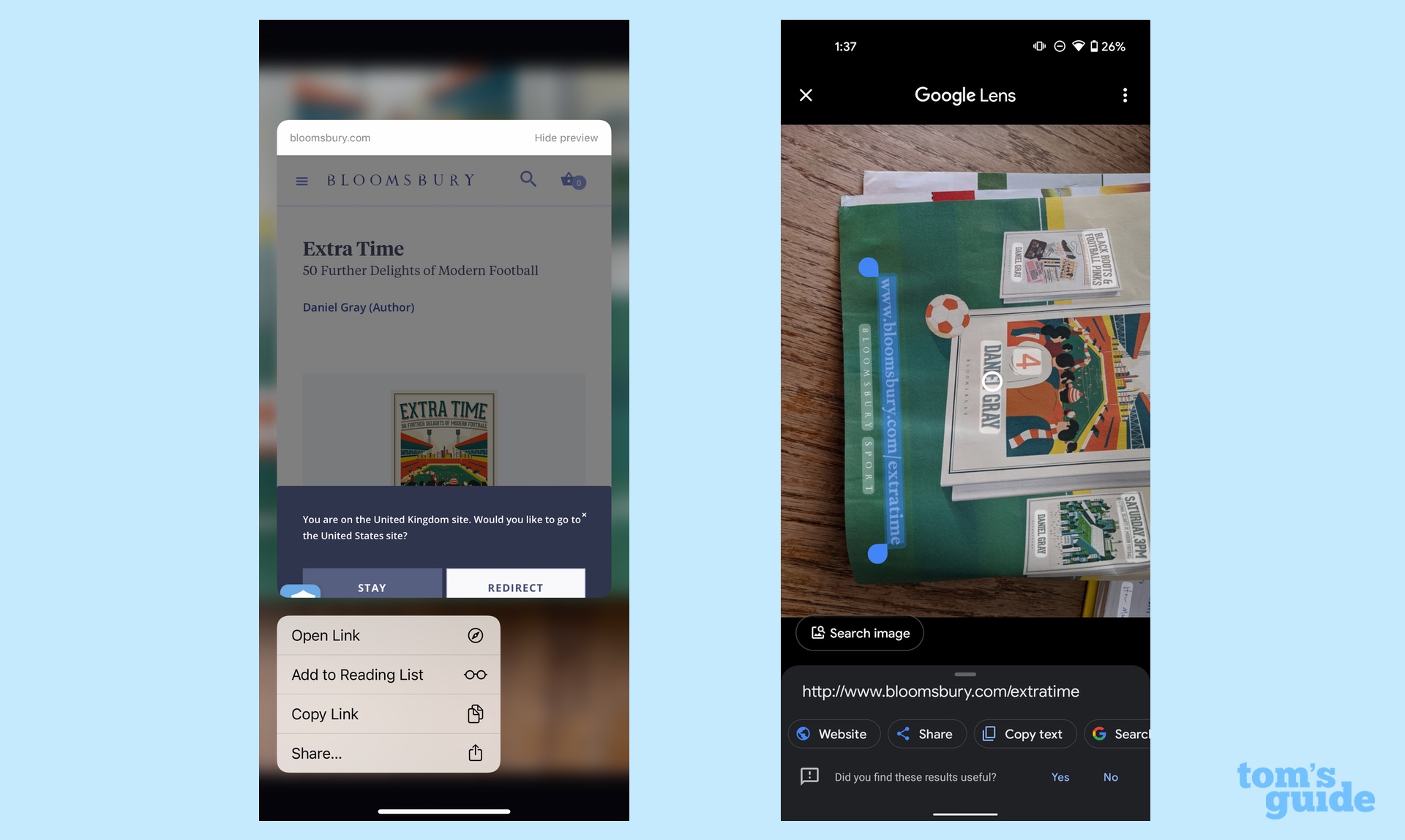

URLs: We could test the ability of Live Text and Google Lens to look up web addresses or physical ones, and I decided to go for the former. Long-pressing on the URL in Photos gave me the option of opening a link in Safari; I could also tap a Live Text button on the image and tap the URL to automatically jump to Safari. Similarly, Google Lens on the Pixel 4a recognized the text and presented me with a button that took me to the right URL in Chrome. This feature works just as well on both phones.

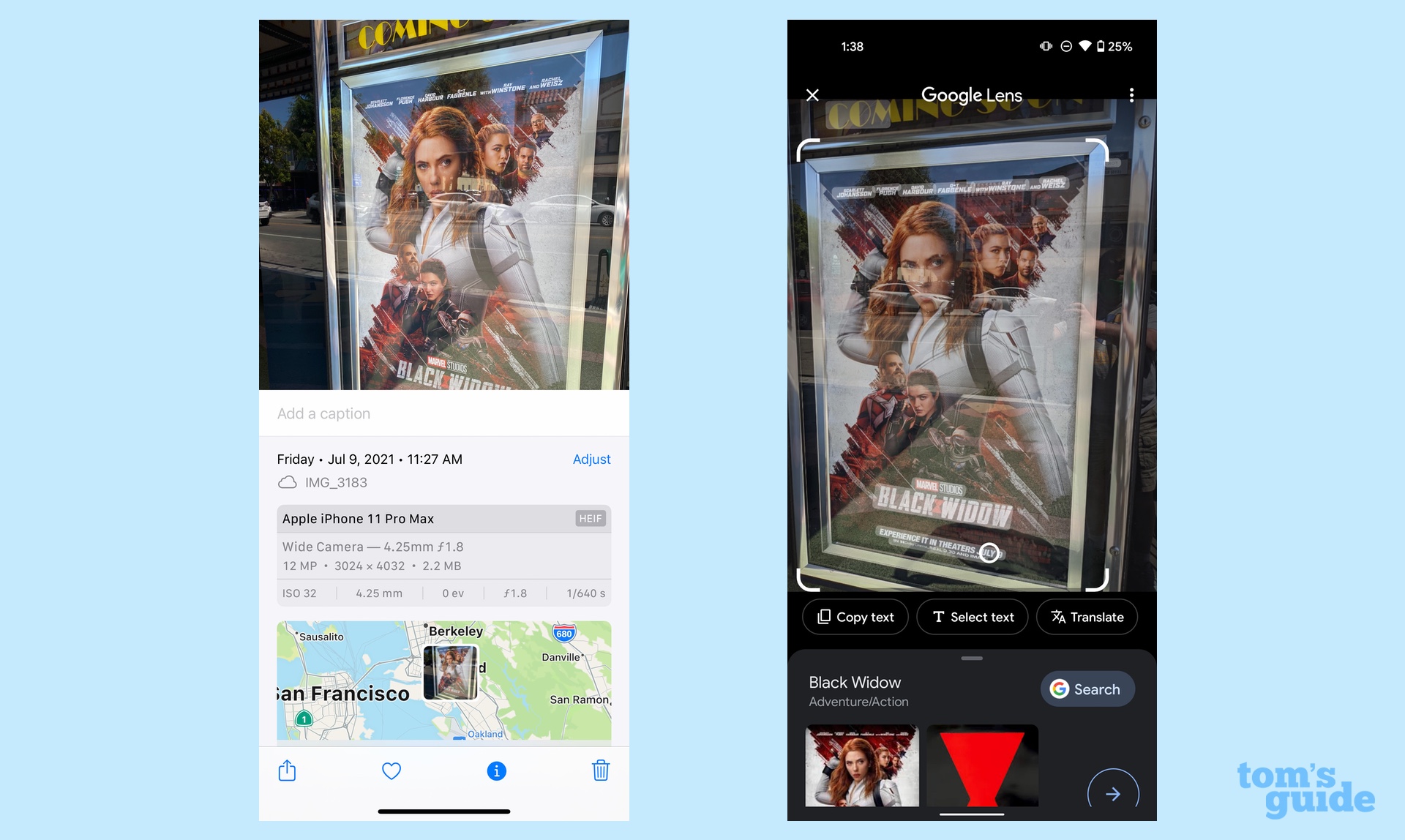

Movie Poster: Turning to Visual Look Up, it may be able to recognize books — it successfully identified David Itzkoff's Robin Williams biography, even linking to reviews — but movie posters seem to flummox it. A shot of the Black Widow poster outside my local cineplex yielded no additional information on the iPhone. Even though this isn't the best of photos, Google Lens on the Pixel 4a still yielded links to reviews and similar images.

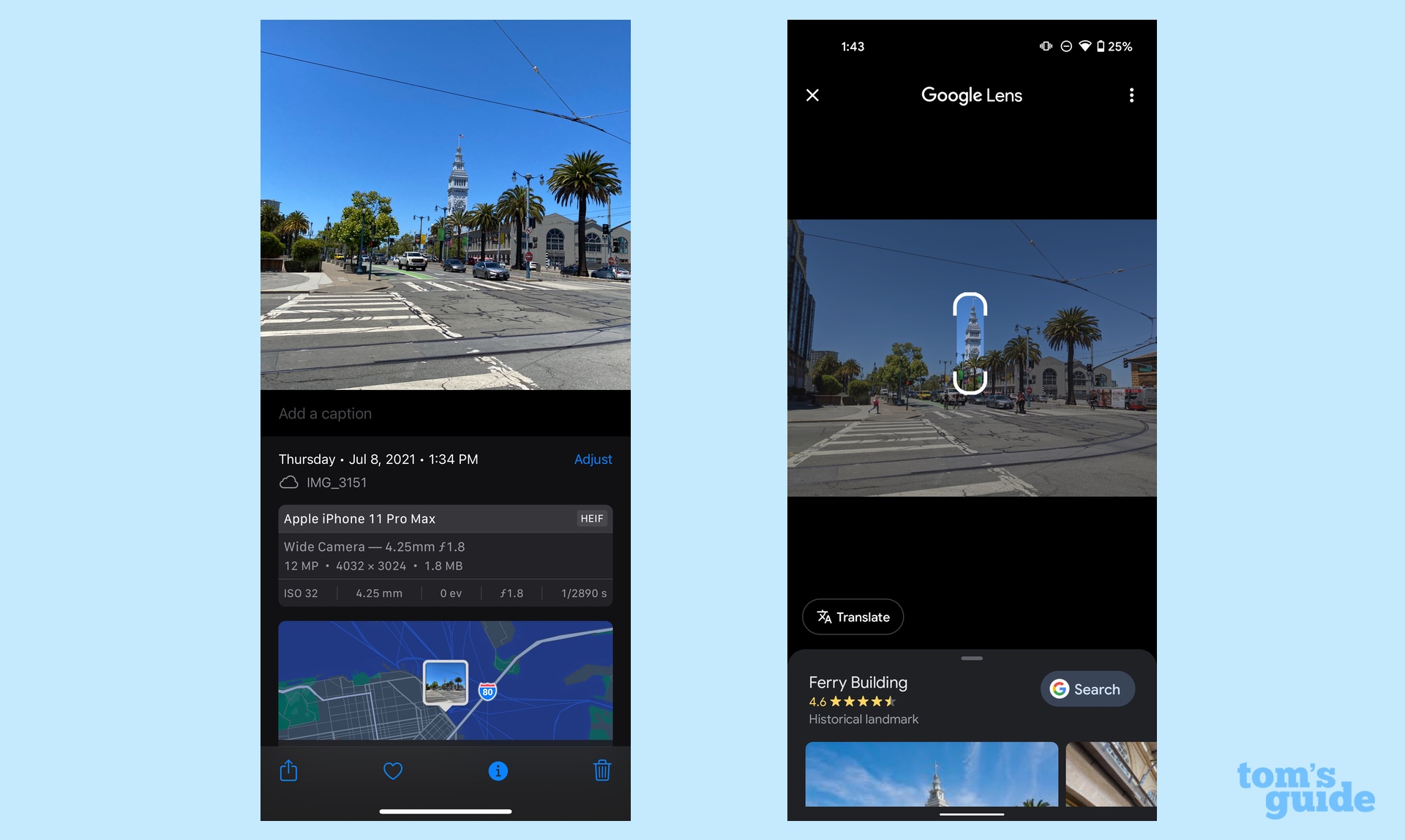

Buildings: I only saw the Ferry Building from a distance in my walk around San Francisco, but I thought it would be a good test as to how far Visual Look Up's vision can reach. The answer is not as far as that, apparently, as I didn't get any information on this fairly recognizable San Francisco fixture. Google Lens had no such trouble, bad angle and all.

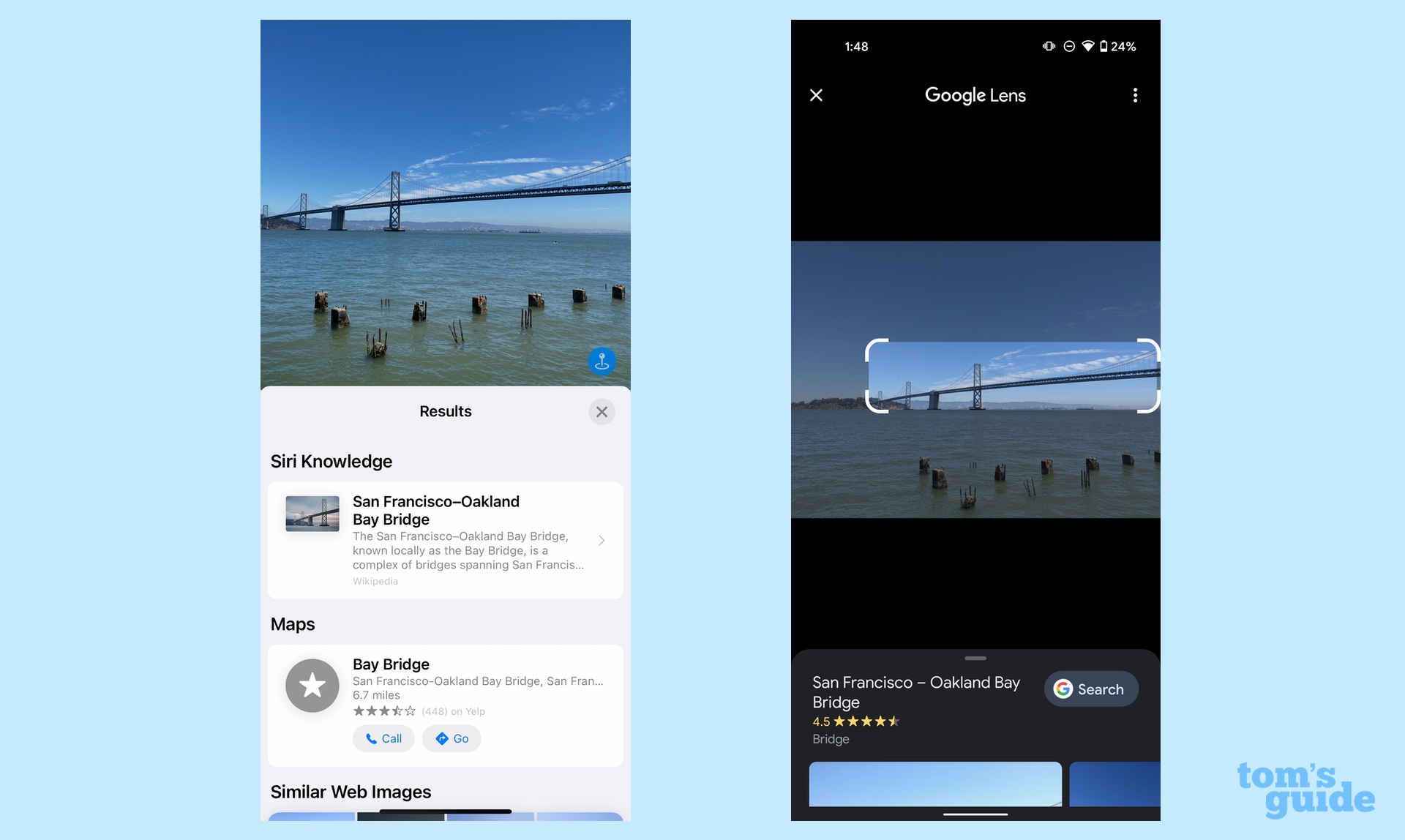

Landmark: At least Visual Look Up can recognize landmarks from a distance. Even from the shoreline, iOS 15's new feature could pull up information on the Bay Bridge, even offering a Siri Knowledge summary of the bridge's specs. Google Lens results focused more on images, though there was a search link that took me to Google results.

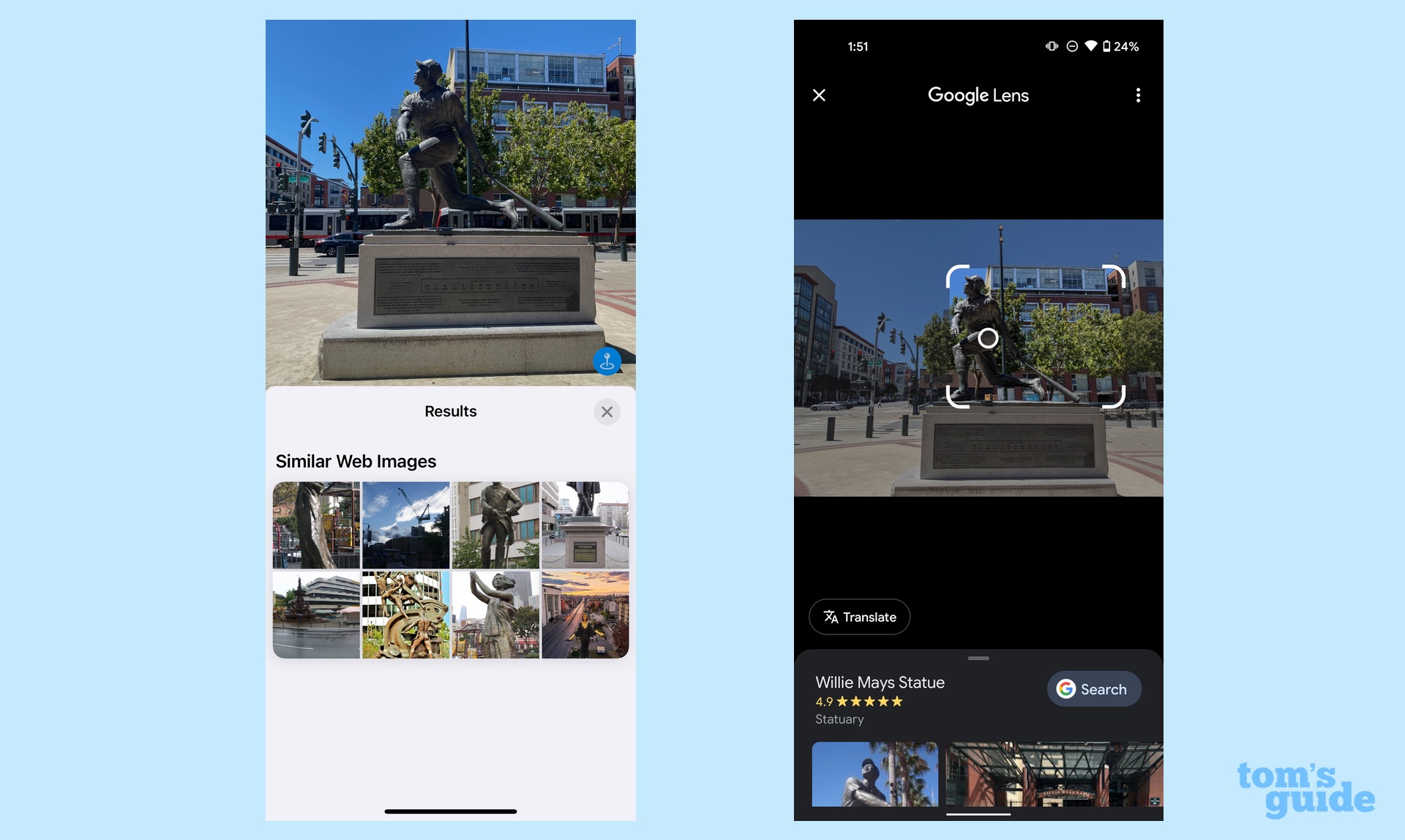

Statue: I regret to inform you that news of Willie Mays' accomplishments has not yet reached Visual Look Up. iOS 15's feature produced similar web images (a series of unrelated statues) but nothing else. In addition to a Google search link, the similar images produced by Google Lens at least featured other baseball greats, including statues of other Giants around the same location as the Willie Mays statue.

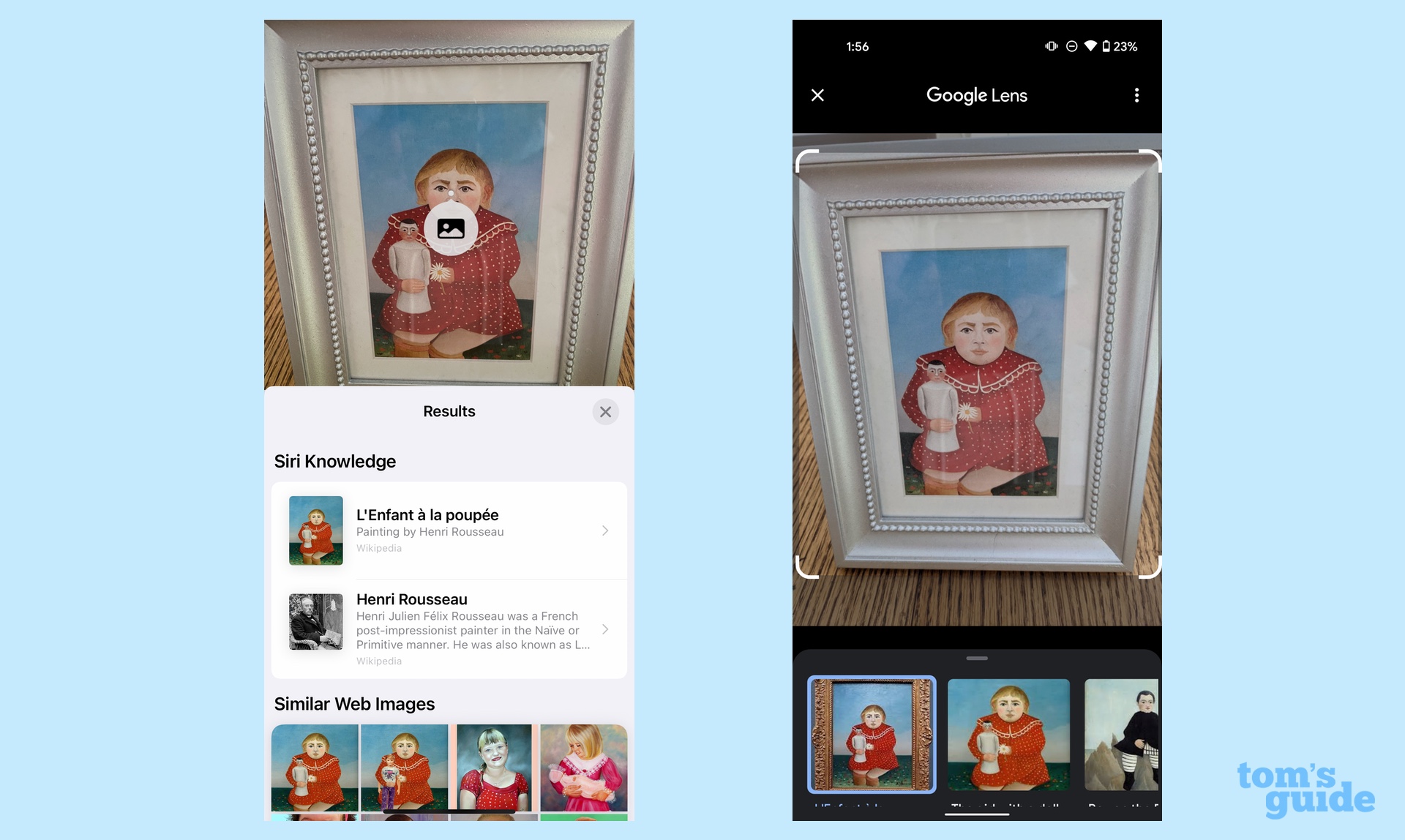

Artwork: Visual Look Up may not know baseball, but it certainly understands art. A picture of Rousseau's L'Enfant à la Poupée brought up Siri Knowledge links not just to the artwork but to the artist as well. Google Lens offered its usual assortment of similar images and the ubiquitous search link. Apple's results were more enlightening.

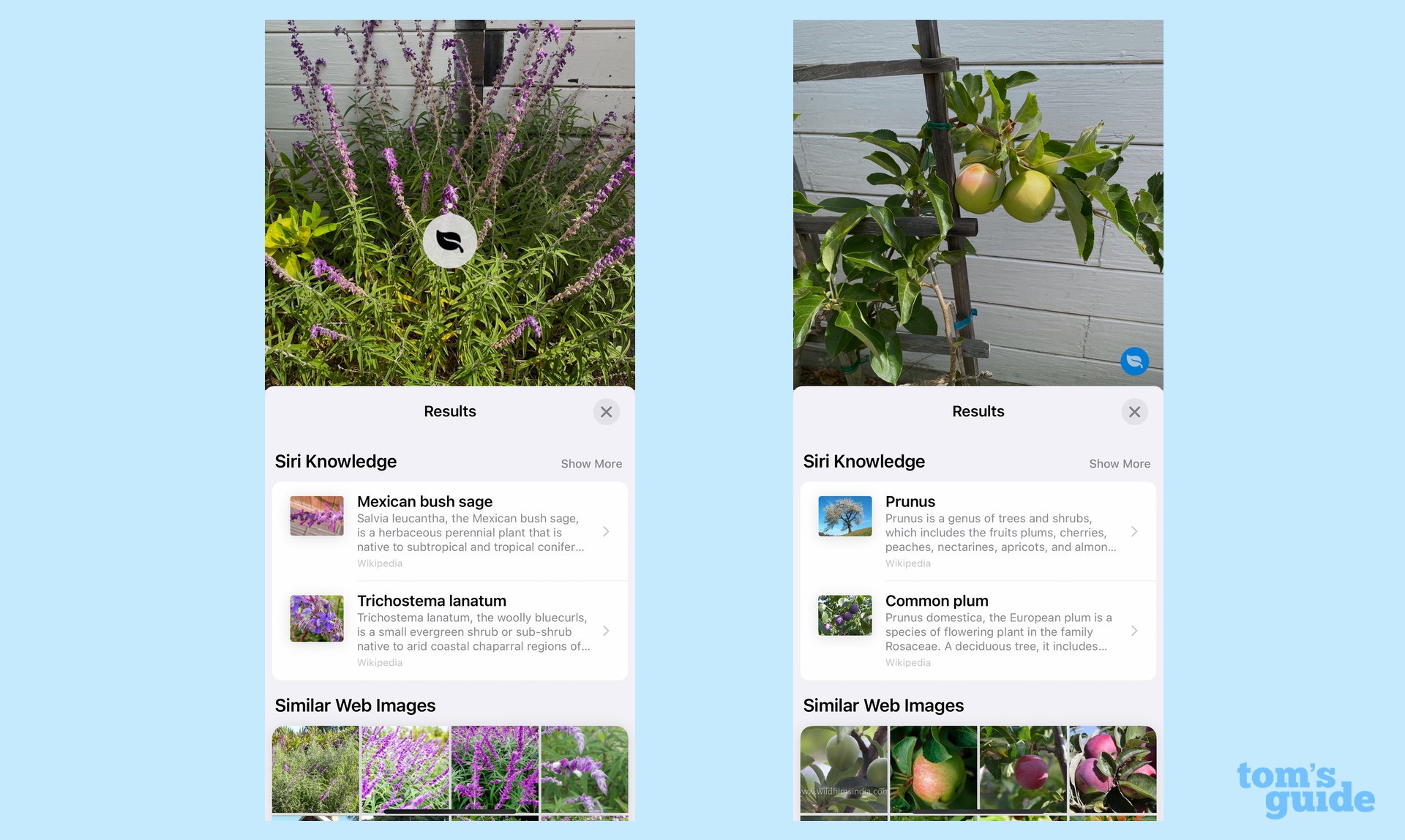

Plant Life: I got mixed results when I set Visual Look Up loose in my garden. The iOS 15 feature correctly identified the Mexican bush sage in my backyard, but it seems to think that the apple tree I have is growing plums. Google Lens correctly identified both plants and even took a stab at trying to figure out what kind of apples are growing on my tree.

iOS 15 Live Text and Visual Look Up vs. Google Lens: Early verdict

iOS 15 may be in beta, but Live Text compares favorably to Google Lens in just about everything but transcribing handwritten text. That's something Apple can probably fine-tune between now and when the final version of iOS 15 ships, though Google has had a head-start here and it still doesn't get everything completely accurate.

The gap between Visual Look Up and Google Lens is a bit wider, as Visual Look Up's results can be hit or miss. When it does have info, though, it's right there in the results field instead of sending you to a search engine for additional data.

In other words, Live Text and Visual Look Up are still very much betas. But you can't help but be impressed with Apple's initial work.

Philip Michaels is a Managing Editor at Tom's Guide. He's been covering personal technology since 1999 and was in the building when Steve Jobs showed off the iPhone for the first time. He's been evaluating smartphones since that first iPhone debuted in 2007, and he's been following phone carriers and smartphone plans since 2015. He has strong opinions about Apple, the Oakland Athletics, old movies and proper butchery techniques. Follow him at @PhilipMichaels.
