Google Pixel 6 will make photography more inclusive — and that’s worth celebrating

Google is teaming up with image experts to make sure photos are accurate for everyone

You may have missed the news tucked away at the end of Google’s I/O 2021 keynote earlier this week, but the software giant took a step toward tackling an issue that has plagued image processing since the advent of color camera technology. Google wants to make mobile photography more inclusive, with more beautiful and accurate images of people of color.

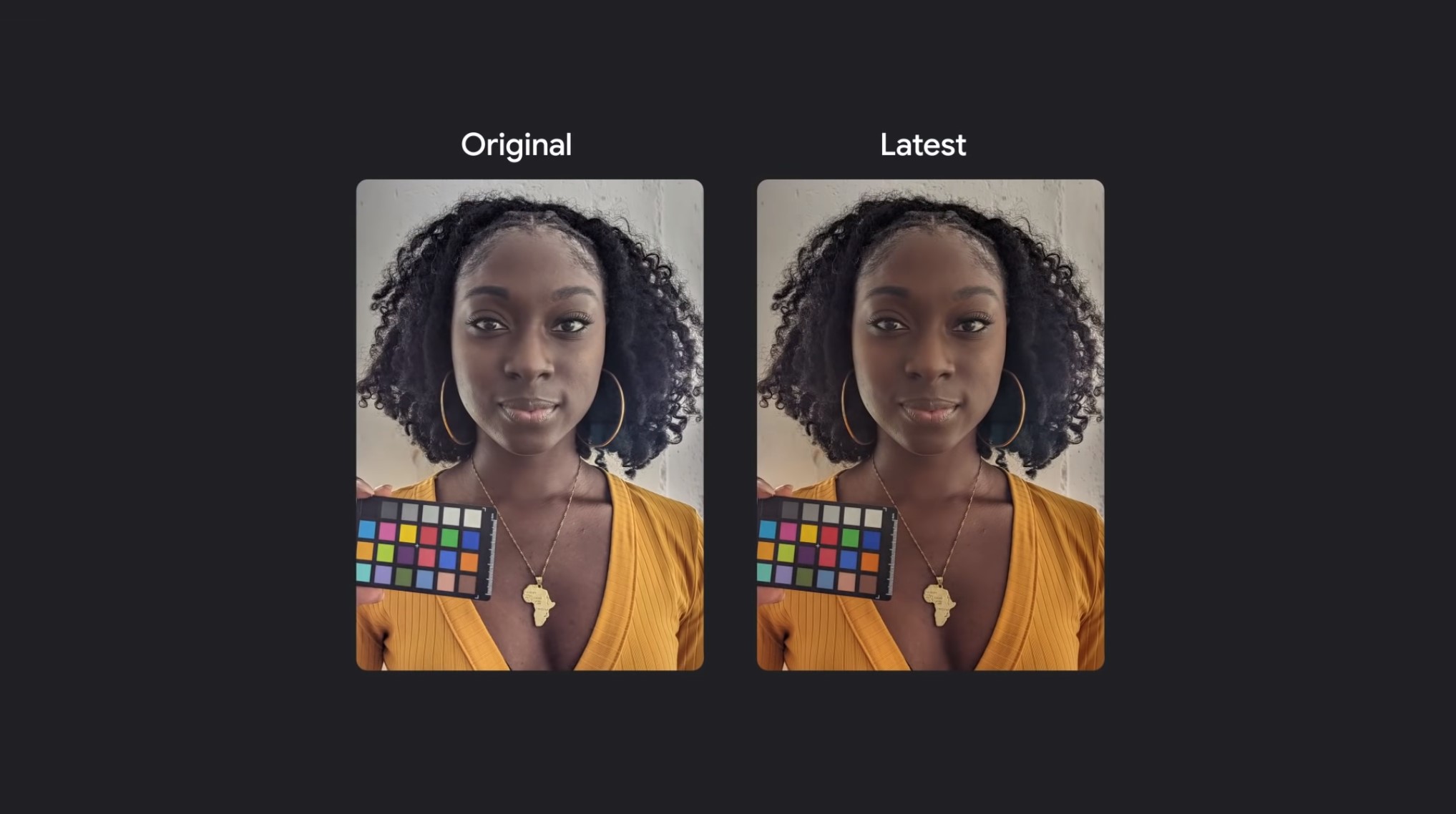

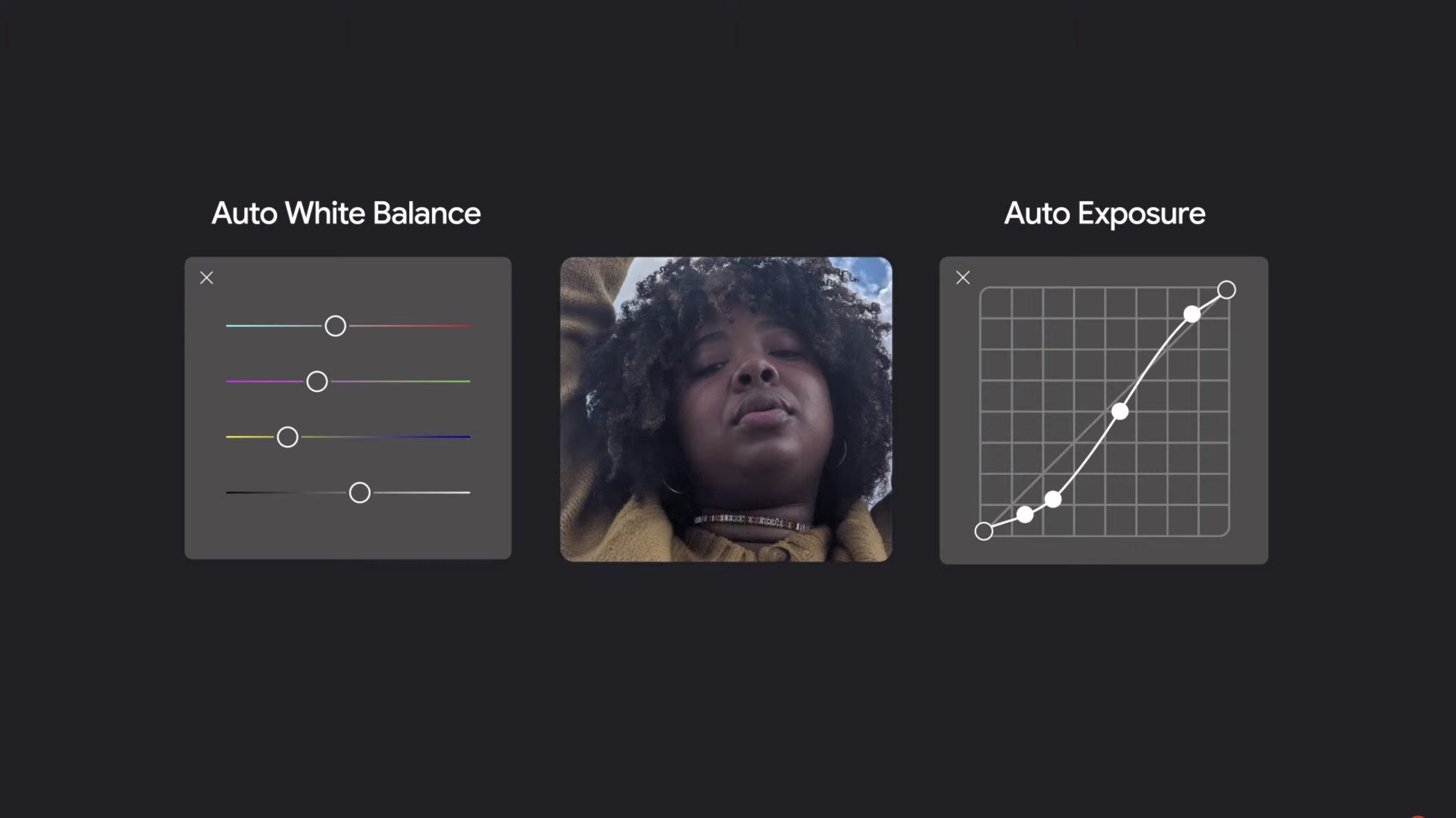

To that end, Google is teaming up with a diverse group of image experts to make the company’s camera and image products better. From there, its engineers are working to improve the accuracy of auto white balance and auto exposure algorithms using diverse image data. The changes should produce more accurate images of people, with an emphasis placed on those with darker skin.

Google's efforts will come to the Pixel phone first, with the Pixel 6 introducing the improved algorithms in the fall, but Google plans to offer the improved computational software to other Android camera phones.

The news hits close to home for me. My wife is dark skinned. I’m a few shades lighter. Our four kids are gradations between us. That mix means we don’t all photograph the same. Sure, the Pixel 3a took great pictures, but there were times when it failed to appropriately capture a given moment. My wife’s beautiful skin didn’t always look right. Her natural afro puffs would blend into the background. Our fairer-skinned children would stand out unnaturally next to their darker-skinned siblings. This wasn’t the case for all photos, as some pictures fared better than others.

This sort of thing isn’t new, and the usual answer is that it can be fixed with “proper lighting.” It isn’t an issue that’s solely linked to the Pixel line of smartphones either: my Samsung Galaxy S21 has it too, and I’m sure other phones struggle as well. This is why hearing about Google’s latest efforts excites me. We’ll potentially be able to take photos that properly depict how we look, all in one family photo.

You can get the complete outline of Google's photo plans in a video featuring engineers talking about the process. Essentially, Google’s engineering team made changes to its computational algorithms, making it easier to see dark skin. This also helps the company’s devices pick up different hair textures. Instead of letting curly hair blend in with the background, cameras will be able to better differentiate it. In the end, Black and Brown people will be photographed naturally, with no adjustments needed.

Current photography, intentionally or not, tends to lean toward fairer skin. According to Zippia, a majority of software engineers in the U.S. are white men. Asian men likely make up a plurality of engineering teams in Japan, China and Korea. Whether it’s a facial recognition app or what’s supposed to be one of the world’s best mobile cameras, people of color aren’t usually a priority.

Google is looking to change things. This won’t fix everything, but Google has stated that its engineers are still working to improve its photography-based tech in hopes of better representing people of all skin tones. As a Black person who loves to take and share photos of his family, I’m looking forward to what the company has in store.

Kenneth Seward Jr. is a freelance writer, editor and illustrator who covers games, movies and more. Follow him @kennyufg and on Twitch.
