Tech Events

Latest about Tech Events

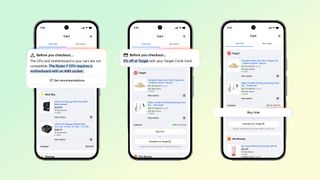

Google’s new 'Universal Cart' can monitor prices, suggest products and even buy items for you — here's how it works

By Olivia Halevy published

A new AI-powered shopping feature was announced at today's Google I/O 2026 event. Here's what to expect.

April Fools' Day 2026 RECAP — rating the best (and worst) jokes and pranks

By Jeff Parsons last updated

We spent April Fools’ Day 2026 judging the best (and worst) pranks that companies tried to pull. Take a look back at what went down.

Apple's WWDC 2026 conference kicks off in June: The new Gemini-powered Siri is finally coming

By Scott Younker published

Apple announced that WWDC 2026 will soon show off its 'latest software and technologies.'

The Tom's Guide Best in Show MWC 2026 Awards is now accepting submissions — everything you need to know

By Jeff Parsons published

We're now accepting submissions for our Best in Show at MWC 2026 Awards and we want to hear from you.

12 CES 2026 gadgets you can actually buy right now

By Anthony Spadafora published

CES is always full of cool new tech, but these are our favorite products from the show floor that you can actually buy.

CES 2026 LIVE — all the best new gadgets announced so far

By Jason England, Mark Spoonauer last updated

Tom's Guide is at CES 2026, bringing you all the latest news and hands-on from our team on the ground in Las Vegas!

7 weirdest gadgets of CES 2026

By Anthony Spadafora published

CES 2026 made us feel like we're living in the future, but the show floor had plenty of oddities too. Here are the weirdest gadgets we saw this year.

CES 2026 Day 4 — 7 top new gadgets you need to see

By Jeff Parsons published

Our pick of some of the best new gadgets we saw on Day 4 of CES 2026.

Here at Tom’s Guide, our expert editors are committed to bringing you the best news, reviews and guides to help you stay informed and ahead of the curve!
