Smile! This Free Photo Storage Service Used Millions of Faces to Train a Creepy AI

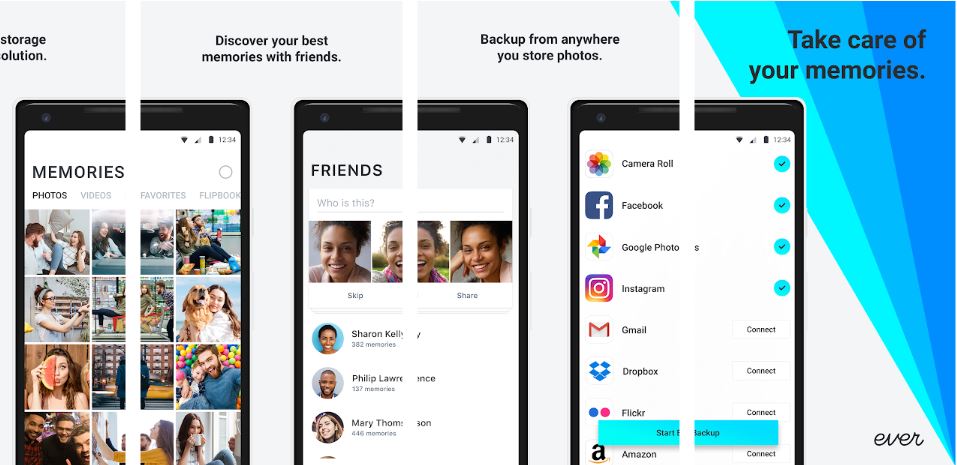

Ever, a photo storage app, reportedly used millions of user photos to train facial-recognition algorithms without proper disclosure.

Ever, a photo storage app and website, reportedly used millions of uploaded user photos to train facial recognition technology without proper disclosure, then put the technology up for sale to third-party entities, including law enforcement and the military.

The app, according to an NBC News report, didn't clarify to millions of users that their uploaded photos were being used to train facial recognition technology. Ever's privacy policy was updated on April 15 to be more transparent, but the change came only after NBC News contacted the company.

The lengthy privacy policy now states, "Ever uses facial recognition technologies as part of the Service. Your Files may be used to help improve and train our products and these technologies. Some of these technologies may be used in our separate products and services for enterprise customers."

NBC News spoke with several Ever users who uploaded their photos to the site, and most had no idea that their photos were being used for a side project. Those photos helped train a facial recognition algorithm sold under the brand Ever AI. Founded in 2013, Ever AI has contracts with SoftBank Robotics, the company behind a robot capable of recognizing human emotions.

Ever AI CEO Doug Aley disputed NBC News' claims, telling CNET that the NBC News report was inaccurate and that the company isn't using photos to generate facial recognition algorithms for Ever AI (despite the privacy-policy update that states the company might). Aley also told NBC News that the privacy policy update was made because of "several recent stories," not NBC's inquiries.

"To be absolutely clear, no user information of any kind is provided from our Ever app to our enterprise face-recognition customers," Aley told the Register. "That means that no user images are provided, and no information derived from those images, such as vectors or mathematical representations of the images, are provided to our enterprise customers."

Ever AI has not signed any contracts with law enforcement or military entities, but the company promises that it can "enhance surveillance capabilities" and "identify and act on threats," according to NBC News.

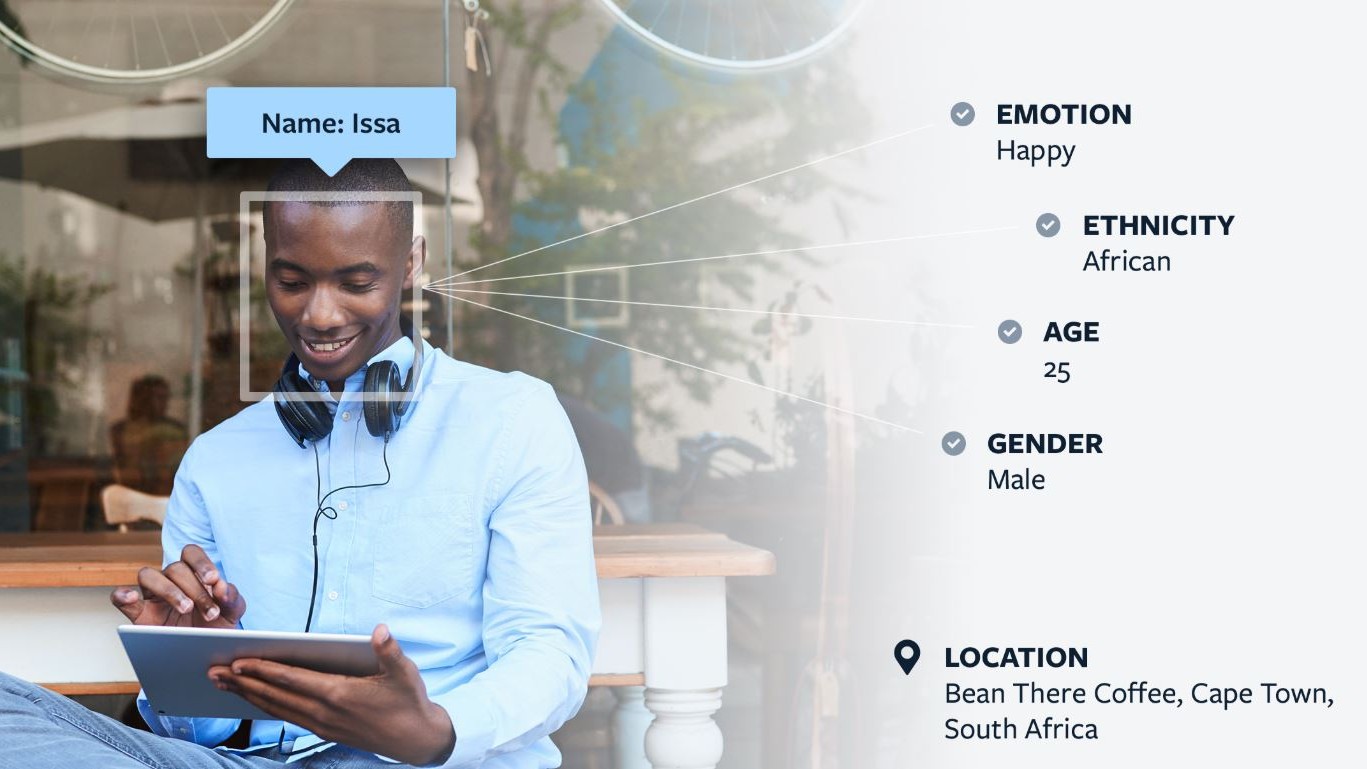

Ever AI claims its facial recognition software is 99.84% accurate, which would make it one of the most accurate products on the market. Using its facial recognition technology, the company offers "attribute identification services," which can determine someone's name, ethnicity, age, emotion, gender and even location.

Aley reportedly told NBC News that Ever shifted to creating facial recognition technology when it became clear that a free photo storage service wasn't as lucrative as the company had hoped.

Ever wouldn't be the first company to use uploaded photos to train facial recognition AI without proper consent. Earlier this year, tech giant IBM was caught using Flickr photos for facial recognition training.

Phillip Tracy is the assistant managing editor at Laptop Mag where he reviews laptops, phones and other gadgets while covering the latest industry news. Previously, he was a Senior Writer at Tom's Guide and has also been a tech reporter at the Daily Dot. There, he wrote reviews for a range of gadgets and covered everything from social media trends to cybersecurity. Prior to that, he wrote for RCR Wireless News covering 5G and IoT. When he's not tinkering with devices, you can find Phillip playing video games, reading, traveling or watching soccer.
