Opinion

Latest Opinion

The DJI Avata 360 is finally here after months of rumors — I tested it and trust me, 'there’s no better drone on the planet'

By Nikita Achanta published

DJI's latest drone, and its first-ever 360-degree model, is also one of its cheapest, and it's a triumph. 8K/60fps video, 120MP stills, and two ways to fly make it a winner.

I watch TV for a living and these are the 5 shows for 2026 I’d actually recommend

By Malcolm McMillan published

I've watched more than 15 TV shows in 2026 so far, and these are the five you need to stream right now.

I spent a week with dual Apple Studio Displays and realized I’ve been lying to myself about glossy screens for years

By Anthony Spadafora published

Apple gave the Studio Display a massive upgrade with its new XDR model, but the choice between nano-texture and standard glass was the game changer for me.

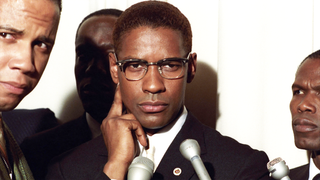

Denzel was robbed of an Oscar in this 1992 biopic — stream it and see for yourself

By Malcolm McMillan published

I'm watching one of Denzel Washington's 52 movies every week this year, and this week, I'm reviewing "Malcolm X," the 1992 biopic that should have won him an Oscar.

3 new to Hulu movies you need to stream this weekend (March 27-29)

By Malcolm McMillan published

Hulu just added a ton of movies worth watching this weekend. Here are my top 3 picks.

3 new to Paramount+ movies you need to stream this weekend (March 27-29)

By Malcolm McMillan published

Paramount+ just added a ton of movies worth watching this weekend. Here are my top 3 picks.

3 best new to Prime Video shows to binge-watch this weekend (March 27-29)

By Martin Shore published

Planning to binge-watch something new this weekend? Here's our weekly guide to the best new to Prime Video series you should be streaming right now.

3 best new to Netflix movies you need to watch this weekend (March 27-29)

By Martin Shore published

Looking for Netflix movies to watch this weekend? I've rounded up the three best new to Netflix movies I'd recommend adding to your weekend watchlist.

