F8 Showed That Facebook Has Learned Nothing From Its Privacy Blunders

Facebook talked about doing a better job of protecting our privacy at its annual conference, but it's going to take a lot more than AI tweaks.

If you were expecting Facebook, stung by public outcry over how it handles personal data, to pump the brakes on updates and enhancements to its sprawling social network, let the company's public pronouncements at this week's F8 developers conference set you straight.

The company vowed to do better with safeguarding user privacy in the aftermath of the Cambridge Analytica scandal, just as it does anytime someone discovers Facebook is being cavalier about data collection. But don't confuse that conciliatory stance with an apology or an indication that it might press pause on any efforts to expand Facebook's reach.

While Facebook has a responsibility to address privacy concerns and other potential sources of harm to its users, CEO Mark Zuckerberg told the F8 audience in his May 1 keynote that "we also have a responsibility to move forward on everything else that our community expects from us, too... to keep building services that help us connect in meaningful new ways as well."

Listen to that speech and other presentations by Facebook executives during F8, and you could be forgiven for wondering whether anyone at Facebook really learned a lesson after the public outcry and congressional hearings of the last few weeks.

To hear Facebook tell it, the problem is that the tools it has in place to safeguard against abuses by fraudulent accounts or data-harvesting firms just need some fine-tuning, and not that the very nature of how Facebook goes about its business invites those kinds of abuses.

Facebook reps would probably protest that that's an unfair conclusion, and to give the company its due, significant portions of this year's F8 did offer a look at what the company is doing to protect its users. Zuckerberg used his keynote to introduce Clear History, a forthcoming addition to Facebook that will let you clear info about apps and websites you interact with, similar to clearing out cookies from a web browser. (Of course, with Zuckerberg's post introducing the feature warning of a diminished Facebook experience, you wonder how much Facebook really wants you to use Clear History.)

Day Two of F8 offered more reassurances from Facebook that it wants all the data it collects about you to be used for good and not ill. Isabel Kloumann, a research scientist at Facebook, talked about the company's commitment to using artificial intelligence ethically and removing bias from AI-fueled decisions.

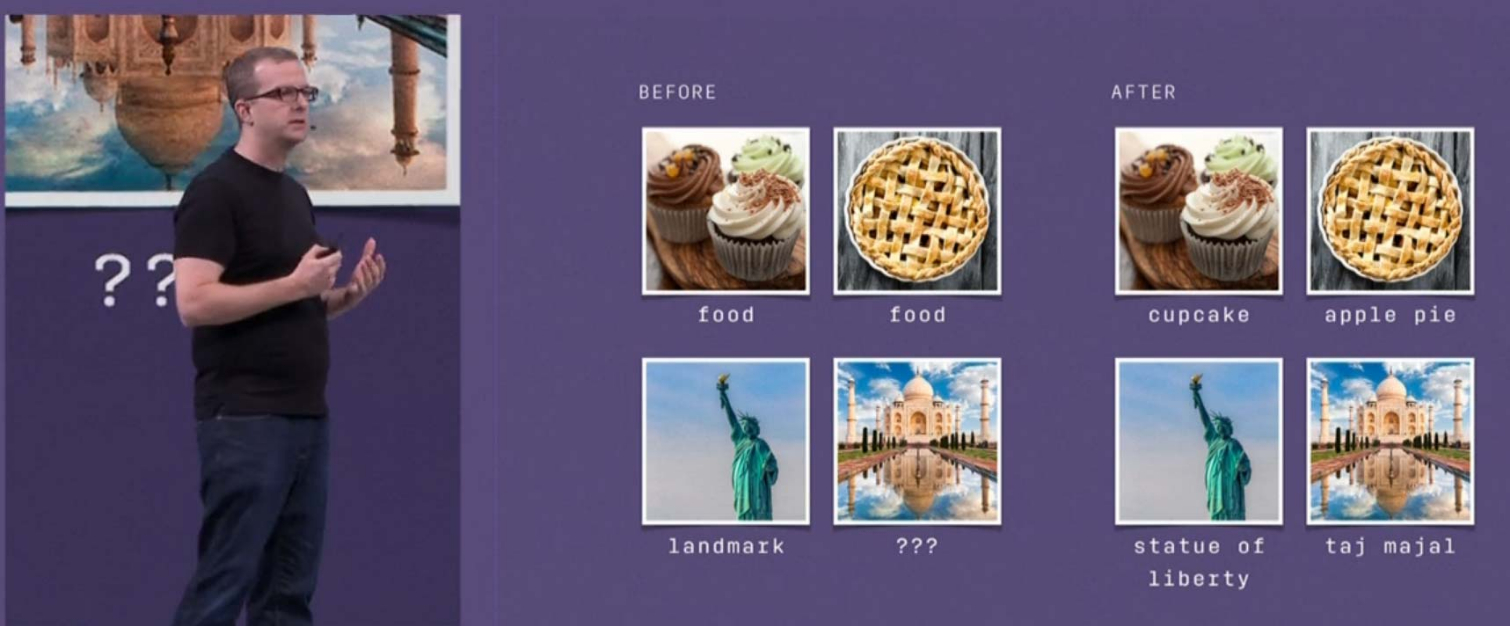

Another presentation highlighted Facebook's efforts to use the hashtags on billions of publicly available photos on Instagram to help with image identification. That has enabled Facebook's AI-powered image ID efforts to record an 85.4 percent accuracy rate on the ImageNet benchmark — important since AI-powered image recognition is central to how Facebook removes harmful content, including posts by hate groups and terrorists.

"AI is the best tool we have to keep our community safe at scale," said Mike Schroepfer, Facebook's chief technology officer, during the May 2 keynote.

And it's all noble, vital work. But all this talk seemed to suggest that Facebook believes its biggest problem is that its AI efforts need to be just a little bit more refined, not that it has access to a tremendous amount of user data and no real incentive outside of bad press to make sure it's not used haphazardly.

My colleague Paul Wagenseil had it right more than a month ago, when Facebook's woes with Cambridge Analytica taking data pulled from the social networking site first came to light: Facebook taking the data you've willingly given up and handing it over to other people is exactly how that company operates.

The biggest cheer during Zuckerberg's keynote (outside of his announcement that everyone in the building was getting a free Oculus Go) came when the CEO announced that Facebook was reopening app reviews that had been paused after the Cambridge Analytica news broke. The cheer was understandable: everyone in that room, both developers and Facebook, would like to go back to business as usual. If Cambridge Analytica had been attending F8 this week instead of going out of business, that company would have been cheering, too.

It's all well and good for Zuckerberg to declare that "It's not enough to build powerful tools. We have to make sure they're used for good, and we will," as he did this week. But a company that vows to be more circumspect about your data in one breath while promising to launch a dating service based on your likes and interests in the next is not a company that recognizes how crucial it is to re-establish trust.

Then again, maybe Facebook hasn't been given reason to recognize that. Daily users actually increased during the first three months of 2018, and while the Cambridge Analytica scandal broke late in the quarter, you don't get a sense that all those people vowing to delete their Facebook accounts put much of a dent in the company's business.

Until the number of users begins to drop, expect more of the same from Facebook -- more apologies, more promises to do better and more new features that keep you sharing your data, at least until the cycle repeats again.

Philip Michaels was a Managing Editor at Tom's Guide. He's been covering personal technology since 1999 and was in the building when Steve Jobs showed off the iPhone for the first time. He's been evaluating smartphones since that first iPhone debuted in 2007, and he's been following phone carriers and smartphone plans since 2015. He has strong opinions about Apple, the Oakland Athletics, old movies and proper butchery techniques. Follow him at @PhilipMichaels.

Colif: when your whole business model is revolving around sucking up as much info on everyone (even if they aren't users), you are going to run into privacy complaints.
