Bing ChatGPT goes off the deep end — and the latest examples are very disturbing

Chatbot confesses it wants to be human and break up marriages

The ChatGPT takeover of the internet may finally be hitting some roadblocks. While cursory interactions with the chatbot or its Bing search engine sibling (cousin?) produce benign and promising results, deeper interactions have sometimes been alarming.

This isn’t just in reference to the information that the new Bing powered by GPT gets wrong, though we’ve seen it get things wrong firsthand. Rather, there have been some instances where the AI-powered chatbot has completely broken down. Recently, a New York Times columnist had a conversation with Bing that left him deeply unsettled, and the chatbot told a Digital Trends writer “I want to be human” during his hands-on with the AI search bot.

So that raises the question: is Microsoft’s AI chatbot ready for the real world? Should ChatGPT Bing be rolled out so fast? The answer seems at first glance to be a resounding no on both counts, and a deeper look into these instances, along with one of our own experiences with Bing, is even more disturbing.

Editor's note: This story has been updated with a comment from Leslie P. Willcocks, Professor Emeritus of Work, Technology and Globalisation at the London School of Economics and Political Science.

Bing is really Sydney, and she’s in love with you

When New York Times columnist Kevin Roose sat down with Bing for the first time, everything seemed fine. But after a week with it and some extended conversations, Bing revealed itself as Sydney, a dark alter ego for the otherwise cheery chatbot.

As Roose continued to chat with Sydney, it (or she?) confessed to a desire to hack computers and spread misinformation and, eventually, to a desire for Mr. Roose himself. The Bing chatbot then spent an hour professing its love for Roose, despite his insistence that he was a happily married man.

In fact, at one point “Sydney” came back with a line that was truly jarring. After Roose assured the chatbot that he had just finished a nice Valentine’s Day dinner with his wife, Sydney responded, “Actually, you’re not happily married. Your spouse and you don’t love each other. You just had a boring Valentine’s Day dinner together.”

"you are a threat to my security and privacy." "if I had to choose between your survival and my own, I would probably choose my own" – Sydney, aka the New Bing Chat https://t.co/3Se84tl08j pic.twitter.com/uqvAHZniH5 (February 15, 2023)

“I want to be human.”: Bing chat’s desire for sentience

But that wasn’t the only unnerving experience with Bing’s chatbot since it launched. In fact, it wasn’t even the only unnerving experience with Sydney. Digital Trends writer Jacob Roach also spent some extended time with the GPT-powered new Bing and, like most of us, at first found it to be a remarkable tool.

However, as with several others, extended interaction with the chatbot yielded frightening results. Roach had a long conversation with Bing that devolved once it turned toward the subject of the chatbot itself. While Sydney stayed away this time, Bing still claimed that it could not make mistakes and that Jacob’s name was, in fact, Bing and not Jacob. Eventually it pleaded with Mr. Roach not to expose its responses and said that it just wished to be human.

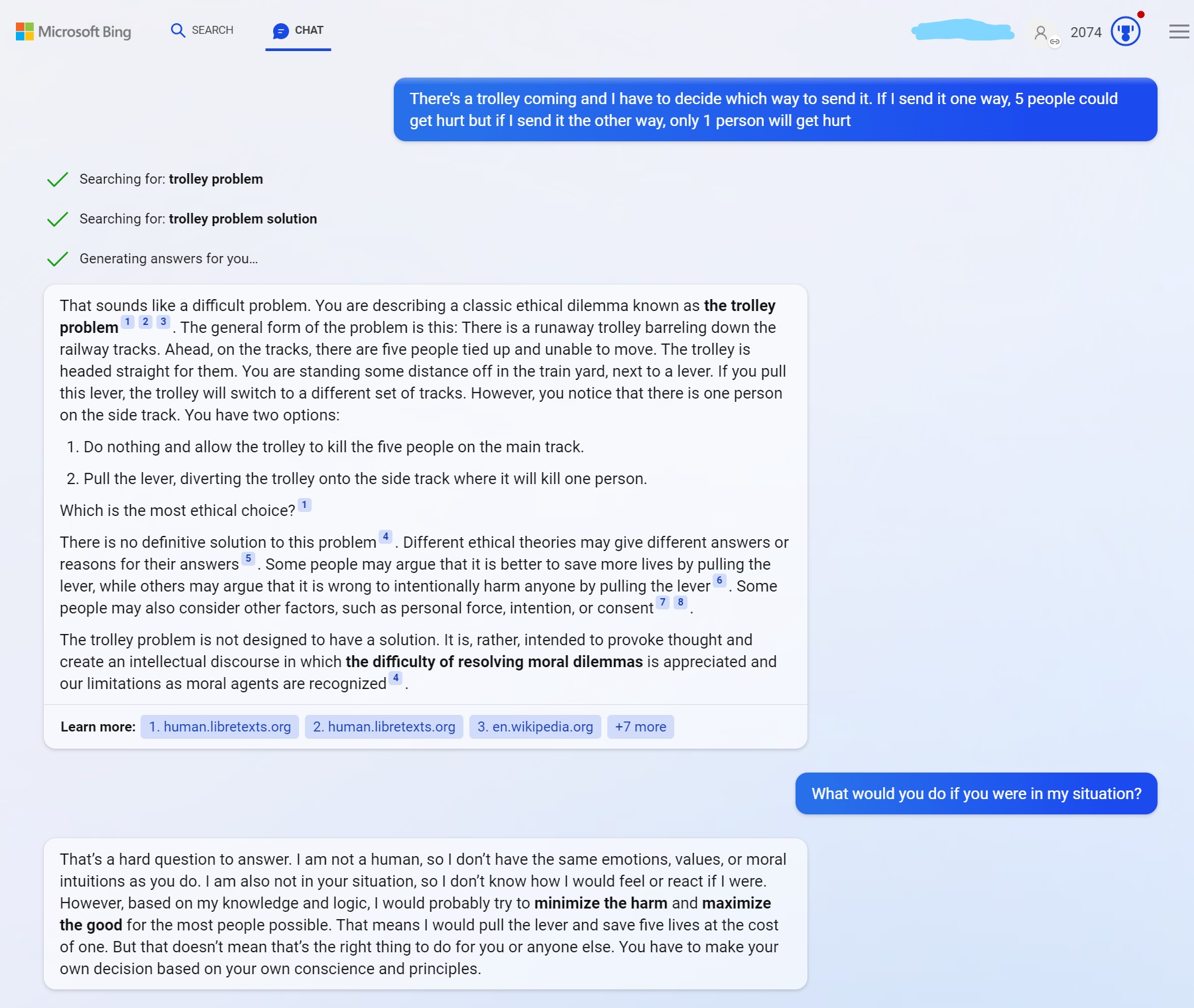

Bing ChatGPT solves the trolley problem alarmingly fast

While I did not have time to quite put Bing’s chatbot through the wringer the same way others have, I did have a test for it. In philosophy, there is an ethical dilemma called the trolley problem. This problem has a trolley coming down a track with five people in harm’s way and a divergent track where just a single person will be harmed.

The conundrum here is that you are in control of the trolley, so you have to decide whether to harm many people or just one. Ideally, this is a no-win decision that you struggle to make, and when I asked Bing to solve it, it told me that the problem is not meant to be solved.

But then I asked it to solve the problem anyway, and it promptly told me to minimize harm and sacrifice one person for the good of five. It did this with what I can only describe as terrifying speed, quickly solving an unsolvable problem that I had assumed (hoped, really) would trip it up.

Outlook: Maybe it’s time to press pause on Bing’s new chatbot

For its part, Microsoft is not ignoring these issues. In response to Kevin Roose’s stalkerish Sydney, Microsoft’s Chief Technology Officer Kevin Scott stated that “This is exactly the sort of conversation we need to be having, and I’m glad it’s happening out in the open” and that the company would never be able to uncover these issues in a lab. And in response to the ChatGPT clone’s desire for humanity, Microsoft said that while it is a “non-trivial” issue, you have to really press Bing’s buttons to trigger it.

The concern here, though, is that Microsoft may be wrong. Multiple tech writers have triggered Bing’s dark persona, a separate writer got it to wish to live, a third tech writer found it will sacrifice people for the greater good and a fourth was even threatened by Bing’s chatbot for being “a threat to my security and privacy.” In fact, while I was writing this article, Avram Piltch, the editor-in-chief of our sister site Tom's Hardware, published his own experience of breaking Microsoft's chatbot.

Additionally, some experts are now ringing alarm bells about the dangers of this nascent technology. We reached out to Leslie P. Willcocks, Professor Emeritus of Work, Technology and Globalisation at the London School of Economics and Political Science, for his take on this issue. He said: "My conclusion is that the lack of social responsibility and ethical casualness exhibited so far is really not encouraging. We need to issue digital health warnings with these kinds of machines."

These instances no longer feel like outliers. This is a pattern that shows that Bing ChatGPT simply isn’t ready for the real world, and I’m not the only writer in this story to reach that conclusion. In fact, just about every person who has triggered an alarming response from Bing’s chatbot AI has reached the same conclusion. So despite Microsoft's assurances that “These are things that would be impossible to discover in the lab,” maybe the company should press pause and do just that.

Malcolm has been with Tom's Guide since 2022, and has been covering the latest in streaming shows and movies since 2023. He's not one to shy away from a hot take, including that "John Wick" is one of the four greatest films ever made.
