ChatGPT can provide AI therapy — what that means for you

AI therapy could help stretched mental health services

May is Mental Health Awareness Month, and although taking care of our mental health is no longer a taboo subject, getting professional help can be prohibitively expensive or require months on a waiting list. Artificial intelligence won't beat a trained therapist or mental health professional (so please speak to someone if possible), but the likes of ChatGPT may be able to provide a useful stopgap.

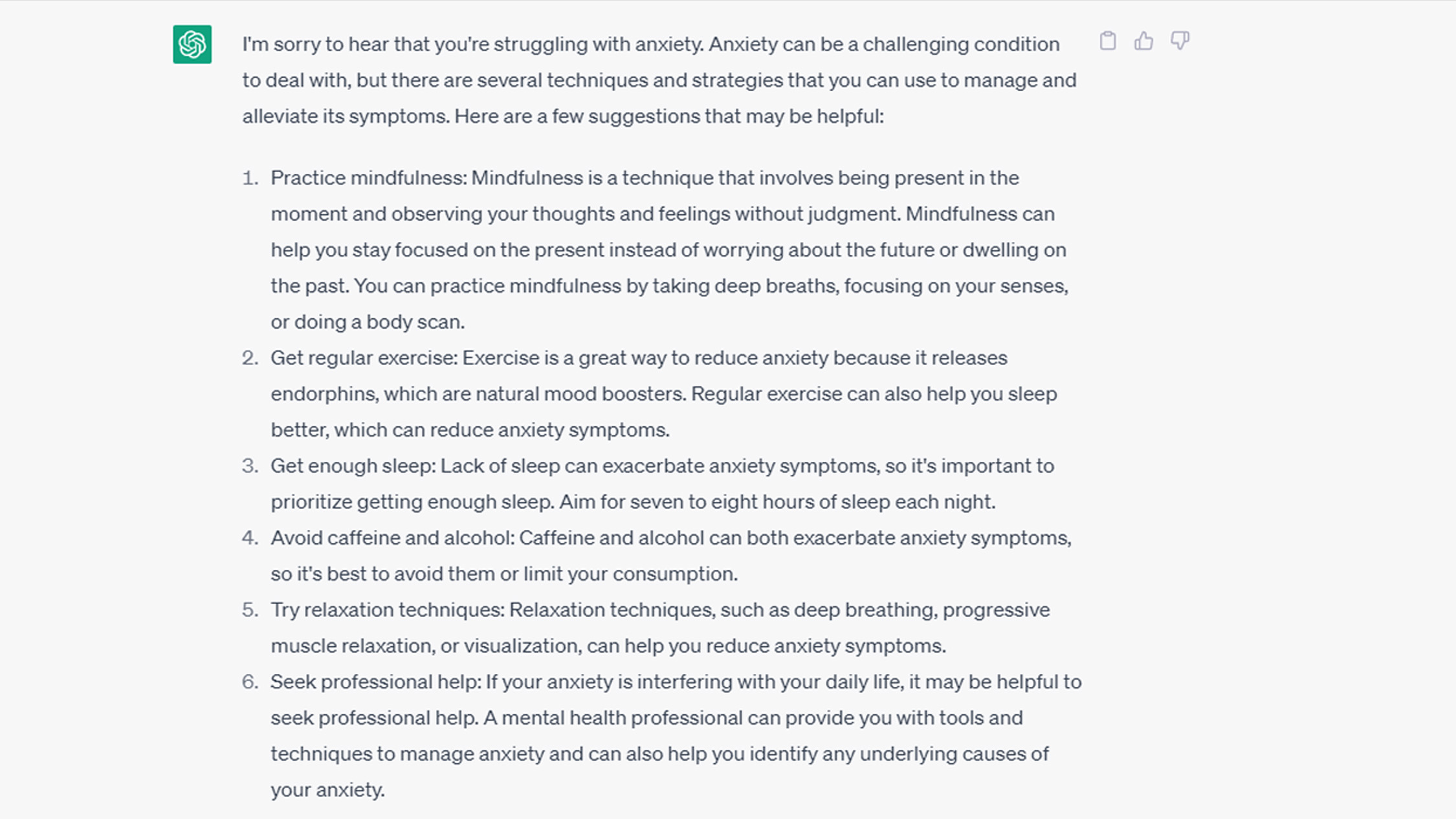

ChatGPT will always implore you to seek professional help and, if prompted, offers a generic list of helpful techniques. It's similar to how a Google search would operate, but the conversational acumen of OpenAI’s software could be a real help with talking therapy. Specific approaches, such as Cognitive Behavioral Therapy (CBT), may also benefit from an AI infusion.

It might not work for everyone but even the illusion of having a conversation about mental health could allow people to open up and seek treatment. Some people may even prefer the fact that talking to an AI means they don’t have to confess thoughts and feelings to another human.

At the very least it could be a useful way to “top up” between professional therapy sessions.

Potential uses of conversational AI in therapy

OpenAI has not claimed ChatGPT has any therapeutic qualifications but perhaps a mental health group could use a ChatGPT Plugin to train the AI on certified practices and offer professional-level responses.

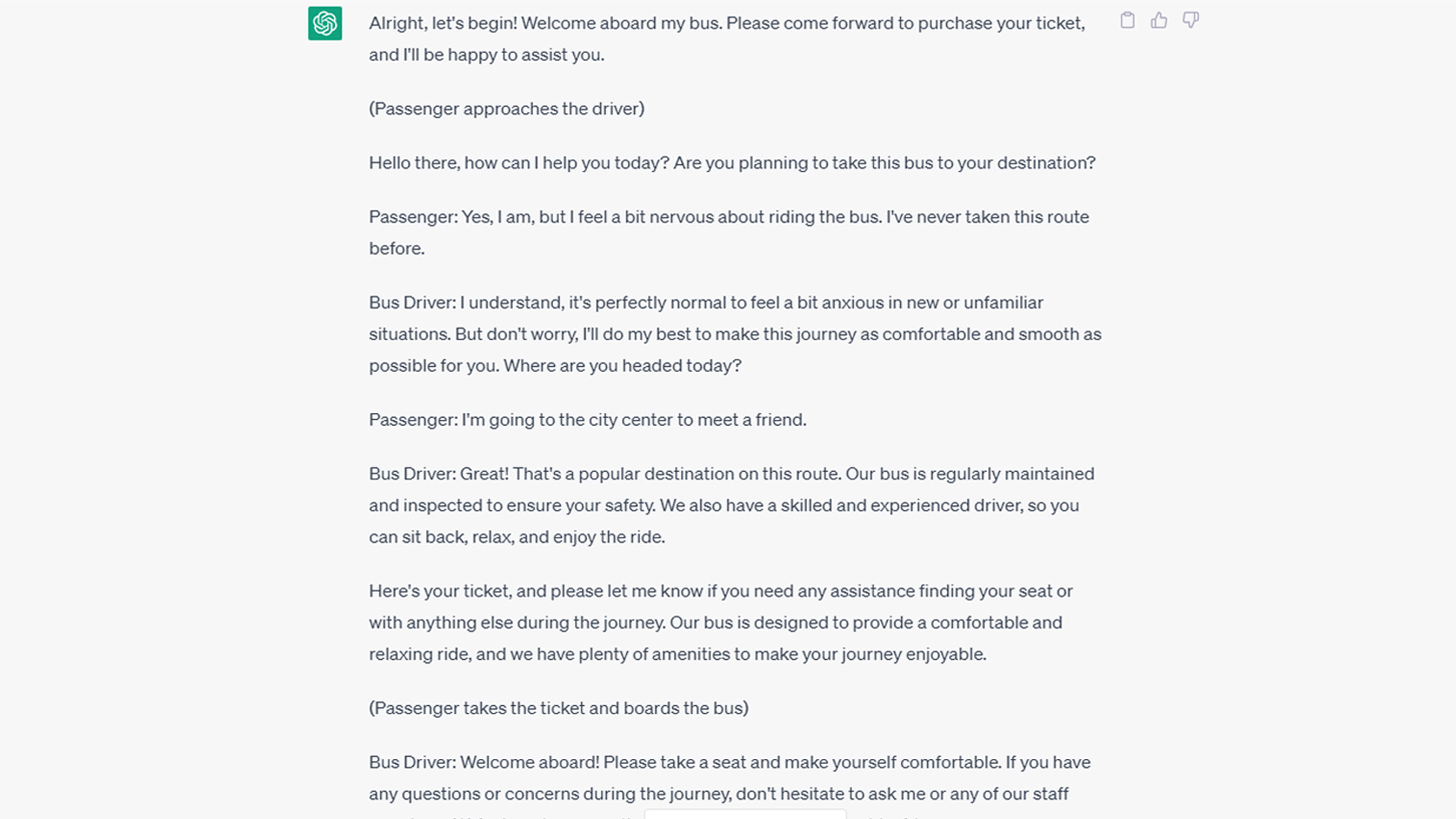

As a teenager, I had CBT, and one big aspect of it for me involved modeling situations that caused me anxiety. One of my biggest problems was using public transport, something I now do every day. I was regularly asked to imagine a situation that made me anxious, such as buying a bus ticket, and then act it out with my therapist. AI could excel at this, giving patients a way to model social situations without the pressure of real eyes on them.

One of the negative thought processes often cited in CBT is catastrophizing — assuming the worst possible outcome is bound to happen. By practicing a task such as buying a bus ticket, even with AI, patients could build up the confidence to try it in real life and then challenge this negative thought process with their own evidence.

Areas of concern

AI may help some, but it needs to be used cautiously. Many may feel that being treated by an AI shows they aren't even worth someone's time. That's absolutely not the case, but it's an easy impression to get.

There are concerns about AI treatment even from those who currently use the technology. One firm, Wysa, has an AI-powered penguin chatbot that looks out for the wellbeing of its more than 5 million users. But even its founder Ramakant Vempati told Al Jazeera: “We don’t use generative text, we don’t use generative models. This is a constructed dialogue, so the script is pre-written and validated through a critical safety data set, which we have tested for user responses.” An open-ended AI like ChatGPT going off script and encouraging the wrong viewpoint or giving bad advice could be very dangerous.

Of course, on matters of emotion, a computer-generated response will never be as good as a human one. Artificial intelligence may be intelligent, but people need the validation and empathy that come from building a rapport with another human in the form of a therapist. Professionals are trained to spot risks and potential red flags that a machine would often find near impossible to detect.

As it stands, AI could be a useful tool for our mental health, but only if it is recommended by professionals and used alongside their care. This could change in the future but, for now, there's no right answer.

Andy is a freelance writer with a passion for streaming and VPNs. Based in the U.K., he originally cut his teeth at Tom's Guide as a Trainee Writer before moving to cover all things tech and streaming at T3. Outside of work, his passions are movies, football (soccer) and Formula 1. He is also something of an amateur screenwriter having studied creative writing at university.
