How Tom's Guide Tests and Reviews TVs

From color accuracy and contrast to gamma and overall image quality, here's how we evaluate TVs using objective tests and subjective comparisons.

They say beauty is in the eye of the beholder, but when you read a TV review, you want to know that the evaluation you're reading will reflect your own experiences, and isn't overly influenced by the eyes and ears of the reviewer. Here at Tom's Guide, we work hard to make sure our TV reviews are objective and accurate, whether we're looking at one of the best TVs on the market, or one of many inexpensive TVs out there.

That's why we put each television we review through a series of instrument tests to measure different aspects of performance, like color accuracy, brightness and more. We use the results as an objective complement to the subjective impressions of color, contrast and detail that our reviewers form while watching real-world content.

Instrument Tests and Equipment

We perform our testing and evaluation in a dedicated test space in Salt Lake City, Utah. This space not only lets us test TVs individually, but also compare sets side-by-side to get a clear understanding of how each TV performs.

We take measurements in a completely dark room, using an X-Rite i1 Pro spectrophotometer, a specialized device that captures color and brightness data. The instrument is calibrated to ensure accurate measurement of both light output and color.

The X-Rite is paired with an AccuPel DVG-5000 video test pattern generator. Because the device creates HDMI-native output, we can be sure that the colors we see on the display are as accurate as they can be, with no distortion or degradation in transmitting the test patterns to a TV.

We analyze this data using SpectraCal's professional calibration software, CalMAN Ultimate. Using a handful of custom workflows, we test display performance in several picture modes: first in the set's standard or default mode; a second time in the best picture mode the set offers (generally Cinema mode or its equivalent); and a third time in that best mode with HDR enabled. Where appropriate, we also test other picture modes. Beyond data analysis, we use the charts this software generates to present test results in an understandable format.


Finally, we use a Leo Bodnar Video Signal Input Lag Tester to measure input lag: the time, in milliseconds, it takes for a signal to travel from the video input to the screen. We perform this test in Game mode whenever it is available. Modern 4K and HD TVs use a great deal of image processing to present the best-quality picture, and that processing takes time, even if the delay isn't perceptible to the average viewer. Game mode strips away most of this processing to speed up response and shrink the gap between an in-game action and the resulting image on-screen, so it shows the lowest lag the TV is capable of. This data point matters little to people watching movies or live TV, but those milliseconds do make a difference when gaming. The device measures input lag and pixel response time combined, and we don't differentiate between the two in our evaluations.
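Because the tester reports lag in milliseconds, it can help to express that figure in frames: at 60 Hz, each frame stays on screen for about 16.7 ms. The sketch below assumes a fixed refresh rate and uses a hypothetical helper, `lag_in_frames`; it is an illustration of the arithmetic, not part of the Leo Bodnar tester or our workflow.

```python
def lag_in_frames(lag_ms: float, refresh_hz: float = 60.0) -> float:
    """Convert a measured input lag (milliseconds) into display frames."""
    if refresh_hz <= 0:
        raise ValueError("refresh rate must be positive")
    frame_time_ms = 1000.0 / refresh_hz  # how long one frame stays on screen
    return lag_ms / frame_time_ms


if __name__ == "__main__":
    # Hypothetical readings, not Tom's Guide measurements.
    for lag_ms in (15.0, 50.0, 100.0):
        frames = lag_in_frames(lag_ms)
        print(f"{lag_ms:.1f} ms is about {frames:.1f} frames at 60 Hz")
```

At 60 Hz, for example, a 50 ms reading means the picture trails the input by three frames.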

Here are the main measurements we use to objectively evaluate TV performance.

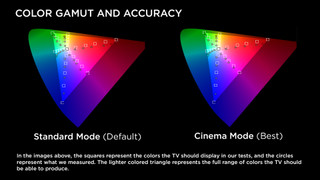

Color Accuracy and Gamut

A gamut is the full range of colors a television can produce, which is always a subset of the colors the human eye can see. For Ultra HD (4K) and HD (720p and 1080p) TVs, we measure against a standard color gamut called Rec. 709 and report the result as a percentage of that standard. The higher the percentage, which can exceed 100 percent, the greater the range of color the display can reproduce.
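One simple way to express gamut size as a percentage is to compare the area of the triangle formed by a TV's measured red, green and blue primaries with the area of the Rec. 709 triangle on the CIE 1931 xy chromaticity diagram. Because it is a ratio of areas rather than an overlap, this figure can exceed 100 percent. The sketch below is illustrative only: the helper names are hypothetical, and calibration software such as CalMAN may compute coverage differently.

```python
# CIE 1931 xy chromaticity coordinates of the Rec. 709 primaries.
REC709 = {"red": (0.640, 0.330), "green": (0.300, 0.600), "blue": (0.150, 0.060)}


def triangle_area(points):
    """Shoelace formula for the area of a triangle given three (x, y) points."""
    (x1, y1), (x2, y2), (x3, y3) = points
    return abs(x1 * (y2 - y3) + x2 * (y3 - y1) + x3 * (y1 - y2)) / 2.0


def gamut_area_percent(measured, reference=REC709):
    """Area of the measured primaries' triangle as a percentage of the reference.

    This is an area ratio, not an intersection-based coverage figure,
    so a display with wider primaries can score above 100 percent.
    """
    order = ("red", "green", "blue")
    measured_area = triangle_area([measured[c] for c in order])
    reference_area = triangle_area([reference[c] for c in order])
    return 100.0 * measured_area / reference_area


if __name__ == "__main__":
    # DCI-P3 primaries stand in for a hypothetical wide-gamut TV.
    example_tv = {"red": (0.680, 0.320), "green": (0.265, 0.690), "blue": (0.150, 0.060)}
    print(f"{gamut_area_percent(example_tv):.1f}% of Rec. 709")
```

With DCI-P3 primaries, the ratio comes out to roughly 136 percent of Rec. 709.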

We compare the colors defined by the target gamut with what the TV we're testing can reproduce. This means measuring test patterns for all three primary colors (red, green and blue) and all three secondary colors (cyan, magenta and yellow).

We also measure dozens of lighter shades in both primary and secondary colors. From this information, we can determine, for example, if a color that should be a medium magenta is a bit purple, or if a light yellow is too orange.

In the chart above, the light triangle represents the target gamut for the type of TV we are reviewing (in this case, Rec. 709). The squares represent the colors of the test patterns we send to the TV, and the dots represent the color the TV actually displayed.
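The gap between each square and its dot can be summarized as a single color-error number, commonly called Delta E. The sketch below uses the simple CIE76 formula: it converts both colors from CIE XYZ to CIELAB (assuming a D65 white point with Y scaled to 100) and takes the straight-line distance between them. Newer formulas such as CIEDE2000 weight the differences more closely to human perception. The function names and sample values are hypothetical, not readings from our lab.

```python
# D65 reference white in CIE XYZ, scaled so that Y = 100 (assumed for this sketch).
D65_WHITE = (95.047, 100.0, 108.883)


def _f(t):
    """CIELAB companding function, with the linear segment near black."""
    delta = 6.0 / 29.0
    if t > delta ** 3:
        return t ** (1.0 / 3.0)
    return t / (3.0 * delta ** 2) + 4.0 / 29.0


def xyz_to_lab(xyz, white=D65_WHITE):
    """Convert a CIE XYZ reading to CIELAB (L*, a*, b*)."""
    x, y, z = (v / w for v, w in zip(xyz, white))
    fx, fy, fz = _f(x), _f(y), _f(z)
    lightness = 116.0 * fy - 16.0
    a = 500.0 * (fx - fy)
    b = 200.0 * (fy - fz)
    return lightness, a, b


def delta_e76(target_xyz, measured_xyz, white=D65_WHITE):
    """CIE76 Delta E: Euclidean distance between two colors in CIELAB."""
    lab1 = xyz_to_lab(target_xyz, white)
    lab2 = xyz_to_lab(measured_xyz, white)
    return sum((p - q) ** 2 for p, q in zip(lab1, lab2)) ** 0.5


if __name__ == "__main__":
    target_magenta = (59.29, 28.48, 96.98)    # approx. full Rec. 709 magenta
    measured_magenta = (56.0, 27.0, 99.5)     # made-up reading leaning purple
    print(f"Delta E: {delta_e76(target_magenta, measured_magenta):.2f}")
```

A Delta E of zero means the dot sits exactly on its square; larger values mean the displayed color has drifted further from the target.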

Brightness and Contrast

While our reviews frequently refer to both brightness and contrast, the more accurate terms would be peak brightness and black level.

The actual levels of light put out by a TV — either by an LED backlight or the display panel itself, in the case of OLED displays — play an enormous role in how vibrant colors look, and higher brightness levels translate into whiter whites and colors that pop. To test this, we set the display brightness as high as it will go (with HDR enabled, where applicable) and measure the luminance of a white test pattern on an otherwise black screen. This measure is reported in nits, an industry term for candela per square meter (cd/m²).

Black level is the flip side of peak brightness, and it directly affects perceived contrast. Traditionally, contrast is presented as a ratio of the luminance measured on the brightest and darkest colors the display can produce, namely white and black. Brighter whites come from higher overall brightness, while on LED-backlit sets, darker blacks come from dimming the backlight to its lowest level.

We test all TVs using the same method, so we can make direct comparisons among different models. We use the same pitch-black room for each test and then compare the brightness of the whitest white and the blackest black the TV can display. A higher number is better, with anything above 500:1 being comparable to the contrast ratio found on a typical movie theater screen.
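The ratio itself is simple arithmetic: divide the white reading by the black reading. The sketch below assumes both readings are in nits, and its function names are hypothetical. A black reading of zero, as can occur when a panel such as OLED switches its pixels fully off, makes the ratio undefined, so the sketch reports it as infinite.

```python
import math

# Comparison point from the text: above 500:1 is comparable to a typical movie theater screen.
THEATER_BENCHMARK = 500.0


def contrast_ratio(white_nits: float, black_nits: float) -> float:
    """Return N for an N:1 contrast ratio from white and black luminance readings."""
    if white_nits <= 0 or black_nits < 0:
        raise ValueError("white must be positive and black non-negative")
    if black_nits == 0:
        # Fully-off pixels read as zero, so the ratio is effectively unbounded.
        return math.inf
    return white_nits / black_nits


def exceeds_theater_benchmark(ratio: float) -> bool:
    """True if the ratio is above the 500:1 movie-theater comparison point."""
    return ratio > THEATER_BENCHMARK


if __name__ == "__main__":
    ratio = contrast_ratio(500.0, 0.25)  # hypothetical readings in nits
    print(f"{ratio:.0f}:1, above theater benchmark: {exceeds_theater_benchmark(ratio)}")
```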

Unfortunately, manufacturers have rendered this specification almost meaningless. There is no standardized test for contrast, so the number listed in a TV's specifications can rarely be taken at face value. Additionally, as technologies like OLED and local zone dimming have become common, it has become harder to gather objective contrast data that reflects the actual viewing experience. We still test for contrast levels and discuss this data in reviews as needed, but we generally relegate discussion of contrast to our subjective evaluation.

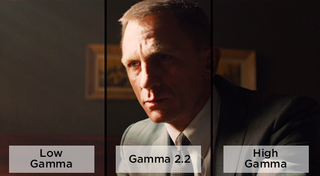

Gamma vs. Contrast

Ever watched a scary movie and been annoyed because everything is so bright that you can easily see the monster lurking in the shadows? That's probably because the TV's gamma is too low.

Whereas contrast ratio compares the whitest white to the darkest black, gamma describes how the TV's brightness rises from black to white, which governs the "mid-tones" in between. The lower the gamma, the more washed-out the shadows may appear, and the higher the gamma, the less detail is found in those shadows.
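A common simplified model treats gamma as a power law: the light a TV emits for a given input level is the peak luminance multiplied by the normalized signal raised to the gamma exponent. The sketch below uses that model, with hypothetical helper names, to show why a higher gamma darkens mid-tones and shadows, and how an effective gamma could be estimated from a single gray-level reading. It ignores the display's black level, which standards such as ITU-R BT.1886 factor in.

```python
import math


def output_luminance(signal: float, peak_nits: float, gamma: float) -> float:
    """Pure power-law response: light output for a signal normalized to 0.0-1.0."""
    return peak_nits * signal ** gamma


def estimate_gamma(signal: float, measured_nits: float, peak_nits: float) -> float:
    """Invert the power law at one gray level to get the effective gamma."""
    if not 0.0 < signal < 1.0 or measured_nits <= 0 or peak_nits <= 0:
        raise ValueError("need 0 < signal < 1 and positive luminance readings")
    return math.log(measured_nits / peak_nits) / math.log(signal)


if __name__ == "__main__":
    # A dark 20 percent gray on a hypothetical 100-nit display.
    for gamma in (2.2, 2.4):
        nits = output_luminance(0.2, 100.0, gamma)
        print(f"gamma {gamma}: {nits:.1f} nits")
```

In this model, the 20 percent gray drops from roughly 2.9 nits at gamma 2.2 to roughly 2.1 nits at gamma 2.4, which is why a gamma that is too low lifts the shadows where the monster is hiding.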

We have found that, as with contrast, there is significant variance among manufacturers and models, and the objective data we can gather on gamma bears little resemblance to the actual viewing experience. As such, we discuss gamma in our subjective evaluation of a display.

Subjective Evaluation by Reviewer

Once our lab staff has completed instrument testing, our reviewer spends time with each TV in our A/V lab. During this period, we strive to complement the hard data we collected with real-world experience. This involves both side-by-side comparisons with similar TVs and stand-alone viewing in a simulated living room.

Our reviewer watches a variety of content, using several content sources and media formats. These range from newly released movies on 4K UHD Blu-ray to digital files on USB and upscaled 1080p content from standard Blu-ray discs.

Our current selection of movies includes Blade Runner 2049, Spider-Man: Homecoming, Arrival, and Deadpool, all of which are available in 4K and feature various high-dynamic-range formats, though that list will change as new movies are released. We also watch 1080p Blu-ray versions of films like Mad Max: Fury Road and the James Bond film Skyfall. We use a Philips 4K Blu-ray player and a Monoprice Blackbird 4K 1x8 HDMI Splitter, an amplified splitter that sends the same media to several TVs at once so we can directly compare how different sets handle the same content.

We watch live over-the-air channels and stream video from several apps and services, such as YouTube and Netflix. Throughout our viewing, we look at everything from color quality and on-screen detail to upscaling of lower resolution content and backlight quality.

We know that it's easy to get lost in jargon and specifications when comparing TVs, so we aim to present this information in a way that's useful and informed, without digging too deeply into the more granular aspects of our testing processes. In all of our reviews, we strive to combine hard data with our viewing evaluation to present a clear explanation of a given TV's performance and viewing experience. The result should be a clear discussion of how well a given set looks, sounds and compares to other TVs on the market.
